The simple answer is: when everything in the world is smart. But if you think deeply, you will find that the continuous drive to make life easier is what makes the world smarter day by day; the sky is the limit. In the world of computing, consider the 17th century, when the human brain served as the computer. It took about 200 years before the first mechanical computer was invented in the 19th century by Charles Babbage, who is considered the father of the computer. Today we are in a far more advanced state, and the pace of innovation is fast. Technology definitely makes things smarter, life easier, and the pace of doing things faster.

Today we are talking about IoT, which makes the devices around us smart enough to sense and act as we have programmed them, whenever and from wherever we want. What makes this possible? A sensor is not a synonym for smart, but it is the technology that enables smart things to be done. Various types of sensors can detect movement, temperature, pressure, light and so on, and trigger their device to act. We often hear talk of a world with a ‘Trillion Sensors’ associated with IoT, and we are getting there.

At the 2014 MEC (MIG’s MEMS Executive Congress), Chris Wasden, Executive Director of the Sorenson Center for Discovery and Innovation at the University of Utah, talked about the number of internet devices in use: more than 5 billion today, expected to reach 18 billion by 2018, with the number of sensors crossing 1 trillion by 2025. He also talked about the need for platform leaders (device, chip, MEMS, etc.) to emerge and for MEMS to co-create an industry platform to reach the 1T target.

Interestingly, foundry leaders are taking great interest in MEMS. George Liu, Director at TSMC, talked about multiple technology drivers (Personal, Home, City, Automotive and so on) in the context of IoT, in contrast to the last several decades, when computers were the main driver. He recognized the importance of sensors in making devices intelligent and smart, and also the gaps (material, architecture, low power, integration, packaging, capacity and price) that need to be filled to bring MEMS into the mainstream. And how can a foundry contribute to filling those gaps? Supply chain, ROI, scaling and so on, of course, but what caught my attention were sensor and MEMS PDKs and joint process and product development between design and foundry. Wow! This can open up a big opportunity for fabless MEMS development. It reminds me of one of my earlier blogs (What will drive MEMS to drive I-o-T and I-o-P?), which emphasized the standardization that can bring MEMS into volume production, and GLOBALFOUNDRIES pursuing the path of IC fab-like production discipline for MEMS.

Getting to 1T sensors is not a slam dunk. Just as EDA enabled fabless IC development, we need highly sophisticated and integrated automation, including modeling, to accelerate MEMS development. In the days to come we will see newer and newer MEMS devices that are beyond our imagination today. But that reality has to be complemented by automated tools that can model the MEMS accurately, integrate them at the system or IC level, and verify them accurately and as fast as possible.

I took a look at David Cook’s blog on the Coventor website, where he describes CoventorWare and MEMS+ for MEMS+IC co-design, modeling, simulation and analysis, and SEMulator3D for virtual fabrication of MEMS devices, which cuts down on long build-and-test cycles through the fab and improves yield before production. I concur with him that these tools are very apt in today’s environment: they cater to the complexity of a wide variety of MEMS, yet meet the shrinking time-to-market window. In fact, this reminds me of another blog, written by Gunar Lorenz, on new capabilities in MEMS+ 5.0 – Breakthrough MEMS Models for System and IC Designers.

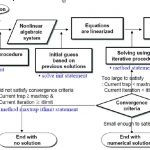

In MEMS+ 5.0, Reduced Order Models (ROMs) of MEMS devices can be exported into Simulink schematics for system designers and into circuit schematics for IC designers. A ROM is a snapshot of a sophisticated nonlinear multi-physics model; writing it out in Verilog-A protects the IP. Verilog-A ROMs can run up to 100 times faster than full MEMS+ models in Cadence Virtuoso or MATLAB Simulink. Users can decide whether to write out ROMs in Verilog-A for circuit schematics or in MROM (a new file format) for Simulink. The environment provides a good set of controls for users to trade off accuracy against speed. Simulation results from MROMs can be viewed and animated in 3D, just like results from full MEMS+ models.

Smart tools to develop smart MEMS, smart MEMS to develop smart devices, and smart devices to make a smart ecosystem are a must to create a smart world!