Intel Inline with lowered numbers- 2015 Revs to be Flat…

Capex Slashed by 13% to $8.7B- 10nm at risk???

Mortgaging the future???

Is the foundry business dead???

Desperately seeking growth!!!

Intel Inline…

Intel reported revenues of $12.8B and EPS of $0.41, in line with downwardly revised estimates after chopping $1B out of its previous Q1 expectations. Client computing was the main culprit for the hit, down 16% Q/Q and down 8% Y/Y. Data center was down 10% sequentially but up 19% Y/Y.

The company sounds like it is hoping for some upside from the summer rollout of Windows 10, but we wouldn’t hold our breath waiting for a positive impact from that introduction, as we think the XP upgrade is well played out already. Guidance is for revenues to be flattish for 2015, with an obvious bias to the downside. Gross margin will be good at 61%, obviously helped by reduced spending. We didn’t hear much positive hope for desktop PCs other than the several hopeful comments about Windows 10.

We also didn’t hear a lot about tablets or mobile as those numbers are now buried in the financial results where they can’t be as easily picked apart.

No comment on M&A but lots of questions…

As expected, there was no comment on the Altera rumors, but it is clear from the poor momentum of the PC business that Intel has to look elsewhere for growth.

Capex Cut is ominous…

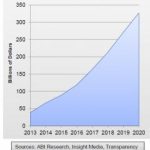

Cutting capex down to $8.7B is very ominous from our perspective. Obviously Intel can help make its earnings numbers and get EPS growth in a flat revenue environment by cutting expenses, of which capex is the major number. Intel had already fallen to the number three position in capex behind Samsung and TSMC, and looks to be falling to a distant third place very quickly.
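For readers who want to sanity check the size of the cut, here is a minimal back-of-the-envelope sketch using only the two figures quoted in this note (a 13% cut, to $8.7B). It assumes the 13% is measured against the prior capex guidance; the variable names are ours and purely illustrative. On that assumption the prior plan works out to roughly $10B.

```python
# Figures quoted in this note: capex slashed by 13% to $8.7B.
new_capex_b = 8.7     # new capex guidance, in $B (from the note)
cut_fraction = 0.13   # size of the cut (from the note)

# Assumption: the 13% is measured against the prior guidance,
# so new = prior * (1 - cut), and prior = new / (1 - cut).
implied_prior_b = new_capex_b / (1 - cut_fraction)
dollars_cut_b = implied_prior_b - new_capex_b

print(f"Implied prior capex: ${implied_prior_b:.1f}B")
print(f"Implied reduction:   ${dollars_cut_b:.1f}B")
```

That is on the order of $1.3B of spending removed from the equipment food chain in a single year.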

The company made the normal excuses on reuse and moving 22nm capacity to 14nm, but we don’t buy that as the full reason. We find it hard to believe that Intel is that much better at reuse and efficiency than TSMC and Samsung. Capex is being cut because business is not that good. Intel did comment that yields and ramp of 14nm were better than planned, but it’s been a long time and they should be very good by now.

You don’t get to be a leading player without spending a lot of money, and we are very concerned about the company mortgaging its future to make near term earnings numbers to satisfy the Street. We think this is potentially short-sighted, as it will help near term numbers but put in jeopardy the technology dominance that made Intel what it is today. We hope we are wrong.

10nm at risk???

Though Intel refused to comment about 10nm timing, it is clear from everyone in the industry that they have pushed out spending, and it’s impossible to push out spending and reduce capex as much as they have without slowing their 10nm schedule. Our guess is that we are in the range of a nine-month to one-year delay from what it could have been. 10nm was supposed to be in Israel, and though Intel talked about 10nm spending in the second half of 2015, we haven’t heard a lot about it in the field.

Can TSMC catch Intel at 10nm???

Given TSMC’s increasing capex coupled with their stated aggressive 10nm plans, it feels as if TSMC has a real opportunity to catch up with Intel at 10nm. There is likely a continuing downward bias in capex at Intel, and it’s likely that management will be looking at ways to cut or delay spending to support EPS.

Foundry noticeably absent from call…

You wouldn’t know that Intel was allegedly in the foundry business from the conference call, as there was zero mention of it. If TSMC does either catch Intel at 10nm or come close, then the reason for a fabless customer to use Intel foundry services would fall to below zero, as the only reason we can think of is Intel’s technology lead. If they lose that, then foundry is officially out of business, as it’s more expensive than TSMC and Intel is harder to work with.

Intel cutting 22nm capacity…

Intel did comment that 22nm capacity would be cut as fab capacity transitioned to 14nm. This seems to support the picture of a weaker demand environment, as Intel is usually able to milk returns out of older fabs for a longer period of time. To be cutting 22nm capacity is a sign that 14nm is good, but also a sign that demand is not strong enough to keep 22nm pumping out devices.

Equipment companies likely Whacked…

The Intel capex news, while not unexpected, is probably a lot worse than most bullish analysts and equipment company management were hoping for. The Intel cut obviously adds to the already strong industry headwinds we have been talking about. At this point the equipment industry is standing on the one leg of memory spending, as foundry and Intel (the other leg) aren’t supporting the weight. If memory spending gets more wobbly, we are going to topple over.

Beware of Intel exposure…

Equipment companies that rely on Intel are obviously getting hurt, but it’s not like anyone was expecting an increase. Even if Intel is just a proportional share of an equipment company’s business, it’s still hard to figure out what’s going to increase to make up for the Intel shortfall. We are now potentially looking at a down year for capex…

Robert Maire

Semiconductor Advisors LLC
