The semiconductor industry is steadily charting its course toward 5G chipsets with the availability of extremely complex system-on-chip (SoC) designs that support the surge of data traffic over next-generation wireless networks. Take Blu Wireless Technology, for instance, the IP supplier from Britain that is using the Arteris FlexNoC interconnect in its Hydra WiGig and backhaul subsystems.

Blu Wireless, which designs and licenses wireless modem IP for multi-gigabit communications standards like WiGig, is among the early entrants in the commercial 60 GHz chipset market. The chipsets for 60 GHz millimeter wave radio with up to 7 Gbit/s speeds have passed the certification tests and are ready for production and launch in 2016.

Blu Wireless develops PHY/MAC solutions for high-speed wireless networks

The tri-band WiGig chipsets, comprising the transceiver, power amplifier and antenna, can handle links at 2.4 GHz, 5.8 GHz and a number of unlicensed 60 GHz bands.

For a start, the WiGig-ready chipsets aim to cut the TV cord by facilitating in-home video streaming and other high-speed multimedia applications in the entertainment domain. But the far bigger prize for these chipsets is the nascent 5G market, where Blu Wireless is eyeing its IP for both backhaul links to small cells and high-bandwidth links to 5G terminals.

Anatomy of a 5G Baseband Chipset

The Bristol, England–based Blu Wireless is a supplier of IP for gigabit-rate wireless modems operating in the millimeter-wave frequency bands. The company’s 60 GHz modem is based on its Hydra PHY/MAC technology that boasts a phased-array antenna.

It’s a baseband DSP solution that uses a hybrid hardware and software architecture while employing heterogeneous multiprocessing technology in order to reduce interference. Here, it mixes fixed-function DSP blocks with highly optimized parallel vector DSPs, which in turn creates a very complex DSP pipeline.

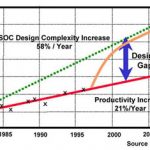

That’s essential because multiple DSPs in the digital controller have to handle a lot of arbitration and quality-of-service (QoS) tradeoffs, such as latency vs. bandwidth, while the design must also limit the size of the die and implement SoC-wide power management. As a result, Blu Wireless needed an SoC interconnect solution that would allow it to implement end-to-end QoS and guarantee the on-chip latency and bandwidth requirements.

Blu Wireless has licensed the Arteris FlexNoC interconnect IP for its Hydra WiGig and 4G backhaul subsystems. The Arteris FlexNoC fabric primarily caters to the digital side, where it serves as the main interconnect in the baseband, allowing Blu Wireless to make latency and bandwidth optimizations per link.

The FlexNoC interconnect architecture encompasses serialization, buffering, arbitration, etc.

The digital controller is responsible for high-bandwidth digital signal processing. Arteris is also offering its FlexNoC interconnect solution on the analog side, but it’s a smaller NoC part.

The Arteris FlexNoC interconnect IP allows easy simulation and exploration to decide link widths, the number of wires and QoS settings. Here, FlexExplorer, a component of the FlexNoC interconnect fabric, simulates candidate network-on-chip (NoC) architectures using SystemC models.

Specifically, for WiGig, the challenge for the digital controller is to keep the RF system “fed” to achieve the best possible data rates. The rates to and from the PHYs that are connected to the controller exceed 2 Gbit/s. Blu Wireless sells both digital and analog IP for WiGig to comms chipmakers and calls it the MAC subsystem.
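To make the link-width question concrete, here is a back-of-the-envelope sketch in Python. It is not the FlexExplorer flow. It only assumes that a link moves one word per clock cycle and that some fraction of cycles carries payload. The function name, the 250 MHz fabric clock and the utilization figures are hypothetical values chosen for illustration; only the 2 Gbit/s and 7 Gbit/s rates come from the article.

```python
import math

def min_link_width_bits(required_bps, clock_hz, utilization=1.0):
    """Smallest power-of-two link width (in bits) that sustains required_bps.

    Assumes one transfer of `width` bits per clock cycle, derated by
    `utilization` (the fraction of cycles that actually carry payload).
    """
    if required_bps <= 0 or clock_hz <= 0 or not 0 < utilization <= 1:
        raise ValueError("rates must be positive and utilization in (0, 1]")
    raw = math.ceil(required_bps / (clock_hz * utilization))
    return 1 << (raw - 1).bit_length()  # round up to the next power of two

# Hypothetical fabric clock of 250 MHz (an assumption, not a Blu Wireless figure).
print(min_link_width_bits(2e9, 250e6))       # 2 Gbit/s PHY stream, ideal link
print(min_link_width_bits(2e9, 250e6, 0.5))  # same stream, half the cycles usable
print(min_link_width_bits(7e9, 250e6))       # 7 Gbit/s peak WiGig rate
```

Under these assumptions, halving the usable cycles doubles the required width. That is the kind of per-link tradeoff between wires and sustained bandwidth that an exploration tool would weigh with far more detailed traffic models.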

Heading Toward 5G

It’s worth remembering that millimeter wave is most likely going to play a crucial role in breaking the bandwidth barriers for the realization of 5G networks. Industry giants like Samsung are focusing their 5G development work heavily on millimeter-wave communication because of its potential in ultra-dense small-cell networks.

Moreover, 5G isn’t merely about mobile Internet services offered over wide-area cellular networks. In fact, the notion of 5G encompasses multiple connectivity solutions to provide communications services for a myriad of new devices, ranging from smartwatches to drones to connected cars.

Blu Wireless founder and CEO Henry Nurser demonstrating a 60 GHz module

Blu Wireless is part of the XHaul Project—one of the initiatives from the European Commission’s 5G Infrastructure Public Private Partnership (5G-PPP)—that is focusing on backhaul technologies while using both millimeter-wave radio and fiber-optic links.

Blu Wireless is contributing millimeter-wave modems to the trial network, which is targeting sub-1 ms latency and speeds of up to 10 Gbit/s. The XHaul Project is being conducted in Blu Wireless’ hometown of Bristol; other participants include Huawei, Telefonica I+D, TES Electronic Solutions and the University of Bristol.
