In Part 1, we reviewed four of the highlights of the recent TSMC Technology Symposium in San Jose. This article details the “Final Four” key takeaways from the TSMC presentations, and includes a few comments about the advanced technology research that TSMC is conducting.

Continue reading “Key Takeaways from the TSMC Technology Symposium Part 2”

What SOC Size Growth Means for IP Management

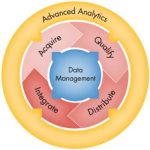

Whether or not you once believed all of the rhetoric about exploding design complexity in SOCs, today there can be no debate that SOC size and complexity are well beyond what can be managed without some kind of design management system. As would be expected, development of most larger designs relies on a data management system, but the significant need that has arisen is for release and IP management. This is particularly true for IP, because it now constitutes such a large portion of any new SOC design.

Dedicated GPU and CPU chips have billions of transistors. SOCs are also reaching this size and contain huge amounts of reused IP – often hundreds of blocks. It has been said that the chips of yesterday are now today’s embedded IP. This IP can be internally developed or externally sourced. Furthermore, the SOC design project itself can span multiple sites and teams. Many IP management tools used by design teams grew out of data management systems that then added on features to deal with IP and release processes. One major shortcoming of this approach is that the IP and design release process requires backtracking to add information to the design data.

Last month I spoke at length with Simon Butler, CEO of Methodics, about their approach to this problem. Rather than retrofit a data management system to attempt to add release and IP management features, they decided 5 years ago to build a complete system based on IP release and reuse. In doing so they were able to make sure their platform was data management agnostic. Simon has seen growth in the use of Perforce for enterprise revision control, and Methodics is open to it or any number of other underlying data management systems.

The most important aspect is that they use the underlying data management system in its native form, making it an open system. This makes it easier to integrate their ProjectIC into an existing corporate Perforce installation, with preexisting assets. Perforce provides a lot of valuable services, but Methodics has invested 20 man years of development on top of Perforce, to accommodate large multi-site design with potentially thousands of users. Simon says that this has consistently allowed Methodics to win challenging benchmarks.

Digging deeper, Simon explains that they have come at this problem from an enterprise IT angle, by taking concepts from DevOps, which is a movement in software development to enable rapid and continuous releases, improving quality of results by combining disciplines from development, release, testing and IT. Simon calls what they are doing “DevOps colliding with EDA”. This concept connects developers with consumers and monitors quality through continuous release.

Methodics has gone beyond what other design management solutions are doing by implementing not just file level versioning but also block level dedup, a more difficult challenge, to save space and increase performance. A fascinating example of Methodics innovation is their development of a hardware appliance, WarpStor. It is a content aware NAS optimizer that attaches to the network and links the design management software with existing high performance NAS subsystems. By serving as an intermediary with caching and intelligence about the design data, it can reduce workspace creation time and storage requirements and improve throughput.
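To make the idea of block level dedup concrete, here is a minimal sketch in Python. It is not Methodics’ or WarpStor’s implementation. The `BlockStore` class, the fixed 4 KB block size and the use of SHA-256 digests as block keys are all assumptions chosen for illustration.

```python
import hashlib

BLOCK_SIZE = 4096  # assumed fixed block size, for illustration only


class BlockStore:
    """Hypothetical content-addressed store: each unique block is kept once."""

    def __init__(self):
        self.blocks = {}  # digest -> block bytes
        self.files = {}   # file name -> ordered list of digests

    def put(self, name, data):
        """Split data into blocks and store only blocks not seen before."""
        digests = []
        for offset in range(0, len(data), BLOCK_SIZE):
            block = data[offset:offset + BLOCK_SIZE]
            digest = hashlib.sha256(block).hexdigest()
            self.blocks.setdefault(digest, block)
            digests.append(digest)
        self.files[name] = digests

    def get(self, name):
        """Reassemble a file from its list of block digests."""
        return b"".join(self.blocks[d] for d in self.files[name])

    def stored_bytes(self):
        """Bytes actually held, after deduplication."""
        return sum(len(block) for block in self.blocks.values())


# Two versions of a file that differ in only the middle block.
store = BlockStore()
v1 = b"A" * BLOCK_SIZE + b"B" * BLOCK_SIZE + b"C" * BLOCK_SIZE
v2 = b"A" * BLOCK_SIZE + b"X" * BLOCK_SIZE + b"C" * BLOCK_SIZE
store.put("block_v1.gds", v1)
store.put("block_v2.gds", v2)
```

File level versioning would keep six blocks for these two versions. The sketch keeps four, because the unchanged first and last blocks are shared.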

Methodics has taken the time to rethink the nature of IP development, release and management. Part of this comes through Simon’s previous experience in this space, and part of it is because their CTO, Peter Theunis, has an extensive background in enterprise software and infrastructure development. Their recent strong growth and 100% customer retention rate are indicative of a successful strategy and execution. For more information on Methodics you can find their website here.

Qualcomm Rounds Out IoT Offerings

Lots of chip companies like ARM Holdings, Intel, NVIDIA and Qualcomm are spending time and effort to find a place for themselves in the IoT market because they, like I, believe in a gigantic, future market. Some companies are focusing on wearables and drones while others are looking to automotive and smart home. Qualcomm previously spent a lot of their effort on repurposing smartphone chipsets for IoT purposes, which in the short term made sense, but in the long term wasn’t really sustainable.

Qualcomm saw this challenge and, I believe, has met it with a multitude of new technologies that address almost every major IoT segment. This is partially because Qualcomm is a big enough company, like Intel, that they can focus on multiple verticals at the same time and actually grow businesses out of them. At CES 2016, Qualcomm showed off the purpose-built products they have designed for IoT. These products include a smart home reference platform, an automotive grade Snapdragon 820A, an LTE modem optimized for IoT and a Bluetooth smart SoC for IoT. They also made some announcements around Wi-Fi connectivity and self-organizing networks, which still somewhat ties into IoT.

Smart home reference platform

Qualcomm’s smart home reference platform is based on Qualcomm’s Snapdragon 212 processor and is designed to provide everything necessary to help a company like Nest, Ring, or Canary build a smart home device or light hub. This reference platform is designed to allow virtually all of the ‘smart’ devices in the home to communicate with one another and to enable a single, easily manageable point of control. The Snapdragon 212 has a 1.3 GHz quad-core ARM Cortex-A7 CPU and an Adreno 304 GPU. These specs alone are enough to allow for some decent media capabilities as well as processing audio, voice, and video all at the same time. It also has built-in wireless connectivity with 802.11a/b/g/n as well as Bluetooth 4.1, Bluetooth LE and NFC. These specs make it quite clear that this platform isn’t designed for the high end and that its purpose is to satisfy the needs of the soon-to-be-mainstream smart home market. What is interesting, however, is the added support for a display and an 8 MP camera, which allows smart home hub designers to integrate displays and cameras into their hubs if they want.

Internet of autos

In the automotive sector, Qualcomm announced the Snapdragon 820A, an automotive variant of the Snapdragon 820 smartphone processor. This processor is designed to deliver the absolute best Qualcomm performance and experience to users, which is very likely why they’ve introduced it: the only other automotive chip Qualcomm had was the Snapdragon 602A. The Snapdragon 820A means that automakers like Audi, who will be using the 602A in their 2017 vehicles for their MMI (Multi-Media Interface), now have access to significantly more horsepower to power the infotainment of the vehicle. Qualcomm said the Snapdragon 602A will be shipping in the A5 and Q5 models in Audi’s 2017 lineup. It now appears that NVIDIA will hold the higher models like the Q7 and A8.

The Snapdragon 820A has all of the features of the Snapdragon 820, which include the powerful Kryo CPU cores, Adreno 530 GPU and Hexagon 680 DSP. There is also a Snapdragon 820Am which integrates a Snapdragon X12 LTE modem, much like the consumer version of the Snapdragon 820. This part could be Qualcomm’s key to success as it enables 600 Mbps downlink and 150 Mbps uplink and is essentially designed with car manufacturers’ update cycles in mind. Because the X12 LTE modem is so far ahead of where most networks are today, car manufacturers are essentially ‘future proofing’ their wireless connectivity for the next 2-3 years. Plus, having the modem integrated into the SoC is something that most automotive manufacturers would love to have, since it frees up space: they do not have to find a place for, and run wires to, two different chipsets. In addition to industry-leading connectivity, the 820A also has “smart” (aka cognitive) features like its Zeroth technology, which allows the vehicle to identify objects and potential dangers through computer vision and to keep learning as it is used.

The Snapdragon 820A also features driver assistance with lane departure warnings, vehicle detection and traffic sign recognition. These features are designed to get Qualcomm into the heart of the connected car rather than just powering the infotainment system. They want to control the dash and center console in addition to the infotainment system. This means more future integration of Snapdragon capabilities in cars and more chips shipped. Qualcomm also incorporates V2X capabilities, which are designed to prevent collisions with other vehicles or people through vehicle to vehicle communication or vehicle to mobile device communication.

I’m fascinated by the back and forth between Qualcomm, NVIDIA and Intel in this space.

Low power LTE for IoT

One feature missing from most wearables today is a low power wireless solution, so that one doesn’t need to have their phone to make the device useful. Battery-powered industrial IoT solutions and even drones need this same capability, too. As Qualcomm is a connectivity company, it comes as little surprise that the company announced a new LTE modem at CES 2016, the Snapdragon X5 LTE (9x07), which is designed to cover the company’s bases in the LTE connectivity area while also providing IoT connectivity. In fact, the X5 LTE is pin compatible with Qualcomm’s other IoT chipsets, the MDM9207-1 and MDM9206. The X5 LTE modem also features a tiny, integrated ARM Cortex-A7 applications processor to allow for a complete solution that supports LTE connectivity up to LTE Cat 4. To my knowledge, this is the first time Qualcomm has integrated a “true” applications processor into a modem, as they’ve traditionally integrated modems into their applications processors. This is yet another move by Qualcomm to help keep down costs for their customers by using less board space and a smaller bill of materials. These chips are all designed to fill the connectivity needs of IoT OEMs and to allow them to build the best possible devices at the lowest possible cost. This strategy should allow Qualcomm to be somewhat competitive with others in the space, since they are offering three different connectivity options that are all pin compatible with each other.

New Bluetooth SoC

Another connectivity announcement, born out of Qualcomm’s acquisition of CSR, is the CSR102X family of Bluetooth 4.2 SoCs designed for ‘always-on’ Bluetooth connectivity. This chip is squarely focused on satisfying the Bluetooth needs of the lowest power wearables, home automation platforms and smart remote controls, where performance and battery life are absolutely crucial. This family of Bluetooth chips is also designed to improve the Bluetooth audio capabilities of connected devices for better voice commands and audio quality. I could see a processor like this targeted at a Fitbit rather than an Apple Watch.

Wi-Fi SON makes sense of multiple Wi-Fi frequencies and features

Last but not least is Qualcomm’s introduction of Wi-Fi SON (Self-Organizing Networks) as a part of their suite of Wi-Fi capabilities. Wi-Fi SON borrows the SON capability from the 3GPP Release 8 LTE standard and from Qualcomm’s own UltraSON technology designed for small cell LTE networks. These Wi-Fi SON features are designed to bring the cellular experience to Wi-Fi networking by making the process of connecting and sending data much less painful than it is today. This means that the routers have self-configuring, self-managing, self-healing and self-defending capabilities thanks to the chips built into the access point.

These features are designed to make Wi-Fi more plug and play while also providing autonomous QoS (quality of service) management for different types of data and enabling mesh and multi-hop network topologies, with multiple access points connected together to deliver optimal coverage. The self-defending capabilities are also extremely important to prevent people from hacking into a wireless network purely by brute-forcing their way in. With learning capabilities, the Wi-Fi SON routers can adapt to the security situation and prevent unauthorized access. Companies like Linksys, ASUS, TP-Link and D-Link are already on board with Wi-Fi SON capabilities, and D-Link has already won an innovation award for that capability. Qualcomm also said that they expect to have cellphone-side features that can make use of Wi-Fi SON in the future as well, but didn’t give many details. Wi-Fi SON is also designed to work not just on the latest 802.11 standards, like 802.11ac, but also on older ones such as 802.11n.

Wrapping up

Overall, Qualcomm’s announcements at CES 2016 were squarely focused on more firmly placing the company’s foot in the IoT space, and they give the company a more solid foundation for when IoT starts to become commonplace. Until then, we are going to have to wait and see what products get created with all of these new and different chips and wireless capabilities. Qualcomm is establishing itself as one of the big wireless solutions providers for IoT and enabling its customers to deliver the same kinds of IoT experiences that their smartphone customers expect to receive, while also being cost-sensitive and supporting the right standards.

More from Moor Insights and Strategy

4 goals of memory resource planning in SoCs

The classical problem every MBA student studies is manufacturing resource planning (MRP II). It quickly illustrates that at the system level, good throughput is not necessarily the result of combining fast individual tasks when shared bottlenecks and order dependency are involved. Modern SoC architecture, particularly the memory subsystem, presents a similar problem.

Of Steering Wheels and Buggy Whips

At the heart of automated driving is control of the steering wheel, gas and brake pedals in the car. Based on NHTSA’s recently negotiated agreement with car makers, those selling cars in the U.S. will add automatic emergency braking to their cars by 2022. So it seems that we humans are already ceding control of the brake pedal. Can the steering wheel be far behind? (Cars with automated driving will also see the gear shift removed.)

A three-way battle is emerging for control of that steering wheel in the car. NHTSA and European regulators want to keep it in the car. Google wants to remove it. Tesla wants to leave steering wheel control entirely at the discretion of the driver at his or her own risk.

I mention Tesla because the company has managed to straddle NHTSA’s and SAE’s defined levels of automated driving to the consternation of the rest of the automotive industry. But more on this in a moment.

At the core of the battle is whether or not a computer can handle the steering entirely on its own or requires the help of the driver and, if it does require assistance, how much and under what conditions and legal obligations? Google is suggesting the computer can and should handle the entire steering process without human assistance. NHTSA and SAE believe the driver should be prepared and able to steer at all times within specified guidelines. Tesla wants to leave steering decisions to the computer or the driver depending on circumstances and at the driver’s risk and discretion with no guidelines.

(Clarification: Multiple car makers are preparing Level 3 automation for use in predefined areas with predictable automation activation and de-activation. This contrasts with the ad hoc Tesla approach.)

I met with a supplier of resistive touch sensors for steering wheels this week, Guttersberg Consulting, to explore this topic. I couldn’t help but wonder if it was actually the turn of the century and I was meeting with a maker of buggy whips. Aren’t steering wheels going to become superfluous? This gentleman assured me that steering wheels will continue to be in high demand far into the future.

Supporting his contention that steering wheels will endure is the preference expressed by regulators in the U.S. and Europe – that steering wheels remain in cars. (The Vienna Convention, which applies in Europe, also requires a driver to be physically present.) This executive from Guttersberg Consulting, living in Germany and, therefore, Europe, believes consumers everywhere are still enthusiastic about driving – which helps explain the interest in steering wheels and Level 3 automated driving.

Level 3 automated driving – based on NHTSA and SAE guidelines – is a hybrid driving experience that provides for the driver standing by, ready to re-take control of the car. Level 3 requires a driver detection system and a process for determining when the driver is present and when that driver must take control with a sufficient amount of time provided for transition to human driving.

Level 3 contrasts with the approach of Tesla Motors, which has no system for driver detection or driver awareness determination – though there is a driver alert when the computer discovers it can no longer proceed on its own. The lack of driver detection explains the YouTube videos with Tesla “drivers” pictured in the rear seat during vehicle operation. Tesla’s autopilot is almost entirely ad hoc and up to the driver, with Tesla relieved of responsibility.

Outside of Germany, most car companies have indicated their plans to skip Level 3 because of what is seen as an insurmountable challenge of somehow implementing a safe hand-off of control from computer to human. German car companies are so far still seeking to enable a Level 3 experience since it appeals to their interest in preserving and extending the role of the human driver in vehicle control – e.g., BMW’s “Ultimate driving experience.” Maybe this becomes the ultimate ASSISTED driving experience.

The question, therefore, is how long it will take to transition from a steering wheel-centric Level 2 automated driving experience to a steering wheel-optional Level 4 and whether Level 4-capable cars will continue to have steering wheels. And, further, what role will this Level 4 vehicle play? Will the car be owned and will anyone want to own this car?

My steering wheel-enhancing friend at Guttersberg is approaching automated driving from a completely different standpoint. Rather than STARTING with automated driving as the goal of the complete driving experience, his company views automated driving as a default system to protect the driver from him or herself or in the event of incapacitation or driver distraction.

Guttersberg’s technology is intended to recognize when the driver has taken control or re-taken control of the car or, even more importantly, when the driver has lost control of the car. In the event of driver distraction or a medical emergency, Guttersberg’s technology will detect the presence or absence of a hand on the wheel.

Should the driver’s hand leave the wheel due to fatigue or medical emergency, the Guttersberg-enhanced steering wheel will alert a system that will allow the car to shift automatically to an automated safe mode and call for assistance, if appropriate. The steering wheel airbag will also be there to protect the driver in the event of a crash.

A host of companies including TRW and Autoliv are focused on enhancing the steering wheel to make driving safer, while companies like Guttersberg and Neonode are focusing on using the steering wheel as the ultimate driving sensor and interface. The steering wheel of the future will detect the driver and his or her attention to the driving task.

Guttersberg’s vision of automated driving is a compelling one. Automated driving becomes the ultimate safety system – always standing by to take control when the car is in danger of a potentially catastrophic maneuver. Guttersberg’s resistive sensors, which do not interfere with steering wheel heating, also enable interfaces for accessing content and other applications.

Automated driving becomes the default driving mode in this view, rather than the primary driving mode. Looking at automated driving this way shifts the automated driving thought process away from a door-to-door Level 4 phenomenon with all of the related challenges and ownership disruption, to the ultimately safe driving experience desired by most car buyers. In such a world steering wheels are far from buggy whips. They are an essential tool for making driving safer.

Roger C. Lanctot is Associate Director in the Global Automotive Practice at Strategy Analytics. More details about Strategy Analytics can be found here: https://www.strategyanalytics.com/access-services/automotive#.VuGdXfkrKUk

Waze May Not Be So Evil After All

In contrast to the opinions in a recent article here, I think Waze is extremely beneficial to the individuals who use it, other drivers – by virtue of more efficient road usage, and the various jurisdictions that oversee roads and highways. For those not familiar with Waze, it is a smartphone app that provides navigation and route planning using real time traffic information. The major premise is that by using GPS information and user reports, Waze can assemble a more accurate picture of road conditions and hazards. By combining crowd sourced traffic and hazard information with route planning, Waze is effective at shortening commute travel time and improving safety.

Waze is really good at route planning. When it sees congestion it will direct users to other roads that can be quicker. Instead of leading to more congestion on side roads as has been suggested, my experience is that it has a bias towards highways – they are usually faster after all. Plus, if one of the alternate routes becomes overloaded, Waze will plan routes that avoid that congestion too. Waze serves as a load leveling system for roads that increases overall utilization and efficiency.
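The load leveling behavior described above can be illustrated with a small sketch. This is not Waze’s algorithm, which is not described here. It assumes a plain shortest-path search (Dijkstra) over current travel times, and the road names and minute values are invented for the example.

```python
import heapq


def fastest_route(graph, start, goal):
    """Dijkstra over travel times; graph[u] = {v: minutes}."""
    queue = [(0.0, start, [start])]
    settled = set()
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == goal:
            return cost, path
        if node in settled:
            continue
        settled.add(node)
        for nxt, minutes in graph.get(node, {}).items():
            if nxt not in settled:
                heapq.heappush(queue, (cost + minutes, nxt, path + [nxt]))
    return float("inf"), []


# Hypothetical commute: the highway is normally faster than the side road.
roads = {
    "home": {"highway": 5, "side_road": 8},
    "highway": {"work": 10},
    "side_road": {"work": 12},
}
normal = fastest_route(roads, "home", "work")

# Congestion is reported on the highway, so its travel time rises.
roads["highway"]["work"] = 30
rerouted = fastest_route(roads, "home", "work")
```

With these made-up numbers the first call picks the highway (15 minutes) and the second picks the side road (20 minutes). If the side road later filled up, raising its travel time would push the route back the other way, which is the leveling effect.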

More than once, when I have encountered traffic and flagged it, immediately Waze will update the map to make the route yellow or red. Waze ranks user reports based on how long the user has been using Waze, and probably on the accuracy of their previous reports. It is not unlike a consumer credit scoring system for Waze traffic reporters. One complaint about crowd sourced traffic is that individuals might try to rig the system by falsely reporting traffic on their favorite route home. This is prevented by checking to see if their vehicle is actually moving – as opposed to parked – and by comparing that one user’s report to other vehicles in the same area.
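As a rough illustration of how those checks could fit together, here is a sketch in Python. It is my own guess at the logic, not Waze’s code. The `Report` fields, the trust score, and every threshold are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Report:
    user_trust: float  # hypothetical 0..1 score from tenure and past accuracy
    speed_kmh: float   # reporter's own GPS speed when the report was filed


def congestion_confirmed(reports, nearby_speeds_kmh,
                         free_flow_kmh=60.0, min_trust=1.0):
    """Accept a traffic report only if trusted, moving users filed it
    and other vehicles in the same area are also slow."""
    # Discard reports from vehicles that appear to be parked.
    moving = [r for r in reports if r.speed_kmh > 1.0]
    total_trust = sum(r.user_trust for r in moving)
    if not nearby_speeds_kmh:
        return False  # nothing to compare the report against
    average = sum(nearby_speeds_kmh) / len(nearby_speeds_kmh)
    # Assumed rule: "slow" means under half the free-flow speed.
    return total_trust >= min_trust and average < 0.5 * free_flow_kmh
```

A single report from a parked car is ignored no matter how trusted the user is, and a report that nearby vehicles contradict is rejected even when several users file it.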

Because Waze uses real time information, its maps and traffic info are up-to-the-minute, avoiding a major problem that traditional GPS navigation systems encounter. Waze will even provide ETA updates or route changes based on changing traffic conditions during a drive. Having Waze run on a smartphone is also good because phones are more easily updated than built-in navigation systems found in most cars. My 2005 SUV has an obsolete boat-anchor GPS system that has become unusable due to its ancient user interface, limited capability, and inability to read its outdated road data DVD. Updating it with a newer system is simply not an option. During the life of a car, its owner(s) will likely go through several generations of smart phones and app updates.

The Waze user interface is very easy to use and is not distracting. I’d probably have a harder time using a frozen-in-time built-in navigation system. Furthermore, Waze can fine-tune their app to continuously improve the ease of use and minimize driver distraction. Yes, it does display ads, but only when you are stopped.

Next comes the topic of how municipal traffic information should be shared. Contrary to the assertion that Waze is stealing the keys to the city, Waze is sharing information exactly as it should be. Cities have closure and road condition information that needs to be distributed – in any and every way that is feasible. Choosing to share this information with Waze in no way limits their ability to share it with others.

Waze too has useful information sourced from its users. Sharing this with municipalities makes sense, especially in emergencies and during unpredictable events. There does not seem to be a downside to the exchange of information when both parties and the public stand to benefit.

Perhaps, as some argue, Waze is undermining 511 initiatives. However, 511.org openly makes their database available to app developers and even features Waze and other navigation and traffic apps on their site. The type of information that 511 systems can provide is best delivered in a frictionless manner during navigation system route planning and visually in real time on the road. Far from being a threat to 511, Waze seems to be complementary.

Along with potholes, objects in the road and stalled vehicles, users can report police car locations. People usually don’t bother reporting police cars that are in motion. Only the ones parked by the road are reported, so Waze is not a useful way to locate police if you are contemplating a crime. And anyway, for that there are police radio scanners. However, for the same reason police cars are marked to begin with, these reports can help encourage drivers to slow down and drive more cautiously near a police car location. Isn’t that the goal of traffic enforcement anyway, not simply to issue tickets?

We have all seen empty highway patrol vehicles when they park near road construction projects – same idea. In fact, the Highway Patrol probably wants Waze users to report them. What’s more, the absence of a reported police car on Waze offers no assurance that you are in a free for all speeding zone.

Another previously stated comment related to the Federal Railroad Administration’s efforts to ensure that Google Maps has accurate information for railway crossings on roads. I’m certain that this information is not being made exclusively available to Google. But regardless, the good news is that Waze shares Google Maps information – so train crossing information will be incorporated into the Waze app. By the way, Google acquired Waze some time ago, so Waze already benefits from Google traffic info and other map features. And, contrary to what was asserted, Google Maps is not just a smartphone app, but an extensive database used through the web and programs like Google Earth.

Waze is not some evil plot to sequester road and traffic information. Rather it is a brilliant and in many ways obviously useful service that helps optimize traffic levels. More than once it has saved me from wasting time in traffic by finding an alternative route that simply avoided congestion.

One, Two, Many – Why You May Not Be Replaced By A Robot

Some aboriginal tribes in Australia see little value in counting and are believed to discriminate only between “one”, “two” and “many”. This is not through lack of intelligence; beyond two they simply lose interest in the details. We can smile and feel superior but I suspect we are not much better when it comes to predicting our technology future. We understand “one” (what we have right now) and technical experts reasonably understand “two” (modest future enhancements on what we have right now), but I respectfully submit that, given our lack of attention to detail, our best guesses when we look just a little further out are no better than “many”.

Not that this stops us couching forward-looking views in the appearance of considered wisdom. A current example can be found in popular coverage of Artificial Intelligence (AI) and robots. There’s no question that impressive advances have been made on both fronts. Vision systems now recognize targeted classes (such as road signs or dog breeds) with higher accuracy than we are able and others have bested human competitors in chess, Go and game-shows. And it is common for factories to use robots rather than human workers because they are faster, more accurate and cheaper to operate and maintain.

So naturally we jump to our “many” predictor (while skipping the boring details) – if these things are already possible, surely it is only a matter of time before all human tasks are performed by artificially intelligent robots and then what will we do? Depending on the writer, the end-game is either a utopia where we all do whatever we please – learn, indulge our artistic passions and play games – or a dystopia where the machines rule and we humans become at best slaves to serve the machines, or worse yet a virus to be eliminated.

This is all very well for science fiction novels, but if we’re aiming for an informed opinion on future trends, I think we can and should do better than casual extrapolation from a few points (something none of us would do to a graph). Especially we should realize that where machines do well, they do so in performing bounded, repetitive and (in manufacturing applications) high-volume tasks in a stable and well-characterized environment. Some tasks may seem particularly impressive (winning at Jeopardy or Go) but they still fall within this description. Remove any of these constraints and the machines become expensive doorstops. By way of example consider three human tasks where the popular view falls short on closer examination.

Think about drywall installation – not a skilled task by most measures. It doesn’t require a lot of training, it’s repetitive and it certainly doesn’t require advanced education, but think about the constraints. The environment is not well characterized – houses have different and often custom shapes and the area around the house (through which you must carry the drywall) may be a sea of mud. The installer must work in confined spaces running over floors designed only to hold people and furniture. Homes under construction are scattered all over the place and the drywall machine has to be moved to each house for each installation – not exactly a high-throughput application. Maybe you fix this by only manufacturing pre-built homes on an assembly line. But a home is a very important part of our identity. I doubt that anytime soon we will all submit to living in mass-manufactured boxes.

Next take teaching. Teachers do many things; think about just one – getting students to understand subject matter. Teachers are required to have degrees and additional qualifications – if you like they need a substantial database of information – but a good teacher needs more than that. They need to be able to see which students are stuck, understand why they are stuck and help them get past those problems, perhaps by presenting the material in a different way. They may also need to encourage or motivate a student. These are skills that require understanding how we learn in general and how a particular student deviates from that norm, something we barely understand ourselves, much less know how to code into a machine.

Let’s get away from mechanical (and perhaps interpersonal) skills and consider a software programmer as another example. Educational requirements are arguably comparable to those for a teacher but the application is different. A programmer must map problem requirements into code or search existing code for a bug (not so different from a teacher, where the code is the student’s understanding). We can automate through higher programming abstractions but unless the requirement is a minor increment on an existing code base, the programmer must creatively select a method from a potentially infinite set of possibilities. This requires judgment as much as experience, which is as difficult to teach as it would be to codify for a machine.

These are just three of a wide range of tasks that we humans perform. At the end of it all, people are very adaptable and machines are much less so. Could machines eventually become more adaptable? Perhaps, but would it be worth the effort? I doubt it – replacing you with a robot would not be anywhere near as cost-effective as extending your abilities through AI and automation (with the expectation that you will also adapt). This will certainly change the nature of human-powered work. It just won’t change the need for human-powered work. Which, I respectfully submit, is how our technology future will really evolve.

Analog Mixed-Signal Layout in a FinFET World

The intricacies of analog IP circuit design have always required special consideration during physical layout. The need for optimum device and/or cell matching on critical circuit topologies necessitates unique layout styles. The complex lithographic design rules of current FinFET process nodes impose additional restrictions on device and interconnect implementation, and on device placement, which further complicates the analog IP layout task.

To manage some of the layout complexities, features have been added to the schematic/layout tools to record the design intent — i.e., a schematic database property (“constraint”) which can be used to assist with initial layout generation, and be checked against the final implementation. Yet, the need for additional analog IP layout automation remains a key issue.

I recently had the opportunity to review the topic of analog IP layout productivity with Bob Lefferts, Director of R&D for Mixed-Signal IP at Synopsys. Bob was passionate about the topic, and he should know — he manages the CAD team that supports over 1,000 analog IP designers and layout engineers, who deliver a breadth of IP functionality over multiple process nodes and multiple foundries.

First, a little background on analog layout design…

The implementation of analog circuits involves the judicious placement of devices, and especially of multiple device “fingers”, such that groups of devices will have matching characteristics. The goal is to reduce the sensitivity of circuit performance to mask overlay and fabrication on-chip variation, aka “OCV”. These same considerations apply to the interconnect patterns connecting matched devices (and their individual fingers).

To represent this design requirement, layout tools have been enhanced to accept constraints added to the design database. For example, schematic devices in a differential input pair can be tagged as “matched”; the corresponding device and pin layouts could have constraints added to require:

- a specific orientation (although most current FinFET process nodes require a single orientation for gates and lower-level metal layers)

- relative positioning

- min/max separation, or “bounding box” area limits

- alignment between devices (e.g., center/edge; horizontal or vertical alignment)

- symmetry of devices relative to an axis
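
As a rough illustration of how constraints like those listed above could be checked against a finished placement, here is a minimal Python sketch. The `Placement` record and the three check functions are hypothetical names invented for this example. They do not correspond to any EDA tool's constraint API, and a real check would operate on full device geometry rather than center points.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Placement:
    """Center point of a placed device (hypothetical, simplified record)."""
    name: str
    x: float
    y: float


def symmetric_about_vertical_axis(a, b, axis_x, tol=1e-6):
    """True if a and b mirror each other across the line x = axis_x."""
    midpoint_on_axis = abs((a.x + b.x) / 2 - axis_x) <= tol
    same_height = abs(a.y - b.y) <= tol
    return midpoint_on_axis and same_height


def within_separation(a, b, min_sep, max_sep):
    """True if the center-to-center distance lies in [min_sep, max_sep]."""
    distance = ((a.x - b.x) ** 2 + (a.y - b.y) ** 2) ** 0.5
    return min_sep <= distance <= max_sep


def horizontally_aligned(a, b, tol=1e-6):
    """True if both device centers share the same y coordinate."""
    return abs(a.y - b.y) <= tol


# Two halves of a differential pair, placed either side of x = 2.0.
m1 = Placement("M1", 1.0, 2.0)
m2 = Placement("M2", 3.0, 2.0)
print(symmetric_about_vertical_axis(m1, m2, axis_x=2.0))
print(within_separation(m1, m2, min_sep=1.0, max_sep=2.5))
print(horizontally_aligned(m1, m2))
```

Recording each constraint as a small predicate keeps the schematic-side intent ("these two devices are matched") separate from the layout-side check, which is the split the constraint properties described above aim for.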

A “centroid-type” layout of a diff pair has been used historically, as it reduces sensitivity to x, y, and rotational overlay tolerances.

(Example of centroid orientations of multiple device fingers in a matched diff pair.)
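
To see why a centroid-type arrangement helps, one can compute the mean position of each device's fingers: when those centroids coincide, a linear gradient across the array affects both devices equally to first order. The sketch below assumes fingers sit on a unit grid and are encoded as strings such as "ABBA". The encoding and the function names are illustrative assumptions only.

```python
from collections import defaultdict


def centroids(grid):
    """Return {device: (x, y)}, the mean grid position of each device's fingers."""
    sums = defaultdict(lambda: [0.0, 0.0, 0])
    for y, row in enumerate(grid):
        for x, device in enumerate(row):
            entry = sums[device]
            entry[0] += x
            entry[1] += y
            entry[2] += 1
    return {d: (sx / n, sy / n) for d, (sx, sy, n) in sums.items()}


def is_common_centroid(grid, tol=1e-9):
    """True if every device in the grid shares the same centroid."""
    points = list(centroids(grid).values())
    x0, y0 = points[0]
    return all(abs(x - x0) <= tol and abs(y - y0) <= tol for x, y in points)


# Simple interdigitation: the centroids of A and B are offset by one pitch.
print(is_common_centroid(["ABAB"]))
# Two-row cross-coupled pattern: both centroids land at (1.5, 0.5).
print(is_common_centroid(["ABBA", "BAAB"]))
```

Note that this only checks finger positions; it says nothing about the routing, which the article points out must be matched as well.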

A more sophisticated layout assist feature is to allow grouping of individual cells, such that all devices in the group are bound and move together.

Bob highlighted that these typical constraints are no longer sufficient in FinFET process nodes, due to additional requirements:

- minimizing local mask density variation

- assigning common multipatterning “mask color” layer designations, while simultaneously satisfying color density balance design rules

- incorporating dummy layout data for regular litho periodicity; satisfying FinFET-on-grid and gate periodicity restrictions

- matching layout-dependent effect (LDE) parameters in device models

An additional consideration that Bob stressed was the process design rule restrictions on device channel length and device channel width. His team is developing design flows in the most advanced FinFET process nodes, so that qualified IP is ready with production PDK releases from the foundries. These process nodes offer very limited options for device channel length, which severely hampers custom analog design. As a result, the implementation must incorporate multiple devices in series to effectively realize a longer L value. This amplifies the complexity of generating analog layout that satisfies matching and variation-insensitivity requirements.
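
The series-stacking idea can be illustrated with simple arithmetic. To first order, n devices of channel length L in series with a shared gate behave like one device of length n·L, so the stack count is the target length divided by an allowed length, rounded up. In the sketch below, the allowed lengths and the target are made-up numbers, not values from any PDK, and the function name is hypothetical.

```python
def pick_series_stack(target_nm, allowed_nm):
    """Return (unit_length, count, effective_length) with the least overshoot.

    Lengths are integers in nanometres. Ties on effective length are broken
    in favor of fewer stacked devices.
    """
    candidates = []
    for unit in allowed_nm:
        count = -(-target_nm // unit)  # integer ceiling division
        candidates.append((count * unit, count, unit))
    effective, count, unit = min(candidates)
    return unit, count, effective


# Hypothetical case: a 100 nm effective length from 16 nm or 20 nm devices.
print(pick_series_stack(100, [16, 20]))  # (20, 5, 100)
```

Every device in the resulting stack then needs its own matched placement, which is why a restricted set of channel lengths multiplies the layout effort.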

Bob also spoke about constraint-driven layout, saying, “Several unsuccessful attempts have been made where EDA tools have asked the layout/design engineer to add textual constraints so the layout effort can be automated. But layout engineers don’t think like a programmer and instead operate in a visual context. Asking a layout person to create lines and lines of textual constraints is like asking an engineer to write poetry — only a very few will be successful.”

Bob clearly drove home the need for continued improvements in analog IP layout productivity, beyond the recording of constraints. He said, “We have a very close collaboration with the Synopsys custom tools R&D development team. Our design team meets regularly with R&D, and provides input on new features. These features invariably become part of the custom layout editor, schematic editor, and simulation environment. Specifically, we have worked on a unique method to improve the layout productivity on complex FinFET device and cell layouts. First, we place devices on interconnect tracks and then deal with fins, instead of snapping devices to fins, and then trying to make the interconnect line up. We also added a level of automation such that the layout engineer can concentrate on connecting devices to match the schematic while meeting all of the design rules.”

Complex pattern of matched series/parallel devices in analog layout (from Synopsys)

At the upcoming SNUG meeting in Santa Clara, Bob will be presenting details of the productivity gains that his design team has realized, and the results of the collaboration with the tools R&D team, in the talk “FinFET IP Design Using Synopsys Latest Innovation in Custom Tools”.

If you are a Synopsys user, I would encourage you to attend SNUG Silicon Valley on March 30-31, and Bob’s presentation in particular. Here is the link to the SNUG registration and schedule:

https://www.synopsys.com/Community/SNUG/Silicon%20Valley/pages/default.aspx

-chipguy

Key Takeaways from the TSMC Technology Symposium Part 1

TSMC recently held their annual Technology Symposium in San Jose, a full-day event with a detailed review of their semiconductor process and packaging technology roadmap, and of the production schedules for both risk and high-volume manufacturing.


Internet of Things Augmented Reality Applications Insights from Patents

US20150347850 illustrates an IoT (Internet of Things) AR (Augmented Reality) application in a smart home. A smart home IoT device communicates via a local network with a user AR device (e.g., a smartphone) to provide tracking data. The tracking data describes the smart home IoT device. The AR device can recognize the smart home IoT device in the camera view based on the tracking data. Once the smart home IoT device is identified in the camera view, the AR application can augment the camera view with additional information about the smart home IoT device and a control interface for it. The user can then control the smart home IoT device using the AR device.

US20150347850 illustrates an industrial IoT AR application. A machine broadcasts its status and related tracking data to a user AR device. The status includes a presence, an operating status, operating features, and characteristics of the machine. The tracking data includes a physical identifier and location information of the machine. When the user AR device is in proximity to the machine, the user AR device authenticates with the machine. Then, the augmented reality application in the user AR device generates directions to the machine and descriptions of the machine. The AR application can augment the camera view with interactive virtual functions associated with functions of the machine.

US20140063064 illustrates an IoT AR application in a connected car. An AR head-up display in the connected car overlays a virtual image conveying surrounding environmental information on the actual image of an external vehicle observed through the transparent display. The surrounding environmental information includes information about events occurring outside the vehicle (e.g., location information of the other vehicle, speed of the other vehicle, traffic lane of the other vehicle, and an indicator light status of the other vehicle), background information within a predetermined distance (building information, information about other vehicles, weather information, illumination information), accident information of the other vehicle, and traffic condition information. The surrounding environmental information is obtained via the V2X (V2V and V2I) communication system.

US20150310667 illustrates an IoT AR system for providing contextually relevant AR content to a user. The IoT AR system extracts features of a specific object at a location in the field of view of the camera of the user AR device. The IoT AR system assesses the situation of the user associated with the user AR device based on information obtained from surrounding IoT devices and sensors. Then, the IoT AR system provides a context-aware visualization of the specific object based on the assessed user situation.