UVM has become a preferred environment for functional verification. Fundamentally, it is a host-based software simulation. Is there a way to capture the benefits of UVM with hardware acceleration on an FPGA-based prototyping system? In an upcoming webinar, Doulos CTO John Aynsley answers this with a resounding yes. Continue reading “Webinar alert – Taking UVM to the FPGA bank”

Webinar alert – Smart homes demanding low power Wi-Fi

There are two camps of thinking on the IoT: those who believe Bluetooth and Wi-Fi rule the edge, and those who support any of dozens of other wireless networking specifications for their various technical advantages. The ubiquity of Wi-Fi in homes helps devices connect in a few clicks – so why don’t more IoT designers use it? Continue reading “Webinar alert – Smart homes demanding low power Wi-Fi”

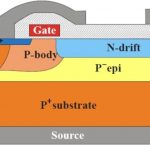

3D TCAD Simulation of Silicon Power Devices

Process and device engineers are some of the unsung heroes in our semiconductor industry who have the daunting task of figuring out how to actually create a new process node that will fit some specific market niche with sufficient yield to make their companies profitable and stand out from the competition. One such market segment is silicon power devices where the transistors are used to charge or switch a battery, control an electric motor, make a power supply, lighting control, automotive controls, or drive a discrete power device. In the early days the engineers would design a process experiment, fabricate, then take measurements to analyze how effective their ideas were, iterating until satisfied.

Today we cannot tolerate such slow iteration cycles, so instead we turn to specialized EDA software called 3D TCAD where the process and devices can be modeled and simulated to predict their electrical performance prior to actual fabrication. Silvaco is hosting a webinar on April 14th to show us how a 3D TCAD simulation can be done for silicon power devices.

LDMOS device cross-section

The webinar looks at how to apply both 2D and 3D cell design for vertical LOCOS (LOCal Oxidation of Silicon) power devices. Another application area for 3D TCAD is the 3D current filaments simulation for multi-cell IGBTs (Insulated Gate Bipolar Transistors). On the software side the Silvaco tool called Victory Device will be shown:

- Architecture of the software

- 3D rapid prototyping to detailed physical simulation

- Meshing approach

- Solvers

3D electric field distribution. Field is maximum at the corner of the trench.

Who should attend a webinar like this on 3D TCAD? If you’re a power device designer, power device process development engineer, production engineer, power device researcher, or a materials researcher then this webinar is going to be relevant. Using a TCAD tool like Victory Device shows you the electrical and thermal behavior of: Power MOS, LDMOS, SOI, thyristors and IGBTs. You can even model and simulate wide bandgap materials like SiC and GaN. The devices that you model can be embedded with a circuit then simulated with a SPICE circuit simulator to look at timing, current and power performance.

Using TCAD software enables you to do electrical, thermal and optical characterization of semiconductor devices, plus optimize the performance by making rapid iterations. This TCAD methodology will actually reduce the total process development time. You can even explore novel device technologies for use in next-generation devices.

Even though this webinar is focusing on power device users, with a TCAD tool like Victory Device you can model and optimize CMOS devices like FinFET and FDSOI. Here’s an example 3D FinFET simulated with a 3D fully unstructured tetrahedral mesh:

Even compound semiconductors can be modeled and optimized: SiGe, GaAs, AlGaAs, InP, SiC, GaN, AlGaN, InGaN. Optoelectronic response for devices like solar cells and CMOS image sensors can also be simulated. Finally, you can even model the effects of radiation such as single event upset (SEU), single event burnout (SEB), total dose and dose rate.

Webinar

This webinar is scheduled for April 14th at 10AM PDT, so sign up online today.


Mobile Unleashed…Reviewed

I finished reading Don Dingee and Dan Nenni’s book, Mobile Unleashed, the Origin and Evolution of ARM Processors in Our Devices. I guess by way of disclosure I should say that Don and Dan both blogged with me here on SemiWiki for several years before I joined Cadence, and Dan’s last book Fabless was co-authored with me (SemiWiki members can download it free). So I don’t claim complete objectivity.

Let me start by pointing out that this is a book for professionals in the mobile and semiconductor industries. This is both its strength and its weakness. You will learn a lot of things you didn’t know, even if you have been in the industry for decades. On the other hand, this is not the book to give to your mother to give her more of an idea about what you do all day. But as it says in the foreword:

We have waited over two decades for someone to tell this story.

Since the foreword was written by Sir Robin Saxby, the original CEO of ARM, this is high praise indeed.

You probably know the big picture story of ARM. Back in the day before the IBM PC, when there were dozens of different computer architectures, a company called Acorn won the contract to build the computer around which the British Broadcasting Corporation (BBC) created a series of educational television programs about computers and programming. Acorn struggled to repeat that success and took the decision that none of the commercially available microprocessors met their needs and so they would build their own microprocessor. In retrospect this was a great idea, but in some ways at the time it must have looked like something somewhere between insane and the ultimate in NIH (not-invented-here). The book covers this in more detail than I have seen before.

The processor was not a success and Acorn continued to struggle and was acquired by Olivetti, which soon was only interested in IBM PC-compatible products for the business market. The big stroke of luck came when Apple decided to build the Newton. The original ARM® processors were not designed specifically to be low power; nobody cared back then. But with its very simple architecture, it delivered the highest performance per watt of any processor available. Since the Newton needed reasonable power to do handwriting recognition, but also had to run on batteries, this was a really important measure and they selected ARM. But they insisted Acorn/Olivetti spin it out as a standalone company and so ARM was created (the A stood for Acorn originally, before it changed to Advanced).

The next big development was between TI (Wally Rhines, as it happened, before he became CEO of Mentor) and Nokia and ARM. One disadvantage of a 32-bit RISC architecture was that the code density was not good, since every instruction took 32 bits. On the plane on the way home from the meeting at Nokia the idea of what became Thumb was already well along: a mode in which the ARM would use 16-bit instructions, expand them to 32-bit and feed them to the original decoder. Code density would be good, and they wouldn’t need to do a complete redesign (this was before synthesizable cores). Thus, the ARM7TDMI was born, with the project lead being Simon Segars, who is ARM’s CEO today.
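To make the Thumb idea concrete, here is a toy software model of the "expand 16 bits to 32 bits" step for a single instruction form, move-immediate. It is only a sketch: the real ARM7TDMI does this in hardware for the whole Thumb instruction set, and the function name is made up for illustration. The bit layouts used are the standard Thumb and ARM encodings of `MOVS Rd, #imm8`.

```python
def expand_thumb_mov_imm(halfword: int) -> int:
    """Expand a 16-bit Thumb 'MOVS Rd, #imm8' into its 32-bit ARM equivalent.

    Toy model of one instruction format only (hypothetical helper name).
    """
    # Thumb move-immediate: bits 15..11 are 00100, bits 10..8 are Rd, bits 7..0 are imm8.
    if (halfword >> 11) != 0b00100:
        raise ValueError("not a Thumb MOV-immediate instruction")
    rd = (halfword >> 8) & 0x7
    imm8 = halfword & 0xFF
    # ARM: condition AL (0xE), data-processing immediate, opcode MOV, S bit set.
    return 0xE3B00000 | (rd << 12) | imm8


thumb = 0x2001  # MOVS r0, #1 in Thumb: 2 bytes
arm = expand_thumb_mov_imm(thumb)
print(f"Thumb 0x{thumb:04X} expands to ARM 0x{arm:08X}")
```

The same operation takes two bytes of program memory in Thumb instead of four in ARM, which is where the code density gain comes from, while the existing 32-bit decoder still sees an ordinary ARM instruction.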

See my interview with Simon Segars, The Design that Made ARM

Nokia grew to about a third of the entire mobile industry as first car phones and then mobile phones in general took off and became ubiquitous. ARM found itself sitting on a rocket ship as two things happened. Microprocessors became a small enough part of a chip that early SoCs could be designed with an embedded microprocessor and other circuitry. Suddenly every semiconductor manufacturer needed a microprocessor if they didn’t have their own in-house one already, and there weren’t very many choices available for license. Plus, with mobile taking off, everyone wanted to participate. So many (eventually most) semiconductor companies licensed the ARM7TDMI.

That takes the history of ARM to its second phase. The early days of ARM history are gradual: the architecture, the first ARM, the spinout, the ARM7, Thumb, mobile. But the second phase began in just an hour. Steve Jobs walked onto the stage of YBCA and said he would be announcing three new products: The first one is a widescreen iPod with touch controls. The second is a revolutionary mobile phone. And the third is a breakthrough Internet communications device.

Of course, we all now know that all three products were one, the first iPhone. And the world changed.

It wasn’t even obvious at the time how much it was changing. The CEOs of both Microsoft and Nokia dismissed it as just a handset. Both companies would effectively be driven from the mobile market by iPhone and its Android imitators.

The second half of Mobile Unleashed is the smartphone era. It doesn’t cover everything or it would be unreadable. It focuses on a few companies: Apple, Samsung, and Qualcomm. These are the right companies to focus on since they make all the money. The rest of the handset market, in aggregate, loses money. The story of each company is told in some detail: how they entered mobile and what they have done to keep competitive as the computational power expected of a smartphone has exploded but the power budget has remained roughly constant.

The book wraps up with a look into the future, in particular the market for wearables and Internet of Things (IoT), and the briefest mention of servers. But as the last chapter before the epilogue ends: The next chip that changes the world may come from any number of sources, but there is a good chance it will run on ARM technology.

There is a book called Certainly More Than You Want to Know About the Fishes of the Pacific Coast. If you don’t have a background in the semiconductor industry then this book will certainly tell you more than you want to know about ARM processors. But if you do have a background in the semiconductor industry then this is a book well worth reading. No matter how much you know about ARM and Apple and Qualcomm, Don knows more, and you will learn a lot.

This is actually cross-posted from Breakfast Bytes over at Cadence. If you wondered why I vanished from SemiWiki and haven’t found out yet, then go there and take a look. I put out a blog every day, sometimes about something Cadencey but most of the time not (the last couple of days have been CDNLive Silicon Valley so you can expect some coverage of the parts I attended, although with 12 parallel tracks that is a fraction of what was available).

Click here and check out my new home. There are already over 100 blogs up. There is a box towards the top right where you can subscribe and get an email whenever I post, which, like my blogs on SemiWiki, is normally 5am Pacific, unless it is tied to a Cadence press release (they don’t cross the wire until 7.45am). Get something interesting to read with your morning coffee.

Breakfast Bytes, fresh every morning.

Fabless vs IDM for Data Centers: Silicon Photonics as a Disruptive Force?

I recently received a copy of a book entitled Silicon Photonics III (Amazon) and while perusing the book I was captured by the first chapter entitled ‘Silicon Optical Interposers for High-Density Optical Interconnects’. The chapter covered the work of a team in Japan on an idea they termed “on-chip servers” and “on-board data centers”. It brought back to mind an article on SemiWiki about ARM and TSMC and how the server market will be the next big battle between Fabless and IDMs (Data Center Fabless vs IDM). Could silicon photonics be the disruptive force that enables such a battle in the data centers?

The team that authored the referenced chapter is part of a collaborative project between the University of Tokyo, Photonics Electronics Technology Research Association (PETRA) and Advanced Industrial Science and Technology (AIST). Their idea is to combine the die that would comprise a server board into a single packaged element using a Silicon Optical Interposer. This is 2.5D / 3D stacking with a twist as it makes use of silicon photonics for the horizontal connections between the die. Stacked die are still vertically connected using through-silicon-vias (TSVs). Laser diodes (LD), optical modulators (OM), photo-detectors (PD) and optical waveguides (OW) are integrated on the silicon interposer and are used by the digital ICs to communicate with each other. The group has demonstrated working prototypes with FPGAs flip-mounted on top of such an interposer running error-free inter-chip communications with a bandwidth density of 30 Tbps/cm² and a channel line rate of 20 Gbps.

Conclusions from the authors were as follows. “Since the maximum lithography field size (stepper shot size) in area and signal I/O pad percentage out of total pads for CPUs have currently been 8.58 cm² and 33% respectively and will be so in the future, we can obtain an overall inter-chip bandwidth at the level of several tens of Tbps by using silicon optical interposers, which is sufficient for the required bandwidth in the late 2010s or early 2020s.”
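The “several tens of Tbps” figure can be sanity-checked with a back-of-envelope calculation. The sketch below assumes, as one plausible reading of the quote and not the authors’ stated formula, that the total inter-chip bandwidth is the demonstrated bandwidth density times the maximum field area times the fraction of pads used for signal I/O. The function and variable names are illustrative only.

```python
def interchip_bandwidth_tbps(density_tbps_per_cm2: float,
                             field_area_cm2: float,
                             signal_io_fraction: float) -> float:
    """Rough total inter-chip bandwidth in Tbps (assumed model, not the authors' formula)."""
    return density_tbps_per_cm2 * field_area_cm2 * signal_io_fraction


# Figures quoted in the chapter: 30 Tbps/cm^2, 8.58 cm^2 field size, 33% signal I/O pads.
estimate = interchip_bandwidth_tbps(30.0, 8.58, 0.33)
print(f"Estimated inter-chip bandwidth: {estimate:.1f} Tbps")
```

Under that assumption the estimate comes out at roughly 85 Tbps, which is consistent with the authors’ “several tens of Tbps.”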

Take this one step further. The group’s goal is to show, by 2022, what they term “on-board data centers,” which will combine multiple “on-chip servers” onto a single optical board. Communications between the on-chip servers, both on a single board and between boards in a rack, would be done through photonic optical communications. To enable this the group is working on what they call “optical I/O cores”. The first incarnation of these optical I/O cores will be designed to be mounted in an Active Optical Cable (AOC) module and then the next generation will be designed to be integrated around a host-LSI in the LSI’s package. The group has already demonstrated error-free data links using the optical I/O cores at 25 Gbps over a 300 meter multi-mode fiber (MMF). This implies that 100 Gbps data links (25 Gbps x 4 channels) are feasible with a 3X longer reach and less power consumption than conventional SR10, SR4, LR4 or PSM4 implementations, even when you count the power required for the laser diodes to drive the optics. Will the IDMs such as Intel or Fujitsu use this type of architecture to keep the fabless guys out of the data centers? Or could a fabless company (say a Qualcomm with ARM cores) use this type of architecture as a wedge against the incumbent IDMs in the data centers? Intel is already making news with silicon photonics (Intel Announcements).

With all of that said, if we are truly going to see a battle between IDMs and Fabless in the server space we are going to need to start seeing some action out of the fabless foundries towards aggressively supporting production volume silicon photonics solutions.

Fit-for-purpose IoT ASICs are about more than cost

We’ve been saying for a while that it looks like there is a resurgence in design starts for ASICs targeting the IoT. A recent webinar featuring speakers from ARM and Open Silicon (and moderated by Daniel Nenni) affirms this trend, and provides some insight on how these designs may differ from typical microcontrollers.

One of my first friends and mentors in the electronics industry who was an electromechanical packaging wizard always said, “Hammer to shape, file to fit, paint to match.” Just about anything can be custom fabricated, including a chip these days. Does it make sense to build custom parts for IoT projects? (If that question sounds familiar, we covered another webinar from different vendors on the same topic last month – now we hear from ARM.)

ARM has always been up to the challenge of what Tim Menasveta, Cortex-M Product Manager, describes as fit-for-purpose designs. A good example of the type of design envisioned for the IoT is the Beetle test chip, leveraging a Cortex-M3 and a Cordio radio IP block and designed to run mbed OS. Tim tossed out an interesting factoid: the mbed OS developer base had grown to over 150,000 developers at the close of 2015.

But is this approach cost-effective for moderate volume IoT starts? One advantage is IoT parts are usually implemented on mature nodes. ARM is also pitching the idea of multi-project wafers to get to engineering samples for less than one might think – $16k on 180nm, and $42k on 65nm according to ARM data.

That is just fab costs however – ARM is, after all, in the IP licensing business. To help get licensing costs down, ARM has created DesignStart around the Cortex-M0 core. DesignStart offers a Cortex-M0 design and simulation package free of charge for evaluation purposes to registered users. When the design is ready for fabrication, ARM offers a simplified “fast track” commercial license for the Cortex-M0 priced at $40k. (No hints on pricing for Cordio.)
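Putting ARM’s quoted figures together gives a rough floor for getting to Cortex-M0 engineering samples. The sketch below simply adds the multi-project wafer cost to the fast-track license fee. It assumes no other costs (radio IP, design services, packaging, test), none of which are covered by the figures above, so treat the result as a lower bound and not a budget. The names are illustrative.

```python
# Figures quoted by ARM in the webinar (USD).
MPW_COST_USD = {"180nm": 16_000, "65nm": 42_000}
CORTEX_M0_FAST_TRACK_LICENSE_USD = 40_000


def entry_cost_usd(node: str) -> int:
    """Lower-bound cost of samples: MPW run plus Cortex-M0 license only."""
    return MPW_COST_USD[node] + CORTEX_M0_FAST_TRACK_LICENSE_USD


for node in MPW_COST_USD:
    print(f"{node}: ${entry_cost_usd(node):,}")
```

On these numbers the license fee, not the wafer run, is the larger line item at 180nm, and the two are roughly equal at 65nm.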

Access to processor IP does not a chip make. That is where Open Silicon comes in, with turnkey ASIC experience. With their Spec2Chip IoT ASIC platform, Open Silicon is trying to be a one-stop shop for IoT developers. Pradeep Sukumaran, Sr. Solutions Architect at Open Silicon, illustrated how they are leveraging a custom FPGA board for rapid prototyping. FPGAs offer huge benefits in IoT prototyping. Hardware IP can run with actual software at a relatively high clock speed – 50 MHz is achievable with newer FPGAs, even higher under favorable conditions.

Sukumaran makes a compelling case for BOM reduction with custom chip design, but I see high value in the overall IoT system solution experience Open Silicon brings to the table. My talk at the upcoming IEEE Electronic Design Process Symposium later this month centers on how designing and optimizing chips for actual IoT software is critical to building trust into devices.

I’m finding that IoT innovation is coming from makers – grab a module, write some code, take some measurements. My old friend and mentor would have appreciated this approach. Where previous generations spent time eliminating chips and passives and reducing printed circuit board layer counts, new generations will be after optimized IoT ASICs to not only reduce costs but to differentiate solutions from what others with merchant chips are doing.

The ARM environment that Open Silicon is working with offers a wide range of software and scalability. If for some reason a Cortex-M0 doesn’t offer enough performance, moving up the core ladder to other Cortex-M variants is straightforward. Spec2Chip looks to reduce risk and schedule for IoT teams, whether inside a larger firm or at a startup.

To see this entire presentation plus the Q&A portion where Daniel asks several probing questions of both ARM and Open Silicon, register for the archived webinar here:

Car Companies Confront Data Sharing

Senior OnStar executives have long intoned at industry events that the customer owns his or her data. The only problem is that the customer is only allowed glimpses of his or her data. They don’t have control of that data in spite of their so-called ownership of it.

It’s a complex challenge especially given the fact that car companies have obligations to preserve privacy and ensure security – and there is the possibility that vehicle data might be used against the interest of the auto maker. Nevertheless, the voices are growing for data sharing with the loudest of those voices coming from the automotive aftermarket.

So it was somewhat surprising that an aftermarket association stepped forward last week to help quash legislation seeking to empower consumers with full control of and access to their vehicle data, as described in legislation before the Rhode Island legislature:

RELATING TO MOTOR AND OTHER VEHICLES - CONSUMER CAR INFORMATION AND CHOICE ACT

The Auto Care Association and the Coalition for Auto Repair Equality (CARE) applauded the Rhode Island House Legislature for considering legislation (HB 7711) requiring car companies to provide car owners with the ability to control where information transmitted by vehicle telematics systems is sent. The two associations then asked the legislature to set aside the bill while the aftermarket task force works cooperatively with auto makers to resolve the data question.

The two organizations testified before the Rhode Island House Committee on Corporations regarding the benefits of telematics to the auto care industry, including the ability for shops to obtain diagnostic data from a vehicle before it arrives at the shop, which could improve service bay efficiency and speed the vehicle repair process. The significance of this testimony derives from the potential influence of individual U.S. states on national policy governing vehicle repairs. It was Massachusetts’ adoption of Right to Repair legislation which helped to open up access to OEM diagnostic data in the first place – paralleling similar initiatives in Europe.

Aftermarket service providers are seeking to extend their repair rights further by enabling wireless or remote access to vehicle diagnostic codes. Consumers and repair shops can gain access to at least some of those codes today via aftermarket OBDII plug-in devices such as those from Automatic, Vin.li or Verizon Hum. But car makers are working to limit the access of these devices beyond standard codes for emissions testing and other purposes.

Car makers are concerned that the OBDII port ultimately represents a source of vehicle vulnerability to hacking. Ford, Subaru, General Motors and some others have begun to provide the means for consumers to share their driving data with their insurance companies for the purposes of obtaining discounts. Ford and GM have also enabled – again, along with a growing list of competitors – consumers to access data on vehicle health and performance. It’s a start.

The groups testified that, “All of the data available from embedded systems currently goes to the vehicle manufacturer, allowing them, and only them, to reap the benefits of this technology. Specifically, armed with the extensive data about a customer’s vehicle, combined with the means to communicate directly with the driver in real time, the vehicle manufacturer has the ability to steer the motorists to the dealership or to a service establishment that may be a strong purchaser of their parts and information.

“While our associations both applaud and support the goal of HB 7711, at this time we cannot support passage.” In the end, the bill was set aside.

In the testimony, the two groups stated that, “While legislation may be necessary in the near future, we strongly believe that a collaborative approach would be faster and more effective, and we are more than willing to work to make that happen.”

CARE and the Auto Care Association explained that both groups are working with other associations as part of the Aftermarket Telematics Taskforce, which has been meeting with the car companies in an attempt to find common ground. “This process is in its early stages, and therefore it is difficult to judge whether we will be successful. Should our attempt to find an agreement not be successful in the near future, we likely will begin pursuing a legislative resolution and would welcome the help of the Rhode Island Legislature in order to resolve this very critical issue.”

No two car companies have taken the same approach to vehicle connectivity or the sharing of or access to vehicle data. There are few standards governing so-called vehicle “gateways” for accessing data, and security and privacy have become increasingly severe barriers to greater sharing of vehicle data.

Organizations such as automobile clubs, AAA in the U.S., have been advocating loudly for more open access to vehicle data on behalf of their commercial concerns for coveted vehicle repair and insurance business opportunities. It’s reassuring to see more reasonable voices prevailing in this debate.

Auto makers are struggling to come to terms with sharing vehicle data. The last thing they need at this particular moment is a legislative mandate.

Roger C. Lanctot is Associate Director in the Global Automotive Practice at Strategy Analytics. More details about Strategy Analytics can be found here: https://www.strategyanalytics.com/access-services/automotive#.VuGdXfkrKUk

Silicon Valley Myth Keeps Growing

I thought my ears were deceiving me when a commentator (I believe it was David Brooks) gushed with admiration on NPR a few years ago that it was great that Jeff Bezos of Amazon was injecting Silicon Valley thinking into the Washington Post. What?!?!?! Unless my Google got jammed, Amazon is in Seattle. But Silicon Valley got the tagline. “Jeff Bezos is bringing the Seattle Rainforest to the Washington Post” just doesn’t sound cool.

Silicon Valley is unique and wonderful, yada yada, so is Amazon’s rainforest, but it gets blind uncritical credit as the be-all and end-all of entrepreneurship. Having helped raise four kids, I am used to nobody ever listening to me, but even I was surprised that no one noticed when Harvard Business Review published my 2010 exhortation to “Stop Emulating Silicon Valley” and I am perplexed at the persistence and self-perpetuation of Siliconology (my favorite is Billy-Can Valley). (Hey, why not SiliFran Valley, anyhow, you heard it here first – now that the former prune capital has grown into the City?)

Here is just today’s latest juicy example

Kleiner Perkins, Google Ventures and friends (yes, mostly HQ’ed in SiliFran Valley) invested $120 million in “Silicon Valley Startup Juicero.” Is it cool? I Tesla-vate just from the picture. Think Keurig, Segway, SodaStream, Coravin, and other standalone, hard-to-make, complex consumer products. Some succeeded, some flopped (think Segway), and for some the jury is still out.

Will the $700 Juicero succeed? Who knows? All else being equal, I suppose a complex consumer product with big direct competitors (like Tesla) with $120 million of funding has a much better chance at success than one that has less funding. Do I hope it succeeds? Sure, why not?

Is it a SiliFran Valley startup? NOPE. Juicero is one of hundreds or thousands of Silicon Valley Transplants. It has its roots in New York, and in a failed juicery, Organic Avenue, started in 2002. One more time: ROOTS IN NEW YORK! Long story, but as a result, co-founder David Evans, apparently without even a (gasp!) accelerator or a hackathon, hacked away in his Brooklyn (you read it here: BROOKLYN – OMG!) apartment and came up with a prototype, and so on. Then after his innovative juicer started to work and the risk started to recede, (admittedly reading between the lines and based on seeing it happen), the SV money guys (yep, still mostly guys) said “Come to Silicon Valley.”

Am I a grumpy, snow-bound winter-weary Bostonian? Of course, but I lived, entrepreneur-ed and invested for 22 years in Israelicon Valley, way before it was popular, and I have seen surprising and impressive entrepreneurship almost everywhere I have seen people. That’s what successful entrepreneurs do – they surprise us. And they can do that anywhere in the world.

The Most Important Point You May Have Missed at CDNLive 2016!

This was the best keynote lineup I can remember at a user group meeting. All four speakers are visionaries but from very different perspectives. The video of the event will be up later this month but from my first count the word “System(s)” was mentioned 32 times and the underlying message will transform the semiconductor industry and EDA yet again, absolutely.

In case you haven’t noticed, SemiWiki added a navigation bar with our main sources of traffic. The new categories (Mobile, IoT, Automotive, and Security) can also be viewed as systems because in our world that is what they are and that is where our traffic is taking us. Systems companies, or more specifically, Fabless Systems Companies, are now leading the semiconductor industry and EDA is quick to follow. The EDA Consortium even renamed itself Electronic Systems Design Alliance (ESD Alliance).

All good news, right? Not necessarily, especially if you are a small to medium “point tool” EDA company…

First up was Cadence CEO Lip-Bu Tan speaking on the expanding system design opportunities. This is one of the best keynotes I have seen from him, very clear and definitely inside his comfort zone. The one thing that really got me excited was when he mentioned the Deep Learning Revolution which I agree with 100%. Devices are getting exponentially smarter and that means we will never have enough compute power and that will continue to drive new process nodes and tools keeping us all employed.

Second was Steve Mollenkopf, CEO of QCOM. Steve started out calling QCOM a systems company which I can attest to. We did the history of Qualcomm in our latest book, Mobile Unleashed, if you want to know the backstory. According to Steve, QCOM spent the first 30 years connecting people and the next 30 years connecting everything else. The Snapdragon SoC platform is a big part of that but 5G is the critical link because super processing power is only as good as the link to the cloud. And you can’t talk about 5G without talking about Qualcomm.

Third was Sanjay Jha, CEO of GlobalFoundries. Since my day job is working with the foundries I have a sincere appreciation for what he is trying to accomplish. Sanjay mentioned that power consumption is a critical measurement of semiconductors which is why GF is offering FinFET and FD-SOI technology, both of which have been trending on SemiWiki for the last three years. In fact, “low power” has been one of the top search terms since we started in 2011. Sanjay also talked about 5G and Silicon Photonics which is our newest SemiWiki category and something you will be reading much more about in the near future.

Fourth was Tom Beckley, EVP of Custom IC and PCB at Cadence. Notice that he owns both custom IC and PCB? Systems, systems, systems… Tom talked about systems design enablement, vulnerability, and functional safety. The centerpiece of course was the 25th anniversary of Virtuoso and the new unified simulation environment that was announced this morning. Tom Dillinger covered it for SemiWiki: Analog Design Verification — Traceability is Required

Okay, that concludes my 500 words. To truly appreciate the keynotes you will have to watch them but let me end with this:

FinFET design is complex, double and quadruple patterning is difficult, chip power and performance are critical, system level design will require fully integrated tools, and the next generation of system fabless companies have VERY tight product schedules and are much more risk averse than what we are used to.

Bottom line: The rich EDA companies will get richer and the point tool vendors will be forced to innovate or die… Just my opinion of course.

Innovation in Transistor Design with Carbon Nanotubes

The New York Times article “IBM Scientists Find New Way to Shrink Transistors” by John Markoff focuses on the semiconductor industry’s goal of creating smaller transistors in order to remain competitive, while emphasizing cutting-edge design strategies that use carbon nanotubes. Wilfried Haensch, a physicist in IBM’s research group, highlights what switching from traditional methods to carbon nanotubes brings: improvements in power savings and, ultimately, a sevenfold increase in the speed of IBM’s microprocessors.

The transistor industry constantly faces what is known as the “Red Brick Wall”, or the inability to shrink the transistor due to technical limitations. In the mid-1980s, the technical limitations centered on breaking the one-micron barrier, and methods using optical technology proved to be the solution. In the early 2000s, the limitations shifted to include the gate stack, interconnects, and others. Still, the transistor industry discovered new ways to adapt to these crises and continue. Today, the technical limitations IBM faces include a variety of factors such as electrical resistance, temperature, and materials.

Electrical resistance refers to the difficulty of passing electrical current through conductive material and with more resistance less electricity will flow. Electrical resistance also refers to the relationship between voltage and current. In essence, higher resistance will limit the electric current able to flow through the wire. Reducing electrical resistance will also increase processor chip speed while shrinking the physical limitations.
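The relationship between voltage, current, and resistance described here is Ohm’s law, I = V / R. The short sketch below uses made-up values (1 V across 50, 100, and 200 ohms, chosen only for illustration and not taken from IBM’s work) to show that doubling the resistance halves the current.

```python
def current_amps(voltage_volts: float, resistance_ohms: float) -> float:
    """Ohm's law: current through a resistance for a given applied voltage."""
    if resistance_ohms <= 0:
        raise ValueError("resistance must be positive")
    return voltage_volts / resistance_ohms


# Illustrative values only: the same 1 V applied across increasing resistance.
for resistance in (50.0, 100.0, 200.0):
    milliamps = current_amps(1.0, resistance) * 1000
    print(f"{resistance:6.1f} ohm -> {milliamps:.1f} mA")
```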

Along with electrical resistance, as transistor temperature increases, so will the collector current. A continual increase in heat will cause thermal runaway to occur, ultimately breaking the transistors. Limiting both electrical resistance and heat becomes a priority in order to maintain transistor stability as well as higher performance gains. All of these technical limitations compound to impact processor chip speed and pose challenging design choices for chip designers.

For a little over a decade, multi-core technology has been implemented for more efficient power consumption as opposed to increasing the processing speed, due to resistance and heat increases at smaller transistor sizes in conventional silicon transistors. In response to these technical barriers, IBM has chosen to switch from silicon to carbon nanotubes in its transistors because of their useful properties. Carbon nanotubes are strong, light, and conductive tubular cylinders of carbon atoms which form a one-atom-thick matrix. Carbon nanotubes also have none of the major physical degradation common to metals, making them more stable as well as 15 times more conductive and with 1000 times more current capacity than copper. Dario Gil at IBM recognizes the clear advantages of carbon nanotubes over other materials, stating: “Of all the possible materials, this one is at the top of the list by a long shot.”

Another key innovation with the use of carbon nanotubes involves IBM’s new design approach towards the placement of the carbon nanotubes in transistors. The new design features the use of carbon nanotubes in parallel rows to connect ultrathin metal wires together, all the while focusing on decreasing the contact size to metal wires. By finding ways to align the carbon nanotubes close together, IBM can shrink the size of each transistor. In this way, the carbon nanotube will be used for electrical switching and help perform the essential functions of a transistor. IBM proposes to enhance the design further in the next decade by decreasing the contact size from 40 to 28 atoms in width.

Zach Allen & Parun Thamutok

References

J. Markoff, “IBM Scientists Find New Way to Shrink Transistors,” The New York Times, 01-Oct-2015. [Online]. Available at: http://www.nytimes.com/2015/10/02/science/ibm-scientists-find-new-way-to-shrink-transistors.html?_r=1. [Accessed: 10-Mar-2016].

“Nanocomp Technologies | What Are Carbon Nanotubes?,” Nanocomp Technologies | What Are Carbon Nanotubes?, 2014. [Online]. Available at: http://www.nanocomptech.com/what-are-carbon-nanotubes. [Accessed: 10-Mar-2016].

M. Lapels, “Has The IC Industry Hit A ‘Red Brick Wall’?,” Semiconductor Engineering, 09-Jun-2014. [Online]. Available at: http://semiengineering.com/will-ic-industry-hit-red-brick-wall/. [Accessed: 10-Mar-2016].