ArterisIP has been a SemiWiki subscriber since the first year we went live. Thus far we have published 61 Arteris-related blogs that have garnered close to 300,000 visits, making Arteris and NoC among our top attractions.

One of the more newsworthy announcements this week is the addition of Ty Garibay to the Arteris executive team. Ty is a decorated semiconductor executive with 30+ years of experience, and his name is on 34 patents from his architecture and design leadership roles at Motorola, Cyrix, SGI, and Alchemy Semiconductor. He also managed ARM’s Austin Design Center, as well as ARM core development and IC engineering for Texas Instruments’ OMAP application processor group. Most recently, Ty was Vice President of IC Engineering at Altera, and he led FPGA IC design at Intel after Intel acquired Altera in 2015.

What brings you to ArterisIP? What is your history with the company?

I worked with Arteris for more than 10 years as a customer, at both Texas Instruments and Altera (now part of Intel). I’ve known CEO and Founder Charlie Janac for many years. My engineering team worked closely with the Arteris team on the original development of the NoC generation tools.

After Intel acquired Altera, I felt it was time for me to do something different, so I looked around and decided to try something completely new. I am making the transition from engineering manager in a large organization to a technical role as an individual contributor at a small, private company. I was looking for something different, challenging, and innovative, and this was a group I thought I could fit into and enjoy working with.

What technology trends do you see? How will you help address these at ArterisIP?

I’ve been in the semiconductor business for more than 30 years. Early in my career I focused on processor development, and for the last 10 years I have focused on SoC development. The original mobile phone megatrend that created the Network-on-Chip (NoC) opportunity for Arteris is continuing into other markets.

SoCs are becoming more complex, with more IP blocks coming from different providers, both internal and external. Those blocks use different interfaces, and more power management is required. Moving from 28 nm process technology to 16 nm, 10 nm, and 7 nm compounds these challenges dramatically: it is harder to get the interconnect wires where they need to be and to meet ever-increasing performance goals. All of these challenges are converging, creating a need for a new generation of NoC IP tools.

Another trend, which we’re seeing for the first time since the early 2000s, is a considerable number of new entrants into the semiconductor market. ASIC players and system companies such as Microsoft, Google, Facebook, and Amazon are now designing their own chips. The variety of chips and new design teams coming into the industry is greater than I have seen in over a decade. Configurable NoC interconnect IP and the associated IP generation tools can play a critical role in helping these teams enter new markets. The people involved in these new endeavors often don’t have a long track record in chip design, but with our tools and features we can help them succeed. There is a significant, growing market for ArterisIP.

What have you seen at ArterisIP over the years as a customer, and now a partner?

One of the things that made working with Arteris pleasant and positive over the years was the team’s commitment to its customers’ success. Arteris was always extremely flexible in supporting different functionality, meeting different requirements, allowing its IP tools to be used in a variety of ways, and adapting its offerings to our methodology. As a customer I appreciated this, and now that I am part of the team, I can help carry it on. In my role as CTO, one of the things I’m trying to do is represent the customer in discussions about what we develop for the future, how we create our user experience, and what we expect of how customers use our IP and network generation tools. Bringing my customer experience inside the company can help us improve in those areas.

Why do you think interconnect is important for today’s SoC landscape?

It goes back to the two trends I discussed earlier: increasing complexity, including the growing number of IPs, and the relative lack of cohesive design-team experience. The engineers at a company may be very experienced as individuals, but many new teams are forming at new companies, and that is a challenge. I think ArterisIP tools can add more value by helping these new teams be productive and succeed with their first iterations.

What do you see as the most innovative technology directions in the industry within the interconnect area?

In automotive ADAS (Advanced Driver Assistance Systems) and autonomous driving chips, we see growth both in the number of participants and in volumes, which are growing dramatically. For these chips, ISO 26262 functional safety and resilience capabilities are critical: they cannot be sold unless manufacturers can guarantee their suitability for safety-critical applications. In that realm, we are looking to innovate by adding unique features to our resilience offerings. The goal is to enable our customers to take their products through functional safety certification much more efficiently and get to market more quickly.

Another area where interconnect can add value is in high-end, high-performance networks-on-chip. Currently, we don’t offer what the networking industry thinks of as key networking functions in the interconnect. We do provide packetized communication, but we lack many of the routing capabilities that a protocol like PCI Express has.

I think some of those higher-level protocols will need to come into the silicon to enable incredibly complex systems with 10, 20, or even 40 different high-performance masters. This will be essential so that software for safety-critical and mission-critical applications has a guaranteed quality of service on the network, enabling deterministic forward progress. This poses a big challenge in terms of area, power, and timing. It is also an opportunity for us to enable a whole new kind of system that leverages these capabilities, bringing onto the chip capabilities that are currently available only at the board and chassis level, and to do so more efficiently from a PPA (power, performance, area) perspective than is done today.

What do you see as the most important technology being implemented at ArterisIP?

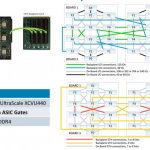

As chips become larger and more complex, the biggest challenge for our customers is implementing what we generate for them in silicon. They need to place it, route it, and get it all working at their desired operating frequency. These tasks have become a massive undertaking, which is why we are trying to help with our new toolset called PIANO, which eases the physical implementation of our network-on-chip interconnect. The customer can consider the evolving floorplan, the chip’s timing, and all the different interfaces together. Early analysis and planning can provide a much more deterministic path to final closure, reducing the customer’s total design time and time to market.

With the PIANO technology, we believe we can help our customers accelerate their design processes and improve their productivity. We are planning to integrate this functionality into everything we do. As designs become more complex at 10 nm and 7 nm, our tool flow has to become completely physically aware. Our goal is to help our customers make the right interconnect decisions all the way from the beginning of the design cycle to the final product. To me, the most important thing is to enable our customers to tape out a real chip. We can add features and functionality, but none of it matters unless the chip works at speed, within the right power envelope, and within the customer’s development schedule.

How do you see the role of ArterisIP’s technology evolving in the future? Are there opportunities to build on the foundation of interconnect technology?

The technology we have today, and what we are developing for the next generation, is foundational to chip design. It provides a fundamental ability for engineers to create more complex chips, faster. It is an infrastructure we can build on to add value in different ways for our customers. For example, if we can see all the traffic on the chip, there are potential opportunities in security. A customer may need to provide adversarial security within the silicon and interconnect. This could allow them to watch for certain events, detect patterns, and implement within the chip the diverse types of monitoring that chip-to-chip interconnect allows today.

There is also a role for interconnect in debug and performance monitoring. Since we are transporting all the traffic, we can create and derive metrics from that traffic, and the more data we can make available to our customers, the better their systems can operate. Facilitating that, and making it easier for people to implement, is an area where we can have an impact. Because the interconnect touches every part of the chip, there is an opportunity to use it as the basis for organizing the design process. If we can work with all the IP providers, perhaps we can support standards for how IP is reused, which protocols are used to communicate bits through the NoC, and how information about the IP is used by hardware and software design teams. This is a way to keep making the design process easier for our customers by building around our network.

I personally think there are a number of different opportunities for us to add value to our customers, with the network-on-chip at the center of the system.
