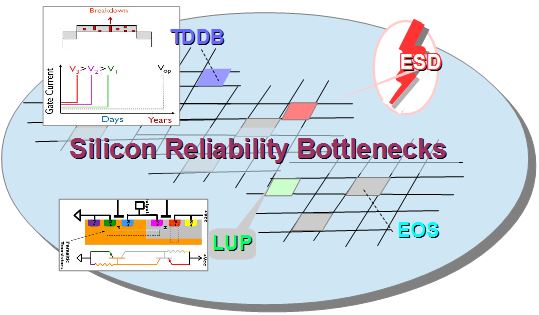

On their first-quarter earnings call, Intel announced that volume production of 10nm has been moved from the second half of 2018 to 2019 due to yield issues. Specifically, they are shipping 10nm in low volume now, but yield improvement has been slower than anticipated. They report that they understand the yield issues but that improvements will take time to implement and qualify.

During the question and answer segment they said it is “really tied to this being the last technology tied to not having EUV and the amount of multi patterning and the effects of that on defects”.

This has led to a lot of speculation about what is causing the yield issues. I have seen some comments that, because everyone is doing multi-patterning, Intel’s explanation doesn’t make sense, and there is some speculation that the yield issues are related to cobalt. I thought it would be useful to explore multi-patterning usage and cobalt usage, how these differ between companies, and what the impact may be on yields.

There are four companies currently pursuing leading edge logic: GLOBALFOUNDRIES (GF), Intel, Samsung, and TSMC. The following will explore multi-patterning and cobalt usage by company.

Multi-Patterning

In the front end of the line, all four companies are using Self-Aligned Quadruple Patterning (SAQP) with multiple cut masks for fin formation and Self-Aligned Double Patterning (SADP) for gate formation. At contact, some version of Litho-Etch is used: Litho-Etch-Litho-Etch (LE2), Litho-Etch-Litho-Etch-Litho-Etch (LE3), or even LE4 (EUV in Samsung’s case). These are all similar between the companies except for Samsung’s use of EUV. The Back End Of Line (BEOL) is where we see significant differences. GF and TSMC both use SADP for critical metal layers, Intel uses SAQP for 2 metal layers, and Samsung is expected to use EUV for critical metal layers.

We believe GF and TSMC are both ramping yield on schedule. It is possible that the yield issues Intel is seeing are related to SAQP in the BEOL. BEOL metal layers require multiple block layers, and this is complex to implement. Because the first block layer would remove the layers needed for subsequent block layers, the block layers are applied as reverse images and then, once all the block layers are done, the whole pattern is reversed. Implementing multiple block layers in concert with SAQP is more difficult than implementing fewer block layers with SADP. This could explain why multi-patterning may be more difficult at Intel. Intel also has a 36nm pitch in the BEOL versus 40nm at GF and TSMC, and the smaller pitch is more difficult to achieve. We don’t know much about Samsung’s process yield ramp or exact specifications, but their early use of EUV may well present some challenges for them, and we wouldn’t be surprised if Samsung encounters yield issues as well.
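The link between minimum metal pitch and patterning scheme can be sketched with some simple arithmetic. The snippet below is only an illustration, not a description of any company's actual process: it assumes that a single 193nm immersion exposure resolves roughly an 80nm pitch (a rounded rule-of-thumb figure that is not from the earnings call or this article), that SADP halves the printed pitch, and that SAQP quarters it. The names `SINGLE_EXPOSURE_LIMIT_NM`, `litho_pitch`, and `simplest_scheme` are made up for this example.

```python
# Assumption (not from the article): a single 193nm immersion exposure
# resolves roughly an 80nm pitch. This is a rounded rule-of-thumb value.
SINGLE_EXPOSURE_LIMIT_NM = 80.0

# Pitch division factor for each scheme: SADP halves the printed pitch,
# SAQP quarters it.
PITCH_DIVISION = {"single": 1, "SADP": 2, "SAQP": 4}


def litho_pitch(target_pitch_nm, scheme):
    """Pitch the lithography step must print to reach the target pitch."""
    return target_pitch_nm * PITCH_DIVISION[scheme]


def simplest_scheme(target_pitch_nm, limit_nm=SINGLE_EXPOSURE_LIMIT_NM):
    """Least aggressive scheme whose printed pitch stays at or above the limit."""
    for scheme in ("single", "SADP", "SAQP"):
        if litho_pitch(target_pitch_nm, scheme) >= limit_nm:
            return scheme
    return None  # would need something beyond SAQP under these assumptions


# BEOL minimum metal pitches quoted in the text above.
for company, pitch_nm in (("GF / TSMC", 40), ("Intel", 36)):
    print(company, pitch_nm, "nm:", simplest_scheme(pitch_nm))
```

Under these assumptions a 40nm pitch sits right at the edge of what SADP can reach, while a 36nm pitch falls below it and pushes the metal layers to SAQP. The 40nm result is sensitive to the assumed 80nm limit, so treat this as a rough picture of why a 4nm difference in pitch can change the patterning scheme, not as a precise process model.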

Cobalt Usage

I have also seen comments suggesting Intel’s use of cobalt may be the issue. The first point I want to make here is that everyone is using cobalt, not just Intel, although there are differences in usage.

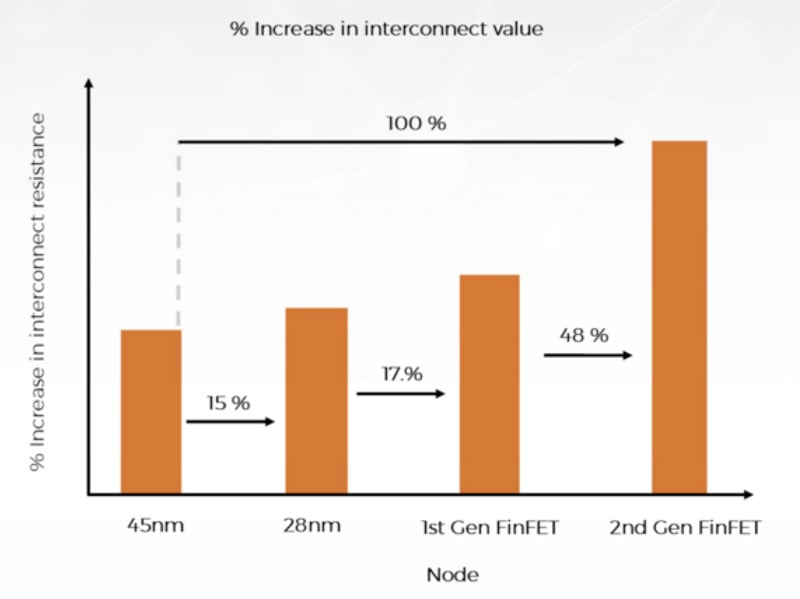

- Liners/caps – we believe all four companies are using cobalt for liners and caps on critical metal layers. Historically liners are Ta/TaN and switching to Co/TaN improves electromigration and the copper “wetting” during processing. Cobalt caps on top of the metal lines also improve electromigration.

- Contacts – we believe all four companies will also use cobalt filled contacts although there may be differences in how it is deposited (more on this later).

- Local interconnect – Intel uses cobalt filled metal lines for M0 and M1; GF does not, and we don’t think TSMC does either. A key point here is that as interconnect pitch shrinks, copper resistance goes up and eventually cobalt becomes the lower resistance solution. We believe Intel went to cobalt because it is beneficial for resistance at 36nm; with GF and TSMC at 40nm, they likely didn’t see the need. We are curious to see what happens with Samsung. We believe they may also have a 36nm minimum metal pitch, and it will be interesting to see if they use cobalt interconnect. They are co-authors on technical papers for 7nm with cobalt M0, so they have certainly looked at it.

We know that GF uses CVD to deposit cobalt for their cobalt filled contacts, and we have heard that Intel deposits cobalt with plating. We have also heard that Intel may have void issues. Perhaps plating is creating some cobalt-related problems, but we do not think there are fundamental issues with cobalt itself.

Conclusion

I believe the multi-patterning issues Intel described are probably the driver of their yield problems. They were more aggressive in their shrink than others, and getting to 36nm minimum metal pitches with SAQP and multiple block layers is, in my opinion, the likely problem.

Cobalt may also be a contributor but I don’t think it is the main problem.
