Anirudh Devgan, President of Cadence, gave the third keynote at CDNLive Silicon Valley this year. He has clearly become adept in this role. He has a big but supportable vision for Cadence across markets and technologies, and he’s become a master of the annual tech reveals that I usually associate with keynotes.

Anirudh opened with factors driving system design across major verticals: aero and defense, datacenters, mobile, auto and industrial. These drive trends in distributed and cloud computing, 5G and edge computing (5G, until recently a future prospect, is catching up fast), automotive and industrial disruption, machine learning and deep learning. In support of all this, there is a need for more complete optimization across systems and for continually improving design excellence and productivity.

Cadence is organizing its approach to these new opportunities through SDE (System Design Enablement) 2.0, a three-level attack through support of Design Excellence (EDA and IP), System Innovation (a new area) and Pervasive Intelligence (also a new area). All of this leverages Cadence's core competence in computational software, the CS plus math expertise that underlies most EDA technologies. To Anirudh this is very important; Cadence needs to build and grow around existing core competencies. Since a lot of system analysis requires computational software, as does ML, these are reasonable directions.

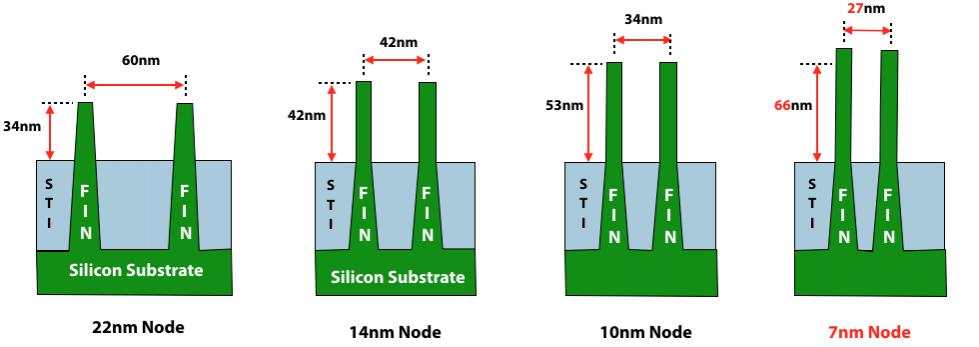

This requires a larger view of systems design because customers in all of these markets now expect to optimize complete systems, down into the chip or multi-chip design. It also requires an expanded view of computation, embracing the rapid rise in both support for and use of AI technologies. This doesn’t mean that investment in “conventional” EDA takes a back seat. At the more advanced process nodes, tools must continue to progress in capability. Performance and coupling with foundries become even more essential.

Stepping back for a second from new and shiny stuff, Anirudh had to toot Cadence’s (and his) horn on the dominance of the Cadence digital flow – 20% better PPA than alternatives in the full flow and over a hundred 7nm tapeouts.

Back to the new stuff, first Machine Learning. Anirudh breaks this up into Inside (ML inside a tool), Outside (e.g. ML optimizing a flow for improved PPA) and Enablement (e.g. support for customer ML objectives through the Tensilica IP). With Inside ML, a tool looks more or less the same to a user but runs faster or delivers a better result; he cited Tempus as an example where these capabilities are already available.

An example of Outside ML is an iterative flow around Genus and Innovus, using learning gained through earlier runs to improve subsequent runs. Cadence has shown cases where they can get improvements in TNS (total negative slack) of 10-20% using such flows. A somewhat different example is the use of ML to optimize automated PCB routing in Allegro, where design times can be significantly reduced.
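To make the "learn from earlier runs" idea concrete, here is a minimal sketch of a loop that keeps a history of runs and proposes each new run near the best one so far. It is a plain hill-climbing search, not the learning method Cadence uses, and everything in it is an assumption for illustration: `run_flow` is a synthetic stand-in for a synthesis plus place-and-route run, and the `effort` and `utilization` knobs and the TNS formula are invented.

```python
import random


def run_flow(effort, utilization):
    """Hypothetical stand-in for one full flow run.

    Returns a synthetic TNS in ns (always <= 0, closer to 0 is better).
    A real flow would launch the tools and parse their timing reports.
    """
    return -(abs(effort - 0.7) * 40 + abs(utilization - 0.65) * 60)


def tune(iterations=30, seed=0):
    """Hill-climb over two flow knobs, keeping a history of every run."""
    rng = random.Random(seed)
    best_params = (rng.random(), rng.random())
    best_tns = run_flow(*best_params)
    history = [(best_params, best_tns)]  # results of earlier runs
    step = 0.2  # how far a new proposal may move from the best run

    for _ in range(iterations):
        # Propose settings near the best run seen so far, clamped to [0, 1].
        candidate = tuple(
            min(1.0, max(0.0, p + rng.uniform(-step, step)))
            for p in best_params
        )
        tns = run_flow(*candidate)
        history.append((candidate, tns))
        if tns > best_tns:
            best_params, best_tns = candidate, tns
        else:
            step *= 0.9  # narrow the search when a proposal does not help

    return history, best_params, best_tns


if __name__ == "__main__":
    history, best_params, best_tns = tune()
    first_tns = history[0][1]
    print(f"first run TNS: {first_tns:.2f} ns, best TNS: {best_tns:.2f} ns")
```

A production flow would replace the random proposal step with a trained model that predicts which settings are worth trying, but the outer loop (run, record, propose, repeat) has the same shape.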

In System Innovation, one of Anirudh’s big reveals was the Clarity 3D solver, covering die to package and system. I won’t spend much time on this because Tom Simon wrote about Clarity earlier, but some of the use-cases Anirudh cited may be new: an automotive application with LPDDR4, package, PCB and DIMM connector; a datacenter application for a 112G connection from server to cable to server; and a 5G handset application analyzing fan-out wafer-level packaging through 40 DDR signals and power. All of this, of course, runs on distributed (and elastic) compute. Anirudh cited this as a good example of leveraging core expertise, building on Cadence know-how in solving matrices, which is not a skill you would find among most big-data experts.

The other big systems initiative is the partnership with Green Hills Software; Anirudh noted that this has Cadence starting to play a role in a $4.5B system analysis market. Coupling that expertise with early software bring-up and debug on the Palladium and Protium platforms enables analysis and optimization to move into pre-silicon design, and further supports the total system objective that so many of the verticals are now finding essential.

I, for one, am eager to see support of electronic design expand beyond the narrow bounds of package and board and embrace at least some of the latest and greatest trends in tech. We need to play a bigger role in applications innovation. Cadence certainly seems to be taking some interesting steps in that direction.