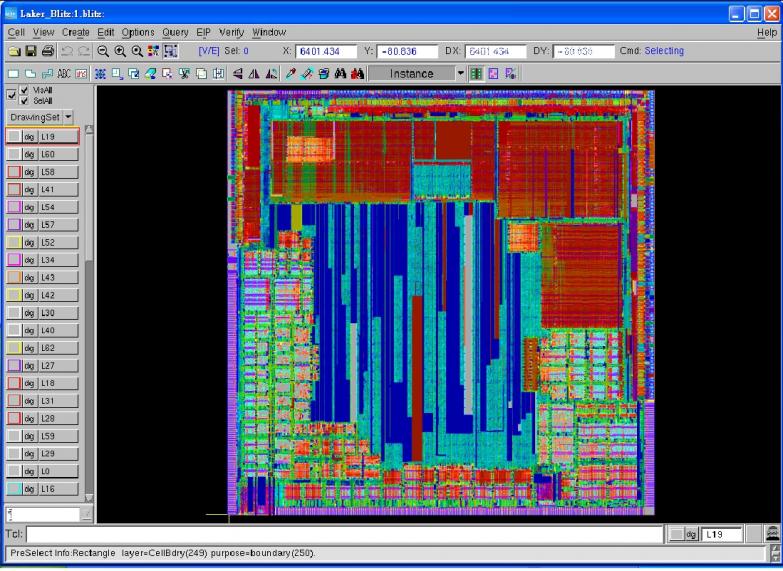

Little-known fact: SpringSoft, Inc. is the largest supplier of EDA software in Asia, with headquarters in both Hsinchu, Taiwan, and Silicon Valley, CA. You will be hard pressed to find a company that does not use SpringSoft products, and being located right down the street from the top two foundries doesn’t hurt either.

Continue reading “SpringSoft Update 2012!”

Synopsys Update 2012!

Synopsys just delivered second quarter 2012 results with improved revenue on a year-over-year basis. Unfortunately operating expenses are said to be out of control. I’m not a stock guy so for more financial information and analysis try the Motley Fool article HERE.

The interesting thing to note is that Synopsys still has a pile of cash, some $800M, so expect more acquisitions in all areas of the business especially IP, in my opinion. The IP business is a clear differentiator for Synopsys and they are running away with it.

You can tell a lot about a company from their DAC plan. Synopsys has gone AMS mobile this year:

Synopsys at DAC 2012

In addition to special events, Synopsys will offer informative presentations and demonstrations of its comprehensive portfolio of integrated system-level, implementation, verification, IP, manufacturing and FPGA solutions. Visit Synopsys at Booth #1130 to learn about the newest solutions available to help enable the next 25 years of innovation. For more information on these special events and all Synopsys’ activities at DAC visit www.synopsys.com/DAC.

Synopsys DAC Events, Monday, June 4, 2012:

- AMS Verification Luncheon: Boost Productivity Using Synopsys’ AMS Verification Solution

11:30 a.m. – 1:30 p.m., Marriott Hotel, Golden Gate Ballroom, Salon B

Industry leaders from AMD, ARM, GLOBALFOUNDRIES, Micron and NVIDIA share experiences using Synopsys’ AMS verification solutions in some of today’s most challenging designs.

- IC Compiler Luncheon: Leading the Way to 20nm Design with Synopsys’ IC Compiler™ Software

11:30 a.m. – 1:30 p.m., Marriott Hotel, Golden Gate Ballroom, Salon A

Hear from experts in foundry, processor, wireless and consumer electronics companies, such as STMicroelectronics, GLOBALFOUNDRIES and Samsung, that have successfully met the 20nm design enablement challenge with IC Compiler.

- Customer Insight Sessions: High-Performance, Gigahertz+ Success with Synopsys’ Galaxy™ Implementation Platform

2:00 p.m. with Samsung and 3:00 p.m. with NVIDIA, Moscone Convention Center East Mezzanine, Room 220

Technical experts will discuss the latest high-performance design trends, challenges and solutions. They’ll share best practices and innovations in high-performance technology that help address Gigascale, Gigahertz+, low power and advanced geometry design challenges.

- Synopsys’ PrimeTime® Software Special Interest Group 2012 Dinner: Next-generation Hierarchical Timing Technology — HyperScale

6:00 p.m. – 8:30 p.m., Marriott Hotel, Golden Gate Ballroom, Salon A

At this event, you will see Synopsys’ R&D team unveil the new underlying engines for its hierarchical timing technology, HyperScale. Industry experts will also share their experience with this innovative new technology, which demonstrated up to 10X faster and smaller full-chip timing analysis runs with signoff quality results matching flat analysis. Speakers include timing experts from LSI, NVIDIA, Samsung and Synopsys. The event will be moderated by Brian Bailey of EE Times. PrimeTime Ecosystem Partners will also be there, including ARM, Chip Estimate, eSilicon, Global UniChip Corp., Library Technologies, Nangate, Open-Silicon, Platform Computing, an IBM company, Runtime Design Automation, Samsung, SmartPlay, SpringSoft, Target Compiler Technologies, Univa and Z Circuit.

Synopsys DAC Events, Tuesday, June 5, 2012:

- ARM, GLOBALFOUNDRIES, Samsung, Synopsys Partner Breakfast: Breaking through Barriers — High-Performance and Energy-Efficient ARM Powered SoCs at 32/28nm and 20nm

7:15 – 8:45 a.m., Marriott Hotel, Golden Gate Ballroom, Salon A

In this session, experts from ARM, GLOBALFOUNDRIES, Samsung and Synopsys will describe key design and manufacturing challenges facing designers at 32/28nm and 20nm and how through collaboration, the companies are addressing these challenges. The collaboration combines semiconductor manufacturing, EDA and IP enablement, shared cycles of learning and silicon proof-points to enable a complete silicon-proven design enablement and manufacturing-ready solution for optimized implementations of ARM Powered high-performance and energy-efficient SoCs.

- Customer Insight Sessions: High-Performance, Gigahertz+ Success with Synopsys’ Galaxy™ Implementation Platform

10:00 a.m. with Cavium and 2:00 p.m. with Samsung, Moscone Convention Center East Mezzanine, Room 220

Technical experts will discuss the latest high-performance design trends, challenges and solutions. They’ll share best practices and innovations in high-performance technology that help address Gigascale, Gigahertz+, low power and advanced geometry design challenges.

- Verification Luncheon: SoC Leaders Verify with Synopsys

11:45 a.m. – 1:45 p.m., Marriott Hotel, Golden Gate Ballroom, Salon A

Given the complexity of today’s SoC designs, incremental tool improvements will not be sufficient to deliver the required order-of-magnitude boost to verification productivity. At this luncheon, AMD, Broadcom, Cavium, Freescale, Qualcomm and ST-Ericsson will share their views on what’s driving SoC complexity and how their teams have achieved success. They’ll also discuss the latest developments in verification. The event will be moderated by John Chilton, senior vice president of marketing and strategic development at Synopsys.

- IPL Alliance Luncheon: Reaping the Benefits of iPDKs

12:00 p.m. – 1:30 p.m., Marriott Hotel, Golden Gate Ballroom, Salon B

At the 6th Annual IPL Luncheon, presenters from multiple foundries will highlight the benefits of the Interoperable PDK (iPDK) standard and their experiences in developing and deploying foundry iPDKs. The IPL Alliance will also present an update on current and future IPL projects.

- Customer Insight Session: Mixed-Signal SoC Design Success with the IC Compiler Custom Co-Design Solution

4:00 p.m. with STMicroelectronics and Proteus Biomedical, Moscone Convention Center East Mezzanine, Room 220

Attend an informative session on Synopsys’ mixed-signal SoC implementation solution and custom co-design methodology advancements. Learn how leading companies have successfully tackled difficult “big D/little A” mixed-signal physical design challenges using Synopsys’ unified cell-based and custom implementation solution with IC Compiler.

Milestones to Building a Successful Technology Software Company!

If you are coming to San Francisco for DAC 2012 and want the best traditional Italian seafood meal, visit Scoma’s. I was just there, and the salmon is fresh from right outside the Golden Gate. Have them prepare it any way you want; you can’t go wrong!

Milestones to Building a Successful Technology Software Company is the first in a series of conversations exploring concepts and best practices for emerging companies. This is a brilliant idea so congratulations to the person who thought of it, uh wait, that would be me. The semiconductor ecosystem desperately needs emerging companies to innovate and solve the many puzzle pieces that we call modern semiconductor design and manufacturing.

Paul McLellan blogged it here: EDAC Emerging Companies: Learn How to Emerge.

The abstract is here: Milestones to Building a Successful Technology Software Company.

Register for the event HERE (it’s free).

Date & Time:

Thursday, May 31st, 2012

6:00 PM Reception

7:00 PM Emerging Companies Conversation

8:00 PM Q&A

Location:

Silicon Valley Bank

3005 Tasman Drive

Santa Clara, California 95054

Complimentary Parking

Ravi Subramanian, Berkeley Design Automation CEO, is one of the speakers. I have had the pleasure of working with Ravi and can tell you he is charming, intelligent, humble, a great communicator, and has the technology pulse of the top semiconductor companies around the world. Just like me, except the humble part.

Ravi has a unique background for EDA. Most interestingly, I would bet he is the only EDA CEO who has climbed Mt. Fuji at night. He is certainly the only EDA CEO named to Rutberg & Co’s CTIA Wireless Influencers, a list of the most influential persons in the wireless industry (and he has been on this list since 2007). Ravi also has 17 AMS patents.

I just had lunch with Ravi. He will talk about his experience in building two successful private companies and some of the key lessons he has learned as an entrepreneur. He will cover topics that go from “code to company,” as he calls it, while citing lessons from his 3G wireless IC startup, Morphics Technology (which he started in 1998), and Berkeley Design Automation, the leader in nanometer mixed-signal IC verification.

Ravi will also cover leadership, product development (both IC and software), customer-focused innovation, hiring, raising capital, innovating in business models, going up against giants, and scaling a company (and scaling a team).

Ravi is not an armchair quarterback; he is in the game and headed for the IPO Bowl! This is a must-attend event for all Silicon Valley entrepreneurs, myself included.

Tanner EDA Update 2012

Rather than fly to Southern California this week I decided to drive. Airport parking and security, flying to Irvine and renting a car, driving to San Diego and back to Irvine, then flying home is just too much. Call it Porsche therapy, I would rather drive. On the way home I will take the scenic 101 and enjoy the ocean views.

First stop was Tanner EDA in beautiful downtown Monrovia, which reminded me of my hometown Danville. Tanner has a SemiWiki landing page so you can read all about them HERE. I had lunch with John Zuk, VP of Marketing and Greg Lebsack, President. We ate at a great Italian place right down the street where I had one of the best salmon stuffed cannellonis! YUM!

Dr. John Tanner has a very interesting legacy which I will go into with much more detail later but quickly, Tanner EDA first appeared at the 1988 DAC in Anaheim, which was the 25th DAC. John Zuk shared some DAC photos from the Tanner archive which were quite funny. DAC and Tanner EDA sure have changed! Since then the company has shipped over 33,000 licenses of its software to more than 5,000 customers in 67 countries. Wow!

You can tell a lot about an EDA company by their DAC plan. This is definitely the case with Tanner EDA, which will be exhibiting at booth 1126 and offering the following product demonstrations and corporate and technology roadmap meetings to interested participants:

Product Demonstrations for Analog Designers:

HiPer Silicon: Tanner’s full-flow analog design suite covers Schematic capture, SPICE simulation, Layout, and Physical Verification. Now including Open Access!

Learn about the latest features and capabilities; including enhanced design collaboration and layout interoperability with Cadence Virtuoso 6 and other Open Access EDA tools.

Click here to make an appointment to attend this product demonstration

HiPer Simulation AFS: Tanner’s latest front-end Design & Simulation offering bolstered by BDA’s FastSPICE capability

See the latest T-Spice and Berkeley Design Automation FastSPICE tools in action; in a cohesive, integrated front-end tool flow.

Click here to make an appointment to attend this product demonstration

HiPer DevGen: A “silicon aware” analog layout acceleration tool that recognizes and generates common structures; an essential tool for high productivity and layout quality.

Learn about this game-changing add-on tool for L-Edit. HiPer DevGen automatically recognizes and generates common structures including:

Differential pairs, Current mirrors, Resistor dividers, and MOSFET arrays

Click here to make an appointment to attend this product demonstration

Product Demonstrations for Mixed-Signal Designers

HiPer Simulation A/MS: Tanner’s latest Analog/Mixed-Signal offering that bridges Analog and Digital Verification.

View a demonstration showing Verilog A and Verilog A/MS within T-Spice integrated with Aldec’s Riviera-PRO simulator.

Click here to make an appointment to attend this product demonstration

HiPer Silicon A/MS: A cohesive Analog/Mixed-Signal flow that bridges Analog and Digital Verification and adds Synthesis, Static Timing and Place & Route.

See Tanner’s latest full-flow mixed signal offering; a highly productive tool flow integrating HiPer Silicon with high performance tools from Aldec and Incentia.

Click here to make an appointment to attend this product demonstration

Product Demonstrations for MEMS Designers

L-Edit MEMS Designer: unsurpassed capabilities and productivity for microelectromechanical systems.

Learn how to reduce time-to-market; watch how L-Edit combines with high-performance add-ins that include curve tools, DXF import/export and design rule checking (DRC).

Click here to make an appointment to attend this product demonstration

MEMS 3D Solid Modeler – a powerful and easy-to-use 3D viewing tool.

Learn about this latest offering – powered by SoftMEMS – that creates a 3D view of a MEMS device from a selected layout area and fabrication process description.

Click here to make an appointment to attend this product demonstration

Corporate and Technology Roadmaps

Tanner EDA: 25 Years of Productivity, Price-Performance and Customer Care

Want to learn more about Tanner EDA business and Technology strategy? Find out about our history, learn about some of our customers’ success stories, and get an opportunity to see what’s in store for the future.

Click here to make an appointment to attend this session

PDKs: An Essential Element of Analog Design Success

Process Design Kits (PDKs) are a critical enabler for design enablement and productive workflow. Learn about Tanner’s latest PDK and design flow initiatives; including foundry-certified kits from Dongbu HiTek, TowerJazz, and X-Fab.

Click here to make an appointment to attend this session

Tanner EDA has a rich history and a very strong story for affordable AMS design. Read about the recent integration with Berkeley Design Automation Analog FastSpice and the Aldec Verilog A simulator. Lots of things going on so be sure and make the time to visit them at DAC 2012. Better yet, download their tools for a free 30 day test drive!

Chip in the Clouds – “Precipitation”

Since I posted my prior blog [Chip in the Clouds – “Gathering”], many events have taken place. Facebook announced its intent to acquire Instagram for $1B in cash and stock, completed its initial public offering, released “Facebook Camera,” a product that competes with Instagram, and has been busy addressing the question of whether it selectively disclosed material information about its near-term earnings to certain institutional investors. What do these Facebook-related events have to do with cloud-based chip design? On the surface it looks like nothing, and therein lies a major issue.

Facebook or Google, Instagram or Facebook Camera, Google+ or for that matter any internet-based social-media offering cannot work without one important component: the semiconductor chip running the computers and server farms that power these social-media platforms and apps. A great majority of the population does not know about this or does not consciously think about it. What would happen if the semiconductor industry went on strike for a month? This will not happen, and I am not suggesting it, but just imagine the impact on the social-media world and the rest of the world if it did. In spite of this key role, semiconductor companies do not receive anywhere close to the relative valuations that social-media companies are receiving. Instagram received a billion-dollar valuation after taking in just millions of dollars over a 2-year period to produce an app that allows a user to add effects to photos. Compare that to the billions of dollars that go into the semiconductor industry and the little things that come out (I’m speaking figuratively about the micro-sized chips that are produced and literally about the relatively tiny valuations semiconductor companies receive).

As newer complex chips are being designed and produced, the cost to develop these chips has increased multi-fold but the size of the average funding rounds has remained about the same as it was many years ago. Investing in semiconductor companies has become too risky even for VCs. VCs are now able to make smaller investment rounds in social-media companies and see success/failure in a shorter time interval compared to a semiconductor investment.

But that does not mean innovation in semiconductors will come to a halt, nor does it mean that the semiconductor industry is facing death. It is true, though, that the industry is going through a serious ailment. Venture capital funding has slowed to a trickle. In spite of this, as the famous line from the movie Jurassic Park has it, “Life will find a way,” and the semiconductor industry will find a way out of this ailment. This is the industry that has been the most innovative over its 60-year history, both in technology and in cost reduction.

Innovative companies have been doing their part to help semiconductor companies deal with the ailment and continue to deliver great new products that benefit the world. The value chain producer model helped chip companies avoid a large portion of their fixed cost investment without sacrificing their ability to design and deliver cutting-edge innovative products. Marseille is utilizing a proprietary Virtual silicon design methodology/technology to rapidly prototype products before committing to silicon thus mitigating silicon respin risks and accelerating time to market for its customers. Marseille could help the industry by licensing this technology to other semiconductor companies who it does not compete with.

SiCAD, as a cloud-based silicon design company, will give its customers the ability to stretch their EDA tool and IT budgets while enhancing their ability to bring products to market faster. Customers don’t like feeling captive to any supplier. With the recent consolidations in the EDA world, the time is ripe for customers to demand a heterogeneous cloud-based silicon design platform. Chip in the clouds precipitation has begun. Expect the downpour to continue. Hear directly from customers and suppliers by attending the DAC 2012 panel “Is EDA in the Cloud Just Pie in the Sky?” hosted by Nitin Deo on June 6, 2012 at 1:30pm at the Moscone Convention Center.

http://www.linkedin.com/in/kalarrajendiran

Semiconductor Industry Standards Update 2012

Standards have been proven to reduce cost of operations, drive greater process efficiencies and offer greater opportunities for start-up companies to infuse fresh technology in the design and manufacturing of ICs. Si2 standards have been targeted to resolve “pinch-points” in the overall semiconductor supply chain with a steadfast focus on rapid adoption of these standards.

This day-long program, consisting of 4 individual events, will showcase activities currently underway with an eye towards demonstrating the value of these programs to the program participants and to the overall semiconductor industry. Therefore, this day-long session should entice engineers and technologists working at both current and cutting-edge technology nodes and also managers responsible for driving both design and manufacturing strategy, and related financial and staffing decisions. A featured part of the program will celebrate the 10th Anniversary of OpenAccess.

- A complimentary luncheon and an afternoon reception will highlight this occasion.

- All sessions are free, register here.

- For admission into the Moscone Center you will need to register here for DAC.

- Note that there is a Free Monday option, which will be sufficient to get into the convention hall and then to Room 301. Register here for a Free Pass!

09:00am – 10:30am

OPS Comes to Life

The Open Process Specification (OPS) standard, from Si2’s OpenPDK Coalition, contains all of the data elements that are necessary to create a Process Design Kit (PDK) in any EDA vendor’s or company proprietary design flow. This session is designed to appeal to any engineer working with PDKs and any engineering manager making budgeting or ROI decisions concerning PDK development. It will describe the structure and organization of this very important new standard in the industry and examples of the use cases that this standard will cover.

Presentations:

- Introduction: Jim Culp (IBM)

- Status / Next Steps: Gilles Namur, (STMicro)

- Symbols: James Masters, (Intel)

- Callbacks and Parameters: Gilles Lamant, (Cadence)

10:45am – 12:15pm

DRC+ – The Next Frontier

DRC+ augments standard DRC by applying fast 2D pattern matching to physical verification to quickly identify problematic 2D patterns called hotspots. In contrast to the more time-consuming lithographic simulations used after tapeout, DRC+ is transparent to designers as part of their normal DRC checks, and it’s fast enough to be inserted throughout the entire design flow.

This award winning technology has been contributed to Si2 and it forms the basis for our next generation OpenDFM standard. This session will cover process characterization, pattern recognition and physical verification using DRC+ acceleration that can be used by any DRC engine.

Presentations:

- Introduction / Quick Refresher on DRC+: Vito Dai (GLOBALFOUNDRIES)

- Standardization Status: SW Paek (Samsung)

- Next Steps: Jake Buurma (Si2)

- Panel: Rachid Salik (Cadence), Vito Dai (GLOBALFOUNDRIES), SW Paek (Samsung), Concetta Riccobeni (LSI), Fred Valente (TI)

12:15pm – 01:45pm

Lunch: Si2 Open Luncheon, A celebration of the 10th Anniversary of OpenAccess

This complimentary lunch will briefly host the annual open Si2 meeting that will include a short presentation on the “state-of-the-union” at Si2 and announcement of the results of the annual election of Si2’s board of directors. This will be a preamble to the celebration of the 10th anniversary of Open Access. It will cover the road traveled from the genesis of a dream to the reality of today. It will showcase presentations and testimonials, and will recognize key individuals who have contributed to the success of OpenAccess. This event is sponsored by Cadence Design Systems, GLOBALFOUNDRIES, LSI, NanGate, and Spectral Design & Test.

02:00pm – 03:00pm

New Si2 Standards In Action On Real-World Tools

This session will demonstrate the recently published Si2 Open Process Design Kit (OpenPDK) standards and Open Design for Manufacturability (OpenDFM) standards working with commercially available tools. Si2 standards promote interoperability and these demos are designed to illustrate that point. The demos will show the OpenPDK symbol standard validating the correctness or incorrectness of a schematic symbol change as compared to the standard in real time. The OpenDFM standard will demonstrate an integrated physical verification flow from an electronic Design Rule Manual (eDRM) to XML, to OpenDFM and to four different DRC engines.

03:15pm – 04:30pm

Standards for a 3D World

Si2 is focusing on developing design flow standards, its area of expertise, to support both 2.5D and 3D designs using through silicon vias (TSV). Specific areas being covered at this time include standards for sharing constraints for power distribution networks, thermal constraints to define such things as “keep-out areas” and constraints to import IP (both dies and blocks) into pathfinding and constraints out of pathfinding into downstream design of individual dies, stacks and interposers. To ensure consistency in standards and to prevent duplication, the Open3D TAB is connected to other relevant groups to ensure a complete solution.

So, this short session will include a presentation of status of activities in the TAB followed by a panel discussion which will include industry experts who will present some of the issues being addressed now and also cover some of the future challenges.

- Presentation: Open3D TAB Status / Next Steps: Riko Radojcic (Qualcomm)

- Panel: Arif Rahman (Altera), Ravi Varadarajan (Atrenta), Aveek Sarkar (Ansys), Keith Felton (Cadence Design Systems), Dusan Petranovic (Mentor Graphics), Riko Radojcic (Qualcomm), Alex Samoylov (Invarian)

04:30pm – 06:00pm

Si2 Open Networking Reception

This complimentary reception will continue the celebration for the 10th Anniversary of OpenAccess. Free hors d’oeuvres and refreshments will be provided. This event is sponsored by Cadence Design Systems, GLOBALFOUNDRIES, LSI, NanGate, and Spectral Design & Test.
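
To make the DRC+ session description above a little more concrete, here is a toy sketch of what “2D pattern matching” for hotspots can look like. It is only an illustration, not the DRC+ algorithm: it assumes the layout has been rasterized into a hypothetical 0/1 grid and looks for exact copies of a small pattern, whereas a production pattern matcher presumably works on real layout geometry with tolerances. The names `find_pattern`, `layout` and `hotspot` are made up for this example.

```python
from typing import List, Tuple

Grid = List[List[int]]  # hypothetical rasterized layout: 1 = metal, 0 = empty


def find_pattern(layout: Grid, pattern: Grid) -> List[Tuple[int, int]]:
    """Return the (row, col) of every window in layout that equals pattern exactly."""
    rows, cols = len(layout), len(layout[0])
    p_rows, p_cols = len(pattern), len(pattern[0])
    hits = []
    # Slide the pattern over every position where it fits entirely inside the layout.
    for r in range(rows - p_rows + 1):
        for c in range(cols - p_cols + 1):
            if all(layout[r + i][c:c + p_cols] == pattern[i] for i in range(p_rows)):
                hits.append((r, c))
    return hits


# Made-up layout and "hotspot" shape, purely for illustration.
layout = [
    [0, 0, 0, 0, 0, 0],
    [0, 1, 1, 0, 1, 1],
    [0, 1, 0, 0, 1, 0],
    [0, 0, 0, 0, 0, 0],
    [1, 1, 0, 0, 0, 0],
    [1, 0, 0, 0, 0, 0],
]
hotspot = [
    [1, 1],
    [1, 0],
]

print(find_pattern(layout, hotspot))  # [(1, 1), (1, 4), (4, 0)]
```

The appeal of this style of check, as the session abstract suggests, is that comparing shapes against a library of known-bad patterns is far cheaper than simulating lithography, so it can run alongside ordinary DRC.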

Apache Ansys Update 2012

Apache is one of the brightest stars in the EDA universe. Paul McLellan has done a nice job covering them before and after the Ansys acquisition. Check out the Apache SemiWiki landing page HERE. The Apache wikis are also very well done and it has been a pleasure working with the Apache marketing team. Expect more innovative things from Apache Ansys right around the corner, believe it.

Continue reading “Apache Ansys Update 2012”

The Apple iPhone5 Olympic Launch Scenario

Just days after I posted a blog on an early September iPhone 5 launch, the spies from Asia started flooding the rumor mills with Apple supply chain maneuvers that are not easily hidden, suggesting that D-Day logistics are farther along than we imagined. This flood of information, coupled with the heightened Samsung-Apple Battle Royale set for 2H 2012, left me wondering if there was an alternative scenario: an Apple iPhone 5 launch right before the Samsung-sponsored Summer Olympics that start July 27th in London. Call it the Marketer’s Dream Scenario for disrupting the enemy’s well-planned, already-in-motion, expensive plans, one that can be leveraged to catapult Apple firmly into Mobile Tsunami dominance.

Over the course of the past year, the smartphone market has consolidated around Samsung and Apple as their combined market share has increased by 20% to roughly 53%. Apple’s market share is ecosystem driven, delivering high profits in return for the pleasure of iOS across all devices. Samsung, on the other hand, is cost driven, with more of its units selling at lower price points. If current trends continue, meaning Google or Nokia don’t generate traction with their new smartphones, the game could be over in another 12-18 months as the duopoly heads to 70% share or more. What’s more, if Apple does get an injunction against Samsung for shipping what it sees as an IP-violating Android O/S based smartphone, then the game tips further to Apple’s advantage. Samsung would need to retool with a more expensive Microsoft O/S.

In the course of the past two weeks, word has arrived that the supply of 3.5” LCD displays used on the current iPhone is expected to be reduced by 20% in this quarter and by a third for the rest of the year. Sounds like a move to the $99 slot. A few days later, word from the reputable WSJ arrived that the new 4” iPhone 5 display will ramp in production in June, which seems early if the full product launch is September or October. WSJ has been known to only publish Apple articles that have a legitimate source (meaning Apple inspired or contrived).

On Monday, a report by Piper Jaffray confirmed what I wrote on May 9th: that Apple has cornered Qualcomm’s 28nm 4G LTE supply. This means Samsung, HTC and everyone else are impacted with limited supply through the end of 2012. As the only vendor with a low power 4G LTE solution, Apple seeks to retain its premium position while expanding market share. In addition, Apple will follow through on the product theme that all new devices and PCs will contain high-resolution “Retina” screens and an option for 4G LTE. The new iPad launched in March will be followed by Ivy Bridge based MacBook Pros and MacBook Airs to be launched at WWDC in mid-June. So while Samsung announced a 4G LTE Galaxy S III a couple of weeks ago, availability has been delayed until July, just prior to the Olympics. But what if the quantity is limited? Where will customers turn?

If, as I suspect, Apple knew Qualcomm would be stretched to ramp 28nm this summer, then it makes sense that they would take advantage of the situation by locking down supply and launching as soon as possible, even to the point of taking orders 4-6 weeks in advance of shipment as they have done in the past. The rest of the market would be frozen while Apple proceeds to suck all the oxygen out of the room. In terms of actual iPhone 5 product SKUs, Apple would likely reserve 4G LTE for the $399 spot while offering 3G versions at $199 and $299.

In terms of timing of an “Olympic Launch”, my best guess would be Tuesday, July 24th, which gives the press multiple days to fawn over the new product before everyone turns their attention to the games. The announcement would precede an earnings call on July 26th in which the majority of the focus would be not on the recently concluded quarter but on the revenue outlook for 2H 2012. Tim Cook would then have successfully crossed the chasm of an iPhone revenue downturn that occurs when the general public gets wind of a new device. My guess is that the iPhone 5 hardware will be more stable going forward than the iPad, where Apple has lots of room to expand the product line further up into the PC space and down into the Amazon Kindle direction.

What is the message from Apple’s iPhone 5 launch and the projected growth to come? For some vendors, a decision must be made as to whether to enter the Apple Keiretsu or focus on other markets. After all, according to IHS iSuppli, Apple is expected to buy $27B of semiconductors this year, or roughly 9% of the worldwide total. DRAM, Flash and processors are firmly under their control. Apple may soon have the upper hand in its negotiations with Qualcomm and Intel related to preferred pricing and supply of baseband and leading edge foundry capacity. The two may, in the end, need each other in order to have enough heft to protect their assets from commoditization.
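
As a quick sanity check on those two numbers, the short sketch below backs out the worldwide total they imply. The only inputs are the $27B and “roughly 9%” figures quoted above; the function name is made up for illustration, and because the 9% is rounded, the result is a ballpark rather than a precise market size.

```python
# Figures quoted in the post (attributed there to IHS iSuppli).
apple_spend_billion = 27.0  # Apple's expected semiconductor purchases, in $B
apple_share = 0.09          # "roughly 9%" of the worldwide total


def implied_total(spend_billion: float, share: float) -> float:
    """Return the total market implied by one buyer's spend and its share of the total."""
    if not 0 < share <= 1:
        raise ValueError("share must be in (0, 1]")
    return spend_billion / share


total = implied_total(apple_spend_billion, apple_share)
print(f"Implied worldwide semiconductor purchases: ~${total:.0f}B")
```

In other words, the two figures are consistent with a worldwide total of about $300B.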

Full Disclosure: I am long AAPL, INTC, QCOM, ALTR

3D Transistors and IC Extraction Tools

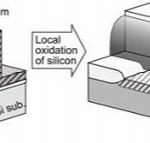

Have you ever heard of a Super Pillar Transistor? It’s one of many emerging 3D transistor types, like Intel’s popular FinFET device.

In the race to continuously improve MOS transistors, these new 3D transistor structures pose challenges to the established IC extraction tool flows.

Foundries have to provide an Effective Profile to EDA companies that describes the shapes used to fabricate any MOS device plus all of the interconnect levels. TCAD tools can be run to simulate what the shapes of an effective profile should be.

How can foundries keep their process proprietary yet provide a geometry for extraction tools to actually use?

Now that’s a constant challenge. I met with Carey Robertson of Mentor Graphics to better understand how the challenges of 3D transistors are being met with the latest generation of IC extraction tools like Calibre xACT 3D.

Q: Intel has an early lead in production-ready 3D FinFETs. When will the Foundries offer FinFETs?

A: The foundries are saying that FinFETs will be used at the 14nm node; however, there is much speculation that we could see FinFETs at the 20nm node.

Q: How does a 3D transistor affect the job of IC extraction?

A: The complexity of the effective profile is always increasing, so we have to use more elaborate 3D models that in turn require more processing time for the detailed extraction used by memory and analog designs.

Q: Will rule-based methods be sufficient in IC extraction?

A: Only for the M1 and higher interconnect layers. For the lower layers near the MOS device, you really need to use a field solver to get the accuracy.

Q: How is extraction changing now with 3D?

A: Before, we could draw a clear distinction between interconnect models and transistor models for parasitics. Now we are using a 3D field solver to extract the R and C values for the MOS source and drain connections.

With 3D devices the MOSFET can even be broken up into multiple fins. We are moving from a table-based and rule-based extraction approach to explicit calculations. These calculations are more computationally intensive and require longer run times.

Q: What is different about Mentor’s 3D field solver?

A: Our tool is called xACT 3D. It is a field solver used by designers and is well suited for FinFETs and 3D devices. Calibre xACT 3D has a deterministic field solver (similar to Raphael in the past, although faster), and the output is a SPICE netlist. The extraction input is a GDS II file and foundry decks, so it feels like a standard extraction tool.

The old Raphael was 1,000x slower than rule-based approaches, so it was limited to TCAD and small transistor counts.

xACT 3D is maybe 3 to 4x slower than rule-based solvers; however, to get results faster you can add more hardware and still stay within 2% of TCAD tool results.

Q: What is the capacity of your 3D field solver?

A: xACT 3D has been run on multi-million transistor memory layouts (see the STARC paper at DAC), flat. It’s not meant for digital sign-off. If you want to send the most accurate netlist to SPICE or Fast SPICE for circuit simulation, then xACT 3D is the tool for you.

If you want to take a multi-million-gate digital design into PrimeTime, then consider a selected net flow.

Q: What is new with xACT 3D this year compared to last year?

A: Well, we have performance improvements (memory up to 4X, analog about 1.5X to 2X), foundry decks at 20nm, more foundry support, plus faster and better viewing of results.

Q: Who would be interested in using a 3D field solver?

A: Memory designers using pillar transistors would need to use a field solver.

Q: Does your field solver work hierarchically?

A: No, it’s not a hierarchical tool although it can read a hierarchical layout and produce a hierarchical netlist.

Q: Will you be presenting at DAC this year?

A: We may be in a panel discussion organized by Ed Sperling, so stay tuned.

Q: What are some other trends happening in IC design today?

A: Double Patterning Technology (DPT), which is being used at 20nm, creates more corners for simulation. At 28nm we had 5 corners, and at 20nm we will have from 11 to 15 corners. More corners means more simulation time.
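To put the corner counts above in perspective, here is a rough back-of-the-envelope sketch. It assumes, purely for illustration, that every corner costs the same simulation time; the function name is hypothetical and nothing here comes from Mentor.

```python
# Corner counts quoted in the interview.
CORNERS_28NM = 5
CORNERS_20NM_RANGE = (11, 15)


def relative_simulation_cost(corners_new: int, corners_old: int) -> float:
    """Ratio of total simulation time, assuming each corner costs the same."""
    return corners_new / corners_old


if __name__ == "__main__":
    for corners in CORNERS_20NM_RANGE:
        ratio = relative_simulation_cost(corners, CORNERS_28NM)
        print(f"{corners} corners at 20nm vs {CORNERS_28NM} at 28nm: "
              f"{ratio:.1f}x the simulation time")
```

Under that equal-cost assumption, the same circuit needs roughly two to three times as much simulation at 20nm as at 28nm.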

Q: Who else at Mentor is presenting at DAC this year?

A: Claudia Relyea is presenting with ST in a poster session on 3D extraction.

Karen Chow has a presentation on sensitivity analysis with STARC.

Summary

The DAC conference and trade show is going to be exciting this year as the EDA vendors have adapted their IC design tools to enable design with 3D transistors. Find Mentor Graphics in booth #1530 at DAC.

Solido Design Automation Update 2012

Having spent a considerable amount of time with Solido (they were one of the founding members of SemiWiki), I can tell you that the Variation Designer Platform is a critical part of the emerging 20nm design methodology. You can read more on Solido’s SemiWiki landing page HERE. It is well worth the click.

With technology rapidly going mobile, demand is driving IC development toward higher integration and higher performance at the lowest power, at a competitive cost, and still in time to meet market demands. In creating these SoCs at leading-edge process nodes, increased variability is a serious risk. Solido is THE leading solution provider to de-risk variation, providing maximum yield at the performance edge, with specific solutions for memory, standard cell, low power and analog/RF design.

You can tell a lot about a company by their DAC content. For a relatively small company Solido is delivering a very big value proposition:

Solido Variation Designer Memory+ is used for memory design to achieve maximum yield on high-performance designs. Solido will demonstrate how Memory+ runs the billions of Monte Carlo samples needed for high-sigma (up to 6-sigma) verification of bit cells and sense amps, giving fast and accurate visibility into the increasing effects of variation on design in nanometer technologies. Using the industry-standard simulators commonly used in memory design to achieve SPICE-accurate results, Memory+ is fast enough for use in the design loop. Memory+ will be demonstrated both from the command line and from a graphical environment.
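Why does high-sigma verification call for billions of samples? A minimal sketch of the arithmetic follows. It assumes a one-sided Gaussian tail and plain (brute-force) Monte Carlo; it is not a description of how Memory+ works, and the function names are made up for illustration.

```python
import math


def tail_probability(sigma: float) -> float:
    """One-sided probability that a standard normal value exceeds `sigma`."""
    return 0.5 * math.erfc(sigma / math.sqrt(2.0))


def samples_per_expected_failure(sigma: float) -> float:
    """Average number of plain Monte Carlo samples needed to see one failure."""
    return 1.0 / tail_probability(sigma)


if __name__ == "__main__":
    for sigma in (3.0, 4.0, 5.0, 6.0):
        p = tail_probability(sigma)
        n = samples_per_expected_failure(sigma)
        print(f"{sigma:.0f}-sigma: tail probability {p:.3e}, "
              f"about {n:.3e} samples per expected failure")
```

At 6-sigma the tail probability is on the order of one in a billion, so brute-force Monte Carlo needs about a billion samples just to expect a single failing one.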

Solido Variation Designer Standard Cell+ delivers the highest-quality standard cell libraries in less time. Solido will demonstrate how Standard Cell+ optimizes a library of cells across the increasingly significant variation effects in nanometer technologies, allowing efficient migration of a standard cell library to a smaller process node or second source. Attendees will see how to leverage Solido’s meta-simulation technology to enhance standard SPICE simulation and manage performance-yield tradeoffs. Operating at the command line for full batch operation, Standard Cell+ is also used as an environment for design debug and results visualization.

Solido Variation Designer Low Power+. To minimize power in today’s portable devices, numerous power states in SoCs need to be considered and verified against thousands of corner cases. Solido will demonstrate how its Low Power+ uses Fast PVT meta-simulation technology, delivering a typical 2x-10x productivity gain in design verification coverage across power states, PVT corners, and layout RC corners. Attendees will see how Low Power+ actively finds and simulates only the worst-case corners while providing predictive results for non-worst-case conditions, giving full coverage at a fraction of the simulation cost.
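To see how quickly the corner cases pile up, here is a small sketch that enumerates every combination of power state, PVT corner and layout RC corner. All of the lists below are hypothetical placeholder values chosen for illustration; they are not taken from Solido or from any foundry.

```python
from itertools import product

# Hypothetical example values, for illustration only.
POWER_STATES = [f"state_{i}" for i in range(8)]
PROCESS = ["tt", "ff", "ss", "fs", "sf"]
VOLTAGE_V = [0.81, 0.90, 0.99]
TEMPERATURE_C = [-40, 25, 125]
RC_CORNERS = ["typical", "cworst", "cbest", "rcworst", "rcbest"]


def enumerate_corners():
    """Return every (power state, process, voltage, temperature, RC) combination."""
    return list(product(POWER_STATES, PROCESS, VOLTAGE_V, TEMPERATURE_C, RC_CORNERS))


if __name__ == "__main__":
    corners = enumerate_corners()
    print(f"{len(corners)} corner combinations to verify")
    print("first corner:", corners[0])
```

Even these modest lists multiply out to 8 x 5 x 3 x 3 x 5 = 1,800 combinations, which is why simulating every corner exhaustively gets expensive.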

Solido Variation Designer Analog+. Solido will demonstrate how its Analog+ product builds on the well-established Cadence® Virtuoso® Custom Design Platform to deliver simulation efficiency and design closure against worst-case PVT corners and extracted 3-sigma statistical corners. Analog+ delivers a 10x average efficiency increase for PVT signoff, more consistent Monte Carlo analysis with multiple stop-on-yield criteria, fast extraction of statistical corners at a target sigma, and efficient, intuitive, interactive design sizing. All capabilities provide extensive visualization and debug to assist in efficiently achieving high-yielding designs.

You can sign up for a Solido DAC meeting HERE. Send me a note and I will meet you there!