Modern SoC design for artificial intelligence workloads has shifted the role of the network-on-chip (NoC) from a simple connectivity fabric to a primary architectural determinant of system performance, power, and scalability. As compute density increases and heterogeneous accelerators proliferate, data movement increasingly dominates overall system behavior. Consequently, NoC architecture must be treated as a first-order design decision rather than a late integration step. The white paper "Considerations When Architecting Your Next SoC: NoCs with Arteris" emphasizes that modern AI SoCs face bottlenecks not in computation but in arbitration, memory access, and interconnect bandwidth, making NoC topology, buffering, and quality-of-service (QoS) policies critical for achieving target performance metrics.

AI-centric SoCs differ from traditional designs in both traffic characteristics and integration complexity. Contemporary systems integrate CPUs, GPUs, NPUs, DSPs, and domain-specific accelerators, generating bursty and highly concurrent traffic patterns that are sensitive to contention and tail latency. These characteristics challenge traditional bus-based architectures and demand scalable NoC topologies capable of balancing throughput and latency. Furthermore, advanced process nodes increase wire delays and routing congestion, making physical implementation constraints tightly coupled to architectural decisions. As a result, topology selection must account for both logical hop count and floorplan feasibility, since physically long interconnects may negate theoretical performance advantages.
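The trade-off between logical hop count and physical wire length can be illustrated with a rough latency estimate. The sketch below is a back-of-the-envelope model, not a method from the white paper: the function names, the one-cycle router delay, and the 1.5 mm-per-cycle wire reach are all illustrative assumptions.

```python
import math

def pipeline_stages(wire_mm: float, reach_mm_per_cycle: float) -> int:
    """Extra register stages needed so that no wire segment exceeds
    the distance a signal can travel in one clock cycle (assumed value)."""
    return max(0, math.ceil(wire_mm / reach_mm_per_cycle) - 1)

def path_latency_cycles(link_lengths_mm, router_cycles, reach_mm_per_cycle):
    """Estimate path latency: each hop costs the router delay, one cycle
    for the link itself, plus any pipeline stages the wire length forces."""
    total = 0
    for wire_mm in link_lengths_mm:
        total += router_cycles + 1 + pipeline_stages(wire_mm, reach_mm_per_cycle)
    return total

# Hypothetical candidates: two long hops versus four short hops.
few_long_hops = path_latency_cycles([6.0, 6.0], router_cycles=1, reach_mm_per_cycle=1.5)
many_short_hops = path_latency_cycles([1.0] * 4, router_cycles=1, reach_mm_per_cycle=1.5)

print(few_long_hops, many_short_hops)  # 10 8
```

Under these assumed numbers, the path with fewer hops ends up slower once the long wires are pipelined, which is the effect the paragraph above describes.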

One of the most consequential architectural decisions in AI SoCs is the coherency model. Hardware cache coherency simplifies programming but introduces coherence traffic and scalability challenges, particularly with snooping and directory mechanisms. Software-managed coherency, by contrast, reduces hardware complexity and allows deterministic accelerator behavior, though it increases compiler and runtime overhead. Dedicated AI accelerators often favor software-managed memory to minimize unpredictable latency, while heterogeneous SoCs typically adopt hybrid approaches to maintain compatibility with legacy CPU clusters. This coherency choice directly influences NoC traffic patterns, arbitration logic, and memory hierarchy organization, demonstrating that interconnect architecture cannot be separated from system-level memory decisions.
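To make the software-managed option concrete, here is a toy model of a CPU with a write-back cache sharing memory with an accelerator that has no hardware coherency. Everything in it (`ToyCache`, `accelerator_double`, the addresses) is hypothetical and greatly simplified; it only shows why explicit clean and invalidate steps fall on software.

```python
class ToyCache:
    """Write-back cache in front of a shared memory dict (no hardware coherency)."""

    def __init__(self, memory):
        self.memory = memory
        self.lines = {}  # addr -> (value, dirty)

    def write(self, addr, value):
        # The write stays in the cache until software cleans the line.
        self.lines[addr] = (value, True)

    def read(self, addr):
        if addr not in self.lines:
            self.lines[addr] = (self.memory[addr], False)
        return self.lines[addr][0]

    def clean(self, addr):
        """Write a dirty line back to shared memory."""
        if addr in self.lines and self.lines[addr][1]:
            value = self.lines[addr][0]
            self.memory[addr] = value
            self.lines[addr] = (value, False)

    def invalidate(self, addr):
        """Drop the cached copy so the next read fetches from memory."""
        self.lines.pop(addr, None)

def accelerator_double(memory, src, dst):
    """Stand-in accelerator that reads and writes shared memory directly."""
    memory[dst] = memory[src] * 2

memory = {0: 1, 1: 0}
cpu = ToyCache(memory)
cpu.read(1)                 # CPU caches the old output value
cpu.write(0, 21)            # input is dirty in the CPU cache only
cpu.clean(0)                # software pushes the input to memory
accelerator_double(memory, src=0, dst=1)
stale = cpu.read(1)         # still the old cached value
cpu.invalidate(1)           # software discards the stale line
fresh = cpu.read(1)
print(stale, fresh)         # 0 42
```

The clean and invalidate calls are the overhead that moves into the compiler or runtime. In exchange, the interconnect carries no snoop or directory traffic for these buffers.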

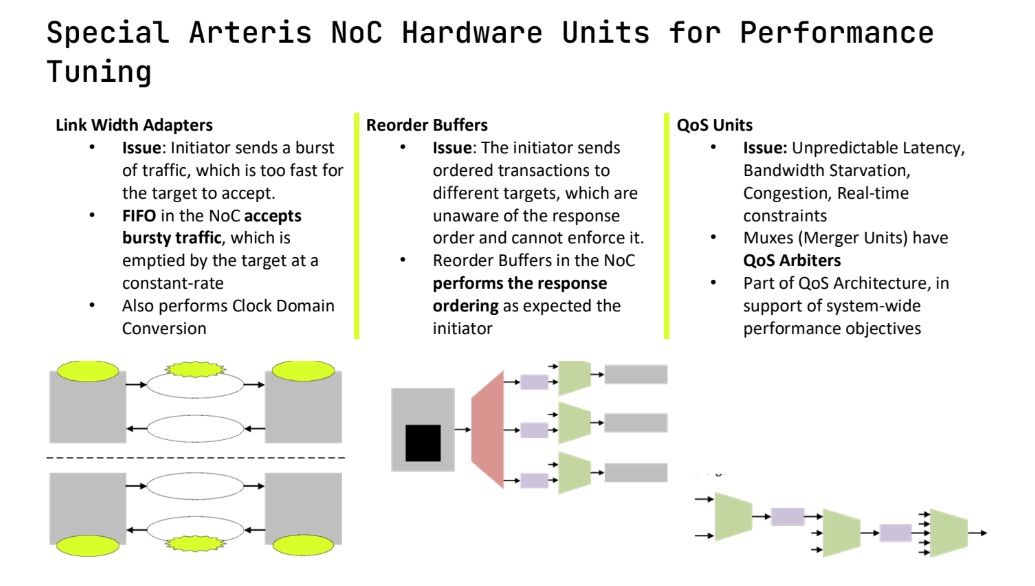

Beyond topology and coherency, specialized NoC hardware units are essential for handling mixed workloads. Link-width adapters enable burst absorption and clock domain crossing, reorder buffers preserve transaction ordering across multiple targets, and QoS arbitration logic ensures latency-sensitive traffic is prioritized over bulk transfers. These features help maintain predictable latency under heavy load, which is crucial in AI systems combining control traffic with large tensor data movement. Without carefully designed QoS policies, critical real-time transactions may suffer starvation, leading to performance variability and degraded system efficiency.
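Starvation is easiest to see in a small arbitration model. The sketch below uses priority arbitration with aging, one common way to bound waiting time; it is not taken from the white paper or any specific NoC product, and the `Request` fields, the `aging_threshold` parameter, and the traffic pattern are assumptions chosen for illustration.

```python
from dataclasses import dataclass

@dataclass(eq=False)  # identity comparison, so list.remove drops the exact winner
class Request:
    source: str
    priority: int        # higher value = more urgent
    wait_cycles: int = 0

def arbitrate(pending, aging_threshold):
    """Grant one request per cycle. A request that has waited at least
    aging_threshold cycles wins (longest wait first); otherwise the
    highest priority wins, with ties broken by longest wait."""
    if not pending:
        return None
    starved = [r for r in pending if r.wait_cycles >= aging_threshold]
    if starved:
        winner = max(starved, key=lambda r: r.wait_cycles)
    else:
        winner = max(pending, key=lambda r: (r.priority, r.wait_cycles))
    pending.remove(winner)
    for r in pending:
        r.wait_cycles += 1
    return winner

def simulate(cycles, aging_threshold):
    """One bulk DMA request competes with a new control request every cycle."""
    pending = [Request("dma", priority=0)]
    grants = []
    for _ in range(cycles):
        pending.append(Request("ctrl", priority=3))
        grants.append(arbitrate(pending, aging_threshold).source)
    return grants

print(simulate(5, aging_threshold=4))      # ['ctrl', 'ctrl', 'ctrl', 'ctrl', 'dma']
print(simulate(5, aging_threshold=10**9))  # ['ctrl', 'ctrl', 'ctrl', 'ctrl', 'ctrl']
```

With an effectively disabled threshold, the lower-priority request is never granted. Whichever traffic class ends up at the bottom of a strict priority scheme faces that risk, which is why QoS policies usually pair priorities with some bound on waiting time.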

Physical floorplanning also plays a major role in NoC design. Centralized interconnects may minimize logical complexity but can create routing congestion and timing closure risks, whereas distributed NoC implementations align better with partitioned floorplans and reduce long wire lengths. Early architectural modeling should therefore incorporate physically plausible assumptions, including clock domains, power partitions, and IP placement. Ignoring these factors often results in late-stage redesigns that increase schedule risk and engineering cost.

A structured methodology for NoC development further reduces implementation risk. Effective design flows begin with traffic modeling and high-level performance analysis, followed by topology exploration, floorplan alignment, pipeline insertion for timing closure, and validation against physical synthesis constraints. Iterative feedback between architectural modeling and physical implementation allows designers to converge on viable solutions before RTL commitment. Automation frameworks such as topology generation tools can further improve productivity, shorten wires, and reduce latency by embedding floorplan awareness into the exploration process.
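The traffic modeling step can start very simply. As a minimal sketch, assuming static routes and average bandwidth demands, the function below sums the demand on each link and reports utilization. The initiator names, routes, and the 64 GB/s link capacity are invented for the example; a real flow would use a performance simulator and measured traffic profiles.

```python
from collections import defaultdict

def link_utilization(flows, routes, link_capacity):
    """Sum the bandwidth demand of every flow on each link it crosses
    and return utilization as a fraction of link capacity."""
    load = defaultdict(float)
    for endpoints, demand in flows.items():
        for link in routes[endpoints]:
            load[link] += demand
    return {link: total / link_capacity for link, total in load.items()}

# Hypothetical average demands in GB/s between initiators and DDR.
flows = {("npu", "ddr"): 40.0, ("gpu", "ddr"): 30.0, ("cpu", "ddr"): 10.0}

# Hypothetical static routes: every flow converges on the r1 -> ddr link.
routes = {
    ("npu", "ddr"): [("r0", "r1"), ("r1", "ddr")],
    ("gpu", "ddr"): [("r2", "r1"), ("r1", "ddr")],
    ("cpu", "ddr"): [("r1", "ddr")],
}

util = link_utilization(flows, routes, link_capacity=64.0)
hot = {link: u for link, u in util.items() if u > 1.0}
print(hot)  # {('r1', 'ddr'): 1.25}
```

A link above 100% utilization, as here, signals that the topology needs a wider link, a second memory channel, or different routing before any RTL is written.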

As SoCs scale, physical partitioning becomes unavoidable due to power domains, clock islands, and organizational boundaries. Partitioned NoCs must maintain connectivity while supporting isolation, retention, and reset sequences during power transitions. Coordinating these behaviors across domains is complex and must be addressed early to avoid functional and verification issues. Proper planning ensures that the NoC remains operational across low-power states and wake-up sequences without introducing deadlocks or data corruption.
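One way to reason about these sequences is as a small state machine at each domain boundary. The sketch below is a hypothetical ordering (drain outstanding transactions, isolate, power off, then reset on wake) and not a description of any particular NoC IP; the state names and the `DomainPort` class are assumptions, and retention is omitted for brevity.

```python
from enum import Enum, auto

class State(Enum):
    ACTIVE = auto()
    DRAINING = auto()   # no new transactions accepted, waiting for responses
    ISOLATED = auto()   # boundary signals clamped
    OFF = auto()
    RESETTING = auto()

class DomainPort:
    """Toy model of a NoC port at the edge of a switchable power domain."""

    def __init__(self):
        self.state = State.ACTIVE
        self.outstanding = 0

    def issue(self) -> bool:
        """Accept a new transaction only while the domain is fully active."""
        if self.state is not State.ACTIVE:
            return False
        self.outstanding += 1
        return True

    def complete(self):
        if self.outstanding == 0:
            raise RuntimeError("no outstanding transaction to complete")
        self.outstanding -= 1

    def request_power_down(self):
        if self.state is State.ACTIVE:
            self.state = State.DRAINING

    def request_wake(self):
        if self.state is State.OFF:
            self.state = State.RESETTING

    def step(self):
        """Advance one phase; draining waits until nothing is in flight."""
        if self.state is State.DRAINING and self.outstanding == 0:
            self.state = State.ISOLATED
        elif self.state is State.ISOLATED:
            self.state = State.OFF
        elif self.state is State.RESETTING:
            self.state = State.ACTIVE
```

The key property in this sketch is that isolation cannot begin while a transaction is in flight. Without that rule, a response could be lost at the boundary, leaving the initiator waiting forever.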

Bottom line: Architecting a NoC for modern AI SoCs requires holistic consideration of traffic patterns, coherency models, topology, QoS mechanisms, physical floorplanning, and power management. Treating the NoC as a system-level design problem rather than a connectivity afterthought enables scalable performance, efficient power usage, and predictable implementation schedules in increasingly complex heterogeneous silicon platforms.

