It’s not easy having to deliver one of the last tutorials on the last day of a conference. Synopsys drew that short straw for their tutorial on formal methodologies at DVCon this year. Despite that they delivered an impressive performance, keeping the attention of 60 attendees who said afterwards it was excellent on technical content, substance and balance for a wide audience. This was their second content-rich, marketing-light tutorial in this conference (following low-power verification). Worth remembering for next year.

Sean Safarpour of Synopsys (another Atrenta alum) kicked off with a quick and pragmatic review of “Why Formal?”. These have become almost pro-forma now that more of us are getting formal (usage at over 30% in ASIC and an amazing 20% in FPGA last year). Sean got through these slides quickly – contribution to shift, left, different tools for different problems, complementary formal and simulation analysis, the pros and cons for formal and the greatly simplified on-ramp to formal use.

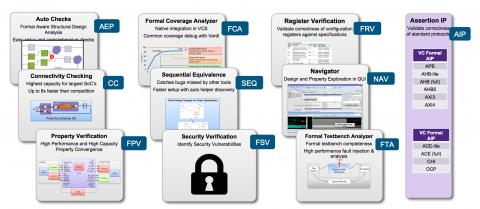

The second part of the tutorial, also presented by Sean, dug deeper into this formal for everyman/everywoman, enabled through pre-packaged applications. This isn’t a new topic, but it’s worth stressing how accessible and valuable these can be for covering important sections of a testplan and for exploring deep properties. You can quantify coverage contributions from formal analysis and can demonstrate that areas you can’t cover in simulation may be unreachable, so can be dropped from coverage analysis. Serious value and easily accessible – there’s no good reason not to use capabilities like these. General adoption in this area hadn’t yet reached property-checking levels last year but it is growing much faster.

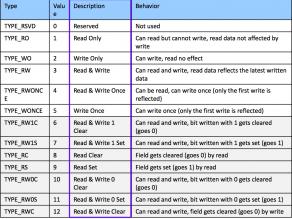

A favorite of mine checks register properties; this is a no-brainer for formal analysis and for formal apps. IPs and designs host vast numbers of registers to configure, control and observe behavior, typically accessed through standard interfaces like AXI. But these aren’t just vanilla read/write registers. They can have a wide range of special properties, across the register or bitfield by bitfield. A bitfield may be readable or writeable or both, perhaps it may be read/written only once, read or write operations may set/clear the field, the register may mirror another register, and so on. Checking all these possibilities in simulation can be painful, at minimum to setup the testbench, and is often incomplete. Formal apps for register checking are easy to setup (needing just spreadsheet descriptions of expected bitfield properties) and you can be confident they are complete. Again, why wouldn’t you do this?

Getting a bit more complex, Vigyan Singhal of Oski, a formal guru with always interesting ideas, presented on end-to-end formal verification. Here his objective was to fully verify an IP using only formal methods (an idea I’m hearing a lot more). Vigyan made a case that serious progress can be made in this direction, if we are willing to work harder. He cited blocks like MACs, USB, DMA and memory controllers, bridges, and GPIO blocks as candidates for this kind of proving.

Of course, today this isn’t as simple as an app. Abstraction becomes important, as do symbolic approaches to verification which can, though clever techniques, fully verify key aspects of the functionality of say a data transport block by checking just a limited set of possibilities. Very interesting concepts – maybe not something most of us would try unassisted, but this points to what we might expect to see eventually in packaged solutions. Will it completely replace simulation on these blocks? I would guess not, but it does seem possible that formal will eventually do more of the heavy lifting.

Mandar Munishwar from Qualcomm followed with a neat sequel. Maybe you did what Vigyan suggested but you’re still not convinced from a signoff perspective. How can you more thoroughly check coverage to make sure you didn’t miss hidden problems?

He started with a very interesting concept – the proof-core. When we think about proving an assertion we think about checking within the cone of influence (COI) leading up to the assertion. But a proof for an assertion doesn’t necessarily have to look at the whole cone of influence; the prover only extends out though just the piece of logic (the formal core) required to prove the assertion – which may be a lot smaller than the COI. This means that any potential bugs beyond the formal core may be missed. He suggested logic mutation to expose such problems; change the logic slightly then re-run the proof, and repeat with more mutations. Based on his experiments, he found at least one more problem on the DMA controller he used as his test DUT. What Mandar wants to get to is higher levels of formal coverage by pushing analysis, through mutation, beyond an initially limited set of formal cores.

Pratik Mahajan from Synopsys wrapped up with a few future-looking areas. The first was around a goal that many formal users will welcome. As we know, formal depends on multiple engines – BDD, SAT, ATPG and more. Each has special strengths and weaknesses, each has a host of parameters, you need to know when you should selectively push deeper beyond nominal proof depths, and you need to know when you should switch from one approach to another. Knowing how to make these choices is a big part of why the full scope of formal property-checking has been viewed as a PhD-only topic; you must know how to orchestrate all these possibilities.

Pratik described an approach to putting all that orchestration in a box, along with managing distribution of jobs out to server farms (since many tasks can run in parallel). He didn’t get into too much detail but it sounds like this is an adaptive approach, starting from some well-known recipes, with ability to fine-tune as results start to come back. From an end-user perspective, this could make more complex and more complete proofs much more accessible to non-experts.

Pratik also covered dealing with inconclusive proofs; another sore spot for formal users. Anything he can do to help here will be greatly appreciated. And he discussed work being done on machine learning in formal and assistance in bug hunting which I covered in an earlier blog on FMCAD.

I must apologize to the presenters for my highly-abbreviated treatment of what they presented. I hope at least I conveyed a sense of the rich set of formal options they presented, both for today and to for the future. You can learn a lot more from Tutorial9 from the DVCon download.

Share this post via:

Comments

There are no comments yet.

You must register or log in to view/post comments.