There was a comment recently that design for low power is not an event, it’s a process; that comment is absolutely correct. Power is affected by everything in the electronic ecosystem, from application software all the way down to layout and process choices. Yet power as a metric is much more challenging to model and control than metrics like timing and area, since it depends on factors across that whole range, particularly activity, which in turn is heavily dependent on use-cases.

Still, a practical design methodology can’t iterate over so wide a span, so each stage aims to optimize – using realistic use-case data – within what can be reasonably controlled (or at least determined as constraints to feed forward) at that stage. One important observation helps: it has been amply demonstrated that power-optimizations made at higher levels in the system have a bigger impact than those made at lower levels. So when the architecture has been fixed, you are going to get the biggest bang for your buck through RTL optimizations. Which is not to say that you won’t polish all the way down to layout if you’re going after picowatt savings, but the Pareto principle suggests you should put most of your effort into the RTL. Unless of course you are unable to change the RTL, which can happen if you want to avoid re-qual.

What are the options at RTL? Architecture and target process are already fixed. Your choices are to reduce leakage in areas that are not very performance sensitive by controlling the Vt mix, to power-down islands of logic during periods when those functions are not needed, to reduce redundant/useless activity by gating clocks and related signals, and to scale down V²f power (again in islands) where feasible through dynamic voltage and frequency scaling (DVFS). Depending on where you are starting, this bag of tricks together can give you 30% or more reduction in power, or as little as a handful of percent reduction if you’ve already significantly optimized the design. Energy (integrated power over time, which is important for battery life) is mostly controlled by how long you can keep most of the logic in a low/zero power state and how much power is consumed in turning it back on.
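To make the V²f point concrete, here is a minimal sketch using the textbook switching-power approximation P = αCV²f. The function names, the island parameters and the operating points are all hypothetical and chosen only for illustration; leakage is ignored, and nothing here reflects how PowerPro computes power.

```python
def dynamic_power_w(activity, capacitance_f, voltage_v, freq_hz):
    """Switching power approximation: P = activity * C * V^2 * f."""
    return activity * capacitance_f * voltage_v ** 2 * freq_hz


def energy_per_task_j(activity, capacitance_f, voltage_v, freq_hz, cycles):
    """Energy for a fixed-cycle task: power times run time (cycles / f)."""
    power = dynamic_power_w(activity, capacitance_f, voltage_v, freq_hz)
    return power * (cycles / freq_hz)


# Hypothetical island; every number here is illustrative, not measured.
ISLAND = dict(activity=0.2, capacitance_f=1e-9)
nominal = dict(voltage_v=1.0, freq_hz=1.0e9)
scaled = dict(voltage_v=0.8, freq_hz=0.5e9)

power_saving = 1 - (
    dynamic_power_w(**ISLAND, **scaled) / dynamic_power_w(**ISLAND, **nominal)
)
energy_saving = 1 - (
    energy_per_task_j(**ISLAND, **scaled, cycles=1_000_000)
    / energy_per_task_j(**ISLAND, **nominal, cycles=1_000_000)
)

print(f"dynamic power saving: {power_saving:.0%}")
print(f"energy-per-task saving: {energy_saving:.0%}")
```

With these made-up numbers, dropping to 0.8 V at half the frequency cuts dynamic power by roughly two-thirds, but the energy for a fixed amount of work falls by only about a third, because the task runs twice as long. Under this simple model the frequency term cancels out of energy per task, so the voltage reduction is what saves battery.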

Of course, doing all of this stuff comes with costs. Even clock gating adds at least one cycle of latency in turn-on time. Power-domains can be significantly slower to reactivate because they have complex power-up sequences (turn power on, reset/start clocks, restore state from retention registers). And then there’s the issue of what happens when something is switching on or off and something else wants to talk to it. This requires work to prove either that such a problem can never happen or that there is handshake logic in place to ensure that these cases will be handled gracefully. And all of this added circuitry consumes space, may create new timing problems and adds more complexity to verification. All of which means that while you may find lots of ways you could reduce power, they’re not all going to be equally desirable when balanced against other consequences of making those changes. PowerPro provides a way to start this analysis by considering all power-saving options, automated and guided, and by supporting interactive exploration of these options with feedback on power reduction and cost metrics.
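One way to weigh the turn-on cost described above is a break-even calculation: powering down a domain only pays off if it stays off long enough to recover the energy spent shutting down and waking up. The sketch below is a simplified model with hypothetical names and numbers; it assumes a constant idle power and a single lumped transition energy, and it ignores wake-up latency.

```python
def break_even_idle_s(idle_power_w, gated_power_w, transition_energy_j):
    """Idle duration above which power-gating saves energy overall."""
    saved_per_second = idle_power_w - gated_power_w
    if saved_per_second <= 0:
        return float("inf")  # gating never pays off
    return transition_energy_j / saved_per_second


def net_energy_saved_j(idle_s, idle_power_w, gated_power_w, transition_energy_j):
    """Energy saved by gating for one idle period (negative means a loss)."""
    return (idle_power_w - gated_power_w) * idle_s - transition_energy_j


# Hypothetical domain: 10 mW when idle but powered, 0.5 mW when gated,
# and 20 uJ spent on the power-down plus power-up sequence.
DOMAIN = dict(idle_power_w=10e-3, gated_power_w=0.5e-3, transition_energy_j=20e-6)

threshold_s = break_even_idle_s(**DOMAIN)
print(f"break-even idle time: {threshold_s * 1e3:.2f} ms")
for idle_s in (1e-3, 10e-3):
    saved_uj = net_energy_saved_j(idle_s, **DOMAIN) * 1e6
    print(f"idle {idle_s * 1e3:.0f} ms -> net saving {saved_uj:+.1f} uJ")
```

In this example the domain must stay off for a little over 2 ms before gating helps; a 1 ms nap costs more than it saves. Real use-case activity profiles determine how often idle periods clear that threshold, which is why realistic vectors matter so much.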

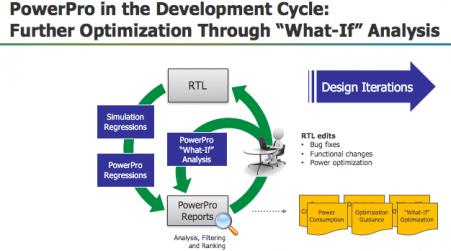

Mentor makes the point that all of this optimization could be handled more effectively if regular RTL designers were to get more involved in optimization for low power. Today this objective is generally handed off to power experts who, while skilled in that domain, necessarily have limited understanding of total design objectives, leaving you wondering what gets left on the table. However, in high-pressure design schedules it’s sometimes difficult to see how design teams can significantly rework assignments. Perhaps instead PowerPro can enable a more comprehensive discussion between block, subsystem and top-level designers, the power experts and the verification engineers in debating which power-saving options are most worthy of consideration in the design. Doing this can start with the power expert filtering through a range of possible directions to boil them down to a limited set of the most promising scenarios.

At that point, being able to interactively flip through scenarios (enabled by nearly real-time performance in PowerPro option what-ifs) would enable optimal choices to be made by the collective product team, each member bringing their own area of expertise to consider a scenario from bandwidth, latency, area, power, performance/criticality and verification complexity perspectives.

You can read more detail on PowerPro in the link at the end of this blog. A couple of interesting questions came up after the Webinar. One touched on how accurate dynamic power estimation is without a SPEF (parasitics file) for the design; the other concerned vectorless estimation. Mentor answered both questions well in my view. First, RTL power estimation is good for relative comparisons, which is exactly what you need it for (is this option better than that one). Absolute correlation with silicon is not the goal, nor is it likely possible before the design is fully implemented. Second, RTL block designers usually want to know about vectorless estimation because they don’t have much in the way of vectors. Vectorless can give you ballpark estimates, but I wouldn’t invest a lot of time in power-saving tweaks based on this analysis, because the error bars on this kind of analysis can easily swamp potential power savings.
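The error-bar concern can be illustrated with a small check. This is a deliberately pessimistic sketch that assumes the baseline and candidate estimates each carry an independent relative error; in practice errors between two runs of the same estimator are often correlated, which is part of why relative comparisons hold up better than absolute ones. The function name and all numbers are hypothetical.

```python
def saving_is_distinguishable(baseline_mw, candidate_mw, rel_error):
    """True if the candidate still beats the baseline in the worst case."""
    worst_candidate = candidate_mw * (1 + rel_error)
    best_baseline = baseline_mw * (1 - rel_error)
    return worst_candidate < best_baseline


# Hypothetical 5% saving (100 mW -> 95 mW) judged under two error bars.
tight = saving_is_distinguishable(100.0, 95.0, rel_error=0.02)
loose = saving_is_distinguishable(100.0, 95.0, rel_error=0.20)
print(f"+/-2% error bars: distinguishable = {tight}")
print(f"+/-20% error bars: distinguishable = {loose}")
```

A 5% tweak survives 2% error bars but disappears completely inside 20% error bars, which is the situation to watch for with ballpark vectorless numbers.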

The Mentor Webinar can be found HERE.
