Wally Rhines gave the keynote at DVCon yesterday. He started out with a game of “name that graph,” which was unfortunately a bit spoiled because the first line was off the top of the screen when the names were revealed. He then extrapolated several trends, such as the decreasing number of fabs (on the current trend there won’t be any left by 7nm), the number of EDA companies left for Synopsys to acquire, and the prediction that in a few more years designers will spend 100% of their time on verification.

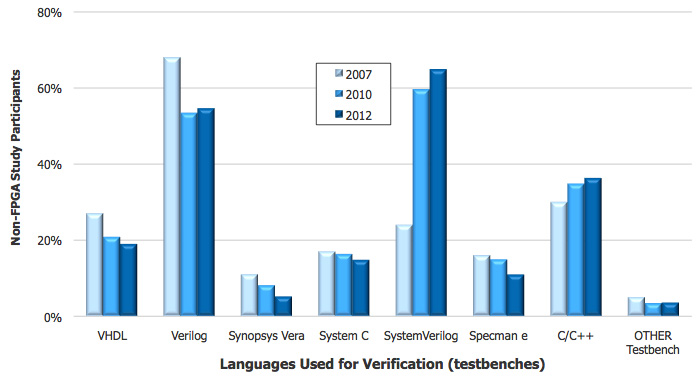

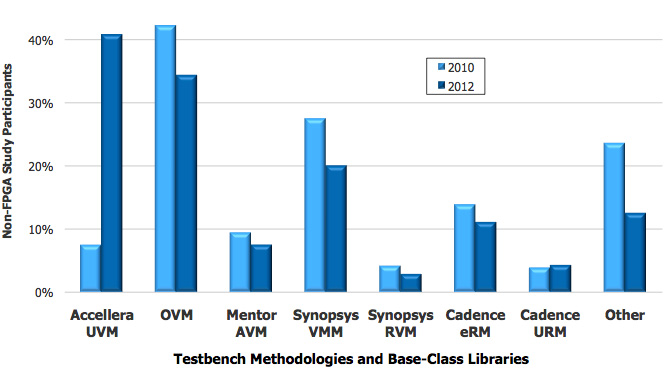

But then he had some more serious graphs. Mentor had commissioned a third-party survey examining trends in verification. This survey has been run under various auspices several times in the last decade. It was done blind: nobody who participated knew that Mentor was sponsoring it. The question about simulators showed Cadence, Mentor and Synopsys pretty much equal, which suggests the results were not biased towards Mentor’s own customers.

I’m not going to try to recap all the graphs here, but I will pull out a few trends that Wally highlighted. Or is that highlit?

The first trend is that standardization has come a long way, especially around SystemVerilog, which is almost universally used for large designs and, apart from a little use of C++, is the only language that isn’t shrinking.

Accellera UVM is also seeing huge growth at the expense of everything else, so the verification flow is becoming increasingly standardized.

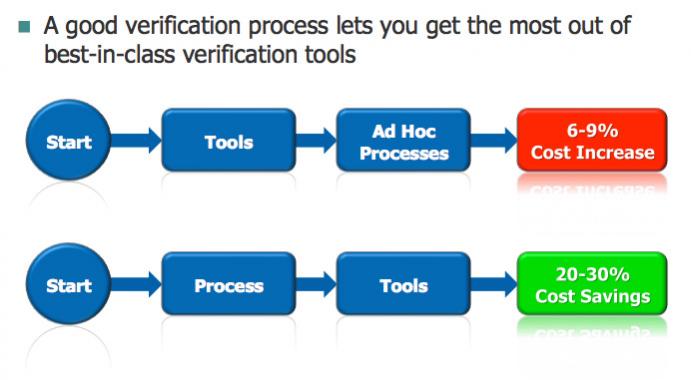

One thing that is clear is that adopting advanced verification methodologies and a structured approach to verification results in much higher productivity. The old way of buying some tools and then using them in an ad hoc way just doesn’t cut it: verification costs go up. Groups that have a good verification process, and the tools to support it, see their costs decrease.

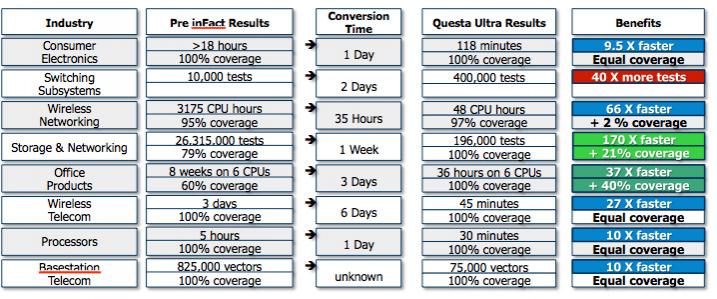

One particular area that has a huge impact is the intelligent test bench. It significantly reduces the redundancy inherent in constrained random. Last time Wally gave a keynote at DVCon he offered a deal: anyone could send him their verification suite, and if he didn’t get a 10X improvement then the software was free. The first design they got…an improvement of only 9.5X. Aargh. But every other design was much faster, some by as much as 60X.
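To get a feel for where the redundancy in constrained random comes from, here is a toy sketch in Python. It is not Mentor's tool or algorithm. It assumes a hypothetical coverage space of `num_bins` equally likely cases and compares purely random stimulus, which keeps re-hitting cases it has already covered, with an idealized generator that never repeats a case. All function names are made up for illustration.

```python
import random


def draws_until_covered(num_bins, rng):
    """Count uniformly random draws needed to hit every bin at least once."""
    seen = set()
    draws = 0
    while len(seen) < num_bins:
        seen.add(rng.randrange(num_bins))
        draws += 1
    return draws


def expected_random_draws(num_bins):
    """Coupon-collector expectation: N * (1 + 1/2 + ... + 1/N)."""
    return num_bins * sum(1.0 / k for k in range(1, num_bins + 1))


def systematic_draws(num_bins):
    """An idealized non-redundant generator visits each bin exactly once."""
    return len(set(range(num_bins)))


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so runs are repeatable
    n = 100
    trials = [draws_until_covered(n, rng) for _ in range(200)]
    print("bins to cover:           ", n)
    print("mean random draws:       ", sum(trials) / len(trials))
    print("expected random draws:   ", round(expected_random_draws(n), 1))
    print("non-redundant draws:     ", systematic_draws(n))
```

Even in this simplified model the random approach needs several times as many stimuli as there are cases, and the gap widens as the coverage space grows. Real test benches have constraints and non-uniform distributions, so the numbers here say nothing about the 9.5X or 60X figures above.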

Formal verification has also advanced. Partly this is down to more powerful capabilities, but it is also that the technique no longer requires a degree in rocket science to use. Focusing the technology on smaller applications, such as connectivity or unknown analysis, also makes formal approaches much easier to apply, with much faster run-times.

The next area Wally feels is ripe for some standardization is the system level. This is currently at the point where everyone is jockeying for position, but something might emerge around transaction-level models (TLM) used to drive both high-level synthesis and virtual platforms for software development.

Maybe he’ll be giving the keynote again in a couple of years and be able to report how system-level productivity has improved, driven by standards, better processes and more powerful tools.
