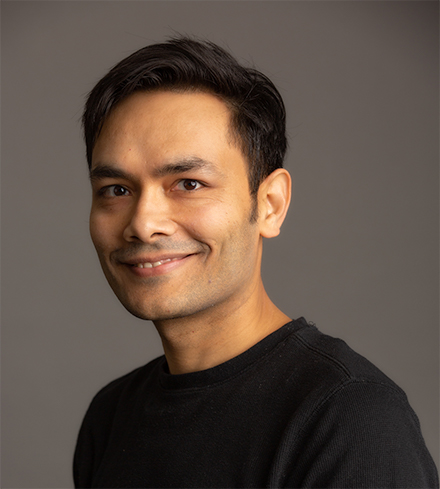

It was my pleasure to meet Veerbhan Kheterpal. Veerbhan has founded three technology companies and has full stack expertise spanning software to silicon across Edge & Datacenter applications. Currently, he is CEO & co-founder of quadric.io, a company that has created a new processor architecture for high performance on-device computing.

Prior to quadric.io, Veerbhan was a technical co-founder of 21, Inc, where he focused on bringing power-efficient ASICs to the cryptocurrency space. Veerbhan served in various roles ranging from designing custom ASICs (3 production chips in 18 months) to developing web scale blockchain backends & building consumer facing mobile apps. Prior to 21, Veerbhan co-founded Fabbrix, Inc, which was acquired by PDF Solutions. Fabbrix was focused on software that enabled design for manufacturability of complex Integrated Circuits. Veerbhan is an entrepreneur at heart and always looking for breakthroughs in technology, relationships and parenting.

What’s the backstory of quadric?

In 2016, we started building an agricultural robot that was going to transform first, vineyard management, and eventually, crop management of any kind — reducing costs, minimizing arduous tasks for humans, and maximizing crop returns. While this might seem like a lofty goal, I was working with two talented partners, and we had just built and shipped a very complicated technical product in the bitcoin computing space. With our combined technical backgrounds, and access to cutting edge technology, we had no doubt about our ability to build this first-of-its-kind robot that would transform the agriculture industry.

We had just one problem: we couldn’t make it work. I mean, we made it work: we wrote code, developed software, and designed and built hardware prototypes that functioned. But we soon came to realize these prototypes would never become the affordable, scalable, commercially viable products we had envisioned.

Why not? In short, the existing technology platforms built with CPUs and GPUs simply couldn’t deliver the compute performance and capacity we required within the available power footprint. This forced us to conduct a deeper inquiry into the power consumption of our software, which led us down a path of inventing a new processor architecture: one that generalizes the dataflow paradigm and delivers a higher level of power efficiency for a wide range of algorithms in Machine Learning, Computer Vision, DSP, Graph Processing & Linear Algebra.

What problem are you solving?

Quadric’s processor is built by developers for developers. Developers are creative beings. Just like we were attempting to get cutting edge algorithms to work on our robot, developers continue to push the envelope of algorithms in order to bring delightful experiences to everyday devices. Recent examples of these algorithms are driven by rapid research in AI models. Once they have developed their dream algorithm, they start pulling their hair out when trying to deploy it at scale. Existing computing solutions for deploying intensive workloads are either too power hungry (think GPGPUs) or too restrictive in capability (think AI chips/accelerators). Further, AI inferencing doesn’t run on its own. It is frequently accompanied by classical data processing steps, which require developers to include additional specialized hardware such as FPGAs/DSP chips. Besides the hardware complexity, this leads to additional software integration complexity. Quadric’s architecture makes it easy to ship high performance AI inference combined with classical data processing with a single software model.

Further, Quadric’s architecture is scalable, which means that if a certain power/performance combination does not fit your application, you can just pick a different size of our hardware while keeping the software the same.

How fast does on-device AI get deployed? What are the applications?

Over the past several years we have seen AI inference deployed at scale in the cloud. Examples such as recommendation engines and voice assistants come to mind. However, cloud deployment of inference has its limitations, primarily due to privacy or round trip latency. Because of latency, robotics, automotive, video game consoles & smart sensors have already deployed on-device compute solutions and are gaining volume with tremendous momentum.

More recently, we are seeing privacy driven requirements that need compute to move away from the cloud and be deployed on-device. Driven by privacy laws, the security industry is going through this transformation. This is driving the next generation of devices to include on-device inference capability.

If you step back and view AI as tomorrow’s “data driven” software as opposed to yesteryear’s “code driven” software, we are going to see a future with more than 80% of devices running some sort of deep neural network in the next 5 years.

What about on-device training of machine learning models?

Great question. Training at the Edge, or on-device training, has been very difficult so far due to the lack of specialized hardware and the diversity of processors at the Edge. As the next generation of devices gains specialized compute capabilities, it becomes feasible to perform limited amounts of training on the device itself. Now, the possibility of it alone doesn’t drive adoption – you need powerful drivers to move the market. We are seeing one such driver starting to effect this change, where a limited set of customers are looking for on-device hardware capability that allows them to run a portion of the AI training algorithm on the device itself. This is different from solutions like homomorphic encryption that also attempt to preserve privacy.

To summarize, it’s still early days of “training at the edge” but the driving forces are assembling and multiple solutions are being proposed.

The on-device inferencing market is super hot. How does quadric stand out?

Software and hardware scalability.

Most solutions in the AI chip space are focused on accelerating layers and topologies that are well known today. However, this means the developer is locked into the family of DNN architectures of today. AI is changing fast and still taking large innovative leaps. Because Quadric’s hardware is general purpose, our software can scale to any data parallel workload. This gives developers the superpower to ship any new algorithm, whether it is a new type of DNN layer or a domain transformation, without limitations.

Further, a single instance of Quadric’s architecture can scale from 200 milliwatts to 20 watts. This gives us scalability across multiple applications and makes it worthwhile for large customers to adopt Quadric’s solution. Quadric’s solution is also designed to deliver cutting edge performance while providing this level of software and hardware scalability.

The industry is moving towards using multiple domain specific accelerators. How does Quadric fit in?

Quadric has taken a general purpose dataflow approach to the high performance computing problem. Our secret sauce is in the co-design of our instruction set and the accompanying compiler software, which together optimize for simultaneous compute and data movement. Most others are taking a DNN specific dataflow approach that quickly becomes obsolete as AI models evolve. The key is to acknowledge that the full system requires AI inferencing that is accompanied by custom data preprocessing or post processing algorithms. Due to its general purpose nature, Quadric’s architecture can replace multiple types of accelerators (DSP, Vision, AI) with a single one. This leads to a win for our customers on several axes:

- 2x-3x Faster Software Integration

- 2x-3x Faster Hardware Integration

- 2x-5x Higher Performance/Watt

- 2x-3x Lower Latency

- 2x-3x Lower Cost

As an entrepreneur, what advice would you give someone founding a startup or thinking about starting one in the semi space?

The semiconductor space is relatively hard for startups. It requires the right mix of short term thinking while having an organic path to building a long term defensible moat. My advice to entrepreneurs is to think critically about the semiconductor product and its value proposition. Here are some questions that you want to answer:

- Am I creating enough short term value to be able to build my company while having a path to becoming a sizable enterprise? E.g. the biggest risk that investors perceive is the lack of a “defensible path to scaling beyond the first few design wins”.

- Is my product going to get easily commoditized after everyone realizes its value? E.g. first movers on bitcoin mining chips made profits which later disappeared due to intense competition that caught up quickly.

- How fast can competition catch up?

- Do I have a defensible moat even if a better product comes along?

Another key feature of valuable semiconductor companies is that they are not hardware companies! You have to think about software as early as possible in the game. At Quadric, we built the software before we built the hardware. We also consistently invested more than 70% of R&D capital in software. This strategy has worked well for us.
