I’ve talked before about Mentor’s work in high-level synthesis (HLS) and machine learning (ML). An important advantage of HLS in these applications is that it lets you very quickly adapt and optimize an architecture, and verify an implementation against its objective, in a highly dynamic domain. Design for automotive applications – for example an intelligent imaging pipeline such as you might find for object detection in a forward-facing sensor – presents all of these challenges.

Evolving Demands in Automotive Design

Certainly these designs can be on very tight deadlines; one example mentioned below required a team to develop three designs in a year. But two other constraints are even more challenging. First, verification suites are naturally based on images, often at 4K resolution, with 8- to 12-bit color depth and 30 frames per second. On top of this, ML inference test suites using images of this complexity can be huge, since correct detection in these applications needs to be near-foolproof.
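To put a rough number on that image volume, here is a back-of-envelope sketch in C++. The assumptions are mine, not from the white paper: "4K" is taken as 3840x2160, the color depth is taken as bits per sample, samples are tightly packed with no blanking or compression, and `raw_bytes_per_second` is a hypothetical helper name.

```cpp
#include <cassert>
#include <cstdint>

// Raw (uncompressed) video bandwidth in bytes per second.
// Assumptions: tightly packed samples, no blanking, no compression.
// samples_per_pixel is 3 for an RGB frame, 1 for a raw Bayer sensor frame.
std::uint64_t raw_bytes_per_second(std::uint64_t width,
                                   std::uint64_t height,
                                   std::uint64_t bits_per_sample,
                                   std::uint64_t samples_per_pixel,
                                   std::uint64_t frames_per_second) {
    assert(bits_per_sample > 0 && samples_per_pixel > 0);
    const std::uint64_t bits_per_frame =
        width * height * bits_per_sample * samples_per_pixel;
    // Multiply before dividing by 8 so no fractional bytes are lost per frame.
    return bits_per_frame * frames_per_second / 8;
}

// Example values under the assumptions above:
//   raw_bytes_per_second(3840, 2160, 12, 3, 30) == 1119744000  (about 1.12 GB/s)
//   raw_bytes_per_second(3840, 2160,  8, 3, 30) ==  746496000  (about 0.75 GB/s)
//   raw_bytes_per_second(3840, 2160, 12, 1, 30) ==  373248000  (raw Bayer case)
```

Under the 12-bit RGB assumption that is roughly 4 TB for a single hour of footage, which gives a feel for why simulating such suites at RTL is impractical.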

Second, the ecosystem from SoC developer to module maker to auto OEM has become much more tightly coupled, especially to meet the tighter requirements of ISO 26262 Part 2 and now also SOTIF (safety of the intended functionality, another emerging ISO standard). Part 2 and SOTIF demands have placed more burden on the value chain as a whole, from IP suppliers through SoC integrators to Tier1s and the automotive OEMs, to ensure that the final product can meet safety requirements. For example, Part 2 now requires a confirmation review to “provide sufficient and convincing evidence … to the achievement of functional safety”. This is a matter of judgment, not just meeting metrics; a Tier1 or a chip maker can require additional support from lower levels to meet that objective, which means that design specs can continue to iterate until quite late in the design schedule.

Under these constraints, RTL-based design flows would be impossibly challenging; there simply wouldn’t be enough time to experiment with enough architecture variations, verify over huge reference image databases, and respond to late-stage changes from Tier1s or OEMs by re-characterizing and re-verifying the design.

This is where HLS shines. You can develop code in C/C++ and experiment with architectures at least an order of magnitude more efficiently than you can at RTL, since these are algorithmic problems most easily represented in that format (or in MATLAB or the common ML frameworks, to which the Mentor HLS solutions can connect). You can also run verification on those giant datasets at this level, multiple orders of magnitude faster than RTL-based verification. (I believe this should even be faster than emulation, since C-modeling is close to virtual prototyping, which runs at near-real-time performance.) And in response to late changes, you can incorporate those changes at the C-level, then re-verify and re-synthesize pretty much hands-free, limiting the impact on your schedule.
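For a flavor of what "design at the C-level" looks like, here is a minimal sketch of one imaging stage (a 3x3 box filter) written in the style HLS tools generally favor: fixed-size arrays, statically bounded loops, no dynamic memory. This is plain C++ of my own, not Catapult-specific code and not taken from any of the customer designs; the frame size, the names `Frame`, `box_filter_3x3`, `count_mismatches` and `smoke_test`, and the border handling are all assumptions for illustration. A production flow would typically use bit-accurate datatypes and tool directives that I have left out.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>

// Hypothetical tiny frame for illustration; real frames would be far larger.
constexpr std::size_t kWidth = 8;
constexpr std::size_t kHeight = 6;
using Frame = std::array<std::array<std::uint16_t, kWidth>, kHeight>;

// 3x3 box filter. Border pixels are passed through unchanged (an assumption;
// a real ISP stage might mirror or clamp at the edges instead).
void box_filter_3x3(const Frame& in, Frame& out) {
    for (std::size_t y = 0; y < kHeight; ++y) {
        for (std::size_t x = 0; x < kWidth; ++x) {
            const bool border =
                (y == 0) || (y == kHeight - 1) || (x == 0) || (x == kWidth - 1);
            if (border) {
                out[y][x] = in[y][x];
                continue;
            }
            std::uint32_t sum = 0;  // wide enough for nine 16-bit samples
            for (std::size_t ky = 0; ky < 3; ++ky) {
                for (std::size_t kx = 0; kx < 3; ++kx) {
                    sum += in[y - 1 + ky][x - 1 + kx];
                }
            }
            out[y][x] = static_cast<std::uint16_t>(sum / 9);
        }
    }
}

// Regression helper: number of pixels where two frames differ.
std::size_t count_mismatches(const Frame& a, const Frame& b) {
    std::size_t mismatches = 0;
    for (std::size_t y = 0; y < kHeight; ++y) {
        for (std::size_t x = 0; x < kWidth; ++x) {
            if (a[y][x] != b[y][x]) {
                ++mismatches;
            }
        }
    }
    return mismatches;
}

// Tiny C-level testbench: a flat frame must come out of the filter unchanged.
void smoke_test() {
    Frame flat{};
    for (auto& row : flat) {
        row.fill(100);
    }
    Frame result{};
    box_filter_3x3(flat, result);
    assert(count_mismatches(flat, result) == 0);
}
```

The point of the sketch is that the same function serves both as the description you hand to synthesis and as the fast model you run over the image database, so a late spec change (say, a different kernel) is an edit to `box_filter_3x3` followed by a re-run of the same C-level regression.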

Case Studies

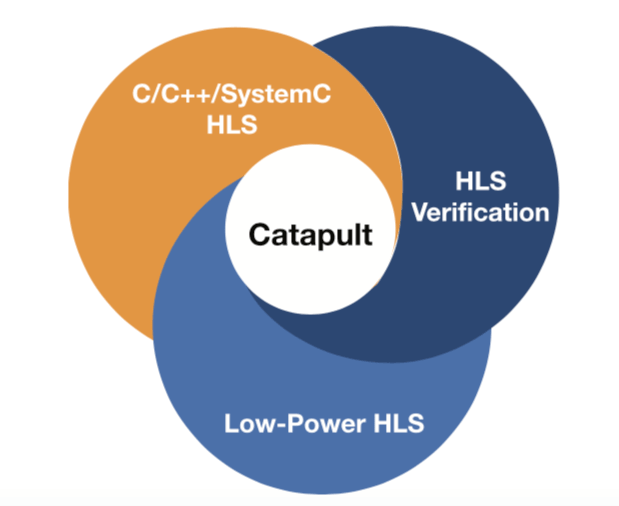

Mentor recently released a white paper (see below) on outcomes for three of their customers using their Catapult flow for designs in the automotive imaging pipeline. Bosch, a well-known mobility Tier1, are finding it valuable to enhance their own differentiation by building their own IPs and ICs for image recognition. This was the example where a design team had to produce three designs in a year. Using the Mentor flow they were able to pull this off and deliver a 30% power reduction, because they could easily experiment with and refine the architecture for power. They also commented that it will be much easier to migrate the C-based model to new designs and evolving standards than it would have been with an RTL model.

Chips and Media, a company providing hardware IP for video codecs, image processing and computer vision (CV), also used the Mentor flow to develop a new CV IP. This was their first time using the HLS flow and they ran an interesting experiment with two teams, one developing with HLS, the other with hand-coded Verilog. The Verilog team took 5 months to complete their work, with little experimentation on architecture, whereas the HLS team took 2.5 months. This was from a cold start – they had to train on the tools first, then develop the C code, synthesize and so on. Apparently they were also able to experiment quite a bit in this period.

Finally, ST are well-known for their image signal processing (ISP) products, commonly used in automotive sensors. They have seen comparable improvements in throughput for such designs, delivering (and this is pretty awe-inspiring) more than 50 different ISP designs in two years, ranging in size from 10K gates to 2 million gates. Try doing that with an RTL-based flow!

You can learn more about these user examples and more detail on the Catapult HLS flow HERE.
