Verification technologist Hemendra Talesara attended a conference in Austin and asked me to post this article on verification execution as a blog for him. I first met Hemendra when he worked at XtremeEDA; he now works at Synapse Design Automation, a design services company.

“In theory there is no difference between theory and practice, but in practice there is”

– Harry Foster

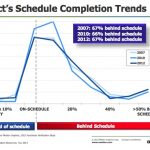

At a recent conference in Austin, I heard one of my favorite verification philosopher-scientists, Harry Foster, give a talk on how, in spite of advances in verification technology, methodology and processes, we are barely keeping up with Verification. Two thirds of projects are behind schedule, and this has held constant for the five-plus years for which they tracked the data. The good news is that this ratio has held in spite of increasing Verification complexity and shrinking schedules. (Harry cited different ways folks have looked at verification complexity, although a gold standard for complexity is still elusive; maybe Accellera will focus on it next.) The bad news is that, in spite of all the advances and maturation of tools, technology and processes, Verification Execution remains the number one headache for management.

Let’s look at it from another perspective. At least one third of projects are either on time or ahead of schedule.

A schedule slip just indicates the gap between planning and execution. Where is the gap?

Let’s acknowledge that there are indeed a few difficulties in planning a Verification Effort. In earlier days, Verification was not very distinct from design tasks and was well understood by design managers. But as design complexity has grown, Verification has become a lot more complex. Tools and technology have also become more sophisticated, and the skillset required to execute is becoming more diverse. Scoping, planning and leading a Verification Effort have become a lot more complex as well. Verification is consuming more resources. Harry extrapolates that, at the rate resources are being thrown at Verification, in twenty-five years the only thing designers will be doing is Verification :rolleyes:.

Indeed, part of the problem is that planning itself requires an understanding of what it takes to deploy these new technologies and the demands they place on skillset, resources, effort and so on. It requires a gauge of the complexity of the tasks at hand and a good understanding of new verification technologies. There are standard project management practices that can be employed for initial planning, scoping, scheduling and the like. The problem starts when plans reflect not reality but market constraints or management's wishful thinking.

“Realism is at the heart of execution, but organizations today are full of people who like to avoid or shade reality”

– Larry Bossidy and Ram Charan

Even if the plan did reflect reality, reality is constantly shifting. The impact of specification changes and the overall scope of the tasks that need to be dealt with are not always reflected in the plan. Perhaps Verification today demands more agility than a typical design task of earlier days.

Yes, there are difficulties, but even then at least one third of projects are finishing on time.

Finally, if we look at this slide from Harry, it appears that on average almost 36% of the time is spent on debugging. Now, if one must shade reality to please upper management, what is most likely to be cut short in the planning process? The not-so-predictable “debugging”, which mostly falls in the final phase of verification. This is the verification execution reality. Actual mileage for each project will vary. But does your typical plan actually reflect a similar distribution?

I invite you to share your experience and insight to help understand where we fall short on the plan. Where do we miss on execution? And when we ship on time, what is it that we do right? Are current project management practices adequate to deal with Verification issues?

I am looking forward to your thoughts and comments.

Hemendra Talesara
