Well, today I’m easing my way back in from vacation. We took a camper, 6 kids, and 1 wife with a bun in the oven, and saw the great USA: 17 states, roughly 5,500 miles. It was great fun and tiring at the same time. The Grand Canyon was a blessing, but I really enjoyed the Four Corners, where UT, CO, NM, and AZ all meet. I had each kid lie down so they got the chance to be in four states at the same time. Below is my pic. Now, the X formation is of course a tribute to the Mother Ship, Xilinx, and not to the fact that I was eXhausted.

While I was away, Xilinx published a very valuable document named “UltraFast Embedded Design Methodology Guide”. As you know, FPGAs have changed radically over the years: Xilinx has added ARM processors to them, and the resulting SoCs are named Zynq. You can very rarely just sit down and start banging out code for any serious design. A Zynq design takes planning and process/methodology like any other design, but with a few twists.

In the past, FPGAs were scary and were used and programmed only by hardware engineers. This has changed as well. Who is this guide for? The table below sums it up very well: it is for anyone who is using a Zynq SoC in their design.

The guide is broken down into seven chapters:

- Chapter 1: Introduction

- Chapter 2: System Level Considerations

- Chapter 3: Hardware Design Considerations

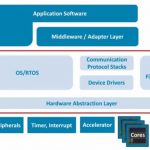

- Chapter 4: Software Design Considerations

- Chapter 5: Hardware Design Flow

- Chapter 6: Software Design Flow Overview

- Chapter 7: Debug

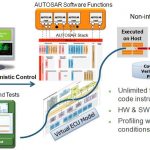

The most important chapter, in my opinion, is Chapter 2, System Level Considerations. This is where the trade space and bake-offs begin, to decide where functions/algorithms need to live. For example, say you have a million-point FFT that needs to be computed. The latency, power, and resource usage will determine whether this FFT resides in the programmable logic or runs on the ARM processor system.
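To put a rough number on the FFT example, here is a back-of-the-envelope sketch in Python. It is my own illustration, not something taken from the guide. It assumes “a million points” means 2^20 and a radix-2 FFT, which needs (N/2)·log2(N) butterflies. The throughput figures passed to `estimated_latency_s` are hypothetical placeholders, not measured Zynq numbers; plug in your own.

```python
def fft_butterflies(n_points: int) -> int:
    """Butterfly count for a radix-2 FFT: (N/2) * log2(N)."""
    # A power of two has exactly one bit set.
    if n_points < 2 or n_points & (n_points - 1):
        raise ValueError("n_points must be a power of two >= 2")
    log2_n = n_points.bit_length() - 1
    return (n_points // 2) * log2_n


def estimated_latency_s(n_points: int,
                        butterflies_per_cycle: float,
                        clock_hz: float) -> float:
    """Compute-only latency estimate; ignores memory stalls and setup."""
    return fft_butterflies(n_points) / (butterflies_per_cycle * clock_hz)


if __name__ == "__main__":
    n = 1 << 20  # assumption: "million point" taken as 2**20
    # Hypothetical candidates (placeholder throughput, NOT datasheet values).
    candidates = {
        "programmable logic": (4.0, 200e6),  # butterflies/cycle, clock in Hz
        "ARM processor": (0.25, 600e6),
    }
    print(f"{fft_butterflies(n):,} butterflies")
    for name, (per_cycle, clock) in candidates.items():
        ms = estimated_latency_s(n, per_cycle, clock) * 1e3
        print(f"{name}: about {ms:.1f} ms (compute only)")
```

This only covers compute latency. Power and resource usage need their own estimates before the bake-off is fair.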

The other important factor is data movement: where is the data going? Can it get there fast enough? As these exercises begin, many dominoes will fall. The guide devotes a lot of attention to memory and how it impacts the system, and as you know, memory is all-important.
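The “can it get there fast enough?” question can be sanity-checked the same way. The sketch below is again my own illustration, with hypothetical numbers: the sample size, link bandwidth, efficiency factor, and time budget are all assumptions you would replace with figures from your own system.

```python
def transfer_time_s(num_bytes: int,
                    bandwidth_bytes_per_s: float,
                    efficiency: float = 1.0) -> float:
    """Time to move num_bytes over a link, derated by an efficiency factor."""
    if bandwidth_bytes_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return num_bytes / (bandwidth_bytes_per_s * efficiency)


def fits_budget(num_bytes: int,
                bandwidth_bytes_per_s: float,
                budget_s: float,
                efficiency: float = 1.0) -> bool:
    """True if the transfer completes within the time budget."""
    return transfer_time_s(num_bytes, bandwidth_bytes_per_s, efficiency) <= budget_s


if __name__ == "__main__":
    # Assumption: 2**20 complex samples, 8 bytes each (two 32-bit words).
    payload = (1 << 20) * 8
    link = 1e9      # hypothetical 1 GB/s link, not a Zynq spec
    budget = 0.010  # hypothetical 10 ms budget
    t_ms = transfer_time_s(payload, link, efficiency=0.7) * 1e3
    verdict = "fits" if fits_budget(payload, link, budget, 0.7) else "misses"
    print(f"transfer takes about {t_ms:.2f} ms and {verdict} the budget")
```

If the transfer alone eats most of the budget, that is one of the dominoes: the function may have to move closer to where the data already lives.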

The bottom line is that a Xilinx Zynq SoC design is a team effort, and everyone must be willing to overlap and work together. Want to have a great low-power Xilinx Zynq design? The figure below really shows that everyone involved has an impact on power, whether you are a software engineer, hardware engineer, or system architect.

I believe the Xilinx Zynq series will be the best-selling FPGA family of all time. Download the guide and get started with Zynq today!