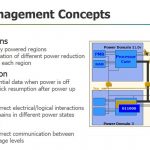

Power management is a perennial topic these days, and it came up in several presentations at the recent ARM Techcon in Santa Clara in mid-November. The techniques covered in these talks address both dynamic and static power consumption. The IEEE 1801 standard deals with specifying power design intent in the Unified Power Format (UPF) and typically covers these power saving methods: RTL clock gating, power gating, multi-voltage design and voltage/frequency scaling.

In HDL-based designs the UPF specification is kept in a separate file from the functional specification, which makes for a much cleaner design flow. Nevertheless, the UPF is used to alter the design implementation as it progresses. Each of the techniques above involves structural changes to the implementation. Clock gating affects the clock tree implementation. Power gating affects supply lines. Multi-voltage design and frequency scaling also require level shifting and clock domain crossings.

While each of these techniques is very effective, they change circuit operation, so a verification strategy is necessary to ensure proper operation of the design. I attended a talk by Ellie Burns from Mentor where she discussed using their Questa PASim, QuestaCDC, and Questa Formal to help with verification. Let’s look at the Mentor verification solution in more detail to understand how it can help avoid design failures due to flawed power reduction implementation.

The signals that pass between power domains need isolation so that signals from powered-down blocks do not cause spurious toggling on connected blocks. Toggling can consume power and cause data corruption. In many cases it is important to ensure that state information is retained when a block is powered off so that it can be restored when the block returns to operation. It is also essential that level shifters are inserted wherever there is a difference in operating voltage between connected blocks.
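To make the isolation and level shifter rules concrete, here is a minimal sketch in Python of a static check over a toy model of domain crossings. It is not how Questa or any UPF tool works internally; the `Domain` and `Crossing` classes, the domain names and the voltages are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Domain:
    """Hypothetical power domain used only for this illustration."""
    name: str
    voltage: float     # nominal supply voltage in volts
    switchable: bool   # True if the domain can be powered down


@dataclass(frozen=True)
class Crossing:
    """A signal driven from one domain and received in another."""
    source: Domain
    sink: Domain
    isolated: bool = False       # isolation cell present on the signal
    level_shifted: bool = False  # level shifter present on the signal


def check_crossing(c):
    """Return a list of rule violations for one domain crossing."""
    problems = []
    label = f"{c.source.name}->{c.sink.name}"
    # A signal driven from a domain that can power down needs isolation.
    if c.source.switchable and not c.isolated:
        problems.append(f"{label}: missing isolation")
    # Domains at different voltages need a level shifter between them.
    if c.source.voltage != c.sink.voltage and not c.level_shifted:
        problems.append(f"{label}: missing level shifter")
    return problems


# Assumed example design: a switchable CPU domain and an always-on domain.
cpu = Domain("cpu", 0.9, switchable=True)
aon = Domain("always_on", 1.1, switchable=False)
crossings = [
    Crossing(cpu, aon, isolated=True),       # isolated, but not level shifted
    Crossing(aon, cpu, level_shifted=True),  # source never powers down
]
violations = [p for c in crossings for p in check_crossing(c)]
print(violations)
```

In this toy design the first crossing is flagged for a missing level shifter, while the second passes because its source domain is never switched off. Retention checks are left out of the sketch.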

Beyond static checks like those above, there is a need to verify power up/down and reset/restore operations for each block and power domain. Because the system will have a number of power states, operation of the system in each state and each transition should be checked.
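The idea of checking every state and every transition can be sketched as a simple coverage calculation. The Python below is only an illustration under stated assumptions: two hypothetical switchable domains (`cpu`, `gpu`), each either ON or OFF, and legal transitions limited to one domain switching at a time. A real design would take its states from the UPF power state table rather than enumerating all combinations.

```python
from itertools import product

# Hypothetical switchable domains; each is either "ON" or "OFF".
DOMAINS = ("cpu", "gpu")


def all_states(domains=DOMAINS):
    """Every ON/OFF combination across the domains, as tuples."""
    return list(product(("ON", "OFF"), repeat=len(domains)))


def all_transitions(states):
    """Directed state pairs that differ in exactly one domain (an assumption)."""
    return {
        (a, b)
        for a in states
        for b in states
        if sum(x != y for x, y in zip(a, b)) == 1
    }


def uncovered(trace, states):
    """Transitions that the simulated trace never exercised."""
    visited = set(zip(trace, trace[1:]))
    return all_transitions(states) - visited


states = all_states()
# Assumed sequence of power states observed during one simulation run.
trace = [("ON", "ON"), ("ON", "OFF"), ("ON", "ON"), ("OFF", "ON")]
missing = uncovered(trace, states)
print(f"{len(missing)} of {len(all_transitions(states))} transitions not covered")
```

Even with only two domains there are eight directed transitions, and this short trace exercises just three of them, which is why automated coverage reporting matters as the number of domains grows.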

During her talk Ellie gave an overview of using Questa’s power aware simulation features to perform these verification operations. Questa has extensive support for IEEE 1801. It gives users a visualization of the power management structure and behavior, and can automatically detect power management errors. Lastly, it helps with test plan generation and with reporting power management coverage.

One observed failure that Ellie cited was a block resuming from a power down that required a signal from another block that turned out to be inactive as well. This prevented the block from properly resuming. Verification of all the state transitions would have caught this.
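A failure of this kind can be expressed as a dependency check on each transition. The following Python sketch is an assumption-laden illustration, not the method from the talk: the block names and the `WAKE_DEPS` table are hypothetical, and the rule assumes that everything a block needs for resuming must already be active before the transition starts.

```python
# Hypothetical wake-up dependencies: block -> blocks whose signals it
# needs in order to resume. Names are invented for illustration.
WAKE_DEPS = {
    "modem": {"pmu_bridge"},
    "pmu_bridge": set(),
}


def blocked_resumes(before, after, deps=WAKE_DEPS):
    """Find blocks that power up while a block they depend on is inactive.

    `before` and `after` map block name -> True if powered on.
    Assumption: a dependency must already be on before the transition.
    """
    blocked = []
    for block, needed in deps.items():
        resuming = not before[block] and after[block]
        inactive = sorted(d for d in needed if not before[d])
        if resuming and inactive:
            blocked.append((block, inactive))
    return blocked


# The modem tries to resume while the block it depends on is still off.
before = {"modem": False, "pmu_bridge": False}
after = {"modem": True, "pmu_bridge": False}
result = blocked_resumes(before, after)
print(result)
```

Running a check like this over every transition in the power state table would flag the stuck resume before it shows up in silicon.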

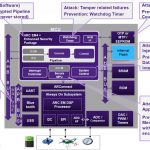

Mentor also offers Questa Formal for verifying that the hardware generates the correct power control signals in the correct sequence. Questa Codelink is also part of the power verification suite because power states are under software control.

The Questa power aware suite offers comprehensive verification for the sophisticated power management regimes that are required for mobile and power sensitive SoCs. It covers RTL and gate level verification. It allows full simulation of power state transitions and helps with debugging through power aware waveform viewing that shows isolation and retention information.