At NXP, we’re very excited about the prospects for our new i.MX 7 and 8 series of applications processors, which we’re manufacturing on 28nm FD-SOI. As noted in part I of this article series, the new i.MX 7 series, which leverages the 32-bit ARM v7-A core, is targeting the general embedded, e-reader, medical, wearable and IoT markets, where power efficiency is paramount. The i.MX 8 series leverages the 64-bit ARM v8-A series, targeting automotive applications, especially driver information systems, and well as high-performance general embedded and advanced graphics applications.

Choosing an FD-SOI solution gave our designers some specific tools that helped them to more easily and robustly deliver the features our customers are looking for. Here in part 2, we’ll look a little more deeply into the markets each of these chip families is targeting, and the role FD-SOI plays in helping us meet our specs.

i.MX 7 Series: IoT, wearables and so much more

Announced last June, the first members of our new 7 series — the i.MX 7Solo and i.MX 7Dual product families — will be hitting the market shortly. We’ve been shipping samples since last year, and the response has been tremendous. (You can read about the i.MX 7 IoT ecosystem we’re helping create for our customers here and support for wearable markets here.)

Our i.MX 7 customers are building products for power- and cost-sensitive markets. That of course includes a vast array of innovative IoT solutions and wearables, but also solutions for other parts of the embedded market like handheld point-of-sale (POS) and medical devices, smart home controls and industrial products. The i.MX 7 series also continues NXP’s industry leading support for the e-reader market via integration of an advanced, fourth-generation EPD controller.

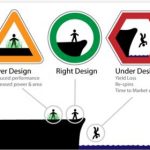

For all these markets, excellent performance is very important, but both dynamic and static power figures are really key. When you’re creating a system with power efficient processing and low-power deep sleep modes, you enable a new tier of performance-on-demand, battery-operated devices that are lighter and cheaper, and in a virtuous cycle require smaller batteries.

The next members of the NXP i.MX 7 series combine ultra-low power (dynamically leveraging the reverse back biasing you can do with FD-SOI) and performance-on-demand architecture (boosted when needed with FD-SOI’s forward back-biasing). It’s the industry’s first general purpose microprocessor family to incorporate both the ARM[SUP]®[/SUP] Cortex[SUP]®[/SUP]-A7 and the ARM Cortex-M4 cores (customers can choose between single or dual A7 cores). These technologies, together with our new companion PF3000 power management IC, unleash the potential for dramatically innovative, secure and power efficient end-products for wearable computing and IoT applications.

The initial offering of i.MX 7 was designed (on 28nm bulk) with Cortex-A7 cores operating up to 1 GHz, while the Cortex-M4 core operates at up to 200 MHz. The Cortex-A7 and Cortex-M4 achieve processor core efficiency levels of 100 microWatts (μW) /MHz and 70 μW /MHz respectively.

A Low Power State Retention (LPSR), battery-saving mode can be improved by FD-SOI and consumes only 250 μW, representing a 3x improvement over our previous generation (on 40nm bulk). That’s almost 50% better than our competitors. Plus it minimizes wake up times without requiring Linux reboot, while supporting DDR self-refresh mode, GPIO wakeup, and memory state retention.

The next members of the i.MX 7 series, with FD-SOI dynamic back-biasing, enable different blocks to be reverse or forward back-biased on the fly to attain always-optimal power savings or performance. Additional power optimization features are enabled to achieve leadership power efficiency. We’ve optimized FD-SOI dynamic back-biasing to enable performance-on-demand architecture through which the i.MX 7 series meets the bursty, high-performance needs (this is when forward back-biasing kicks in) of running Linux, graphical user interfaces, high-security technologies like Elliptic Curve Cryptography, as well as wireless stacks or other high-bandwidth data transfers with one or multiple Cortex-A7 cores.

When high levels of processing are not needed, low-power modes kick in with reverse back biasing of the critical subsystems, and the ongoing, real-time work is carried on by the smaller, lower powered Cortex-M4.

All things considered, it’s perhaps no surprise that we expect i.MX 7 series solutions for cost-sensitive markets to be a key driver of our long-term i.MX portfolio expansion.

i.MX 8: Revolutionizing automotive, interactive multimedia/display apps

Our new i.MX 8 series portfolio, based on 28nm FD-SOI process technology, targets highly-advanced driver information systems and other multi-media intensive embedded applications. It incorporates those same key attributes as the i.MX 7, but extends them into realms the industry has never experienced. We believe the i.MX 8 series is poised to revolutionize interactivity in multimedia and display applications across all kinds of industries.

i.MX 8 incorporates innovations in the processor — complex graphics, vision, virtualization and safety to help revolutionize interactivity for a wide range of uses in many, many markets. The capabilities of this family is broad, but one of the places it’s going to be the biggest game-changer is in what is becoming the e-cockpit of your car.

For almost two decades, SOI has shone in the embedded processing world. In addition, NXP counts every major automotive maker in the world amongst its customers for our devices. Entering the new e-cockpit frontier, 28nm FD-SOI is the logical choice in making the i.MX 8 series meet and exceed the stringent requirements of top automotive OEMs for years to come.

The i.MX 8 series leverages ARM’s V8-A 64-bit architecture in a 10+ core complex that includes blocks of Cortex-A72s and Cortex-A53s.

All the FD-SOI advantages discussed above for the i.MX 7 are also being brought to bear here (the power envelope for automotive designers being extremely strict). But in the hot and electrically noisy automotive environment, FD-SOI also plays an important role in ensuring robust operation.

The way we see it, your car’s multimedia centric e-cockpit will revolve around the i.MX 8, a single chip that drives all displays from infotainment to heads-up-displays (HUD) to instrument clusters. It’s optimized for the intelligent transfer of data and information management from multiple subsystems within the IC – as opposed to only delivering raw performance through one or two processing blocks.

For drivers and passengers alike, we’re looking at a very different world: one that includes the spread of advanced heads-up displays, intuitive gesture control, natural speech recognition, augmented reality, enhanced convenience and device connectivity. (I wrote a blog exploring the possibilities last fall – you can read it here.)

And of course, it will be secure from hackers, and fail-safe for critical systems.

From our customers’ standpoint, they can design a single hardware platform and scale it across multiple market segments with the unique approach to pin and software compatibility within the i.MX product families.

The i.MX family has been leveraged in over 35 million vehicles since it was first launched in vehicles in 2010. So with all these new features, and low-power and robust performance, we see a very bright future for FD-SOI and the i.MX 8 in automotive. It’s going to be a great ride.

By Ronald M. Martino, Vice President, i.MX Applications Processor and Advanced Technology Adoption, NXP Semiconductors