This week Cadence introduced Legato™ Reliability Solution, intended to address increased challenges in designing high-reliability analog and mixed-signal ICs for automotive, industrial, aerospace and defense applications.


Retooling Implementation for Hot Applications

It might seem I am straying from my normal beat in talking about implementation; after all, I normally write on systems, applications and front-end design. But while I’m not an expert in implementation, I was curious to understand how the trending applications of today (automotive, AI, 5G, IoT, etc.) create new demands on implementation, over and above the primarily application-independent challenges associated with advancing semiconductor processes. So, with apologies in advance to the deep implementation and process experts, I’m going to skip those (very important) topics and talk instead about my application perspectives.

An obvious area to consider is low power design. Automotive, mobile (phone and AR/VR) and IoT applications obviously depend on low power (even in a car, all the electronics we are adding can quickly drain the battery). AI is also becoming very power-critical, especially as it increasingly moves to the edge for FaceID, voice recognition and similar features. 5G on the edge, in enhanced mobile broadband applications for example, must be very carefully power managed thanks to heavy MIMO support and the consequent parallelism required to support high throughput rates.

Power is a special challenge in design because it touches virtually all aspects, from architecture through verification and synthesis, then through to PG netlist. Certainly you need uniformity in specifying power intent. The UPF standard helps with this but we all know that different tools have slightly different ways of interpreting standards. Mix-and-match flows will struggle with varying interpretations, to the point that design convergence can become challenging. The same could even be true within a flow built on a single vendor’s tools unless they pay special attention to uniformity of interpretation. So this is one key requirement in the implementation flow.

Another big requirement, associated certainly with automotive but also long-life, low-support IoT, is reliability. We demand very low failure rates and very long lifetimes (compared to consumer electronics) in this area. Implementation must take into consideration on-chip variation (OCV) in timing. I’ve written before about the impact of local power integrity variations on local timing. Equally, power-inrush associated with power switching and unexpectedly high current demand in certain use modes both increase the risk of damaging electromigration (EM). Traditional global margin approaches to manage this variability are already painfully expensive in area overhead. Better approaches are needed here.

Aging is a (relatively) new concern in mass market electronics. One major root-cause is negative-bias temperature instability (NBTI), which occurs when (stable) electric fields are applied for a long time across a dielectric (for example, when a part of a circuit is idle, or a clock is gated for long periods). This causes threshold voltages to increase over time, which can push near-critical paths to become critical. Again, it would be overkill (and too expensive) to simply margin this problem away, so you have to analyze for risk areas based in some manner on typical use cases.
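To make the "analyze for risk areas" idea a little more concrete, here is a minimal sketch of a use-case-based aging screen. Everything in it is an assumption for illustration: the `TimingPath` fields, the 5% `max_derate`, and above all the linear derate model, which is deliberately crude and not a physical NBTI model. It only shows the shape of the analysis: derate each path by how long it sits under static bias, then flag the ones whose slack goes negative.

```python
from dataclasses import dataclass


@dataclass
class TimingPath:
    name: str
    slack_ps: float       # fresh-silicon slack, picoseconds
    delay_ps: float       # fresh-silicon path delay, picoseconds
    idle_fraction: float  # share of lifetime under static bias (0..1), from use cases


def aged_slack(path: TimingPath, max_derate: float = 0.05) -> float:
    """Slack after a hypothetical aging derate that scales with idle time."""
    extra_delay = path.delay_ps * max_derate * path.idle_fraction
    return path.slack_ps - extra_delay


def at_risk(paths, max_derate: float = 0.05):
    """Names of paths whose slack turns negative once aging is applied."""
    return [p.name for p in paths if aged_slack(p, max_derate) < 0]


# Hypothetical paths; names and numbers are made up for the example.
paths = [
    TimingPath("cpu/alu", slack_ps=40.0, delay_ps=900.0, idle_fraction=0.1),
    TimingPath("pmu/wake", slack_ps=20.0, delay_ps=800.0, idle_fraction=0.9),
    TimingPath("dsp/mac", slack_ps=5.0, delay_ps=950.0, idle_fraction=0.5),
]

print(at_risk(paths))
```

The point of the sketch is that only the paths that are both near-critical and heavily idle get flagged, which is what lets you target fixes rather than margin the whole design.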

Thermal concerns are another factor and here I’ll illustrate with an AI example. As we chase the power-performance curve, advanced architectures for deep neural nets are moving to arrays of specialized processors with need for very tightly coupled caching and faster access to main memory, leading to a lot of concentrated activity. Thanks to FinFET self-heating and Joule heating in narrower interconnects this raises EM and timing concerns which must be mitigated in some manner.

Still on AI, there’s an increasing move to varying word-widths through neural nets. Datapath layout engines will need to accommodate this efficiently. Meanwhile, the front-end of 5G, for enhanced mobile broadband (eMBB) and even more for mmWave must be a blizzard of high performance and highly parallel activity, in order to sustain bit-rates of 10Gbps. For eMBB at least (I’m not sure about mmWave), this is managed through a multi-input, multi-output (MIMO) interface through multiple radios to basestations, therefore multiple parallel paths into and out of the modem. In addition, there is support for highly parallel processing from each radio into one or more DSPs to implement beamforming to identify the strongest signal. Getting to these data-rates requires very tight timing management in implementation, also very tight power management.

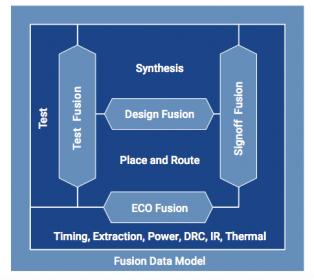

So yeah, I would expect implementation for these applications to have to advance beyond traditional flows. Synopsys has developed their Fusion Technology (see the opening graphic) as an answer to this need, tightly tying together all aspects of implementation: synthesis, test, P&R, ECO and signoff. The premise is that all these demands require much tighter correlation and integration through the flow than can be accomplished with a mix-and-match approach. Fusion Technology brings together all of Synopsys’ implementation, optimization and signoff tools and purportedly has demonstrated promising early results.

If you’re wondering about power integrity/reliability, Synopsys and ANSYS have an announced partnership, delivering RedHawk Analysis Fusion, an unsurprising name in this context. So they have that part covered too.

I picked out here a few topics that make sense to me. To get the full story on the Fusion technology, check out the Synopsys white-paper HERE.

5 Ways to Gain an Advantage over Cyber Attackers

Asymmetric attacks, like those in cybersecurity, benefit the aggressor by maintaining the ‘combat initiative’. That is, they determine who is targeted, how, when, and where attacks occur. Defenders are largely relegated to preparing and responding to the attacker’s tempo and actions. This is a huge advantage.

Awakening Business

The business world is beginning to understand some of the inherent aspects they face. Warren Buffett, the Oracle of Omaha and CEO of Berkshire Hathaway, has stated cyber attacks are the “number one problem with mankind” and explained how attackers are always ahead of defenders and that will continue to be the case.

Not all is lost of course, but we must be cognizant of the attacker’s strengths in order to form a winning defensive strategy. Cybersecurity is not just patching or an exercise in engineering, rather it is a much larger campaign that involves highly motivated, skilled, and resourced adversaries on a battlefield that includes both technology and human behaviors. If you only see cybersecurity as a block-and-tackle function, you have already lost.

Finding Rocks

Some believe it is imperative to spend inordinate amounts of time and resources attempting to find every possible weakness, so the vulnerabilities can be closed. This is a near impossible task that nobody has ever achieved. It is a sinkhole of resources that can consume everything put forward.

The truth about vulnerability scanning strategies is that most vulnerabilities are never exploited. Therefore, only a subset actually pose a material threat. Prioritization is key because it is a waste to commit security resources to threats that will never manifest. Just because something is possible does not mean it will occur.

Consequently, I think the act of trying to find ALL vulnerabilities is a failing strategy, as it will consume far too many resources and still likely result in a compromise. As Frederick the Great said, “In trying to defend everything he defended nothing”.

But that has not stopped the industry from travelling down this path. For many, it has become a rut of singular focus. The lure is that it seems like familiar territory, similar in nature to other information technology problems, and something which can be explained and partially measured. However, it is a mirage. Appearing just out of reach, it is easy to believe it is attainable. But no matter how far you walk, you never get there. Closing all vulnerabilities to be secure is a mentally satisfying theory, but in practice it is not achievable. The emergence of vulnerabilities is tied to complexity and innovation. As long as technology continues to rapidly innovate and become more complex, vulnerabilities will never cease and therefore the process of closing them is never finished. In the end, organizations risk participating in a war of attrition where defenders are consuming vastly more resources than the opposition in an endless cycle of vulnerability discovery.

I would postulate you don’t need to address all vulnerabilities equally. I am not advocating abandoning the search for weaknesses, as there is great value in the exercise, but there should be allowances, prioritization, and permissible tradeoffs! In fact, it must be one part of a greater effort for organizations to manage the cyber risks of their digital ecosystems. It should never be the sole focus of a security group.

Other plans can counter such threats, especially if you are creative and have certain insights…. If you know your enemy, it can suffice to know how they will maneuver. Consider this, a chess player does not need to know all the possible future combinations. Rather, they lay traps, see the board from their opponent’s perspective, and move with insights to how their opponent will likely act. You beat the player, not the chessboard pieces.

The best way to gain an advantage over cyber attackers is to establish a professional, efficient, and comprehensive security capability that will last and adapt to evolving risks. Defenders must minimize the strengths of their opponents while maximizing their own advantages. Strategic planning is as crucial as operational excellence.

5 Recommendations to Build Better Security:

1. Establish clear security goals, measures & metrics, and success criteria. Thinking you will stop every attack is unrealistic. Determine the optimal balance between residual risk, security costs, and productivity/usability of systems.

2. Start with a strategic security capability that encompasses Prediction (of threats, targets, & methods), Prevention (of attacks), Detection (of exploitations), and Response (to rapidly address impacts) in an overlapping and continuous improvement cycle. This reinforces better security over time and positions resources to align with the most important areas as defined by the security goals. Over time each area will improve to support the others and collaboratively contribute to a better overall sustainable risk management posture.

3. Understand your enemy. Threat agents have motivations, objectives, and methods they tend to follow, like water seeking the path of least resistance. The more you align your defenses to these, the more scalable and effective you become. Don’t waste precious resources on areas that don’t affect the likelihood, impact, or threats.

4. Incorporate both technical and behavioral controls. Do not overlook the human element of both the attackers and targets. Social engineering, as an example, is a powerful means to compromise systems, services, and environments, even ones that possess very strong technical defenses.

5. Maintain a vulnerability scanning capability (technical and behavioral) but prioritize the effort to identify and remediate those which pose the greatest risk. That includes the most likely avenues to be exploited and ones which can cause unacceptable impacts. Knowing that most vulnerabilities will never be leveraged, this is an opportunity to use resources effectively and reallocate them to other efforts where it makes more sense.
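The prioritization in the last recommendation can be sketched in a few lines. This is only an illustration, assuming a simple risk score of likelihood times impact; the `Finding` class, the scoring rule and every number below are hypothetical, and a real program would use whatever risk model the organization has agreed on.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    name: str
    likelihood: float  # estimated chance of exploitation, 0..1 (assumed scale)
    impact: float      # estimated cost if exploited, arbitrary units

    @property
    def risk(self) -> float:
        return self.likelihood * self.impact


def prioritize(findings, budget: int):
    """Return the `budget` highest-risk findings, highest first."""
    ranked = sorted(findings, key=lambda f: f.risk, reverse=True)
    return ranked[:budget]


# Made-up findings, covering both technical and behavioral weaknesses.
findings = [
    Finding("exposed admin portal", likelihood=0.6, impact=90),
    Finding("legacy print server", likelihood=0.05, impact=20),
    Finding("phishing-prone finance team", likelihood=0.4, impact=100),
    Finding("obscure kernel bug", likelihood=0.01, impact=80),
]

for f in prioritize(findings, budget=2):
    print(f.name, round(f.risk, 1))
```

With a budget of two, the effort goes to the admin portal and the phishing exposure. The two unlikely issues stay on the list but do not consume resources up front.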

The Long Game

As organizations approach cybersecurity challenges, they should consider a more balanced, prioritized, and proactive approach to managing cyber risks. They will get farther, faster, with fewer resources and more focus. This is not a sprint. As long as technology continues to innovate and be implemented, risks will continue to evolve.

In the end, strategic warfare outpaces battlefield tactics.

Interested in more? Follow me on your favorite social sites for insights and what is going on in cybersecurity: LinkedIn, Twitter (@Matt_Rosenquist), Information Security Strategy blog, and Steemit

The Rise and Fall of ARM Holdings

Publishing a book on the history of ARM was an incredible experience. In business it is always important to remember how you got to where you are today to better prepare for where you are going tomorrow. The book “Mobile Unleashed” started at the beginning of ARM (Acorn Computer) where a company went from a crazy idea a couple of engineers had for designing a processor from scratch to being a monopoly of processor cores controlling roughly 95% of the world’s mobile electronics today.

ARM book number two begins with the acquisition of ARM by SoftBank Group Corp, a Japanese multinational conglomerate holding company. Nobody saw this one coming and some people, including myself, still wonder why the sixth-largest telephone operating company (by total revenue of $74.7B) would buy a semiconductor IP company.

Unfortunately, under SoftBank, ARM profits are dropping due to aggressive expansion (headcount and R&D investment). The explanation from SoftBank is that ARM is being positioned to rejoin the public markets in five to seven years to become an even higher profiting company.

According to the recently released 2018 IP Design Report from IP-Nest, ARM royalties are up 17% but ARM licenses are down 6.8% which is a much more troubling trend for future royalty strength. New accounting practices can always be blamed but according to my information the problem is much more a case of a change in company culture and behavior. Compounding that is the rise of a disruptive ARM alternative and that is RISC-V which truly has hit phenomenon status.

We have been covering ARM since the beginning of SemiWiki in 2011 with 262 blogs published that have been viewed 1,617,437 times by 13,993 different domains. RISC-V is a recent addition to SemiWiki analytics and thus far we have published 9 blogs that have been viewed 148,047 times by 6,988 domains. You can expect expanded coverage of RISC-V on SemiWiki in the near future for sure. Disruption is coming to the CPU IP market and we will have a front row seat. Disruption is for the greater semiconductor good, absolutely!

You can read more about RISC-V HERE or you can go straight to the member page HERE.

One of the more interesting RISC-V developments is Intel’s investment in SiFive, a RISC-V implementer. SiFive was founded by the creators of the free and open RISC-V architecture to battle the escalating costs of chip design. One of those cost barriers of course is the upfront ARM licensing fee.

“We have long led the call for a revolution in the semiconductor industry, and believe SiFive, and our technologies, demonstrate a significant path forward for the industry,” said SiFive CEO Naveed Sherwani. “This investment by Intel Capital will enable SiFive to empower any individual or company to produce a silicon solution that meets their needs, quickly and affordably.”

“RISC-V offers a fresh approach to low power microcontrollers combined with agile development tools that have the potential to help reduce SoC development time and cost significantly,” said Raja Koduri, senior vice president of the Core and Visual Computing Group, general manager of edge computing solutions and chief architect at Intel Corporation. “SiFive’s cloud-based SaaS approach provides another level of flexibility and ease for design teams, and we look forward to exploring its benefits.”

The working title of the book is Rise and Fall of ARM Holdings but it can certainly change to Rise and Fall and Rise Again of ARM Holdings. We will have to wait and see how the CPU IP disruption unfolds over the next year or three so stay tuned.

TSMC Technologies for IoT and Automotive

At the TSMC 2018 Silicon Valley Technology Symposium, Dr. Kevin Zhang, TSMC VP of Business Development, covered technology updates for the IoT platform. The three growth drivers in this segment are TSMC low power, RF enhancement and embedded memory technology (MRAM/RRAM). They build on the progress and growth in global semiconductor revenue since 1980, which moved from the PC to the notebook, mobile phone, smartphone and eventually IoT. For the 2017-2022 period, a CAGR of 24% and shipments of 6.2B IoT end devices by 2022 are projected.


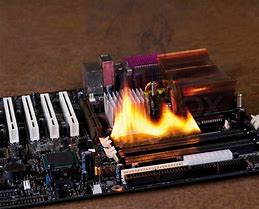

Webinar: Thermal and Reliability for ADAS and Autonomy

OK, so maybe the picture here is a little over the top, but thermal and reliability considerations in automotive in general and in ADAS and autonomy in particular, are no joke. Overheating, thermal-induced EM and warping at the board-level, in the package or interposers, are concerns in any environment but especially when you’re concerned about high-reliability, long lifetimes and nasty ambient temperatures. These can’t just be bounded to an on-die concern. The system as a whole has to meet reliability targets appropriate to the target ASIL rating and that means you have to look all the way from the enclosure and board down through regulators, to sensors and the SoCs in their packages. Calculating thermal profiles across this range and the potential impact on EM, stress and other factors, under a representative variety of use-cases and cooling strategies can be challenging. Join ANSYS to learn how you can manage this analysis using their range of tools spanning from the system to the die level and across multiple physics dimensions.

REGISTER HERE for this webinar on May 23rd, 2018 at 8AM PDT

Summary

Growing market needs for electrification, connectivity on the go, advanced driver assistance systems (ADAS), and ultimately autonomous driving, are creating newer requirements and greater challenges for automotive electronics systems. Automotive electronics, unlike consumer electronics, must operate in very harsh environments for extended periods of time. They must be safe and highly reliable, with a zero-field failure rate over a lifespan of 10 to 15 years. Thermal performance and reliability are critical for these high-power, intelligent electronics, which often include sensors and heterogeneous packaging systems.

Attend this webinar to learn how multiphysics simulations can address key reliability requirements for automotive electronics with thermal, thermal-aware electromigration (EM) and thermal-induced stress analyses across the spectrum of chip, package and system.

Speaker:

Karthik Srinivasan, Senior Corporate AE Manager, Analog & Mixed Signal Products, Semiconductor Business Unit, ANSYS Inc.

Karthik is currently working as a Senior Corporate AE Manager, Analog & Mixed Signal Products at the Semiconductor Business Unit of ANSYS Inc. His work focuses on product planning for analog/mixed signal simulation products and field AE support. His research interests include power estimation, power noise, reliability and thermal analysis for chip-package and system. He joined Apache Design Solutions in 2006 and has taken several roles as part of the field AE team. He received a B.S. in electronics and telecommunication engineering from the University of Madras, India, and an M.S. in electrical engineering from the State University of New York, Buffalo, in 2003 and 2005, respectively.

About ANSYS

If you’ve ever seen a rocket launch, flown on an airplane, driven a car, used a computer, touched a mobile device, crossed a bridge, or put on wearable technology, chances are you’ve used a product where ANSYS software played a critical role in its creation. ANSYS is the global leader in engineering simulation. We help the world’s most innovative companies deliver radically better products to their customers. By offering the best and broadest portfolio of engineering simulation software, we help them solve the most complex design challenges and engineer products limited only by imagination.

Machine Learning Drives Transformation of Semiconductor Design

Machine learning is transforming how information processing works and what it can accomplish. The push to design hardware and networks to support machine learning applications is affecting every aspect of the semiconductor industry. In a video recently published by Synopsys, Navraj Nandra, Sr. Director of Marketing, takes us on a comprehensive tour of these changes and how many of them are being used to radically drive down the power budgets for the finished systems.

According to Navraj, the best estimates that we have today put the human brain’s storage capacity at 2.5 Petabytes, the equivalent of 300 years of continuous streaming video. Estimates are that the computation speed of the brain is 30X faster than the best available computer systems, and it only consumes 20 watts of power. These are truly impressive statistics. If we see these as design targets, we have a long way to go. Nevertheless, there have been tremendous strides in what electronic systems can do in the machine learning arena.

Navraj highlighted one such product to illustrate how advanced current technology has become. The Nvidia Xavier chip has 9 billion transistors, containing an 8-core CPU and a 512-core Volta GPU, with a new deep learning accelerator that can perform 30 trillion operations per second. It uses only 30W. Clearly this is some of the largest and fastest commercial silicon ever produced. There are now many large chips specifically being designed for machine learning applications.

There is, however, a growing gap between the performance of DRAM memories and CPUs. Navraj estimates that this gap is growing at a rate of 50% per year. Novel packaging techniques and advances have provided system designers with choices though. DDR5 is the champion when it comes to capacity, and HBM is ideal where more bandwidth is needed. One comparison shows that dual-rank RDIMM with 3DS offers nearly 256 GB of RAM at a bandwidth of around 51 GB/s. HBM2 in the same comparison gives a bandwidth of over 250 GB/s, but only supports 16 GB. Machine learning requires both high bandwidth and high capacity. By mixing these two memory types as needed in systems, excellent results can be achieved. HBM also has the advantage of very high PHY energy efficiency. Even when compared to LPDDR4, it is much more efficient when measured in picojoules per bit.
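A small sketch shows why neither memory type is enough on its own. It uses the rounded figures quoted above (about 256 GB at 51 GB/s for the RDIMM, 16 GB at 250 GB/s for HBM2); the `MEMORIES` table, the `candidates` helper and the workload requirements are hypothetical and only there to illustrate the trade-off.

```python
# Rounded figures from the comparison quoted in the text.
MEMORIES = {
    "RDIMM with 3DS": {"capacity_gb": 256, "bandwidth_gbs": 51},
    "HBM2": {"capacity_gb": 16, "bandwidth_gbs": 250},
}


def candidates(need_capacity_gb: float, need_bandwidth_gbs: float):
    """Memory types that meet both requirements on their own."""
    return [
        name
        for name, m in MEMORIES.items()
        if m["capacity_gb"] >= need_capacity_gb
        and m["bandwidth_gbs"] >= need_bandwidth_gbs
    ]


# Hypothetical workloads: (capacity in GB, bandwidth in GB/s).
print(candidates(32, 40))    # capacity-bound
print(candidates(8, 200))    # bandwidth-bound
print(candidates(32, 200))   # needs both at once
```

The last case returns an empty list: a workload that needs both 32 GB and 200 GB/s is not served by either memory alone, which is the argument for mixing the two in one system.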

Up until 2015 deep learning was less accurate than humans at recognizing images. In 2015 ResNet, using 152 layers, exceeded human accuracy by achieving an error rate of 3.6%. If we look at the memory requirements of two of the prior champion recognition algorithms we can better understand the training and inference memory requirements. ResNet-50 and VGG-19 both needed 32 GB for training. With optimization they needed 25 MB and 12 MB respectively for inference. Nevertheless, deep neural networks create three issues for interface IP: capacity, irregular memory access and bandwidth.

Navraj asserts that the capacity issue can be handled with virtualization. Irregular memory access can have large performance impacts. However, by moving cache coherency into hardware overall performance can be improved. CCIX can take things even further and allow memory cache coherence across multiple chips – in effect giving the entire system a unified cache with full coherence. A few years ago you would have had a dedicated GPU chip with GDDR memory connected via PCIe to a CPU with DDR4. Using new technology, a DNN engine can have fast HBM2 memory and connect with CCIX to an apps processor using DDR5, with massive capacity. The CCIX connection boosts bandwidth between these cores from PCIe’s 8GB/s to 25GB/s. And, the system benefits from the improved performance from cache coherence.

Navraj also surveys techniques used for on-chip power reduction and for reducing power consumption in interface IP. PCIe 5.0 utilizes multiple techniques for power reduction. Both the controller and the PHY present big opportunities for power reduction. Using a variety of techniques, controller standby power can be reduced up to 95%. PHY standby power can be less than 100 uW.

Navraj’s talk ends by discussing the changes that are happening in the networking space and also talking about optimal processor architectures for machine learning. Network architectures and technologies are bifurcating based on diverging requirements for enterprise servers and data centers. Data center operators like Facebook, Google and Amazon are pushing for the highest speeds. The roadmap for data center network interfaces includes speeds of up to 800 Gbps in 2020, whereas enterprise servers are looking to optimize power and operate at 100 Gbps by then for a single lane interface.

The nearly hour-long video entitled “IP with Near Zero Energy Budget Targeting Machine Learning Applications” is filled with interesting information covering nearly every aspect of computing that touches on machine learning applications. While a near zero energy budget is a bit ambitious, aggressive techniques can make huge differences in overall power needs. With that in mind I highly recommend viewing this video.

Is there anything in VLSI layout other than “pushing polygons”? (9)

I moved from real layout work to management, so I had little or no “hands-on” layout in my responsibilities, but I was very close to my team’s challenges in all 5 locations. During my 13 years at PMC Sierra I was involved in many initiatives, some technical and some in developing relations with vendors. The biggest difference was that MOSAID was eager to get media coverage and PMC was very secretive about the tools or vendors it used. In this period I had to sign a lot of personal NDAs as software companies wanted to ensure their ideas were kept confidential.

It started with the integration of our microprocessor division from the former QED company. They were designing processors and the rest of PMC was designing SoCs. We were using a simple TSMC process and they were using a “fancy” high-end foundry that offered additional speed and had an easy process migration road-map. The task was to unify the PDK, the tools and the efforts in such a way that our teams could provide IP to microprocessors for fast interfaces and we could use their cores for our needs. In 90 nm we developed an internal LAMBDA process between the 2 flavours, the worst common denominator!!! A very challenging and interesting initiative that enabled me to use my Motorola experience. Working long distance with Sinan Doluca and his team was a challenging task but became a long-time friendship. As a processor is very dependent on the quality of Register Files, Multipliers and Adders, we started to work with vendors to find a Datapath-friendly tool.

Sycon had a silicon compiler for Datapath as they were coming from the Intel microprocessor group and CAD. They developed a new cell level solution to complement the initial block level software. I was a very close reviewer of all these developments and at one DAC the conference electrician had to wait until Jack Feldman finished showing me the “unreleased features” at 5 PM Thursday evening! That was exciting!!! At the same time Cadabra, who had a silicon compiler for cells, was trying to move up to block level but was not ready yet. I decided that the best option would be to work with Pulsic. They had a full custom/digital place and route solution that could add some Datapath options for microprocessor needs. I started to talk to Dave Noble and Jeremy Birch to add features like global signals pre-placement and device placement based on “pre-routes”. We managed to get the features implemented but by that time PMC decided to stick with a single vendor so we lost the opportunity to use Pulsic. However, the LAMBDA flow required a lot of DRC fixing so we got Sagantec SiFix at that time; see my previous article.

One of the big issues Cadence had was that users were reluctant to adopt new tools; most of them were using Virtuoso L, not even VXL. On top of VXL, Cadence bought a few companies (Neolinear, CCT, QDesign, etc.) with very powerful tools that had introduction issues. The main reason was the high introductory price. Each of the licenses with advanced features cost a lot of money and nobody was planning to use them for a very long time at once (CPU time)… For 2 hours of routing every week you needed to prove that a 150K tool increases productivity and that the gain can be quantified. After many discussions with Cadence I shared with them the token-based system, an idea coming from Michael Reinhardt, former CEO of Rubicad. You can pool together all the expensive licenses like VCP, VCR, VLM, MODGEN, etc. and call it … GXL. Each of these licenses now has an equivalent value in tokens. Let’s say 20 tokens for a global router, 30 for VLM, 6 for MODGEN. This way, if you think you know what the team needs, you purchase 60 tokens for a year and you can use at the same time (CPU controlled) 2 VLM licenses or 3 global routers or 10 MODGEN. Once you release the licence somebody else can reuse the tokens for their needs by calling different tools from the pool. I cannot claim that GXL was my idea, but I was closely involved with Mike Stroobandt and benefited in the following years, like many of you.
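For readers who have not used a token pool, here is a minimal sketch of the mechanics using the example values above (20 tokens for a global router, 30 for VLM, 6 for MODGEN, 60 tokens purchased). The `TokenPool` class and its method names are hypothetical and say nothing about how any actual license server is implemented.

```python
class TokenPool:
    # Token cost per tool, taken from the example in the text.
    COST = {"global_router": 20, "VLM": 30, "MODGEN": 6}

    def __init__(self, total: int):
        self.total = total
        self.in_use = []  # tools currently checked out

    def available(self) -> int:
        return self.total - sum(self.COST[t] for t in self.in_use)

    def checkout(self, tool: str) -> bool:
        """Grant the tool if enough tokens are free, otherwise refuse."""
        if self.COST[tool] > self.available():
            return False
        self.in_use.append(tool)
        return True

    def release(self, tool: str) -> None:
        """Return a tool's tokens to the pool for anyone to reuse."""
        self.in_use.remove(tool)


pool = TokenPool(60)
print(pool.checkout("VLM"), pool.checkout("VLM"))  # two VLM use all 60 tokens
print(pool.checkout("MODGEN"))                     # refused, pool is empty
pool.release("VLM")                                # 30 tokens come back
print(pool.checkout("global_router"), pool.available())
```

The same 60 tokens cover 2 VLM, 3 global routers or 10 MODGEN at once, so the team pays for peak concurrent use rather than for one expensive license per tool.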

Saying you want Electromigration tools is a good start but not enough. We did solve the issue in PMC in 2002 but this was a post layout solution and I wanted an “online as you go” option. I decided that the only way to make this topic popular was to socialise the concept with my friends in the industry and the software world. I built a short PPT and on each occasion I presented it to Synopsys, Cadence, Mentor, ClioSoft, etc… From sales people to architects, from technical marketing to implementation guys.

I even spoke with university professors to push it. One of them, Jens Lienig, a German professor presenting in DAC panels, provided me with his papers on Electromigration. Apparently I was not the only one asking for it, so Cadence’s Vinod Kariat and David White promised to do something about it; the problem they saw was finding capable drivers to take over such an initiative. I proposed my old friends Michael McSherry & Ed Fisher. Michael was the former marketing manager for Calibre and Ed was the former engineering manager for IC Station, now both in Cadence. To my surprise, at the following DAC I was invited into a booth and met both of them, ready to take on the challenge. We spent some time going over the idea and the basic features. I was interested to follow up but PMC did not agree to be part of Alpha or Beta testing so I did it from the sidelines… By the time the Electrically Aware Design, or as you know it EAD, was ready for public use I was out of PMC. I enjoy all the demos and new developments on this tool as I consider it revolutionary, improving design and layout in the full custom world in all technologies down to 7 nm.

During my PMC time I worked with many companies to help them bring new ideas to market. From ClioSoft Laker 4, to Cadence EAD, from Mentor Graphics Calibre CB to Pulsic Animate, I always tried to push forward automation to make layout designers’ work easier and less monotonous. I reviewed a lot of specifications, I was part of product reviews and demos “for your eyes only”, I had (and still have) a lot of fun.

I had endless debates with John Stabenow for complex features and demos (some in Cadence and some in Synopsys), Jeremiah Cessna for MODGEN with PCELLS or not, Olivier Arnaud for VXL grouping features, Philippe Hurat for advanced-node automation, etc. These names are only from Cadence and I have to thank Deana Spencer for enabling me to talk to a lot of internal architects and developers… I had similar talks with Mentor, Synopsys and many small startups to help them translate their vision into something we, the users, can relate to… Many times things moved forward or changed direction, sometimes they disappeared like Cadabra or Cosmos. I am sorry that Mar Hershenson did not succeed in bringing Barcelona Design or the next solutions to success. I considered it a good revolution in automating layout for “template circuits”. I was lucky to meet all these industry experts as my book was used in many cases to explain concepts or argue in flow debates.

We looked closely at Accelicon AVP with Mahesh Guruswamy's help and at Bindkey DRC cleaning tools with Micha Oren, but by the time we were ready to buy their tools the companies had been bought and everything got cancelled. The Mixed Signal Layout group liked automation so much that, with VCP + VCR, Stephane Leclerc rebuilt the ACPD flow in 65 nm and we prepared a paper for CDN Live in 2008.

By 2012 we had moved to a new technology node, so the mixed signal team decided to use automation to the maximum by using schematic-driven P & R. We needed a high-speed library for the digital parts of our analog blocks. We ended up with a “wish list” of almost 2000 cells. We had 2 options, by hand or automated, but as this had never been done at this level in PMC, the risk of effort/schedule overrun was big in both cases. Based on our Return On Investment (ROI) presentation, the manual solution was 4x the price of automation, so automation looked worthwhile. We used 2 layout designers, 1 CAD engineer and 1 circuit designer, and in 2.5 months created layouts for 2000 high-performance standard cells in 28 nm, running at speeds between 1.6 and 6.2 GHz in post-layout simulation. Special appreciation goes to Jens C. Michelsen, the COO of Nangate, who agreed to a short license “lease” and sent us a great trainer. In one week the team was able to tackle all the tool issues and we were able to produce. The team finished 15 days ahead of plan, and the final ROI, including the tool price, training and ramp-up, came out 5x cheaper than a hand-crafted library.

Another proof that automation, and not manual labour, is the future!

More to come… stay tuned for the Sankalp contributions…

Dan Clein

Don’t Balkanize Automotive Safety

The Wall Street Journal reported, last week, that auto makers are lining up on opposite sides of the talking cars debate. Some car makers – General Motors, Toyota and Volkswagen – are pushing Wi-Fi-based dedicated short range communication technology (DSRC) while others – Ford, Audi and BMW – are emphasizing 5G for the same application.

“5G Race Pits Ford, BMW, against GM, Toyota”

The importance of this debate derives from the fact that both DSRC and 5G technologies offer the prospect of direct device-to-device or car-to-car communications for the purposes of avoiding collisions using the same wireless frequencies but with incompatible protocols. Both approaches enable communications between cars and, potentially, between cars and infrastructure and between cars and pedestrians.

Not only are the two means of inter-vehicle connections incompatible, they both require infrastructure that does not exist today. Estimates for the cost of deploying a complete DSRC system in the U.S. run as high as $108B. Deploying 5G will be equivalently expensive for wireless carriers.

The difference in deployment scenarios boils down to Federal and state departments of transportation in the U.S. (and elsewhere in the world) having little or no available funds to stand up the required DSRC network. Meanwhile, wireless carriers, such as AT&T, have stepped forward to acknowledge that they are indeed prepared to deploy 5G technology more rapidly than they have ever deployed any previous technology.

In fact, wireless carriers are working with state regulators in the U.S. to receive permission to install the thousands of micro cells necessary to support 5G transmission. Many, if not most, of those micro cells will be installed along highways to support vehicle connectivity.

But the key to the talking car equation is the direct communications between vehicles that will enable collision avoidance applications along with the near instantaneous communication of road hazard information. Both DSRC and 5G technologies will enable these life-saving communications.

I emphasize the point because many automotive engineers are still skeptical that carriers will deploy wireless modules on their networks that will enable direct communications without network support or connectivity. The reality is that wireless carriers have been taking advantage of Wi-Fi technology to offload traffic for many years. Extending the functionality of Wi-Fi to vehicle safety applications is a small, but important and very real leap.

The bigger problem is the underlying conflict between the DSRC and 5G camps. Since DSRC and 5G share the same frequencies but cannot communicate directly, the intransigence of DSRC advocates is setting the stage for the balkanization of vehicle safety.

There is no organic demand for DSRC, beyond commercial vehicle applications successfully deployed and demonstrated by fleet market operators such as Veniam. DSRC is not found in mobile phones and has seen only limited deployment in roadside infrastructure thanks to a few dozen DOT-funded projects around the U.S. – a scenario very much like what has emerged in Europe.

Just as auto makers appear to be divided over the issue of DSRC support, state-level DOTs are similarly split. Seventeen of the 50 state-level DOTs in the U.S. sent a letter to the U.S. DOT earlier this year calling for the Federal agency to proceed with the DSRC mandate.

Letter from Coalition for Safety Sooner from individual U.S. DOTs

These states clearly appreciate the opportunity to tap into the flood of funding ($108B) necessary to support the deployment of DSRC. The remaining states are either indifferent to DSRC or ambivalent or out-and-out hostile. States such as Virginia have already determined that they will waste no more of their own funds on a technology, DSRC, that is on the verge of being bypassed by the more advanced 5G network.

In the end, divisions between car makers and DOTs threaten to balkanize automotive safety while unnecessarily contributing to the increased cost of deploying safety systems. The wireless network being deployed by the carriers such as AT&T and Verizon will deliver this new safety proposition – effectively extending the range of existing vehicle sensors while expanding their capabilities.

The only path to market for DSRC is via a Federal mandate. The mandate is expected to add $300 to the cost of a new vehicle without delivering any customer value proposition for another 10-20 years – i.e. the time it will take for DSRC to achieve sufficient penetration in the broader fleet of consumer vehicles.

At the meeting of the 5G Automotive Association two weeks ago in Washington, DC, the relief of attending DOT representatives in the room was palpable when an executive from AT&T detailed the company’s plans for 5G infrastructure investment. No such similar plan is on offer from the Federal government.

The best news for the DSRC side of the debate is that the estimated $700M spent thus far on DSRC development over the past 15+ years will not go to waste. The coding and standards and testing created for DSRC are now being applied to LTE-based C-V2X technology as well as 5G. C-V2X cellular networks will support PC5 mode 4 interfaces for direct vehicle communications. At the DC event Ford and Audi demonstrated collision avoidance maneuvers using C-V2X.

It’s time for car makers and DOTs to align on the implementation and deployment of 5G technology and abandon the expensive distraction known as DSRC. DSRC will preserve its relevance in the fleet industry and maybe for emergency response vehicles.

Safety systems in cars are expensive enough without requiring auto makers to simultaneously support two incompatible wireless safety systems. DOTs, too, are insufficiently funded to support two paths to saving lives.

Worldwide Design IP Revenue Grew 12.4% in 2017

When starting SemiWiki we focused on three market segments: EDA, IP, and the Foundries. Founding SemiWiki bloggers Daniel Payne and Paul McLellan were popular EDA bloggers with their own sites and I blogged about the foundries so we were able to combine our blogs and hit the ground running. For IP I recruited Dr. Eric Esteve who had never blogged before but he took to it quite quickly. I knew Eric from his IP reports at my previous position working with the foundries at Virage Logic.

Since going online in 2011 SemiWiki has published 693 IP related blogs with 3,572,124 views. Eric has written 277 of those blogs averaging close to 6,000 views per blog. Today Eric is by far the most respected IP analyst with the most detailed and accurate reports and it is an honor to work with him, absolutely.

According to the Design IP Report from IPnest, the market is still doing very well, with YoY growth of 12.4% in 2017. The ARM Group of Softbank (previously known as ARM Holdings) is again a strong #1 with IP revenues (licenses plus royalties) of $1,660 million and 46.2% market share, followed by Synopsys growing by 18% to $525 million and 14.7% share. Broadcom, being the combination of Avago + LSI Logic + Broadcom, is making an entry in the top 3, replacing Imagination. Both Cadence and CEVA are showing 20%+ growth in 2017.

IPnest has defined 11 categories ranking IP vendors. The CPU IP category is the largest with about 42% of revenues from design IP. There are strong disparities between CPU, DSP, and GPU & ISP as the weight of the CPU category is about 9x the DSP and 5x the GPU/ISP.

ARM is obviously #1 in the CPU category, and will probably keep this position for a long time due to the royalty mechanism. Nevertheless, we can see that ARM CPU IP license revenue has declined by 6.8% YoY, more than compensated by the royalty revenue growing by 17.8%. The reasons may be multiple. After the ARM acquisition by SoftBank, the accounting policy was changed creating what Eric calls an “artifact”. However, in my opinion we are starting to see the impact of RISC-V becoming a credible alternative to the ARM CPU hegemony. The 2019 Design IP report should confirm this.

In the Processor group (CPU + DSP + GPU & ISP), Imagination Technologies (IMG) is still #2, but I expect their royalty revenue to collapse when Apple effectively moves to an internal GPU solution. Now, if we consider the followers, both CEVA and Cadence grew by more than 20% in 2017, so it wouldn’t be surprising to see one of these two companies becoming the #2 next year. In my opinion it will be CEVA, but in any case the ranking in the Processor group will be disrupted next year.

The next group after Processor is the Physical IP, including: Wired Interface IP, SRAM memory compiler, other Memory Compilers, Physical Libraries, Analog and Mixed-Signal and Wireless Interface IP.

If we look at the Wired Interface IP category, it’s now at $735 million (20% YoY growth) and 20.5% of the total. Synopsys is the clear leader with about 45% market share, as well as in the Physical IP group with 35% market share.

If you take a look at the picture above you can see an interesting trend: from 2016 to 2017, Wired Interface IP as a portion of the total moves from 19% to 20.5%, while Processor IP declines from 58.3% to 56.4%.

As forecasted by IPnest in the “Interface IP Survey & Forecast”, the Wired Interface IP should reach $1 billion in a few years (2021 or 2022). Even though ARM is giving up in China through a so-called “joint venture”, the Wired Interface IP category will remain an island of (growing) stability in the 2020s.

Increasing complexity: can you imagine that the DDR5 memory controller PHY is now running at 4400 Mb/s? This is also 4.4 Gb/s or almost the PCIe Gen-2 data rate (5 Gb/s), which was one of the IP stars 10 years ago.

Eric chaired a panel at DAC 2017, “Growing IP market despite semi consolidation”, and the panel came to a consensus: the IP market in 2010-2020 is like the EDA market in 1980-1990. Outsourcing was the rule, and the result was an EDA market completely externalized by 2000. As long as a function is not perceived as a differentiator by a design team, it can be outsourced and it will become an IP sold by commercial vendors (see the Top 10 list).

Bottom line: If you apply a 12% CAGR for the next 5 years you can easily predict a $6 billion IP market in 2022.
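As a rough check on that projection, here is a minimal sketch of the arithmetic. It assumes the 2017 market total can be inferred from ARM's reported revenue ($1,660 million) and market share (46.2%) quoted above; the implied total is an estimate, not a figure taken from the report, and the function names are purely illustrative.

```python
def implied_total(revenue_musd: float, share_pct: float) -> float:
    """Infer the total market size (in $M) from one vendor's revenue and share."""
    return revenue_musd / (share_pct / 100.0)


def project(value: float, annual_rate: float, years: int) -> float:
    """Compound a starting value at a fixed annual growth rate (CAGR)."""
    return value * (1.0 + annual_rate) ** years


# Assumption: the 2017 total is derived from ARM's $1,660M at 46.2% share.
total_2017 = implied_total(1660.0, 46.2)

# Apply the 12% CAGR from the text for 5 years (2017 to 2022).
total_2022 = project(total_2017, 0.12, 5)

print(f"Implied 2017 design IP market: ${total_2017:,.0f}M")
print(f"Projected 2022 design IP market: ${total_2022:,.0f}M")
```

Under these assumptions the implied 2017 total is roughly $3.6 billion, and five years of 12% growth lands a little above $6 billion, consistent with the prediction.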

To buy this report or talk to Eric, you can contact him at eric.esteve@ip-nest.com. He will also be at DAC again this year if you want to meet him.