I previously wrote a blog post about a session from Day 1 of the AI Hardware Summit, held just last week at the Computer History Museum in Mountain View, CA. From Day 2, I want to delve into a presentation by Bryan Bowyer, Director of Engineering, Digital Design & Implementation Solutions Division at Mentor, a Siemens Business. The conference brought together many companies involved in building artificial intelligence and machine learning hardware solutions. Naturally, there were several discussions around AI software and applications as well. Day 1 of the conference was more about solutions in the data center, whereas Day 2 was primarily about solutions at the Edge.

Most solutions at the Edge have power restrictions. Many are battery-powered or energy-harvesting devices such as remote cameras, robots, cell phones, and many other sensor-carrying devices. A different class of edge devices, such as smart appliances, raises power concerns for a different reason: they are always on. Higher power also typically means more heat to dissipate, which is another reason to prefer lower power. At the Edge, power is critically important.

Designers have been given many new tools and techniques for low power over the past few years. Some come from process technology and the power savings achieved by moving to new process nodes, although these savings have become less dramatic with each node. There are also circuit innovations, such as new memory techniques. The biggest savings, however, will come from architectural decisions, for two important reasons: (1) if you can find a more efficient algorithm, you can save energy; and (2) if you can partition the system into blocks so that large parts of it can be turned off when they are not in use, you can also save substantial power. The challenge is how best to achieve this.
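To make the second point concrete, here is a rough back-of-the-envelope sketch in C++ of how much average power a block draws when it is busy only part of the time. The `BlockPower` struct, the `average_power_mw` function, and the numbers in the usage note are all made up for illustration; they do not come from the presentation or from any real device.

```cpp
#include <cassert>

// Hypothetical power figures for a single hardware block, in milliwatts.
struct BlockPower {
    double active_mw;  // power while the block is doing useful work
    double idle_mw;    // power while powered and clocked but idle
    double gated_mw;   // residual leakage while the block is switched off
};

// Average power when the block is busy for a fraction `duty` of the time.
// With `gated` set, the block is assumed to be switched off whenever it is
// not busy; otherwise it sits idle at `idle_mw`.
inline double average_power_mw(const BlockPower& b, double duty, bool gated) {
    assert(duty >= 0.0 && duty <= 1.0);
    const double off_mw = gated ? b.gated_mw : b.idle_mw;
    return duty * b.active_mw + (1.0 - duty) * off_mw;
}
```

With illustrative values of 100 mW active, 20 mW idle, and 1 mW gated, a block that is busy 10% of the time averages about 28 mW when left idling and about 10.9 mW when it can be switched off. The lower the duty cycle, the more the architecture's ability to turn blocks off dominates the total.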

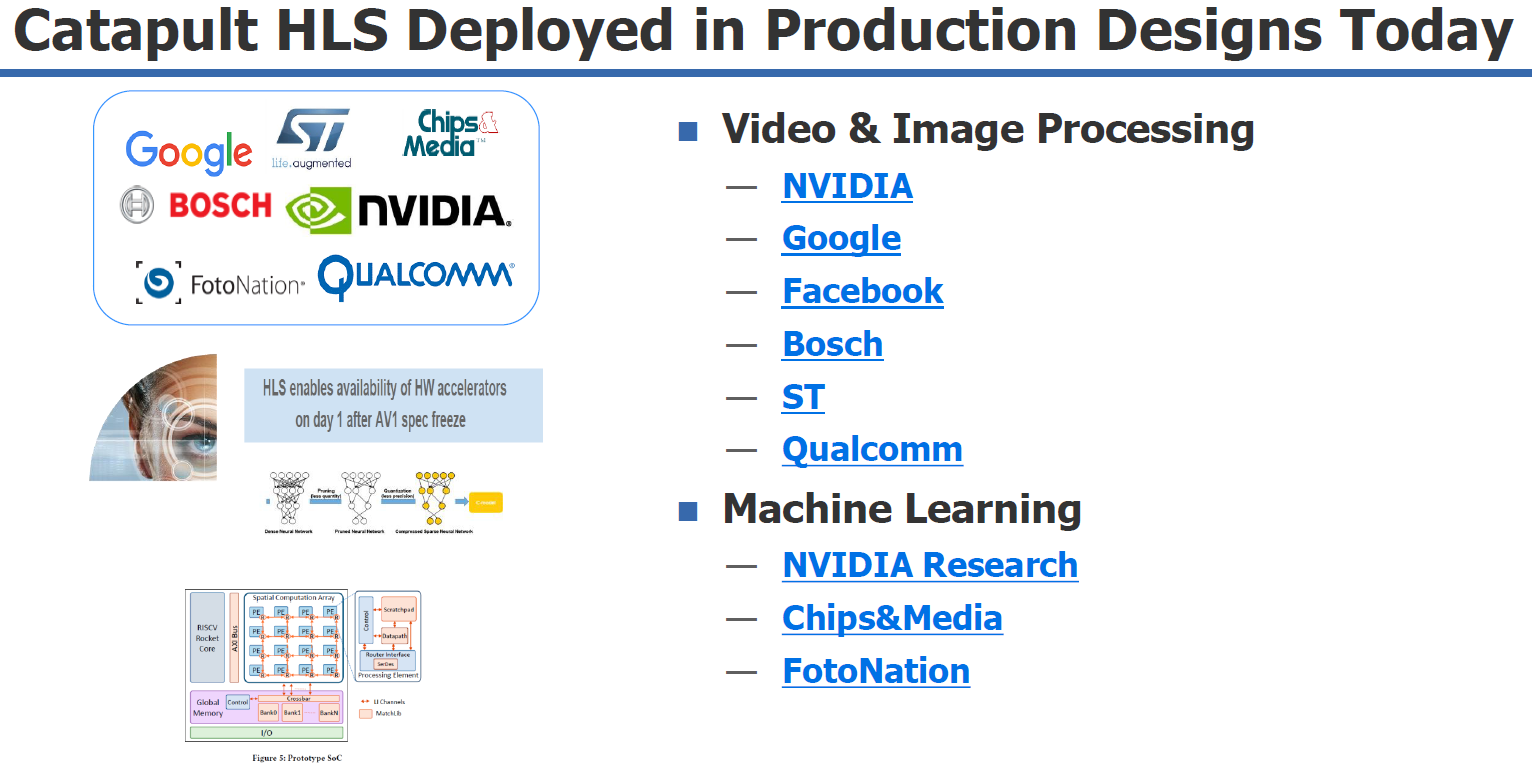

To explore the algorithmic space, you need to be able to work at a sufficiently high level to make a difference. By far the simplest way to do this is with high-level synthesis (HLS). Companies are doing this today, particularly in the areas of video and image processing and in machine learning. HLS can be applied to ASIC or FPGA design. It can even allow late functional changes without severely impacting the project schedule. Mentor’s Catapult HLS has been deployed by many top companies in this area, as shown in the diagram above. You can get more information on Catapult HLS here.

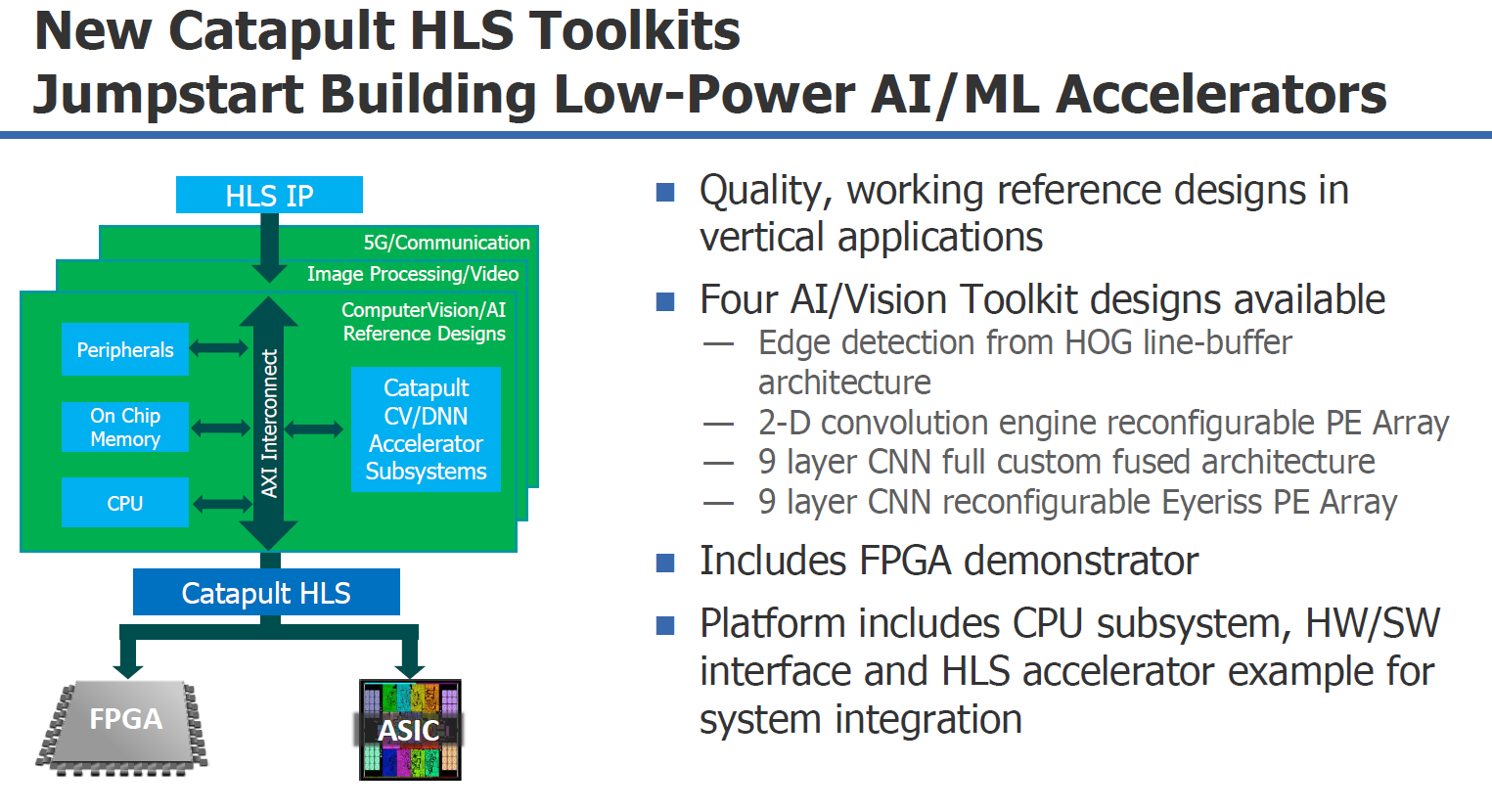

One of the significant challenges of building custom hardware solutions is exploring multiple architectural choices to find the best combination of power, performance, and area (PPA). One aspect of this is estimating the impact of different levels of precision at different stages of the design. Reducing accuracy in an earlier stage may have little impact on the final result, yet greatly reduce power or area. Exploring these architectural choices in RTL is impractical. Instead, designers are turning to high-level synthesis (HLS) to survey these custom solutions. HLS provides several high-level optimizations, such as automatic memory partitioning for the complex memory architectures needed by the processing element (PE) array, interface synthesis of AXI4 memory master interfaces for easily connecting to system memory, and synthesis of arbitrary-precision data types for tuning the precision of the multiple hardware architectures. Since the source language for HLS is typically C++, the design can easily plug back into the deep-learning framework where the network was created, allowing verification of the architected and quantized network.
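As a minimal sketch of what exploring precision at the C++ level can look like, the snippet below emulates signed fixed-point quantization in plain C++ and applies it to a dot product, the core operation of most neural-network layers. It is not Catapult code: a real HLS flow would use the tool's arbitrary-precision fixed-point types rather than `double`, and the names `quantize` and `quantized_dot` are invented for this illustration.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Emulate a signed fixed-point value with `total_bits` bits overall, of which
// `frac_bits` are fractional: round to the nearest representable step and
// saturate on overflow. The result is returned as a double for comparison.
inline double quantize(double x, int total_bits, int frac_bits) {
    assert(total_bits > 1 && total_bits <= 32);
    assert(frac_bits >= 0 && frac_bits < total_bits);
    const double scale = std::ldexp(1.0, frac_bits);               // 2^frac_bits
    const double max_code = std::ldexp(1.0, total_bits - 1) - 1.0; // largest code
    const double min_code = -std::ldexp(1.0, total_bits - 1);      // smallest code
    double code = std::round(x * scale);
    if (code > max_code) code = max_code;
    if (code < min_code) code = min_code;
    return code / scale;
}

// Dot product in which activations and weights are quantized before each
// multiply. The accumulator is kept at full precision in this sketch.
inline double quantized_dot(const std::vector<double>& activations,
                            const std::vector<double>& weights,
                            int total_bits, int frac_bits) {
    assert(activations.size() == weights.size());
    double acc = 0.0;
    for (std::size_t i = 0; i < activations.size(); ++i) {
        acc += quantize(activations[i], total_bits, frac_bits) *
               quantize(weights[i], total_bits, frac_bits);
    }
    return acc;
}
```

Calling `quantized_dot` with several bit widths and comparing each result against the unquantized dot product gives a quick read on how much accuracy a given stage loses at each width. That is the kind of trade-off that is cheap to sweep in C++ and expensive to sweep in RTL.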

For those working on AI/ML accelerators, Mentor has made it even easier to get started by providing Catapult HLS Toolkits. There are currently four toolkits available, as described in the figure above. These toolkits seem especially well suited to AI/ML designs in Edge devices. Mentor’s participation in the AI Hardware Summit, the number of customers already using Catapult HLS in production, and the release of toolkits specifically targeting the designs people need for AI and ML have convinced me that Mentor is quite serious about this area and that designers working in it should consider these tools.

Below are some additional resources on the Mentor website about this topic:

Chips&Media: Design and Verification of Deep Learning Object Detection IP

NVIDIA Case Study on High-Level Synthesis (HLS)
