It was inevitable that EDA applications would meet the cloud. EDA has a long history of creating some of the most daunting compute challenges, because current-generation chips are used to design next-generation chips. Despite growing design complexity, many tools have kept pace and have even reduced runtimes from one process technology generation to the next.

Mentor’s Calibre is a good example of this, with annual performance improvements of 25%. Thanks to these gains, Calibre has kept up with the doubling of transistor counts from one node to the next. Naturally, designers are glad that turnaround time has held steady over the years. This has been accomplished through foundry-assisted rule deck optimization, runtime memory reductions, core engine improvements, and the addition of parallel operations. However, sometimes standing still is not enough.

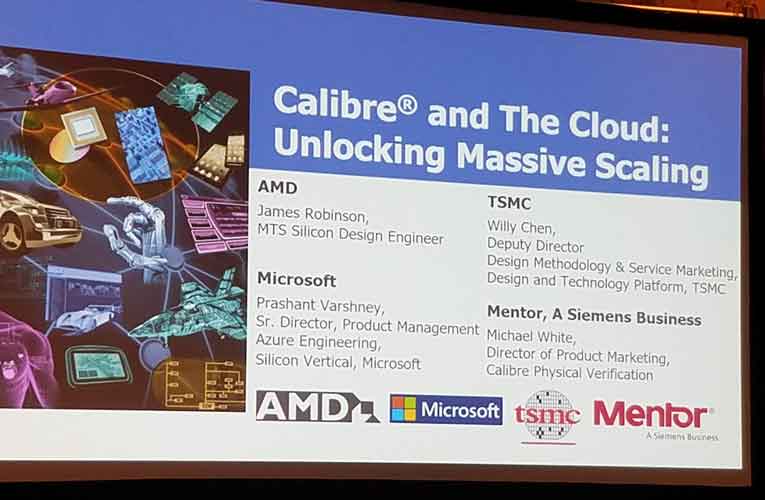

The cloud presents a huge opportunity to obtain absolute gains in throughput that cannot be realized in any other way. However, it is not as simple as launching a few cloud CPU instances and running tools. At the 2019 DAC in Las Vegas, Mentor hosted a four-way presentation about its cloud solution for Calibre. There is no doubt that DRC runtimes can be a bottleneck during the final stages of chip design prior to tapeout. This, combined with the accompanying dataset size and computational complexity, makes DRC an ideal candidate for cloud-based improvements.

Besides Mentor, the participants in the presentation were TSMC, Microsoft and AMD. Each of them played a key role in the development of the cloud solution for Calibre. Mentor has made many changes to Calibre to improve its efficiency in the cloud. Michael White, Calibre Physical Verification Product Marketing Director, talked about how they worked to make launching cloud-based runs more transparent by making architectural changes to the way jobs are scheduled and data is transferred to the cloud. Because the cloud can provision extremely large numbers of processor threads, Mentor has exploited every avenue to increase parallelization.

Next, we heard from TSMC’s Willy Chen, Deputy Director of Design Methodology & Services Marketing for their Design and Technology Platform. He explained that, in the 10th anniversary year of the TSMC Open Innovation Platform (OIP), TSMC worked with Mentor on the “Calibre in the Cloud” project. As part of this, Mentor has joined the OIP Cloud Alliance. This includes a cloud certification process, which focuses on security and creates a legal framework for all the necessary parties to work together.

For the certification process, they ran Calibre on a TSMC N5 test chip. This design has 500M gates and a 17 GB GDS file. The runtime was reduced from 24 hours to 4 hours, largely because 1024 CPUs could be applied efficiently, compared to a baseline of 256.
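As a rough check on these figures, the speedup and how it compares with the increase in CPU count can be worked out directly. This treats the 256-CPU run as the baseline and assumes both runs used the same design and rule deck; the symbols $S$, $E$, $T$ and $N$ are introduced here for illustration only.

```latex
S = \frac{T_{256}}{T_{1024}} = \frac{24\ \text{h}}{4\ \text{h}} = 6,
\qquad
\frac{N_{1024}}{N_{256}} = \frac{1024}{256} = 4,
\qquad
E = \frac{S}{N_{1024}/N_{256}} = \frac{6}{4} = 1.5
```

A relative efficiency $E$ above 1 means the runtime fell faster than the CPU count grew, so the extra CPUs alone may not account for the entire gain; other factors, such as the Calibre changes Mentor described, could plausibly have contributed.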

Prashant Varshney, Senior Director, Product Management Azure Engineering at Microsoft, spoke about how they looked at every aspect of the chip design process to understand the requirements of each step in terms of memory, CPUs, threading, etc. Using this information, they have mapped each step in the process to specific Azure resources. They also have unique technologies, both in-house and through partnerships, for improving cloud performance. NetApp is helping them optimize NFS performance, and CycleCloud allows them to bring up 60,000 cores in just 20 minutes. Lastly, Avere improves I/O performance with an NFS read cache in the cloud, which is useful for data such as libraries.

The most interesting aspect of the meeting was the AMD presentation. Here we literally see AMD’s latest hardware being used to design the next generation of hardware. AMD EPYC processors are used by Microsoft in the Azure cloud. James Robinson, MTS Silicon Design Engineer at AMD, spoke about their experience using Calibre in the cloud. He said that AMD EPYC is well suited to Calibre, with four silicon dies in each package providing a total of 32 cores and 64 threads. There are also 8 DDR4 channels for improved memory support.

Initially, the memory requirements for Calibre were prohibitive. However, James observed that Calibre’s per-instance memory requirements drop as jobs are distributed over larger numbers of processors. By distributing jobs this way, he was able to bring the memory needs down to fit the available instance types, with the added benefit of reduced runtimes. They also learned that it is more efficient to wait to allocate worker instances until they are needed, and Mentor incorporated this improvement. Mentor gave AMD early cloud-optimized versions of Calibre for testing, and James reported that AMD saw a 10-hour run reduced to just over 6 hours.

The takeaway from this presentation was that by combining the efforts of cloud providers, foundries and EDA vendors, significant gains can be made relative to running tools on premises with more limited resources. The cloud can be cost-effective because you can literally buy “time”, one of the most valuable commodities for a business. James from AMD pointed out that he was able to apply 4,000 cores to his Calibre runs, which, according to him, not even AMD has available on short notice.

After many years, it seems that cloud computing for EDA applications is ready and can be an effective tool for increasing productivity. There is more information about Calibre in the Cloud on the Mentor website.
