Hardware emulation arose out of necessity in the 1980s. By the middle of that decade, semiconductor designs had outgrown the practical limits of gate-level simulation. Simulation delivered accuracy, but at a glacial pace; silicon prototypes ran at real speed but arrived far too late. The industry needed a new instrument: a verification engine capable of executing real hardware models at meaningful speed while preserving the visibility and control required for thorough verification. Hardware emulation was born to fill that gap.

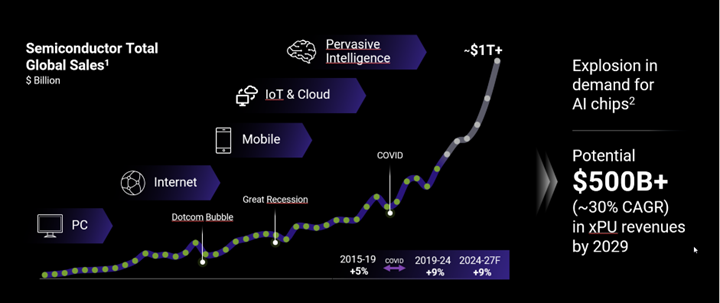

Who would have thought at that time that Moore’s law of complexity growth would be so dramatically outpaced by AI model complexity in the 2020s? Well, hindsight is 20/20, as we know. Let’s take a look at what actually happened with semiconductor demand:

Across four major eras of electronic systems (PC, Internet, Mobile, and IoT & Cloud), one can argue that the evolution of emulation was driven by competing paradigms fighting for market share, each offering a different balance of technology advantages to serve very broad market requirements. Today the market is investing primarily in AI chips, which must execute AI models whose complexity is growing explosively. It is no surprise that emulation architectures with a performance advantage on these long AI workloads are today’s winners in the market.

Let’s trace how the emulation technologies evolved and how the commercial FPGA-based architecture came out on top for today’s AI system verification needs.

The first generation of emulators relied on vast arrays of commercial FPGAs, a radical leap forward at the time. These systems enabled pre-silicon validation of complex chips that would otherwise have required years of simulation alone. For nearly a decade, progress followed a predictable trajectory: each new generation of FPGA devices delivered greater capacity, higher performance, and the ability to map increasingly ambitious designs. Scale improved dramatically, but the underlying philosophy remained largely unchanged.

As these platforms grew, however, their limitations became impossible to ignore. Increasing logic capacity did not resolve the architectural constraints embedded in their foundations. Early FPGA-based systems carried what many engineers would later describe as their original “sins.”

The sheer number of FPGAs required to keep pace with exploding design sizes drove setup times into weeks or even months. Compilation cycles stretched into days, often delaying DUT readiness beyond project schedules and making iterative development painfully slow. Design visibility was equally constrained: internal observability depended on compiling probes into the fabric, consuming valuable resources, increasing routing congestion, and turning debug into a laborious exercise. Execution models were rigid, centered entirely on in-circuit emulation (ICE), limiting flexibility for interactive debugging. And the total cost of ownership—purchase, operation, and maintenance—placed these systems far beyond the reach of most engineering teams.

As a result, hardware emulation remained confined to the most critical verification challenges, typically late in the design cycle and within only the most advanced organizations. For many teams, it was not a day-to-day engineering platform but a scarce, high-value resource—powerful, indispensable, and perpetually in short supply.

The Seeds of a Great Divide Were Beginning to Grow

By the mid-1990s, the commercial landscape appeared stable on the surface, dominated by two primary players: Quickturn Design Systems and IKOS Systems. Yet beneath that stability, the field was undergoing a profound transformation. Designs were scaling rapidly, software stacks were growing alongside hardware complexity, and verification demands were shifting from block-level correctness to full-system behavior. The question was no longer whether emulation could scale accordingly, but how.

Out of these pressures emerged a fundamental divergence in architectural thinking. Vendors and engineering teams began reimagining what an emulator should be: not just a larger FPGA array, but a purpose-built verification instrument optimized for visibility, controllability, and system-level performance. This rethinking gave rise to three distinct hardware emulation architectures—each grounded in a different philosophy, each making different trade-offs in speed, scalability, and usability, and each shaping the trajectory of pre-silicon verification for decades to come.

The three architectural approaches that emerged came to be known as processor-based emulation, custom-FPGA-based emulation, and commercial-FPGA-based emulation. Each represented a distinct attempt to overcome the growing limitations of simulation while enabling hardware designs to be validated at meaningful speed and scale. The essential characteristics of these technologies can be understood by examining their origins, evolution, and practical trade-offs.

Processor-Based Emulation

IBM and the Emergence of the Processor-Style Approach

In the early 1980s, IBM began exploring hardware acceleration techniques to improve design verification efficiency through projects such as the Yorktown Simulation Engine (YSE) and the Engineering Verification Engine (EVE). These systems functioned as simulation accelerators—special-purpose computing platforms designed to execute hardware descriptions expressed in software languages more quickly than conventional simulators. While they delivered measurable speed improvements, they still fell short of the performance required to apply real-world stimulus to the design under test (DUT).

By the mid-1990s, IBM had refined a new architectural direction centered on arrays of simple Boolean processors. These processors operated on design data structures stored in large, shared memories and were coordinated by sophisticated scheduling mechanisms. The approach proved adaptable to full emulation workloads, offering a scalable alternative to traditional simulation. Still, IBM did not capitalize on the technology itself.
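The general idea of Boolean processors working from a shared memory under a static schedule can be sketched in a few lines of Python. This is only an illustrative toy, not IBM's design: the instruction format, the `run_cycle` function, and the hand-written full-adder schedule are all assumptions introduced for the example.

```python
from typing import Dict, List, Tuple

# One instruction: (destination, operation, source_a, source_b).
# This format is hypothetical, chosen only for illustration.
Instr = Tuple[str, str, str, str]

OPS = {
    "AND": lambda a, b: a & b,
    "OR": lambda a, b: a | b,
    "XOR": lambda a, b: a ^ b,
}


def run_cycle(schedule: List[List[Instr]], memory: Dict[str, int]) -> Dict[str, int]:
    """Evaluate one emulated clock cycle.

    `schedule` is a list of time steps. Each step holds the instructions
    that the processors would execute in parallel, so every read in a step
    sees the shared memory as it was when the step began.
    """
    for step in schedule:
        results = {dst: OPS[op](memory[a], memory[b]) for dst, op, a, b in step}
        memory.update(results)  # write back only after the whole step has been read
    return memory


# A full adder, levelized by hand into three steps (a compiler would do this).
FULL_ADDER: List[List[Instr]] = [
    [("p", "XOR", "a", "b"), ("g", "AND", "a", "b")],
    [("sum", "XOR", "p", "cin"), ("t", "AND", "p", "cin")],
    [("cout", "OR", "g", "t")],
]


def full_adder(a: int, b: int, cin: int) -> Tuple[int, int]:
    """Run the scheduled netlist once and return (sum, carry_out)."""
    mem = run_cycle(FULL_ADDER, {"a": a, "b": b, "cin": cin})
    return mem["sum"], mem["cout"]
```

Because every signal lives in memory rather than in routed fabric, a model like this hints at why processor-style systems could offer visibility without compiling probes into the design.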

Quickturn and the Commercialization of Processor-Based Emulation

After nearly a decade of experience with commercial FPGA-based emulation systems, Quickturn concluded that, despite important advances, these emulators had structural limitations that proved difficult to overcome. Achieving sufficient design capacity required interconnecting hundreds of FPGAs across multiple boards, creating significant logistical and engineering challenges. Partitioning and routing designs across this distributed fabric often needed months of preparation to avoid congestion and ensure deterministic behavior. Debug visibility had to be explicitly compiled into the design, competing with routing resources and slowing development cycles. Performance also failed to scale linearly with design size, with execution speed declining as workloads grew more complex.

In search of a solution, Quickturn evaluated a custom-FPGA architecture developed by a French startup named Meta System. At the same time, Mentor Graphics, after abandoning earlier experimentation with emulation and selling all assets to Quickturn, pursued the same path. The resulting competition escalated into legal disputes over intellectual property, ultimately culminating in Mentor’s acquisition of Meta System.

Quickturn, already familiar with IBM’s processor-based work, moved decisively in that direction. Rather than commercializing the technology directly, IBM entered into an exclusive OEM agreement with Quickturn, enabling the latter to incorporate the architecture into a new generation of emulation systems.

IBM’s processor-centric architecture offered a compelling alternative. It addressed three of the most persistent constraints associated with FPGA-based systems: lengthy setup and compilation cycles, restricted debugging visibility, and performance degradation at large scale. One drawback—less visible at the time—was higher power consumption compared with FPGA-based solutions of equivalent capacity.

In 1997 Quickturn acquired the IBM technology and soon after introduced the Concurrent Broadcast Array Logic Technology (CoBALT) emulator, the first major commercial platform built on a processor-style architecture. The product achieved rapid market acceptance.

The competitive landscape continued to shift. Cadence acquired Quickturn in 1999, consolidating key emulation technologies within its portfolio, while the litigation with Mentor carried on for several more years before it was settled.

Cadence and the Scaling of Processor-Based Emulation

Following the acquisition, Cadence phased out Quickturn’s FPGA-based product lines and committed fully to the processor-based paradigm. This decision laid the foundation for the long evolution of the Palladium family of emulators, which became the company’s flagship platform.

Across successive generations introduced from the early 2000s onward, Palladium preserved its fundamental architectural principle: large arrays of simple processors working in concert to emulate hardware behavior at scale. With each iteration, design capacity expanded, execution performance improved, debug capabilities became more comprehensive, and compilation workflows grew faster and increasingly automated.

Two characteristics consistently defined the appeal of the platform. First, compilation times were significantly shorter than those associated with FPGA-based approaches, enabling faster turnaround during development. Second, engineers benefited from full design visibility at runtime without requiring special compilation steps, a powerful advantage for debugging and iterative verification.

Palladium also proved particularly strong in in-circuit emulation. A broad ecosystem of speed bridges enabled direct interaction with real hardware interfaces, allowing software and hardware to be validated together in realistic operating conditions.

These strengths were accompanied by structural trade-offs. Processor-based systems required substantial physical infrastructure and typically consumed more power than FPGA-based emulators of comparable capacity. Customers had to invest in costly water-cooling infrastructure. Scaling to multi-billion-gate designs often demanded large installations composed of numerous cabinets. In transaction-based acceleration scenarios, processor-based platforms also tended to operate at lower execution speeds than competing architectures optimized specifically for that use case.

Despite these constraints, processor-based emulation established itself as a foundational technology in hardware verification, offering a unique balance of scalability, visibility, and productivity that continues to shape modern emulation platforms.

Custom-FPGA-Based Emulation

Custom FPGAs: A Parallel Innovation Path

While IBM was advancing processor-based emulation in the United States, a parallel and equally important line of innovation was taking shape in Europe.

In France, Meta System began developing a class of programmable silicon inspired by field-programmable gate arrays but engineered specifically for emulation workloads. These devices—often referred to as custom FPGAs—were not intended for general logic prototyping or ASIC design. They were purpose-built as the computational fabric of an emulator.

Unlike commercial FPGAs, whose architectures must accommodate a broad range of applications, Meta System’s programmable devices were optimized for the specific requirements of hardware verification. Their architecture combined configurable logic elements with a dense, deterministic interconnect matrix tailored for predictable timing. Embedded multi-port memories enabled efficient storage of design state and stimulus data, while high-bandwidth I/O channels supported connection to external systems and software environments. The devices also incorporated built-in debug engines, including memory-based probing capabilities, and dedicated clock-generation circuitry to maintain synchronization across large, mapped designs.

This specialization yielded several tangible benefits. Compilation and setup times were dramatically reduced because the routing and configuration problem was constrained and optimized for emulation rather than general synthesis. Designers gained full visibility into the design during execution, often without the need for lengthy recompilation cycles to insert probes. And as designs grew in complexity, performance scaled more predictably because the architecture had been engineered around the structural characteristics of emulated hardware rather than generic programmability. Compared with processor-based emulators, the custom-FPGA approach delivered similar functional capabilities with lower power consumption and a more hardware-centric execution model.

Mentor Graphics and the Commercialization of the “Emulator-on-Chip”

The promise of custom-FPGA-based emulation attracted industry attention. In 1996, after outmaneuvering Quickturn, Mentor Graphics acquired Meta Systems and introduced SimExpress, the first commercial emulator built around custom programmable silicon.

SimExpress was, in many respects, a proof of concept rather than a fully competitive platform. Housed in a compact chassis roughly the size of a small wine cellar, it could map designs of fewer than 100,000 gates at a time when leading ASICs were already exceeding the million-gate threshold. Yet its architectural direction was significant. Setup was simpler, compilation times were reduced from hours to minutes, and runtime visibility into the design was far superior to what many FPGA-based systems offered. The platform demonstrated how emulation-optimized silicon, paired with advanced verification software, could form a balanced environment for pre-silicon validation.

Mentor expanded on this concept with the introduction of Celaro in 1999, a substantially larger emulator with a nominal capacity of approximately five million gates. By clustering multiple systems, engineers could scale total capacity beyond twenty million gates—an important milestone as system-on-chip (SoC) designs grew rapidly in size and complexity.

The custom-FPGA approach, however, came with trade-offs. Because these devices did not match the raw logic density of the largest commercial FPGAs, more chips were required to implement large designs. Larger arrays meant longer interconnect paths and increased signal propagation delays. As a result, execution speeds often fell below one megahertz for large configurations—adequate for verification workflows, but slower than some competing FPGA-based emulators at equivalent capacity.

IKOS, Virtual Wires, and Transaction-Based Verification

A pivotal shift occurred in 2002 when Mentor Graphics acquired IKOS Systems. This acquisition brought two complementary technologies that would shape the next generation of emulation.

The first was the Virtual Wire interconnect methodology, originally developed by Virtual Machine Works (VMW) and later incorporated into IKOS platforms. Virtual Wire simplified the daunting task of routing large designs across many programmable devices by abstracting physical connectivity into a software-controlled interconnect layer. Engineers could reassign signal paths without physically rewiring boards, dramatically accelerating bring-up and iteration.
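The text above does not spell out the mechanism, but virtual-wire schemes are commonly described as time-multiplexing several logical signals over each physical pin. The sketch below assumes exactly that and nothing more: the function names and the clock figures are hypothetical, and it ignores multi-hop routing and pipelining.

```python
import math
from typing import List


def slots_needed(logical_signals: int, physical_pins: int) -> int:
    """Time slots required to carry every logical signal across the pins."""
    if logical_signals < 0 or physical_pins <= 0:
        raise ValueError("need non-negative signals and at least one pin")
    return max(1, math.ceil(logical_signals / physical_pins))


def effective_clock_hz(io_clock_hz: float, logical_signals: int, physical_pins: int) -> float:
    """Upper bound on the emulated clock when each design cycle
    must wait for all time slots to cross the chip boundary."""
    return io_clock_hz / slots_needed(logical_signals, physical_pins)


def multiplex(values: List[int], physical_pins: int) -> List[List[int]]:
    """Split logical values into per-slot frames of at most `physical_pins` bits."""
    return [values[i:i + physical_pins] for i in range(0, len(values), physical_pins)]


def demultiplex(frames: List[List[int]]) -> List[int]:
    """Reassemble the logical values on the receiving device."""
    return [bit for frame in frames for bit in frame]
```

Under these assumptions, 400 logical signals sharing 100 pins need four slots, so a 100 MHz I/O clock would cap the emulated clock at 25 MHz. The point of the sketch is the trade: pin scarcity is converted into a predictable speed cost that software can schedule.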

The second was IKOS’s work in transaction-based verification. Rather than exchanging low-level signal toggles between the testbench and the hardware model, the methodology elevated communication to higher-level transactions—data packets, protocol events, and software interactions. This approach significantly improved verification efficiency and enabled tighter coupling between hardware and software validation.
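A rough way to see why transactions help is to count how often the host testbench and the emulator must synchronize. The toy model below is an assumption-laden sketch, not IKOS's implementation: `WriteTransaction`, the per-transaction overhead of two cycles, and both counting functions are invented for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class WriteTransaction:
    """A hypothetical bus write: one address phase plus one beat per data word."""
    address: int
    data: List[int]


def signal_level_crossings(txns: List[WriteTransaction], overhead_cycles: int = 2) -> int:
    """Testbench drives pins directly, so host and emulator
    synchronize once for every clock cycle of every transfer."""
    return sum(overhead_cycles + len(t.data) for t in txns)


def transaction_level_crossings(txns: List[WriteTransaction]) -> int:
    """Testbench sends one message per transaction; a transactor mapped
    into the emulator expands it into pin activity locally."""
    return len(txns)


workload = [
    WriteTransaction(0x1000, [1, 2, 3, 4]),
    WriteTransaction(0x2000, [5, 6]),
]
signal_crossings = signal_level_crossings(workload)            # 10
transaction_crossings = transaction_level_crossings(workload)  # 2
```

In this model the gap widens with burst length, which is consistent with the efficiency gain described above: the longer the transaction, the more cycles the emulator can run without waiting for the host.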

Mentor integrated these innovations into the Veloce emulation family, first introduced in 2007 and positioned as a new generation of emulator-on-chip systems. The architecture combined custom programmable silicon, scalable interconnect, and advanced verification software into a unified hardware-assisted verification platform.

Differentiation Through Verification Methodology

Where Mentor ultimately distinguished itself was not only in hardware but in methodology. Building on IKOS’s foundations, the company introduced TestBench Xpress (TBX), widely regarded as one of the most effective implementations of transaction-level acceleration. TBX enabled software testbenches—typically written in SystemVerilog, C, or SystemC—to execute efficiently alongside emulated hardware by offloading transaction handling to the host environment.

Mentor extended this approach further with VirtuaLAB, a suite of application-specific verification environments tailored to industry protocols such as USB, Ethernet, and storage interfaces. These environments allowed teams to validate real-world workloads and software stacks earlier in the design cycle, bridging the gap between pre-silicon hardware verification and system-level validation.

Evolution of the Veloce Family

Over the following years, the Veloce platform progressed through multiple generations. Each iteration increased design capacity, improved execution performance, and enhanced analysis capabilities. New features supported low-power verification, power estimation, hybrid emulation with virtual platforms, and functional coverage analysis. The systems evolved from niche verification engines into central pillars of hardware-assisted verification strategies for large SoCs.

In 2018, Siemens Digital Industries Software acquired Mentor Graphics and incorporated the Veloce product line into its broader electronic design automation portfolio. Development continued, with the platform adapting to the demands of billion-gate designs, complex software stacks, and heterogeneous compute architectures.

Today, the latest generation of this lineage is the Veloce Strato CS, part of the Veloce CS hardware-assisted verification platform. It represents the culmination of decades of architectural evolution—from custom programmable silicon and Virtual Wire interconnects to transaction-based acceleration and enterprise-scale emulation infrastructure—designed to support the verification of modern AI-driven, software-defined systems on chip.

The FPGA Renaissance

While processor-based and custom-FPGA-based emulators were establishing themselves in the market, a parallel transformation was unfolding in programmable logic.

By the late 1990s, new FPGA generations from Xilinx and Altera began to close long-standing gaps in density, speed, and routing flexibility. Devices could host significantly larger portions of the increasingly popular system-on-chip (SoC) designs, while improved place-and-route tools shortened iteration cycles, an essential requirement for verification teams working under relentless tape-out pressure.

Around the turn of the millennium, Xilinx introduced the Virtex family, marking a pivotal inflection point. These devices combined higher logic capacity with faster interconnects and, crucially, read-back capabilities. Engineers could inspect internal registers and memory contents at runtime without recompiling the design. The trade-off was performance: read-back operations slowed execution, but the visibility they enabled proved invaluable for debugging complex systems. For verification engineers, this represented a new balance between observability and speed, one that would shape FPGA-based emulation strategies for years to come.

The rapid progress of commercial FPGAs reignited interest in building emulators directly from off-the-shelf programmable devices. Compared with custom silicon approaches, FPGA-based systems promised faster innovation cycles and lower development costs, while benefiting from the continuous performance gains delivered by FPGA vendors. This environment set the stage for a new wave of entrepreneurial activity.

Two startups, operating on opposite sides of the Atlantic, capitalized on this opportunity, each pursuing a distinct architectural philosophy.

In Silicon Valley, Axis introduced in 1999 a simulation accelerator based on a patented Reconfigurable Computing (RCC) architecture. Implemented as arrays of FPGAs, the system, marketed under the name Excite, initially targeted acceleration of simulation workloads rather than full emulation. Within a couple of years, Excite evolved into Extreme, a more traditional emulation platform. One of Extreme’s defining innovations was its “Hot-Swap” capability, which allowed engineers to move designs seamlessly between the emulator and a proprietary simulator. This approach leveraged the interactive debugging strengths of software simulation while retaining the speed advantages of hardware execution, bridging two previously distinct verification domains.

At roughly the same time in Europe, a more disruptive initiative was taking shape. Emulation Verification Engineering (EVE), founded in 2000 by four former Mentor Graphics engineers, set out to rethink FPGA-based emulation from the ground up. In 2003, the company introduced ZeBu (Zero-Bugs), an emulator implemented on a compact PC card. The first version, ZeBu-ZV, incorporated two Xilinx Virtex-II devices: one dedicated to mapping the design-under-test (DUT), and the other tasked with accelerating transaction-level execution through a newly conceived Reconfigurable Testbench (RTB) technology.

This architectural decision proved pivotal. By elevating the testbench into hardware and enabling transaction-based verification, ZeBu significantly increased throughput and reduced communication bottlenecks between the DUT and verification environment. At the same time, the system leveraged Virtex read-back features to deliver runtime visibility into internal state without recompilation—again trading execution speed for powerful debugging capabilities.

The concept demonstrated both technical viability and commercial promise. Within a year, EVE expanded the architecture into a larger chassis configurable with arrays of Virtex FPGAs. The resulting system, ZeBu-XL, marked the transition from a compact, personal emulator to a scalable, enterprise-class emulation platform. Over time, the product line evolved through successive generations, each benefiting from advances in FPGA density, clocking, and tool automation.

A major milestone arrived in 2009 at DAC with the introduction of ZeBu Server, the progenitor of a long-lived product family. Designed for scalability from a single chassis to multi-rack configurations, ZeBu Server could reach nominal capacities of one billion gates. It introduced a higher level of automation, including incremental compilation, faster place-and-route cycles, and multi-user capabilities—features that reflected the growing industrialization of verification workflows.

Equally important were its economic and operational characteristics. ZeBu Server delivered greater execution speed than competing emulators while consuming a fraction of their power. Its pricing, reportedly dropping below a penny per gate in large configurations, reset expectations for cost efficiency in hardware emulation. The platform quickly gained recognition for offering one of the lowest total costs of ownership in the industry, positioning EVE as a formidable player in the verification market.

Architecturally, ZeBu also departed from the prevailing ICE-first (In-Circuit Emulation) mindset. Early systems emphasized transaction-based verification through RTB—later renamed Flexible Testbench (FTB)—operating at higher clock speeds than the DUT to maximize bandwidth and responsiveness. ICE capabilities were introduced in later generations, but transaction-level acceleration remained a defining strength, aligning with the increasing role of embedded software and system-level validation.

Synopsys Moves into Emulation

The growing relevance of hardware emulation did not go unnoticed by major EDA vendors. Synopsys, recognizing the strategic importance of the technology as early as the mid-1990s, attempted to enter the market through the acquisition of a startup named Arkos, which had developed a processor-style emulation approach. The effort proved unsuccessful, and, within months, Synopsys divested the company and its assets, which were subsequently acquired by Quickturn.

That early setback delayed Synopsys’ direct involvement in emulation for more than a decade. During this period, the market matured, FPGA-based solutions gained credibility, and system-level verification requirements intensified, driven by the rise of complex SoCs and embedded software stacks.

The turning point came in 2012, when Synopsys acquired EVE. By then, EVE was already delivering the third generation of ZeBu Server platforms and had established a reputation for performance, scalability, and cost efficiency.

Following the acquisition, Synopsys invested heavily in advancing the ZeBu roadmap. Capacity continued to scale, performance improved, and compilation times shortened through enhanced automation and tool integration. New analysis and use modes were introduced, supporting everything from software bring-up to system validation and hybrid prototyping.

These developments cemented commercial FPGA-based emulation as a long-term pillar of the verification landscape.

Summary

What began in the mid-1980s as a pragmatic use of rapidly advancing programmable logic has evolved into a cornerstone of modern semiconductor development—scaling to multi-billion-gate designs, enabling software-defined systems, and supporting the increasingly system-centric nature of verification.

At its core, the evolution of hardware emulation is a story of architectural divergence. Three distinct approaches emerged, each shaped by the technological constraints and verification demands of its time, and each redefining what emulation platforms could deliver.

The early dependence on commercial FPGAs exposed a fundamental mismatch between available technology and the requirements of deep system verification. That realization marked a turning point, giving rise to processor-based and custom FPGA-based architectures—two powerful but fundamentally different paths that sustained emulation through a decade of explosive semiconductor growth. Their later disruption by a new generation of FPGA-driven systems did not render earlier approaches obsolete; instead, it broadened the architectural landscape, underscoring a critical truth: no single emulation architecture can optimally address every verification challenge.

Over time, the role of emulation has expanded dramatically, driven by the convergence of evolving user demands and advancing technology. In the AI era, verification has become fully system-centric, requiring the execution of massive software workloads—such as large language models—on next-generation architectures. This shift places three factors at the center of emulation effectiveness: system capacity, execution performance, and interface connectivity. Only the right balance of all three enables meaningful pre-silicon validation at scale.

Among today’s competing approaches, commercial FPGA-based platforms are best positioned to deliver across all three dimensions—offering a compelling balance of scalability, speed, and real-world interfacing that aligns with the needs of modern AI system development.
