Even though design management systems are gaining popularity as a way to manage design data growth, they actually contribute to the problem of exploding data size. What we already know is that a linear increase in die dimensions causes quadratic growth in chip area, and that smaller feature sizes compound this effect by packing more devices into every unit of area. Additionally, larger SoC projects with more components require larger teams. These larger teams mean more users need copies of the design to do their jobs.

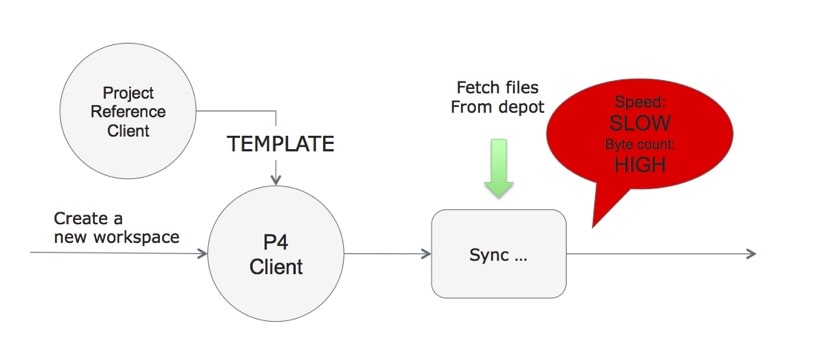

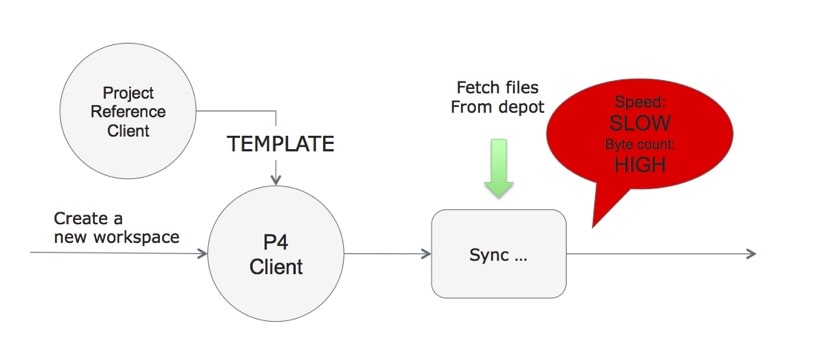

Conventional revision control systems give users copies of the design files in their workspace so that they can run design tools to modify the design or run verification tools. Traditionally, creating and managing workspaces is difficult and painful at best. At its worst it can become a true nightmare. All kinds of application-level techniques have been applied to try to make this process easier to run and manage.

Some approaches use de-duplication (dedup) to save space on servers, but it is necessary to create a full copy of the data before deduping can begin. Furthermore, after the data is copied to make a workspace, it then needs to be compared to other stored data on the fileserver to determine if there is duplicate data that can be consolidated. This amounts to a copy, two reads and a compare in order to reduce storage space. The bandwidth and compute penalty for this step is severe and can negate the advantages of the process, especially when dealing with terabytes of data.
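To make the cost of that sequence concrete, here is a minimal sketch of post-copy, file-level dedup. It is an illustration under stated assumptions, not how any particular filer or product implements dedup: the helper names (`file_digest`, `dedup_workspace`, `demo_dedup`) are hypothetical, whole-file SHA-256 hashing stands in for the compare step, and hard links stand in for block consolidation.

```python
import hashlib
import os
import shutil
import tempfile


def file_digest(path, chunk_size=1 << 20):
    """Read a whole file and return its SHA-256 hex digest."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def dedup_workspace(store_dir, workspace_dir):
    """Hard-link workspace files to identical files already in store_dir.

    Hypothetical helper: both directories are assumed to be on the same
    filesystem, since hard links cannot cross filesystems.
    Returns the number of bytes reclaimed.
    """
    # Read 1: every stored file is read in full to build the index.
    index = {}
    for root, _, names in os.walk(store_dir):
        for name in names:
            path = os.path.join(root, name)
            index.setdefault(file_digest(path), path)

    reclaimed = 0
    # Read 2 + compare: every copied file is read again and looked up.
    for root, _, names in os.walk(workspace_dir):
        for name in names:
            path = os.path.join(root, name)
            match = index.get(file_digest(path))
            if match is not None:
                size = os.path.getsize(path)
                os.remove(path)
                os.link(match, path)
                reclaimed += size
    return reclaimed


def demo_dedup():
    """Copy a tiny 'design', edit one file, then dedup the copy."""
    base = tempfile.mkdtemp()
    store = os.path.join(base, "store")
    os.makedirs(store)
    for i in range(3):
        with open(os.path.join(store, f"block{i}.v"), "wb") as f:
            f.write(bytes([i]) * 1000)
    workspace = os.path.join(base, "workspace")
    shutil.copytree(store, workspace)  # the full copy comes first
    with open(os.path.join(workspace, "block0.v"), "ab") as f:
        f.write(b"edit")  # one file diverges from the stored version
    reclaimed = dedup_workspace(store, workspace)
    shutil.rmtree(base)
    return reclaimed
```

Even in this toy version, every byte is written once and read twice before any space comes back, which is the penalty described above. The sketch also leaves out a hazard of naive hard linking: a later in-place write through the workspace path would modify the stored file as well.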

File system links are also frequently used, but managing them can be troublesome. Using links in a directory to point to workspace copies of files is still an ‘application’ level solution to a ‘service’ level problem. Links can become jumbled and create a web of file pointers that can be hard to parse. When a file needs to be modified, the link has to be removed and the file data copied locally.
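The link-then-copy pattern can be sketched in a few lines. This is a hedged illustration only: `populate_workspace`, `make_writable`, and `demo_links` are hypothetical names, the example assumes a platform where unprivileged symlinks are allowed, and it ignores the permissions and bookkeeping a real design management tool would have to enforce.

```python
import os
import shutil
import tempfile


def populate_workspace(release_dir, workspace_dir):
    """Create a workspace whose files are all symlinks into release_dir."""
    release_dir = os.path.abspath(release_dir)
    for root, _, names in os.walk(release_dir):
        rel = os.path.relpath(root, release_dir)
        target_root = os.path.normpath(os.path.join(workspace_dir, rel))
        os.makedirs(target_root, exist_ok=True)
        for name in names:
            os.symlink(os.path.join(root, name),
                       os.path.join(target_root, name))


def make_writable(path):
    """Break the link: replace a symlink with a private copy of its target."""
    if os.path.islink(path):
        source = os.path.realpath(path)
        os.remove(path)
        shutil.copy2(source, path)
    return path


def demo_links():
    """Link a two-file 'release' into a workspace and edit one file."""
    base = tempfile.mkdtemp()
    release = os.path.join(base, "release")
    os.makedirs(os.path.join(release, "rtl"))
    for name in ("top.v", "alu.v"):
        with open(os.path.join(release, "rtl", name), "w") as f:
            f.write(f"// {name}\n")

    workspace = os.path.join(base, "workspace")
    populate_workspace(release, workspace)

    # Only alu.v is edited, so only alu.v is copied locally.
    edited = make_writable(os.path.join(workspace, "rtl", "alu.v"))
    with open(edited, "a") as f:
        f.write("// local edit\n")

    with open(os.path.join(release, "rtl", "alu.v")) as f:
        release_changed = "local edit" in f.read()
    result = (
        os.path.islink(os.path.join(workspace, "rtl", "top.v")),
        os.path.islink(edited),
        release_changed,
    )
    shutil.rmtree(base)
    return result
```

Every tool or user that writes a file has to remember to call something like `make_writable` first; a write through an unbroken link would change the shared release copy. That burden is what makes this an application-level workaround rather than a service.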

In reality, often only a small number of files in any given workspace need to be modified; the vast majority are there for reading only. So what is called for is a robust and ideally transparent system for efficiently creating workspaces and allowing users to read and/or work on the files that are needed specifically for their task. Most importantly of all, the file operations must follow the permissions dictated by the design management system.

So, if you were starting from scratch, what would be the best design for a design management infrastructure? It would be based on Perforce or Subversion, or another standard revision control system. However, it would be differentiated by putting a fundamental understanding of the native revision control system into the file system itself.

Methodics has done just this with their WarpStor appliance. Yes, building a filer interface hardware unit is very unconventional by today’s standards. Thinking it through, it is not unlike what NetApp did with NFS, making it a service that is furnished through an appliance. Granted, data management is a different beast, but there are many parallels.

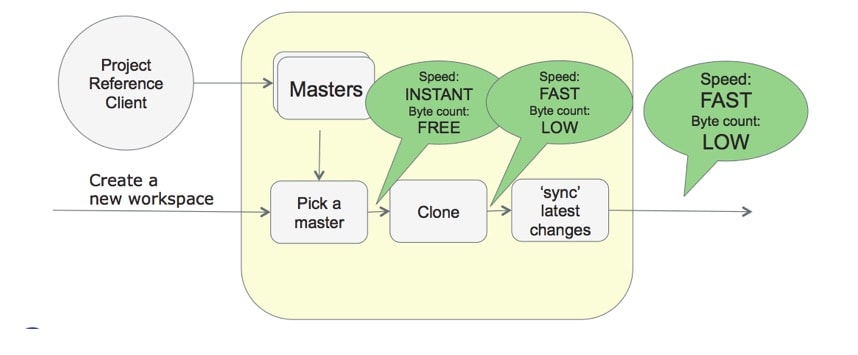

Methodics’ WarpStor uses managed design data on existing fileservers, but presents it to each user as local data that complies fully with the design management policies and procedures. Methodics likes to call this Version Control on Steroids. It’s easy to see why. The amount of design data that has to be copied across the network drops significantly, and network traffic and bandwidth consumption drop sharply with it. Users see higher levels of responsiveness. Best of all, administrators and users see the full benefits of design management, including versioning, permissions, releases, etc.

To learn more about the internals and implementation of WarpStor, you can read the white paper “WarpStor – Version Control on Steroids” on the Methodics website.
