Security and machine learning (ML) are among the hottest areas in tech, especially for the IoT. The need for higher security is, or should be, blindingly obvious at this point. We struggle to fend off daily attacks even in our mainstream compute and networking environment. How defenseless will we be when we have billions of devices in our homes, cars, cities, utilities and farms, open to attack by any malcontent (or worse) with an urge to create chaos? Meanwhile, ML is gaining traction at the edge simply because, for many of these devices, the classic human-interface paradigm of keyboards and monitors/cryptic displays is too cumbersome, too difficult to use and too costly.

In support of raising capabilities in both of these domains, NXP recently launched a couple of new platforms and a toolkit for intelligence at the edge. I’ll start with the platforms, the LPC5500 microcontrollers and i.MX RT600 crossover processors. They argue for a multi-layered approach to security in these platforms, including:

- Secure boot for hardware-based immutable root-of-trust

- Certificate-based secure debug authentication

- Encrypted on-chip firmware storage with real-time, latency-free decryption

They’ve added a couple more important security features. Device-unique keys can be generated on demand through an SRAM-based physically unclonable function (PUF). They also provide support for the DICE standard, which is becoming increasingly popular in IoT identity, attestation and encryption. Even more interesting (to me), NXP are working on a relationship with Dover Microsystems, about whom I’ll talk more in a later blog. NXP plan to integrate Dover’s CoreGuard technology, offering an active, rule-based security mechanism.
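For readers who haven't met DICE before, here is a minimal sketch of its core idea, not NXP's implementation. As I understand the TCG DICE approach, a device secret is combined one-way with a measurement (hash) of the first mutable code to produce a Compound Device Identifier (CDI), so changed firmware yields a different identity. The function name `derive_cdi`, the all-zero secret and the firmware strings are hypothetical placeholders; on a real device the secret would live in hardware and never appear in software like this.

```python
import hashlib
import hmac


def derive_cdi(device_secret: bytes, first_mutable_code: bytes) -> bytes:
    """Illustrative DICE-style derivation: CDI = HMAC(secret, H(code))."""
    # Measure the first mutable code (e.g. the first-stage bootloader image).
    measurement = hashlib.sha256(first_mutable_code).digest()
    # One-way combination of the device secret with that measurement.
    return hmac.new(device_secret, measurement, hashlib.sha256).digest()


# Hypothetical placeholder values, for illustration only.
device_secret = bytes(32)  # stand-in for a hardware-protected unique secret
cdi_good = derive_cdi(device_secret, b"firmware image v1")
cdi_tampered = derive_cdi(device_secret, b"firmware image v1 + implant")

# Same secret, different code: the derived identities no longer match.
print(cdi_good != cdi_tampered)
```

The point of the construction is that anything keyed off the CDI (identity, attestation, encryption keys) changes automatically if the boot code is modified, without the device secret ever being exposed to later software layers.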

On the ML side, NXP recently announced their eIQ software environment for mapping cloud-trained ML models to edge devices. I found this to be one of the most compelling parts of the NXP announcement. Normally when you think about mapping a TensorFlow, Caffe2 or whatever neural net model to a resource-constrained edge device, you think about mapping to a specific NN architecture in that device. But what if you need to target a wide range of devices, all the way from CPUs up to dedicated ML cores? Will that require a different mapping solution and lots of ML expertise per platform? According to Geoff Lees, Sr VP and GM of microcontrollers, eIQ and the platforms mentioned above should make this multi-device targeting a lot easier.

I asked why anyone would want to implement ML on a CPU. After all, CPUs are famously the least effective platform for ML in terms of performance per watt. I asked a similar question at an ARM press briefing last year and got what I thought was a rather defensive response. So I was curious to get NXP’s take. Geoff provided a great example of an intelligent microwave. This doesn’t need a lot of ML horsepower to handle (locally) trigger-word recognition and basic natural language processing for a very limited vocabulary. Or better yet, recognizing the food when you put it into the microwave. Nor does it have to provide microsecond response times or run off a coin cell battery (since a microwave has to be wired anyway). So a Cortex-M33 with its support for DSP processing is amply suited to the task and likely cheaper than more elegant NN platforms, which should be important in a mass-market appliance.

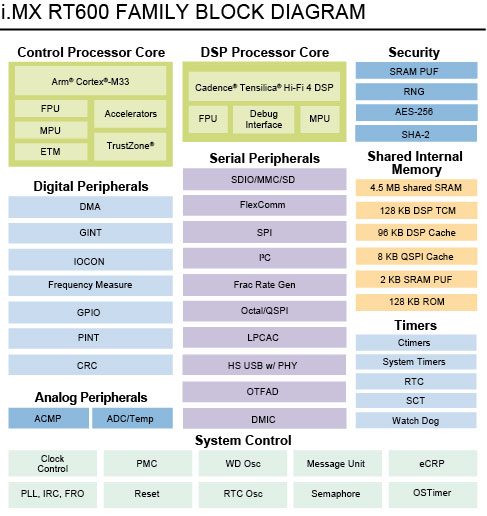

For fancier applications, you’ll still want to rely on a dedicated ML engine. In the i.MX RT600 family, this is the Cadence Tensilica HiFi 4 DSP. Hopefully now you see the value of eIQ: a common ML mapping platform which can handle mapping to all NXP devices, from high-end i.MX 8QM down through mid-range devices to the Cortex-M33-based devices.

As examples of how these technologies can be applied, NXP recently showed (at the Barcelona IoT World Congress) an industrial application in which they used various subsystems including drones for operator recognition (are you allowed to perform this function?), object recognition for operator safety, voice control and anomaly detection to predict failures in drone operation. At TechCon they showed trigger-word recognition and voice control, and in vision they showed food recognition (for that microwave) and traffic sign recognition.

From microwaves to traffic sign recognition and factory floor automation, looks like NXP is making a play to own an important piece of edge processing, both in security and in machine learning, across a wide range of processor solutions.
