Automotive markets have added pressure on semiconductor/systems design through demand for ISO 26262 compliance – this we all know. But they have also changed the mix of important design types. One class of design that has become very significant in ADAS, and ultimately in autonomous applications, is image signal processing (ISP). Collision avoidance, pedestrian detection, lane departure warning and many other features depend on processing images from a variety of sources. In all these cases, fast response is essential, especially in image preconditioning, where hardware-based solutions will typically have an edge.

ISP functions cover a wide range of operations: defect correction, noise filtering, white balance, sharpening and many others. These can be handled through sequences of custom-tuned algorithms which are data-processing-centric rather than control-centric, which makes them particularly well-suited to high-level synthesis (HLS).
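To make "data-processing-centric" concrete, here is a minimal sketch of two such stages (a white-balance gain followed by a small smoothing filter) written in the plain, statically bounded C++ style that HLS tools generally favor. Everything in it is an assumption for illustration: the row width `kWidth`, the 10-bit pixel range, the Q8 gain format and the function names are hypothetical, and it uses only standard C++ types rather than any vendor bit-accurate library.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>

// Hypothetical line width; a real ISP would process full sensor rows.
constexpr std::size_t kWidth = 8;
using Row = std::array<std::uint16_t, kWidth>;

// White-balance gain in unsigned Q8 fixed point (256 == 1.0),
// saturating to an assumed 10-bit pixel range.
inline std::uint16_t apply_gain(std::uint16_t px, std::uint16_t gain_q8) {
    assert(px <= 1023);  // input is expected to be a 10-bit pixel
    const std::uint32_t v = (static_cast<std::uint32_t>(px) * gain_q8) >> 8;
    return static_cast<std::uint16_t>(v > 1023 ? 1023 : v);
}

// 3-tap [1 2 1]/4 horizontal smoothing filter with edge replication.
// Fixed loop bounds and no dynamic allocation: pure data flow, no control logic.
inline Row smooth_row(const Row& in) {
    Row out{};
    for (std::size_t x = 0; x < kWidth; ++x) {
        const std::uint32_t left  = in[x == 0 ? 0 : x - 1];
        const std::uint32_t mid   = in[x];
        const std::uint32_t right = in[x == kWidth - 1 ? kWidth - 1 : x + 1];
        out[x] = static_cast<std::uint16_t>((left + 2 * mid + right + 2) >> 2);
    }
    return out;
}

// Two-stage sequence: gain first, then smoothing.
inline Row process_row(const Row& in, std::uint16_t gain_q8) {
    Row balanced{};
    for (std::size_t x = 0; x < kWidth; ++x) {
        balanced[x] = apply_gain(in[x], gain_q8);
    }
    return smooth_row(balanced);
}
```

Each stage is a fixed-trip-count loop over pixels with simple arithmetic, which is the kind of structure a synthesis tool can unroll or pipeline without the designer hand-writing state machines.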

I check in on HLS periodically to see how usage is evolving. You can look at this from a generic point of view – will it eventually replace RTL-based design across the majority of designs? I’m not sure that perspective is very illuminating. Methods often change not because a better way emerges to do exactly the same thing, but because needs change and a new method handles those new needs better. In this case, automotive needs may be a stimulus for change.

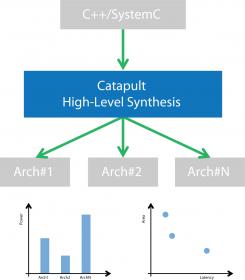

ISP is one example. If you need the highest performance along with the lowest power and cost, you want a hardware-based solution, but this needs careful co-design with the associated image-processing software. Getting to the best possible solution also demands a lot of experimentation with architecture options, for example word-widths, pipelining and operand sharing.
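As a rough illustration of word-width experimentation, the sketch below makes the number of fractional bits in a gain coefficient a template parameter and measures the worst-case error against a floating-point reference. This is plain C++ under stated assumptions (10-bit pixels, a hypothetical `scale_fixed` stage, gains below 16); a real HLS flow would more likely use a bit-accurate datatype library and let the tool report area and timing for each option.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>

// Scale a pixel by a gain quantized to FracBits fractional bits,
// with round-to-nearest on the final shift.
template <int FracBits>
std::uint32_t scale_fixed(std::uint32_t px, double gain) {
    static_assert(FracBits > 0 && FracBits < 16, "illustrative range only");
    assert(gain >= 0.0 && gain < 16.0);  // keeps the product inside 32 bits
    const std::uint32_t g =
        static_cast<std::uint32_t>(std::lround(gain * (1u << FracBits)));
    return (px * g + (1u << (FracBits - 1))) >> FracBits;
}

// Worst-case absolute error over every 10-bit pixel value,
// compared with the unquantized floating-point result.
template <int FracBits>
double max_error(double gain) {
    double worst = 0.0;
    for (std::uint32_t px = 0; px < 1024; ++px) {
        const double ref = px * gain;
        const double got = static_cast<double>(scale_fixed<FracBits>(px, gain));
        const double err = std::fabs(got - ref);
        if (err > worst) worst = err;
    }
    return worst;
}
```

Comparing, say, `max_error<4>(1.37)` with `max_error<12>(1.37)` shows the accuracy side of the trade-off; the hardware cost side (multiplier width, registers) is what the synthesis run would add to the picture.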

This is where HLS can really shine. Once you have got through the learning curve (with C++ or SystemC) and have built up a library of reusable templates, development, verification and maintenance of a new IP are much faster, in part because there are simply fewer lines of unique code for such an IP than would be required in RTL. This isn’t just my view. The Imaging group at ST said in a recent webinar that over the last 3 years they have built a library of 50+ IPs, ranging from 10K gates to 2M gates, with a team of fewer than 10 designers. Naturally the templates are an important part of this and are, I would guess, fairly application-specific. But once they are built up, it seems new IPs can be added or adapted quite quickly. This is a pay-now-or-pay-more-later proposition.

The payback in this flow is quite compelling. First, an IP group is able to deliver a model for basic integration testing to the SoC verification team very quickly. After that, the IP team go into detailed functional design for the IP, exploring architecture tradeoffs and synthesizing to RTL (they are using Mentor Catapult). Their verification methodology is a very interesting aspect of this stage. First, the team use the same UVM testbench for both the high-level model and the generated RTL, which means that at RTL no new verification development should be required. Indeed, they find that once the C-level model verifies clean, the generated RTL also verifies clean.
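The testbench-reuse idea can be sketched by analogy in plain C++: one set of stimulus and expected results, applied unchanged to two implementations of the same function. This is only an analogy, not the ST flow, which uses a UVM testbench against the C-level model and the generated RTL; `golden_model`, `generated_impl` and `run_testbench` are hypothetical names, and the second function merely stands in for tool-generated output.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <functional>
#include <vector>

using PixelFn = std::function<std::uint16_t(std::uint16_t)>;

// High-level behavioural model: double the pixel, saturate to 10 bits.
inline std::uint16_t golden_model(std::uint16_t px) {
    const std::uint32_t v = 2u * px;
    return static_cast<std::uint16_t>(v > 1023 ? 1023 : v);
}

// Stand-in for the generated implementation: same behaviour, written as a shift.
inline std::uint16_t generated_impl(std::uint16_t px) {
    const std::uint32_t v = static_cast<std::uint32_t>(px) << 1;
    return static_cast<std::uint16_t>(v > 1023 ? 1023 : v);
}

// One testbench, reused unchanged for any implementation.
// Returns the number of mismatches against the expected results.
inline int run_testbench(const PixelFn& dut) {
    const std::vector<std::uint16_t> stimulus = {0, 1, 511, 512, 1023};
    const std::vector<std::uint16_t> expected = {0, 2, 1022, 1023, 1023};
    assert(stimulus.size() == expected.size());
    int mismatches = 0;
    for (std::size_t i = 0; i < stimulus.size(); ++i) {
        if (dut(stimulus[i]) != expected[i]) ++mismatches;
    }
    return mismatches;
}
```

Calling `run_testbench(golden_model)` and `run_testbench(generated_impl)` exercises both with identical checks, so any mismatch points at the implementation rather than at a second, separately written testbench.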

Second, these testbenches are largely developed by the IP designers (with verification experts jumping in to handle special cases). Nice idea. Few product teams are swimming in under-utilized resources (and if they are, that’s probably not a good thing). Making better use of the resources you have is always a plus, in this case by getting more of the verification assets (TB, sequences, constraints, etc.) developed in the design team.

In the final phase of IP development, the team focus on PPA optimization. Again, the high-level nature of the design provides a lot of flexibility to make late-stage changes, in architecture if needed, to get to the most competitive solution that can be delivered. Unlike late-stage changes in RTL, which can be very disruptive, here there’s no drama. HLS simply regenerates the RTL, adapting to parameter-controlled option changes as needed, and the same UVM testbench is again used to test the generated RTL. This is a very quick process since the testbench has already been proven on the C-level design.

The ST speaker wraps up with a few other observations. Following their methodology, they have been able to reduce total development/verification time on a typical IP by nearly 70%. And by doing the bulk of their verification development at the C++ level (where verification runs much faster), they are able to run thousands of tests in minutes rather than the hours that would be required at RTL, which means they can get to coverage closure much faster.

ST has been in the ISP business for a long time so their suggestions have to be considered expert. If you want to learn more about how they are using Mentor Catapult, you can read the white-paper HERE and view the webinar HERE.
