Synopsys recently presented a webinar on using its own software to optimize one of its own IPs (an ARC HS68 processor) for both performance and power. The flow looks straightforward: initial configuration, then first-level optimization, then more comprehensive AI-driven PPA optimization. They also emphasized how important it is for one IP team to efficiently support multiple design objectives, say high performance versus low power, as well as derivatives and node migrations. The flow they describe is supported both by capabilities provided by the ARC IP team and by Synopsys Fusion Compiler with DSO.AI.

The reference design flow (RDF)

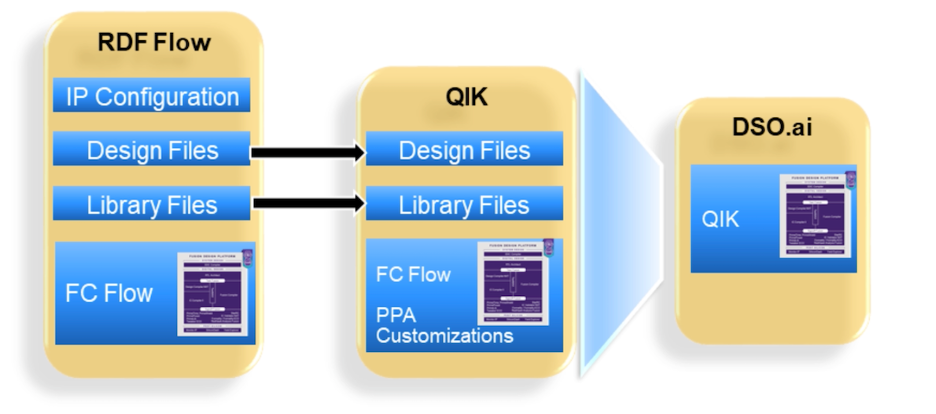

RDF provides a complete RTL-to-GDS flow, in this example targeting the TSMC 5nm (TSMC-5FF) technology library. The RDF configures the IP and generates the RTL files. It builds and instantiates the required closely coupled memories through the Synopsys memory compiler, and it configures the implementation flow scripts, which can then be used in the Fusion Compiler flow to generate a floorplan. It also replaces clock gates and synchronizer cells with options drawn from the technology library.

John Moors (ASIC Physical Design Engineer, Synopsys) mentioned that RDF can optionally do DFT insertion and will generate RTL gate-level simulation scripts, low power checks, formal verification scripts, and STA and power analysis flows, though they didn’t exercise these options for this test.

The Fusion quick start implementation kit (QIK)

Highly configurable IP like the ARC cores offers SoC architects and integrators significant flexibility in meeting their goals, but this flexibility often adds complexity in meeting PPA targets. Quick start implementation kits aim to simplify the task, building on R&D experience, IP team experience and collaboration with customers. QIKs are currently offered for HS5x, HS6x, VPX and NPX cores.

Kits integrate know-how for best achieving PPA and floorplan goals, including the preferred implementation style (flat, hierarchical, and so on), library, technology and design customizations, and custom flow features to make the most of the latest capabilities in an IP family. They also include support for all the expected collateral checks, including RC extraction, timing analysis, IR drop analysis, RTL versus gate equivalence checks and so on.

Frank Gover (Physical design AE at Synopsys) mentioned that these kits continue to evolve with experience and with enhancements in IP, in tools and in technologies. QIKs also include information to leverage DSO.AI. DSO.AI has been covered abundantly elsewhere so I will recap here only that this uses AI-based methods together with reinforcement learning to intelligently explore many more tradeoffs between tunable options than could be found through manual search.

Comparing Baseline, QIK and QIK+DSO.AI

The team found that QIK alone improved frequency by 17% and QIK+DSO.AI improved frequency by 23%. QIK improved total power by 49% (!) and leakage power by just over 9%. These numbers got a little worse after running DSO.AI: the total power improvement dropped to 44% and the leakage power improvement dropped to -5% (that is, leakage ended up worse than the baseline). However, Frank pointed out that their metric for optimization was based on a combination of worst negative slack, total negative slack, and leakage power, in that order, so performance optimization was preferred over leakage. Still, a 90MHz gain in performance for a power increase of only 0.6mW is pretty impressive.
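To make "in that order" concrete, here is a minimal sketch of one way such a prioritized metric could rank candidate runs. The webinar recap does not say how the three quantities are actually combined, so the strict lexicographic ordering below is my assumption, and `RunResult`, `priority_key`, `rank` and all the numbers are hypothetical illustrations, not Synopsys data or DSO.AI's real cost function.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunResult:
    """One hypothetical implementation run (all values are made up)."""
    name: str
    wns_ns: float      # worst negative slack; closer to zero is better
    tns_ns: float      # total negative slack; closer to zero is better
    leakage_mw: float  # leakage power; lower is better


def priority_key(result):
    # Assumption: strict lexicographic priority, WNS first, then TNS,
    # then leakage. Slack values are negated so that a smaller key
    # always means a better run.
    return (-result.wns_ns, -result.tns_ns, result.leakage_mw)


def rank(results):
    """Return the runs ordered from best to worst under priority_key."""
    return sorted(results, key=priority_key)


runs = [
    RunResult("run_a", wns_ns=-0.020, tns_ns=-1.50, leakage_mw=4.0),
    RunResult("run_b", wns_ns=-0.005, tns_ns=-0.40, leakage_mw=4.6),
    RunResult("run_c", wns_ns=-0.005, tns_ns=-0.40, leakage_mw=4.2),
]

ranking = rank(runs)
print([r.name for r in ranking])
```

In this toy data `run_a` has the lowest leakage but the worst slack, so it ranks last; leakage only separates `run_b` and `run_c`, which tie on timing. That mirrors the result above, where timing gains were accepted at the expense of leakage. A real flow would more likely use weights or tolerance bands than exact ties.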

One interesting observation here: Slack and leakage measurements are based on static analyses. Dynamic power estimation requires simulation, very unfriendly to inner loop calculations in machine learning, even for the specialized approaches used by DSO.AI. From this I suspect that all the dynamic power improvement in this flow comes from the QIK kit, and that incorporating any form of dynamic power estimation into ML-based methods is still a topic for research.

When looking at efficiency in applying this flow to multiple SoC objectives, Frank talks about both a cold start approach with no prior learning (only user guidance) and a warm start approach (starting from a learned model developed on prior designs/nodes). First time out of the gate you will have a cold start and might need to assign say 50 workers to learning, from which you would choose the best 10 results and pass those on to the next epoch. Learning would then progressively explore the design space, ultimately converging on better results.

Once that reference training model is developed, you can reuse it for warm starts on similar design objectives, such as a node migration or a derivative design. Warm starts may require only 30 workers per epoch and quite likely fewer epochs to converge on satisfactory results. In other words, you pay a bit more for training at the outset, but you quickly get to the point where the model is reusable (and continues to refine) on other design objectives.
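The worker and epoch bookkeeping in the cold and warm start descriptions can be sketched in a few lines. To be clear about the assumptions: this is not DSO.AI's algorithm (which uses reinforcement learning and a learned model); it is a plain elitist random search over a toy cost function, with surviving parameter sets standing in for the reusable model. `toy_cost`, `explore`, the four-parameter vectors and the epoch counts are all hypothetical. Only the 50 workers, best 10, and 30 workers figures come from the webinar.

```python
import random


def toy_cost(params):
    # Hypothetical stand-in for a full implementation run; lower is better.
    return sum((p - 0.7) ** 2 for p in params)


def explore(seeds, workers, keep, epochs, rng, spread=0.2):
    """Each epoch: launch `workers` perturbed runs, keep the best `keep`."""
    survivors = list(seeds)
    for _ in range(epochs):
        candidates = list(survivors)  # carry survivors so the best never regresses
        for _ in range(workers):
            parent = rng.choice(survivors)
            # Perturb each tunable setting, clamped to the range [0, 1].
            child = [min(1.0, max(0.0, p + rng.gauss(0.0, spread))) for p in parent]
            candidates.append(child)
        candidates.sort(key=toy_cost)
        survivors = candidates[:keep]
    return survivors


rng = random.Random(0)

# Cold start: no prior learning, so begin from random settings.
cold_seeds = [[rng.random() for _ in range(4)] for _ in range(10)]
cold = explore(cold_seeds, workers=50, keep=10, epochs=5, rng=rng)

# Warm start: reuse the earlier survivors, with fewer workers and epochs.
warm = explore(cold, workers=30, keep=10, epochs=2, rng=rng)

print(toy_cost(cold[0]), toy_cost(warm[0]))
```

The point of the sketch is the cost structure: the cold start spends 5 x 50 runs to get a good starting population, while the warm start spends only 2 x 30 because it begins from results that are already good.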

Nice summary of application on a real IP, with clearly valuable benefits to PPA. You can review the webinar HERE.
