The weather in Taiwan last week was very nice, not too hot but certainly not cold. The same could be said for TSM stock, which broke $16 after the October financial report, in which TSMC reported a sales increase of 15% over September. Revenue for the year so far is up 19% over last year, so why isn’t TSM stock at $20 like I predicted earlier this year?

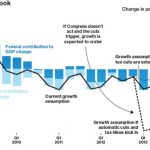

I blame the Q4 and Q1 Fear, Uncertainty, and Doubt (FUD) everyone is talking about. I blame the “US Fiscal Cliff” everyone is writing about; it even has a wiki page! I was asked by politicians if my family was better off now versus four years ago and the answer is YES, absolutely! Why? Because money is cheap, the interest rate on my debt is less than half what it was, and I continue to invest in the future.

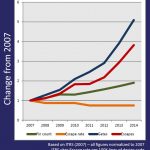

TSMC has done the same thing. TSMC has spent a record amount this year on CAPEX and R&D, and it shows. 28nm revenue and shipments more than doubled during Q3 2012, and 28nm's share of total wafer revenue increased from 7% in Q2 to 13%. Expect 28nm to exceed 20% of total wafer revenue in Q4 and to account for more than 10% for the whole year.

TSMC 28nm capacity increased 5% to 3.8 million wafers in Q3 and was fully utilized. As Co-Chief Operating Officer Dr. Shang-Yi Chiang said at ARM TechCon last month, “The biggest 28nm challenge was forecasting with demand for 28nm this year being 2-3x of what was forecast.”

Congratulations to everyone on the success of TSMC 28nm. Teamwork, patience, and investment win again! Let us not forget the “28nm does not work” FUD at the beginning of the year. As I predicted, 28nm will be the best process node we will see for years to come. Believe it. Since the other foundries are still struggling with it, I predict 28nm will be the most successful node in the history of TSMC. 28nm may even get a chapter in the book Paul McLellan and I are writing; if not a full chapter, certainly an honorable mention.

Back to the fiscal cliff – what will I do in the next four years? I will continue to invest but also pay down my debt. I did support President Obama for a second term and I strongly suggest he do the same: invest and pay down the national debt. I offer the same advice to TSMC: continue to invest, and the fabless semiconductor ecosystem will have another great four years!

Last quarter TSMC invested $1B in ASML for EUV and 450mm technology. TSMC also bought 35 acres of land in Zhunan (near Hsinchu Science Park) for another GigaFab research and manufacturing facility that will produce 450mm wafers starting at 7nm. TSMC 2013 CAPEX and R&D are expected to be “in the same ball park” as 2012; of course, that all depends on 20nm and 16nm FinFETs and how accurate the 2013 forecast is. My guess is that TSMC 2013 revenue will beat 2012 by single digits and, due to the cost of 20nm and 16nm, CAPEX and R&D will also grow by single digits.

Remember, I’m not an analyst, journalist, or financial expert; I’m just a blogger who drives a Porsche.