Matt Elmore has a two-part blog (Part 1, Part 2) about the growing complexity of signal integrity analysis, both on the chip itself and in making sure that signals (and power) get in and out of the chip from the board cleanly. Memory interfaces are especially demanding, since they require simultaneous analysis of the chip, package, and system (CPS).

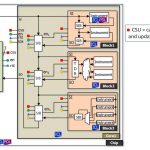

A particular problem is analyzing simultaneously switching outputs (SSO) when using DDR to access off-chip memory. The big challenge in CPS signal integrity simulation is the sheer complexity. It is simply not possible to take all the relevant parts of the design and throw them into a SPICE simulator. The power grid alone may have millions of nodes. One potential solution is to divide the simulation into smaller pieces, such as analyzing each byte separately, but that assumes the interaction between adjacent bytes is irrelevant, which can be a very expensive assumption if it turns out to be wrong. In reality, SSO is a global problem: many outputs switching together affect the power supply voltage across all the I/Os and indeed the core of the chip.

A better approach is to capture all the relevant characteristics accurately in a considerably reduced model with a much shorter runtime. This has been possible for the power delivery network (PDN) for some time, but advances in channel model reduction now retain the required accuracy while modeling the whole system from end to end: die, package, PCB, memory. Previous-generation simulation technology is simply inadequate to simulate full I/O banks (128+ bits) in a reasonable time. So accurate modeling is the key to successful high-speed (17Gb/s) interface designs.

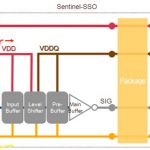

I/O buffer performance is highly susceptible to on-die power noise. I/O buffers firing simultaneously draw current from the power supply in sharp increments, resulting in voltage drop and a shared fluctuation of the effective supply level (Vdd-Vss). To take this into account, the power/ground routing of the I/O ring must also be modeled. A chip power model built with power/ground extraction and reduction technology provides a compact model of the resistive, capacitive, and inductive coupling of the I/O ring power grid.
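To get a feel for why the number of buffers firing together matters, here is a rough first-order sketch in Python. It is not how a real tool models the I/O ring: it assumes one lumped inductance per rail shared by every buffer, a linear current ramp, and no resistance or decoupling capacitance, so it overstates the noise. The function name and all the default values are made up for illustration.

```python
def effective_supply(n_switching, vdd_nominal=1.5, l_vdd=20e-12, l_vss=20e-12,
                     i_peak=10e-3, t_rise=100e-12):
    """First-order estimate of the supply seen by the I/O buffers (Vdd - Vss).

    Assumptions (illustrative only): each switching buffer ramps its current
    linearly from 0 to i_peak in t_rise, and all buffers share one lumped
    inductance on each rail. Resistance, decap and coupling are ignored.
    """
    di_dt = n_switching * i_peak / t_rise   # total current slew rate, A/s
    vdd_droop = l_vdd * di_dt               # V = L * dI/dt on the Vdd rail
    vss_bounce = l_vss * di_dt              # same relation on the Vss rail
    return vdd_nominal - vdd_droop - vss_bounce


# One byte switching versus a full 128-bit bank switching together.
for n in (8, 64, 128):
    print(f"{n:3d} buffers switching: effective supply ~ {effective_supply(n):.3f} V")
```

Even in this crude picture the droop grows with the total number of outputs switching at once, which is why analyzing one byte at a time can badly underestimate the noise that a full bank generates.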

SPICE is still the de facto simulation engine for DDR signal and power integrity simulation. A tool such as Apache’s Sentinel-SSO bundles these modeling technologies together in a single interface designed for signal integrity. The tool automates the netlisting and connection of the models and feeds the result into the SPICE simulator of choice. After simulation, the timing, jitter, and noise waveforms and metrics are displayed for review. Previously, full-channel SSO simulations of the chip, package, and PCB were unobtainable. Now designers have modeling technology that lets them sign off their systems with reasonable turnaround times.
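As a toy illustration of what automated netlisting means, the Python sketch below generates a small SPICE deck in which several drivers pull current through one shared package inductance. It is only a sketch under simplifying assumptions: the drivers are plain pulsed current sources, the element names and values are invented, and it says nothing about the format or the reduced models that Sentinel-SSO actually produces.

```python
def sso_netlist(n_drivers, vdd=1.5, l_pkg="0.5n", c_die="100p",
                i_peak="10m", t_rise="100p", t_stop="5n"):
    """Return a toy SPICE deck: n_drivers switching together off a shared rail.

    All names and values are hypothetical. Each driver is approximated by a
    pulsed current source drawing from the on-die I/O supply node 'vddio'.
    """
    lines = [
        f"* toy SSO deck with {n_drivers} drivers (illustrative only)",
        f"VSUP vsup 0 DC {vdd}",          # ideal board-level supply
        f"LPKG vsup vddio {l_pkg}",       # lumped package inductance
        f"CDIE vddio 0 {c_die}",          # lumped on-die decoupling
    ]
    for i in range(n_drivers):
        # PULSE(initial peak delay rise fall width period); all fire at 1 ns
        lines.append(
            f"I{i} vddio 0 PULSE(0 {i_peak} 1n {t_rise} {t_rise} 1n 4n)"
        )
    lines.append(f".tran 10p {t_stop}")   # transient analysis
    lines.append(".end")
    return "\n".join(lines)


print(sso_netlist(4))
```

The resulting text can be saved to a file and handed to a SPICE simulator, with the voltage at `vddio` showing the shared supply noise. A production flow would connect extracted PDN, channel, and I/O buffer models rather than ideal sources.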