Evolving opportunities call for new and improved solutions to handle data, bandwidth and power. Moving forward, what will be the high-growth applications that drive product and technology innovation? The CAGRs for smartphone and data center continue to be very strong and healthy.

Continue reading “FinFET For Next-Gen Mobile and High-Performance Computing!”

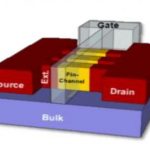

Advanced Micro Devices’ New Polaris FinFET-Based Architecture Could Open New Doors

It seems of late like there is an unlimited thirst for GPU performance at the right power efficiency. Whether it is deep learning, object recognition, artificial intelligence, simulations, VR or AR, the industry desperately needs GPU improvements. Many within the graphics industry would agree that a new era of graphics performance and efficiency is upon us. This new era is partly thanks to the fact that the entire industry is finally making the transition from the 28nm process node down to the 14 and 16nm FinFET (3D transistor) process nodes, a full process node shrink. It’s part process but it’s also architecture.

Advanced Micro Devices (AMD) Radeon Technologies Group’s (RTG) newest GPU architecture, codenamed Polaris, is the company’s latest architecture that is specifically designed with FinFET in mind enabling higher levels of performance while using significantly less power and using a smaller chip. This should translate to some extremely impressive power and performance improvements that could inevitably drive improved value to consumers while also improving yields and hopefully margins of the companies that make those GPUs.

The FinFET shrink to 14 and 16nm has been a long time coming: the last shrink, to 28nm, happened in 2011, four years ago and an eternity in the semiconductor industry. Back in 2011, when AMD’s Radeon 7900 series, the first 28nm GPU, was announced, the most popular smartphones were the Galaxy S2 and iPhone 4. That’s how long it has been. So you can imagine why the entire industry is excited to make the transition to 14nm. It has probably felt like an eternity for them; I know it has for me. There was originally a promise that 20nm could serve as a half-node shrink between 28nm and 14/16nm FinFET, but the reality was that the process node was not good for multi-billion transistor GPUs, and both Advanced Micro Devices and NVIDIA were forced to skip 20nm. So here we are in 2015, still using 28nm, with both Advanced Micro Devices and NVIDIA on their 3rd and 4th generations of 28nm GPUs and constantly looking for ways to improve performance without increasing power consumption.

This leads us to where AMD is now. AMD’s Polaris architecture is built with FinFET transistors from TSMC and GlobalFoundries. The reason AMD hasn’t clearly stated whether its GPUs are 14nm or 16nm is that it has been working with both TSMC and GlobalFoundries, whose FinFET processes are named differently. GlobalFoundries’ process is based on Samsung’s 14nm process and combines Samsung’s low-power expertise with GlobalFoundries’ high-performance expertise to hopefully deliver a very efficient and powerful GPU. TSMC has traditionally been AMD’s and, before AMD, ATI’s fab of choice. TSMC’s FinFET process is a 16nm process, but for all intents and purposes 14nm and 16nm FinFET are pretty much interchangeable in terms of power savings when compared to 28nm. We’ll have to wait to see real products to see if that’s really the case.

No two companies’ process nodes are identical, so the reality is that we could see TSMC’s transistors deliver slightly better power efficiency while GlobalFoundries’ could deliver a physically smaller chip with slightly more performance, as was seen when Apple dual sourced its A9 from TSMC and Samsung. AMD is stating that FinFET offers a 20-35% improvement in performance over 28A planar, which is a huge boost, with much less variation than planar, meaning much more reliable yields and performance. This performance improvement comes in conjunction with a power reduction of up to 50-60% compared to 28A planar, which is also massive when you consider what that can mean for GPUs in mobile form factors and servers. All of this results in AMD making the biggest performance per watt jump in the history of AMD GPUs, which includes all of ATI’s history as well.

AMD RTG’s new Polaris architecture, which AMD is calling an SoC Architecture, has a new GPU macro architecture which features their new 4th generation of their GCN (Graphics Core Next) compute units as well as support for HDMI 2.0a, DisplayPort 1.3 and 4K H.265 encode and decode. AMD claims that their Polaris GPU is really an SoC architecture because “GPUs are more than just Graphics IP.” They claim the SoC definition because of the collection of different cores and engines which include multi-media, display, caches, memory controllers and power management. Even so, there are a lot of components inside of a GPU and we can all agree that they are only becoming more complex and capable with every generation. Part of that comes from all of the improvements that are made over time. With Polaris, these improvements are being made almost across the board with a new geometry processor, new command processor, new multimedia cores, new L2 cache, new memory controller, new display engine and, as stated earlier, a new 4th generation of GCN compute cores.

All of these improvements should translate to some pretty impressive performance and power figures, as mentioned earlier. Because this new architecture is coming with a massive node shrink, the end result is the biggest performance per watt improvement in the company’s history. The company actually showed this off in a side by side demo back in December, when it invited selected press and analysts to Sonoma to talk about the future of RTG. There, they showed AMD RTG’s Polaris architecture playing Star Wars Battlefront at 1080P versus a competitor.

In the demo they capped both GPUs at 60 FPS and compared power consumption, showing that the competitor’s part, which they didn’t expressly name, drew roughly 60% more power than theirs. The Polaris GPU ran at 86W while the competitor part ran at 140W, which is a huge difference and a massive improvement. However, they did not disclose exactly which competitor GPU they were comparing against, so we can’t know exactly how recent the comparison is. Additionally, the competitor’s part is a 28nm GPU, so it will very likely be more power hungry in almost any scenario. Even so, 86W for 60 FPS 1080P gaming in a game as beautiful as Star Wars Battlefront is absolutely amazing. The key here is not a competitive analysis, but the improvements from the architecture, new process, and improved transistor geometry.
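To put the demo figures in perspective, here is a minimal sketch of the arithmetic, using only the 60 FPS cap and the 86W and 140W readings quoted above. The function name and the "frames per joule" framing are illustrative assumptions, not something AMD presented.

```python
def frames_per_joule(fps: float, watts: float) -> float:
    """Frames rendered per joule of energy: FPS divided by power in watts."""
    return fps / watts


# Figures quoted from the demo: both GPUs capped at 60 FPS.
FPS_CAP = 60.0
POLARIS_WATTS = 86.0
COMPETITOR_WATTS = 140.0

polaris_eff = frames_per_joule(FPS_CAP, POLARIS_WATTS)
competitor_eff = frames_per_joule(FPS_CAP, COMPETITOR_WATTS)

# With the frame rate held equal, the efficiency ratio is just the power ratio.
power_ratio = COMPETITOR_WATTS / POLARIS_WATTS
efficiency_gain = polaris_eff / competitor_eff
power_saving = 1.0 - POLARIS_WATTS / COMPETITOR_WATTS

print(f"Competitor draws {power_ratio:.2f}x the power of the Polaris part")
print(f"Polaris delivers {efficiency_gain:.2f}x the frames per joule")
print(f"Polaris uses {power_saving:.0%} less power at the same frame rate")
```

Because the frame rate is capped, the comparison says nothing about peak performance; it isolates power draw at a fixed workload.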

Advanced Micro Devices (AMD) isn’t positioning the Polaris architecture at launch as an architecture that will be the fastest on earth, which is interesting. It is a change of strategy and positioning on the part of the company which I believe is a good one. Constantly trying to prove that you have the fastest graphics card on earth isn’t going to actually move graphics cards in volumes. The reality is that the middle of the product stack is what moves GPUs and if your focus is on the middle and upper middle of the market at first and delivering the best value there you have the best opportunity to increase sales, profitability and market share. On the other hand, AMD doesn’t have anything to fall back on if they miss the mark in the middle, and that’s a risk.

Advanced Micro Devices’ RTG is saying that they expect Polaris to enable new kinds of products and ship in thin and light notebooks, small form factor desktops and discrete graphics cards with fewer power connectors. As we always see with new nodes, it’s always easier to launch the smaller, less complex GPUs that deliver better value and performance per watt and then follow up with the bigger, more powerful GPUs. Additionally, the real killer applications for Polaris, I believe, are in the notebook form factors where power is always a concern and where discrete GPUs are still needed as resolutions continue to hike upward. Notebooks are so power and thermally constrained that such massive reductions in power consumption will translate to MUCH more powerful notebook GPUs which could broaden the gap between tablet and notebook performance. Products based on AMD’s new Polaris Architecture are expected to be available in mid-2016, roughly when we could also see a potentially competitive product from NVIDIA as well.

How to handle petabyte-scale traffic growth?

If you search the web for IP traffic growth, you will find many charts, but the common finding is that IP traffic has been growing at a high CAGR for many years and will continue to do so for the next five years. For example, global mobile data traffic is expected to grow at a 53% CAGR from 2015 to 2020, even if smartphone shipments themselves see more modest growth. Before ending up in a data center, this data traffic will pass through networking and telecom infrastructure.
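A quick sketch shows what a 53% CAGR means once it compounds over the 2015-2020 window. The helper names are hypothetical and the calculation is just the standard compound-growth formula.

```python
import math


def growth_multiple(cagr: float, years: int) -> float:
    """Total growth factor after compounding `cagr` for `years` years."""
    return (1.0 + cagr) ** years


def doubling_time_years(cagr: float) -> float:
    """Years needed for traffic to double at a constant annual growth rate."""
    return math.log(2.0) / math.log(1.0 + cagr)


MOBILE_CAGR = 0.53  # 53% per year, as quoted for 2015-2020
YEARS = 5

multiple = growth_multiple(MOBILE_CAGR, YEARS)
doubling = doubling_time_years(MOBILE_CAGR)

print(f"Traffic in 2020 is about {multiple:.1f}x the 2015 level")
print(f"Traffic doubles roughly every {doubling:.2f} years")
```

At this rate mobile traffic grows more than eightfold in five years, which is why the infrastructure silicon discussed next has such aggressive bandwidth targets.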

SoCs for backhaul equipment have to be designed to support this 20% increase of global IP traffic every year. The performance of these SoCs has to be on the bleeding edge, both in terms of data bandwidth, which is needed to exchange large amounts of data, and minimum latency, which is needed to support intensive computation. The SoC area has to be pushed to the ASIC technology limit, but the chip has to be kept economically viable. As a last imperative requirement, the Time-To-Market (TTM) has to be as short as possible because the bandwidth demand is growing so fast. All of the above translates into very aggressive SoC specifications and design requirements.

One of NetSpeed’s customers is a market leader in the networking segment and the company has to launch new designs as frequently as needed to support the rapid data bandwidth expansion. To optimize design resources, architecture and front-end design were done by the customer and the back-end by a 3rd-party ASIC vendor. The previous approach for SoC interconnect design was based on spreadsheet analysis. It was too time consuming, leading to major issues that severely impacted the schedule, including a long iterative loop with the ASIC vendor and last-minute bug discoveries which forced late design changes. The company made an exhaustive review of the other NoC solutions and NetSpeed’s NoC IP was selected because of its more efficient packet processing architecture.

What are the design challenges for this 7th generation networking SoC? They are mostly linked with performance, in terms of a frequency increase and a bandwidth 4X that of the previous generation, SoC area (cost) and TTM for this extra-large SoC.

Selecting NetSpeed’s NocStudio, with its interconnect synthesis engine, had a major impact on the project schedule because it provided a very fast turnaround, 10x faster than before. As an example, the interconnect RTL, C++ and test bench generation took minutes instead of the 6 to 8 months previously required! Such a fast turnaround time when using NocStudio allowed rapid SoC-level architecture modeling and performance analysis that was ideal for optimizing the network for the company’s requirements.

Managing and avoiding deadlocks is the new frontier for complex, multi-core SoCs. Using patented algorithms and formal methods to design NoCs that are correct-by-construction, NocStudio generated an architecture that was deadlock-free at the application level.
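NetSpeed’s patented algorithms are not described here, so the following is only a sketch of the classic textbook criterion for routing-level deadlock: a deterministic routing function is deadlock-free if its channel dependency graph has no cycles. The channel names and the `has_cycle` helper are hypothetical, and application-level (protocol) deadlock, which the paragraph above refers to, needs additional analysis beyond this check.

```python
from typing import Dict, List

WHITE, GREY, BLACK = 0, 1, 2  # unvisited, on the current DFS path, finished


def has_cycle(deps: Dict[str, List[str]]) -> bool:
    """Return True if the channel dependency graph contains a cycle.

    `deps[c]` lists the channels a packet holding channel `c` may request next.
    """
    color: Dict[str, int] = {}

    def visit(node: str) -> bool:
        color[node] = GREY
        for nxt in deps.get(node, []):
            state = color.get(nxt, WHITE)
            if state == GREY:  # back edge: a circular wait is possible
                return True
            if state == WHITE and visit(nxt):
                return True
        color[node] = BLACK
        return False

    return any(color.get(n, WHITE) == WHITE and visit(n) for n in deps)


# A four-channel ring: each channel can wait on the next one, forming a cycle.
ring = {"c0": ["c1"], "c1": ["c2"], "c2": ["c3"], "c3": ["c0"]}

# The same ring with the c3 -> c0 dependency removed (for example by a routing
# restriction), which breaks the cycle.
restricted = {"c0": ["c1"], "c1": ["c2"], "c2": ["c3"], "c3": []}

print("ring can deadlock:", has_cycle(ring))
print("restricted ring can deadlock:", has_cycle(restricted))
```

A correct-by-construction flow would only ever emit routing whose dependency graph passes a check of this kind, rather than discovering cycles late in simulation.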

Throughput is a measure of how much actual data can be sent per unit of time across a network, channel or interface. While throughput can be a theoretical term like bandwidth, it is more often used in a practical sense, for example, to measure the amount of data actually sent across a network in the “real world”. At the design stage, architects worked to increase SoC bandwidth by 4X from the previous generation. When the SoC is integrated in a networking system, we can expect the throughput to improve by the same factor, if not more.

NetSpeed interconnects can be optimized to reduce wire lengths and buffer count. Reducing wires and buffer count has a direct impact on both power consumption and area. In this particular case, using NetSpeed’s solution led to a 25% reduction in wires, which allowed the company to avoid an expensive metallization level.

Finally, because NetSpeed’s solution dramatically reduces development time, it has helped the company to optimize valuable human capital by allowing it to offload key architects sooner so they can focus on other areas of the SoC.

This blog is extracted from NetSpeed “Networking” Success Stories. You can read more about this story and Mobile AP, Automotive SoC, Networking, Digital Home SoC or Data Center Storage stories here

By Eric Esteve of IPNEST

More articles from Eric…

Emerging Smartphone Display Insights from Patents

US201403549 illustrates a display that includes multifunctional pixels. Each multifunctional pixel can include several display areas as well as sensors. Combining visual display technology with sensors enables touchscreen operation by a finger, stylus or other object positioned on or near the visual display, as well as the acquisition of fingerprint information from a finger placed on the display, or of other biometric information. For example, with an ultrasonic sensor, biometric information about a fingerprint including fingerprint ridges and valleys can be obtained. A stylus or stylus tip can be detected when positioned on or near the multifunctional pixel.

US9122349 illustrates a new image sensor panel that can be easily integrated with a display panel and can capture graphical, textual, or other information from the two-dimensional surface of an information bearing substrate without having to focus. The new image sensor panel enables a smartphone display screen to scan business cards and documents and to capture fingerprints.

US20150220197 illustrates a touch display with a 3D force sensor that is able to simultaneously sense the magnitude of a force and the 3D tilting angle of the force when touching the force sensor. The 3D force sensor can detect its orientation and the magnitude and 3D direction of a force applied to its surface. The force can be non-parallel and non-orthogonal to the surface of the 3D force sensor. Several 3D force sensors are simultaneously used to detect the orientation of an object and the magnitude and 3D direction of the forces applied to the object. Several 3D force sensors also can be used to detect the tilting of a vertical or horizontal stacking of the objects relative to one another. Measurement of the 3D tilting angle of the force when the force is non-parallel and non-orthogonal to the surface can be used in many electronic device applications. For example, in a gaming application, if the magnitude of a force represents a speed of a movement, the 3D tilting angle of the force can represent the direction of the movement in three dimensions on the computer display.

Typically, the display area of a smartphone is not sufficient to allow whole documents or pages to be displayed and read with ease. US20150198978 illustrates a detachable flexible display to allow a user to view and manipulate documents. When attached to the smartphone, the display can be wrapped around the smartphone when the smartphone is not being used, and can be unwrapped when required to view documents. Alternatively, the display device can be detached from the smartphone and used as a standalone display.

US20150220058 illustrates a hologram display smartphone that can display a holographic image without a separate light source (laser diodes, light emitting diodes, organic light emission diodes) for illuminating the hologram.

More articles from Alex…

To EUV, or not to EUV, that is the question!

SPIE is next week so if you would like to meet me in person that is where I will be. SPIE is the big lithography and patterning conference for semiconductor professionals. Since I work with the foundries during my day job, SPIE is an important conference. SemiWiki blogger Scott Jones will also be there. During the day Scott does semiconductor costing and pricing models so this is a big event for him too.

Technologies for semiconductor lithography R&D, devices, tools, fabrication, and services.

This year the hot topic is EUV of course. As we get closer to 7nm and 5nm the pressure is really on because the window of EUV opportunity is closing quickly. It will also be interesting to see what the different camps are doing (Intel, TSMC, Samsung, GlobalFoundries). The most recent news has GlobalFoundries and SUNY Poly working together on a $500M R&D program in upstate New York. IBM and Tokyo Electron are also part of this deal.

I find this interesting for several reasons. First and foremost, it is part of Governor Cuomo’s commitment to maintaining New York State’s global leadership in nanotechnology research and development. Semiconductors are critical to our infrastructure and national security so it is nice to see a politician acknowledge the importance of R&D. The current presidential candidates seem to have missed this very important detail. If you want to make America great again you had better include semiconductors in your platform, right?

“GLOBALFOUNDRIES is committed to an aggressive research roadmap that continually pushes the limits of semiconductor technology. With the recent acquisition of IBM Microelectronics, GLOBALFOUNDRIES has gained direct access to IBM’s continued investment in world-class semiconductor research and has significantly enhanced its ability to develop leading-edge technologies,” said Dr. Gary Patton, CTO and Senior Vice President of R&D at GLOBALFOUNDRIES. “Together with SUNY Poly, the new center will improve our capabilities and position us to advance our process geometries at 7nm and beyond.”

This also tells me that GlobalFoundries is still committed to leading edge process development. Did you notice that Gary Patton did not mention 10nm? During my visit to Malta last fall I strongly suggested GF skip 10nm and go straight to 7nm. There has been no official announcement but do not be surprised if you see one coming soon because 10nm will be the next 20nm (short life node), absolutely.

The other thing I’m interested in next week is learning more about EUV and the different implementations. Clearly Intel, TSMC, Samsung, and GlobalFoundries are not sharing EUV techniques, or are they sharing through ASML? Does anybody know the answer to this? Clearly Intel will focus EUV (pun intended) on their microprocessor business. But will that implementation also be used for Intel SoCs and FPGAs? How about Memory? Will Samsung use EUV for memory and SoCs? And which one of TSMC’s many customers will benefit the most from EUV? How about Apple? Will EUV have the throughput required for the many millions of Apple SoCs?

Inquiring minds want to know!

More articles from Daniel Nenni

Why Apple’s Fight is Fruitless!

The article suggested by LinkedIn is titled “Should Apple fight a court order to unencrypt iPhones? #unlockiPhone”. However, my question is this: why didn’t the FBI just go to the NSA for what they needed? Why didn’t the FBI just hire the latest hacker who was able to hack the iPhone and give him the same deal other hackers before him got? Jail or a nice cushy job while he’s on probation for his ‘cooperation’? Why didn’t the FBI just recruit one of Apple’s software engineers? I’m sure at least ONE of them would take the bite…ummm, job.

Continue reading “Why Apple’s Fight is Fruitless!”

New CEVA X baseband architecture takes on multi-RAT

What we think of as a “baseband processor” for cellular networks is often comprised of multiple cores. Anecdotes suggest that, to handle the different signal processing requirements for 2G, 3G, and 4G networks, some SoC designs use three different DSPs plus a control processor such as an ARM core. That’s nuts. What is the point of having a DSP core if you have to have 3 of them, plus another core for control? Continue reading “New CEVA X baseband architecture takes on multi-RAT”

Tesla’s Secret Weapon!

Some CEOs make things look easy and appear to enjoy their work. CEOs like Richard Branson and Warren Buffett come to mind. These are guys who clearly enjoy what they are doing and, as a result, make everything they do look effortless and fun.

Tesla’s Chairman and CEO, Elon Musk, is another one of these types. There is an impishness in his communication of the company’s performance that conveys the overwhelming impression that no matter how bleak or dangerous the financial circumstances of his company may look, he has a plan that will ensure that everything works out fine for investors and customers alike.

Musk’s blithe spirit derives from his direct ownership of the customer relationship.

Tesla reported earnings last week. It is safe to say that financial analysts viewed those results as falling somewhere on a spectrum between disappointing and disastrous. But Musk skillfully evinced sanguinity. Analysts had expected a small profit, but instead were told of a tripling of the company’s net loss to $320.4M with an operating loss of $113.9M for the company’s 2015 fourth quarter. Tesla says it can achieve a net profit within 12 months.

Tesla’s fourth quarter sales rose 27% to $1.21B relative to the year-ago period. The company’s full year net loss was $889M on sales of $4.05B. Tesla said it sees deliveries of Model S sedans and Model X SUVs reaching between 80,000 and 90,000 in 2016, with Model X weekly production expected to peak at around 1,000 a week in the second quarter.

Musk’s serenity derives from a fundamental advantage he holds over the entire automotive industry. Tesla owns and controls its customer relationships directly.

The power of this control is manifest in the over-the-air software update strategy that Tesla has pioneered and perfected. While the rest of the automotive industry wrestles with dealer franchise agreements that limit the ability to directly update vehicle software, Tesla is able to nimbly plunge ahead with added features and functions long after the vehicle sale.

In fact, Tesla has been able to avoid vehicle recalls and has been able to charge thousands of dollars for performance upgrades, such as Autopilot, delivered via over-the-air software updates. Competing incumbent automakers have mastered this technology, but have been constrained in deploying it by dealer agreements which generally require that software updates be performed by the dealers.

Of course, owning the customer relationship has even greater implications in the context of Tesla building wireless connections into all of its cars. Tesla is mastering the process of continuous improvement of its existing cars while working on new cars.

In the earnings call last week Musk noted that the company continues to identify approximately 20 Model S enhancements every week. “Mostly, these are little tiny nuance things that most people wouldn’t notice,” said Musk (as transcribed by SeekingAlpha – http://tinyurl.com/zkhum82). “But, it is a continuous improvement process. That’s why I say, when people say, when should they buy Model S? Like what model year? It’s like, we don’t really have model years. We keep improving the car. If you want to wait until the car stops improving, you’ll be waiting forever.”

Reliability is improving steadily as well. In his letter to investors, Musk wrote: “The cost of first year repair claims for cars produced in 2015 was at about half the level of cars produced in 2014, and about one quarter the level of cars produced in 2012.”

Of course, the theme of constant improvement was reflected throughout the earnings call with emphasis on cost reductions targeted at improving overall profit margins. It is clear that unambiguous customer ownership facilitates data collection and analysis – giving Musk a fundamental market advantage.

Competing car makers must guess or depend on third-party survey organizations to determine whether they are achieving improvements in reliability and customer satisfaction. Tesla owners, on the contrary, feel a direct link to Musk himself. He is speaking directly to them, even if his audience is financial analysts on an earnings call.

The data collection engine that underpins Tesla’s product development and customer and vehicle relationships is reflected in the Autopilot launch. In the investor letter Musk noted: “Our customers are now driving over 107,000 Tesla vehicles in 42 countries and have traveled nearly two billion miles. Tesla’s Autopilot (which is not present in all 107,000 vehicles) is learning at the rate of over a million real world miles per day.”

The dealer-centric world of incumbent automakers is not only hampered by the customer ownership conflict (Who owns the customer? The dealer or the car maker?); it is also driven by the demands of sales, marketing, incentives and advertising.

Traditional car dealers have to move the metal. With vehicle sales hitting record levels in the U.S., these demands have only increased.

Tesla doesn’t advertise and its prices are fixed. Nevertheless, Tesla is outselling all cars in its class with the smallest dealer network, no advertising and no haggling.

In fact, the demand-driven nature of Tesla’s sales is reflected in Tesla’s limiting of access to the Model X for test drives while the company was sorting out kinks in the production process. The message to analysts: Tesla did its best to tamp down interest in the car rather than stimulating demand. “There’s no channel to stuff,” said Musk, speaking more generally, “because there is no channel.”

Constant improvement, customer ownership, data collection and analysis are all hallmarks of the not-so-secret weapon of vehicle connectivity deployed by Tesla. Whether or not Tesla can build, sell and maintain cars profitably remains to be seen, but Tesla has mastered the customer relationship fundamentals.

Competitors know that customer ownership is a much bigger hill to climb than power density. Tesla is gradually converting that hill into an impenetrable wall as it prepares for a 2017 launch of the Model 3.

More articles from Roger…

FinFETs, Power Integrity and Chip/Package Co-design

FinFETs have brought a lot of good things to design – higher performance, higher density and lower leakage power – promising to extend Moore’s law for at least a while longer. But inevitably with new advances come new challenges, especially around optimizing for power integrity in these designs.

One of these challenges is around power noise. FinFET transistors have high drive strengths; combine that with higher transistor densities and you have higher switching current densities. And because FinFETs enable larger designs, dark silicon (power gating) and voltage scaling become more important across more domains to keep power consumption low. And that leads to higher peak currents (inrush when you turn a domain on, for example). Of course all of this clever power switching and voltage scaling has to be supported by an increasingly complex power distribution system with multiple voltage domains (100+ in some cases) and power gate switches.

These factors drive higher voltage drops in the supply grid, and those voltage drops are more consequential because you are running at lower supply voltages in these technologies. If you compare the power integrity design challenge with a less aggressive technology where you might get 100mV swings on a 1V supply (a 10% swing), now you might face 150mV swings on a 700mV supply (a 21% swing), leaving you with a much lower margin for error. Your dynamic voltage drop (DvD) analysis has to be very complete and very accurate.
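The comparison above is simple arithmetic, sketched here with the swing and supply values from the text. The helper names are illustrative only, and the "worst-case rail" is just the nominal supply minus the swing, not a figure from any tool.

```python
def swing_fraction(swing_mv: float, supply_mv: float) -> float:
    """Voltage swing expressed as a fraction of the nominal supply."""
    return swing_mv / supply_mv


def worst_case_rail_mv(swing_mv: float, supply_mv: float) -> float:
    """Lowest voltage a transistor sees if the full swing is a drop."""
    return supply_mv - swing_mv


# (swing in mV, nominal supply in mV), as quoted in the text.
older_node = (100.0, 1000.0)
finfet_node = (150.0, 700.0)

older_pct = swing_fraction(*older_node)
finfet_pct = swing_fraction(*finfet_node)

print(f"Older node:  {older_pct:.1%} swing, "
      f"rail can sag to {worst_case_rail_mv(*older_node):.0f} mV")
print(f"FinFET node: {finfet_pct:.1%} swing, "
      f"rail can sag to {worst_case_rail_mv(*finfet_node):.0f} mV")
print(f"Relative swing is {finfet_pct / older_pct:.2f}x larger on FinFET")
```

The relative swing more than doubles even though the absolute swing grows by only 50mV, which is why the analysis has to be both complete and accurate.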

This noise issue has become so critical that it is now important to include a per-bump model of the package along with the chip because you really need to co-analyze and co-optimize package and die power and ground networks. That increases the scale of the simulation and analysis task.

Electromigration and self-heating in FinFETs present additional challenges in reliability. Higher drive strengths and thinner wires directly increase EM problems in driven wires, but self-heating raises temperatures around the transistor (since oxide layers isolating the fins limit heat dissipation), and that is thought to potentially affect reliability in neighboring interconnects. (ChipGuy has more on this topic in SemiWiki). So now you need to do not only EM analysis but also thermal-aware EM analysis.

Finally, there’s the usual problem: bigger designs, more complex analysis, so longer run-times. Ansys has analysis solutions in RedHawk for all the problems I have described, but has also made significant advances in distributed multiprocessing (DMP). They already had DMP for simulation, now this has been extended to extraction and data processing and they have been able to demonstrate a 10X speedup on a 1B transistor design. Faster is always good of course, but the impact is more important than that. This level of speedup enables simulating longer time periods which is especially important when checking board integration expectations.

RedHawk has been the leader in the power integrity space for over 10 years with 2000+ tapeouts at multiple technology nodes. You can learn more about the latest advances in RedHawk analysis by registering for this WEBINAR.

Internet of Things - Keeping the Best Things First

The growth of the Internet of Things, along with embedded and wearable devices, will have widespread and beneficial effects by 2025. All of these exponentially growing technologies (artificial intelligence, robotics, nanomaterials, biotech, bioinformatics, quantum computing, the Internet of everything) are fields that are going to transform everything we have. Digital technologies such as mobile, social media, smartphones, big data, predictive analytics, and cloud, among others, are fundamentally different from the preceding IT-based technologies. Newer technologies touch the customers directly and in that interaction create a source of digital difference that matters to value and revenue. We call that source a digital edge.

According to Cisco, there are currently 10 billion things (phones, PCs and other devices) connected to the Internet. That is less than one percent of the devices and things that exist right now. There are over one trillion devices out there right this very minute that are not talking to the Internet, but soon enough they will be. Some 6.4 billion connected “things” will be in use worldwide in 2016, up 30% from 2015, according to a new report from Gartner Inc.

In 2016, 5.5 million new things will get connected every day, and total connected things will reach 20.8 billion by 2020, the report says. Gartner estimates that the Internet of Things (IoT) will support total services spending of $235 billion in 2016, up 22% from 2015.

The Internet of Things represents an emerging reality where everyday objects and devices are connected to the Internet, most likely wirelessly, and can communicate with one another at some intelligent level. It is also assumed that, as the physical and digital worlds integrate more closely with each other, and the number of connected devices is predicted to reach 25 billion by 2016, the IoT will enhance and evolve our ability to manage and process information.

1. IoT will touch nearly every area of utility operations

The IoT is at the center of this transformation. It connects assets, people, products, and services to streamline the flow of information, enable real-time decisions, heighten asset performance, mitigate supply chain risks, empower people, and help ensure product quality and consistency. Leading utility companies are investing billions in the IoT and realizing returns that include increased overall equipment effectiveness, reduced cost of quality and compliance, improved customer service, and increased return on innovation. They are beginning to transform their business practices and recognize that, in time, the IoT will touch nearly every area of utility operations and customer engagement.

The IoT will connect formerly unconnected things to the Internet, bring new ways to automate processes, and provide a slew of data that needs to be analyzed. In my experience, when a new trend is breaking, it is often the more mundane applications and use cases that appeal to the public, perhaps because of the underlying complexity of the idea. People want to analyze vast amounts of data and be able to do things with that information that are relevant and impactful. Over the past few years, the definition of “Smart Cities” has evolved to mean many things to many people. Yet one thing remains constant: part of being “smart” is utilizing information and communications technology and the Internet to address urban challenges.

2. Other examples of Internet of Things activities include:

(A) Smart cities where ubiquitous sensors and GPS readouts allow for vastly smoother flows of traffic, with warnings and suggestions to commuters about the best way to get around traffic, perhaps abetted by smart alarm clocks synched to their owners’ eating and commuting habits and their day-to-day calendars.

(B) Remote control apps that allow users’ phones to monitor and adjust household activities—from pre-heating the oven to running a bath to alerting users via apps or texts when too much moisture or heat is building up in various parts of the home (potentially alerting users to a leak or a fire).

(C) Subcutaneous sensors or chips that provide patients’ real-time vital signs to self-trackers and medical providers.

(D) Vastly improved productivity in manufacturing at every stage, as supply chain logistics are coordinated.

(E) Sensored roadways, buildings, bridges, dams and other parts of infrastructure that give regular readings on their state of wear and tear and provide alerts when repairs or upgrades are needed.

(F) Paper towel dispensers in restrooms that signal when they need to be refilled. Municipal trash cans that signal when they need to be emptied. Alarm clocks that start the coffee maker.

(G) Smart appliances working with smart electric grids that run themselves or perform their chores after peak loads subside.

We will wear smart clothes and smart things. The world will also be thick with smart things, including products for sale that communicate what they are, what they cost, and much more. Moderating between ourselves and the rest of the world will be systems of manners. So, for example, we might wear devices that signal an unwillingness to be followed, or to have promotional messages pushed at us without our consent. Likewise, a store might recognize us as an existing customer with an established and understood relationship. Google Glass today is a very early prototype and has few, if any, social manners built in, which is why it freaks people out.

3. Internet of Things will be a transformational trend over the next five to ten years

The Internet of Things will bring with it a whole new explosion of data that, if managed correctly, can be of enormous value to the contact centre and customer experience delivery. Contact centres will be able to gain more control of customer service as the Internet of Things provides them with new streams of information that are integrated into their existing infrastructure. Needless to say, many expect that a major driver of the Internet of Things will be incentives to try to get people to change their behavior: maybe to purchase a good, maybe to act in a more healthy or safe manner, maybe to work differently, maybe to use public goods and services in more efficient ways. Even with these challenges, we think the Internet of Things will be a transformational trend over the next five to ten years. And we think that companies’ ability to adapt and thrive in this new era of the Internet of Things is very likely to determine who the next set of winners and laggards will be in this new connected age. But if you step back and look at this from the overall perspective of our society as a whole, we also think that there are going to be significant benefits to human safety, to our health, and to the environment.