SiFive held a RISC-V Technology Symposium on February 26 at the Computer History Museum in Mountain View. Keith Witek, SiFive SVP of Corporate Development and Strategy, kicked off the event and introduced the first keynote speaker, Martin Fink, Western Digital CTO and, at the time, acting CEO of the RISC-V Foundation (as of this writing, Calista Redmond has just been appointed the new CEO of the RISC-V Foundation). Fink shared a slide showing the growing RISC-V ecosystem, from tools vendors to IP and semiconductor chip providers to design and foundry services. He stated that, moving forward, the areas of focus will include standards and specifications, ecosystem growth, awareness and education.

Sunil Shenoy, SVP of the RISC-V IP BU, outlined how SiFive is positioned to lead the semiconductor design revolution: a global presence with 12 offices, 320+ employees and 300+ tape-outs so far; expertise in cloud chip design, RTL, physical design, silicon and design platforms; a nucleus of the key RISC-V inventors; and a deep pool of technical and management talent.

Industry adoption of RISC-V is growing: Western Digital is transitioning 1 billion+ cores per year to RISC-V, NVIDIA plans to use RISC-V in all future GPUs, India is adopting RISC-V as its national ISA, and US DARPA is mandating RISC-V in recent security proposals. Design wins are occurring in microcontrollers, wearables, networking, communications and storage.

The current challenges in designing minimum viable hardware products are cost, time and expertise. Developing a chip at a leading-edge technology node currently costs $500M+ for 7nm, takes 2 to 4 years and requires numerous experts in at least 14 disciplines.

To accelerate the innovation cycle and address slow development, rising cost and a growing dependency on many experts, SiFive is reshaping the silicon business by enabling a free and open instruction set architecture, simplifying custom silicon development with templates, and providing easy access to design in the cloud.

SiFive offers Core IP product series (E, S, 2/3/5/7 Series, and U 5/7 Series), with 32-bit and 64-bit embedded cores (some multi-core capable) and 64-bit high-performance or multi-core application cores. The graph above summarizes the core portfolio by application, performance and cost targets. Embedded RISC-V development can occur on platform boards such as HiFive 2 and the higher-performance, Linux-capable HiFive Unleashed with expansion board.

Taking a page from the software industry, which is built on software stacks, SiFive is enabling the creation of a hardware stack in which customization is the focus and the lower levels of the stack are mostly automated, reducing cost and improving schedules while maintaining a consistent design flow.

SiFive is also enabling a DesignShare ecosystem in which third-party IP providers allow early integration and verification of their content in a safe cloud-based environment. SiFive manages the NDAs and contracts and collects NRE and royalties at the appropriate time, simplifying the customer-vendor interface and process.

The figure below shows a sample of the growing list of participating IP providers. SiFive had the world’s first cloud tape-out with Microsoft (Freedom U540) and the world’s first RISC-V SSD controller (FADU).

Simon Davidmann, Imperas CEO, then followed, addressing how to get the most from RISC-V with application-targeted custom instructions. RISC-V adopters may be developing internal cores, acting as IP providers, creating open-source IP, building a business on enabling the open-source ecosystem, or taking advantage of open-source RTL by incorporating it into their products. Adopters fall into two types: those creating new architectures, for whom ‘freedom to innovate’ is the key requirement, and those for whom ‘free’ is the key requirement in order to support research, education, FPGA platforms and open-source communities.

The innovators want to add their own custom extensions and need quality simulation models to debug and analyze the custom instructions and to do RTL design verification. Imperas provides high-performance CPU models, modeling technology with instruction-accurate simulators for verification, and tools and debuggers for embedded software development, debug and test. Imperas Fixed Platform Kits (FPK) allow models to be delivered to customers and partners for pre-sales evaluation and development.

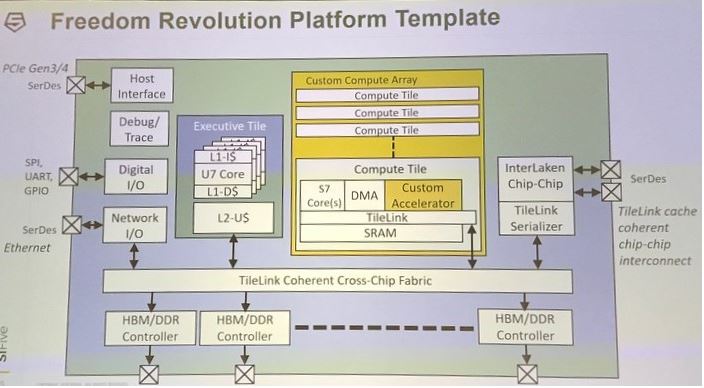

Megan Wachs, SiFive VP of Engineering, discussed the HiFive Freedom RISC-V Development Platforms. SiFive’s two chip platforms, Freedom Everywhere (TSMC 180nm) and Freedom Unleashed (TSMC 28nm), allow customers to customize their SoC by combining pre-integrated configurable SoC architectures, processors, interconnect, off-chip interfaces and on-chip IP from a catalog of SiFive and DesignShare partner IP providers with the customer’s own IP. The HiFive1 Arduino-compatible RISC-V development board is sold out, but a refresh is coming soon. The HiFive Unleashed, the first multi-core RISC-V Linux development board, can be ordered on crowdsupply.com for $999.

The Freedom SDK, along with SiFive’s platforms, allows the number and type of cores, the included peripherals and the memory map to be configured, with per-peripheral configurability. SiFive’s open-source repository allows users to build their own Linux-capable FPGA image.

Ravi Thummarukudy, Mobiveil CEO, highlighted RISC-V based platforms for SSD and IoT applications. Mobiveil provides IP and services for high-speed interfaces, switches, bridges and memory controllers. To address data center energy and I/O bottlenecks, Mobiveil offers a computational storage solution with filtering, scanning, data compression and many other features; it reduces I/O contention and power (up to 70% power savings), resulting in improved network performance and lower cost and latency. Mobiveil presented its configurable NVMe SSD controller platform and reference design and its IoT SoC development platform.

Shafy Eltoukhy, SiFive SVP/GM of the Custom SoC BU, outlined the SSD custom SoC solution, with CPU features for storage applications: 64-bit real-time addressability for Big Data and real-time applications for Fast Data. AI use cases for SSDs, such as failure prediction, storage tuning and adaptive caching, are some examples of what RISC-V can help address.

The Physical Design Platform features a robust silicon engineering methodology with comprehensive checklists throughout the analysis, exploration, implementation and tape-out phases. Custom SoC metrics include 140+ million units shipped with an average of 25 DPPM, and 300+ tape-outs spanning 100 different end applications for 150 unique customers, from tier-1 system companies to startups.

Darrin Jones, Sr Director of Technology Development for Azure Cloud Services Infrastructure, put the semiconductor market in perspective: it exceeded 1 trillion devices in 2018, with about 6% CAGR since 2000. Azure boasts 2 million miles of intra-data-center fiber, 55 Azure regions and 100+ data centers with millions of servers deployed. Azure silicon development supports design execution and verification, place and route, and physical verification for advanced nodes such as 7nm, with faster product iterations. SiFive, the Cadence Design Portal and EDA tools, and TSMC VDE are all deployed on top of the Microsoft Azure cloud infrastructure. Performance, price and agility are combining to offer compelling solutions that are seeing adoption.

End Part I