The second chapter of our book “Fabless: The Transformation of the Semiconductor Industry” describes the ASIC business and how important it is. That was more than 10 years ago, and the ASIC business is still at the forefront of the semiconductor industry and a key enabler of the AI revolution we are experiencing today.

First, let’s talk about the ASIC business. Then let’s look at what Lip-Bu Tan said in his prepared statement on the most recent investor call about the new Intel Central Engineering Group and its ASIC and design services business.

Application-Specific Integrated Circuits (ASICs) are custom-designed semiconductors engineered for specific applications, delivering better performance, power efficiency, and cost-effectiveness for those applications than general-purpose chips like CPUs or GPUs. ASICs are pivotal in high-volume markets such as artificial intelligence, high-performance computing, telecommunications, automotive, and consumer electronics. Unlike programmable devices, ASICs are optimized for specific tasks, enabling innovations like AI accelerators, 5G/6G infrastructure, and edge computing. The ASIC ecosystem thrives on collaboration between fabless design houses, foundries, and end-users, driving technological advancements.

As of 2025, the global ASIC market is valued at more than $20 billion and is expected to double in the next five years. Key growth drivers include surging demand for AI, edge computing, and advanced connectivity (5G/6G). Systems companies are now doing their own ASICs, often using an ASIC company to jump-start internal chip design efforts. Apple’s first iPhone SoC used an ASIC service, as did Google’s first TPU.
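To put "double in the next five years" in annual terms, here is a rough back-of-the-envelope sketch. It assumes a constant year-over-year growth rate and uses $20 billion as the starting point (the text says "more than" $20 billion, so treat this as a floor). The function names are made up for illustration and do not come from any market report.

```python
def implied_cagr(growth_multiple: float, years: float) -> float:
    """Constant annual growth rate that turns 1.0 into growth_multiple over `years`."""
    return growth_multiple ** (1.0 / years) - 1.0


def project(start_value: float, rate: float, years: int) -> list[float]:
    """Year-by-year values (year 0 through `years`) under constant compounding."""
    return [start_value * (1.0 + rate) ** y for y in range(years + 1)]


# Assumption: a $20B starting market that doubles in exactly 5 years.
rate = implied_cagr(2.0, 5)
path = project(20.0, rate, 5)

print(f"Implied annual growth: {rate:.1%}")  # roughly 14.9% per year
for year, value in enumerate(path):
    print(f"Year {year}: ${value:.1f}B")
```

In other words, doubling in five years works out to roughly 15% compound annual growth, assuming the growth is spread evenly across the period.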

There are two basic types of ASIC companies: semiconductor companies like Broadcom and Marvell that also do ASICs, and ASIC-specific services companies like Alchip and AION Silicon that only do ASICs and thus do not compete with their customers. Here are brief descriptions of the ASIC companies I know personally. Alchip and AION Silicon are current SemiWiki partners.

Broadcom Inc.

Broadcom is a semiconductor giant with a robust ASIC portfolio. It designs custom silicon for networking, storage, broadband, and AI, serving hyperscale data centers with tailored chipsets, such as accelerators for clients like Google. Broadcom’s ASIC business blends merchant silicon with bespoke designs, contributing significantly to its revenue. In Q2 2025, Broadcom reported record revenues, with custom ASIC segments achieving gross margins exceeding 50%. Its strength lies in AI leadership and diversified offerings.

Marvell Technology Inc.

Marvell specializes in data infrastructure semiconductors, with a growing focus on custom ASICs for AI, 5G, and cloud computing. Transitioning from storage controllers, Marvell now prioritizes high-speed, low-power SoCs and interconnects. Its Q2 FY2026 revenue surged 58%, driven by ASIC demand from the AI and networking sectors. Partnering with leading foundries, Marvell is well-positioned for AI-driven growth, emphasizing scalable, high-performance silicon solutions.

Alchip Technologies

Alchip, established in 2003 in Taipei, Taiwan, is a fabless ASIC leader specializing in HPC and AI. Renowned for rapid prototyping and first-silicon success, Alchip collaborates with tier-one cloud providers and TSMC, leveraging advanced nodes for machine learning accelerators and automotive chips. As a TSMC Value Chain Alliance member, Alchip offers end-to-end services, from SoC design to manufacturing, ensuring high-performance, low-latency solutions. Its 2025 focus on sustainable silicon design strengthens its competitive edge in AI and networking markets.

AION Silicon

AION Silicon, a lesser-known but emerging player, focuses on innovative ASIC solutions for AI and IoT applications. Based in the U.S., AION emphasizes customizable, high-efficiency chips for edge computing and smart devices. While smaller than Broadcom or Marvell, AION’s agile approach and partnerships with foundries position it for growth in niche markets. Its 2025 roadmap highlights low-power AI accelerators, targeting cost-sensitive applications.

Arm, Qualcomm, MediaTek and other chip companies have also joined the custom silicon business but that is another story. Now let’s talk about what Lip-Bu announced:

“By connecting our architectures through Nvidia NVLink, we combine Intel CPU and x86 leadership with Nvidia unmatched AI and accelerated computing strengths, unlocking innovative solutions that will deliver better customer experience and provide a beachhead for Intel in the leading AI platform of tomorrow. We need to continue to build on this momentum and capitalize on our position by improving our engineering and design execution. This includes hiring, promoting top architecture talents, as well as reimagining our core roadmap to ensure it is the best-in-class features. To accelerate this effort, we recently created the Central Engineering Group, which will unify our horizontal engineering functions to drive leverage across foundational IP development, test chip design, EDA tools, and design platforms. This new structure will eliminate duplications, improve time to decision-making, and enhance coherence across all product development.”

“In addition, and just as important, the group will spearhead the build-out of a new ASIC and design service business to deliver purpose-built silicon for a broad range of external customers. This will not only extend the reach of our core x86 IP, but also leverage our design strengths to deliver an array of solutions from general purpose to fixed-function computing.”

Bottom line: Brilliant move by Lip-Bu Tan! The ASIC business is critical, but it is also VERY competitive. Rather than trying to compete with TSMC’s Value Chain Alliance or acquiring a large ASIC group (which is what Broadcom and Marvell did), Intel is doing custom ASICs centered around Intel/Nvidia IP using Intel Foundry manufacturing and packaging, which is absolutely the right thing to do.

Fill those fabs!

Also Read:

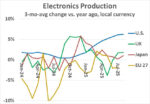

AI Revives Chipmaking as Tech’s Core Engine

Advancing Semiconductor Design: Intel’s Foveros 2.5D Packaging Technology