I’ve written before about the growing utility of FPGA-based solutions in datacenters, particularly around configurable networking applications. There I just touched on the general idea; Achronix have developed a white-paper to expand on the need in more detail and to explain how a range of possible solutions based on their … Read More

Tag: bernard murphy

Here Come Holograms

A quick digression into a fun topic. A common limitation in present-day electronics is the size and 2D nature of the display. If it must be mobile this is further constrained, and becomes especially severe in wearable electronics, such as watches. Workarounds include projection and bringing the display closer to the eye, as in … Read More

Machine Learning Optimizes FPGA Timing

Machine learning (ML) is the hot new technology of our time, so EDA development teams are eagerly searching for new ways to optimize various facets of design, using ML to distill wisdom from the mountains of data generated in previous designs. Pre-ML, we had little interest in historical data and would mostly look only at localized… Read More

Cloud-Based Emulation

At the risk of attracting contempt from terminology purists, I think most of us would agree that emulation is a great way to prototype a hardware design before you commit to building, especially when you need to test system software together with that prototype. But setting up your own emulation resource isn’t for everyone. The … Read More

Synopsys Opens up on Emulation

Synopsys hosted a lunch panel on Tuesday of DAC this year, in which verification leaders from Intel, Qualcomm, Wave Computing, NXP and AMD talked about how they are using Synopsys verification technologies. Panelists covered multiple domains but the big takeaway for me was their full-throated endorsement of the ZeBu emulation… Read More

A Functional Safety Primer for FPGA – and the Rest of Us

Once in a while I come across a vendor-developed webinar which is so generally useful it deserves to be shared beyond the confines of sponsored sites. I don’t consider this spamming – if you choose you can ignore the vendor-specific part of the webinar and still derive significant value from the rest. In this instance, the topic is… Read More

Virtualizing ICE

The defining characteristic of In-Circuit-Emulation (ICE) has been that the emulator is connected to real circuitry – a storage device perhaps, and PCIe or Ethernet interfaces. The advantage is that you can test your emulated model against real traffic and responses, rather than an interface model which may not fully capture… Read More

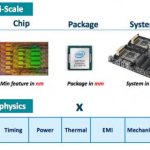

Webinar: Ansys on Multi-Physics PDN Optimization for 16/7nm

On the off-chance you missed my previous pieces on this topic, at these dimensions conventional margin-based analysis becomes unreasonably pessimistic and it is necessary to analyze multiple dimensions together. People who build aircraft engines, turbines and other complex systems have known this for quite a long time. You… Read More

IP Diligence

I hinted earlier that Consensia would introduce at DAC their comprehensive approach to IP management across the enterprise, which they call DelphIP (oracle of Delphi, applied to IP). I talked with Dave Noble, VP BizDev at Consensia, to understand where this fits in the design lifecycle.

IP management means a lot of different things.… Read More

Why Ansys bought CLKDA

Skipping over debates about what exactly changed hands in this transaction, what interests me is the technical motivation since I’m familiar with solutions at both companies. Of course, I can construct my own high-level rationalization, but I wanted to hear from the insiders, so I pestered Vic Kulkarni (VP and Chief Strategist)… Read More