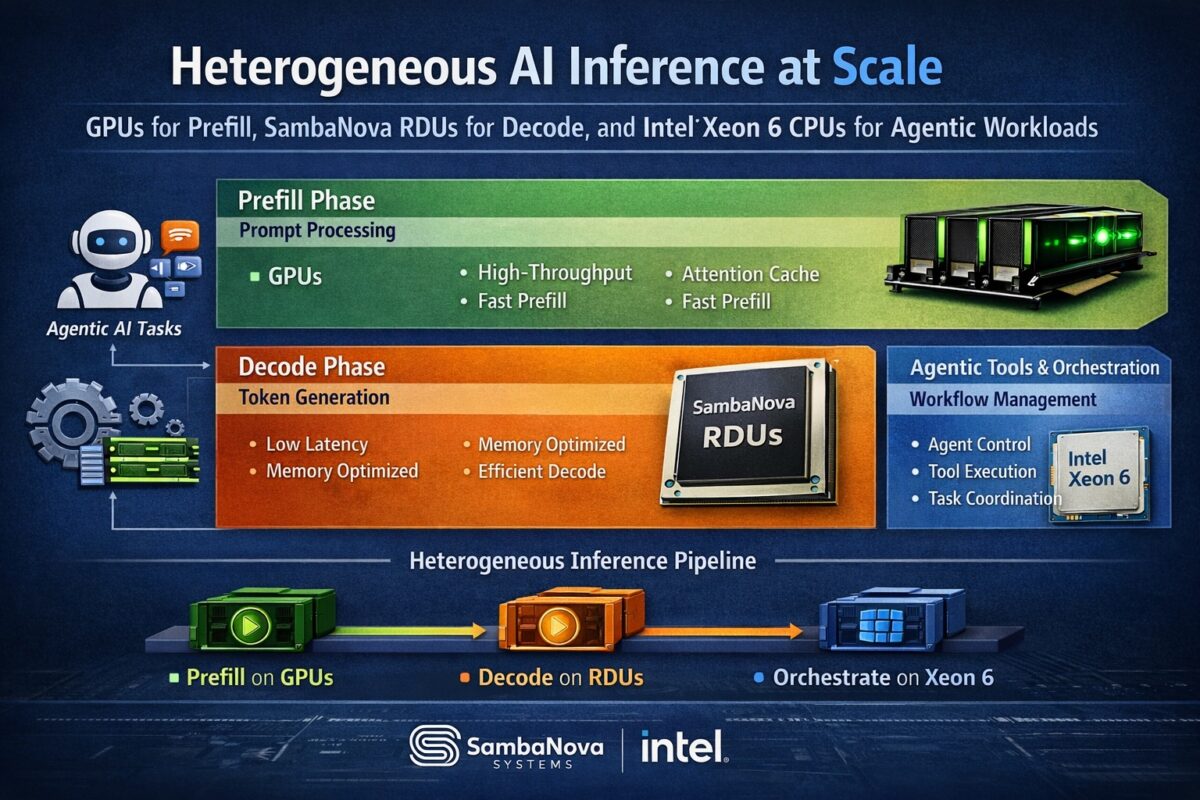

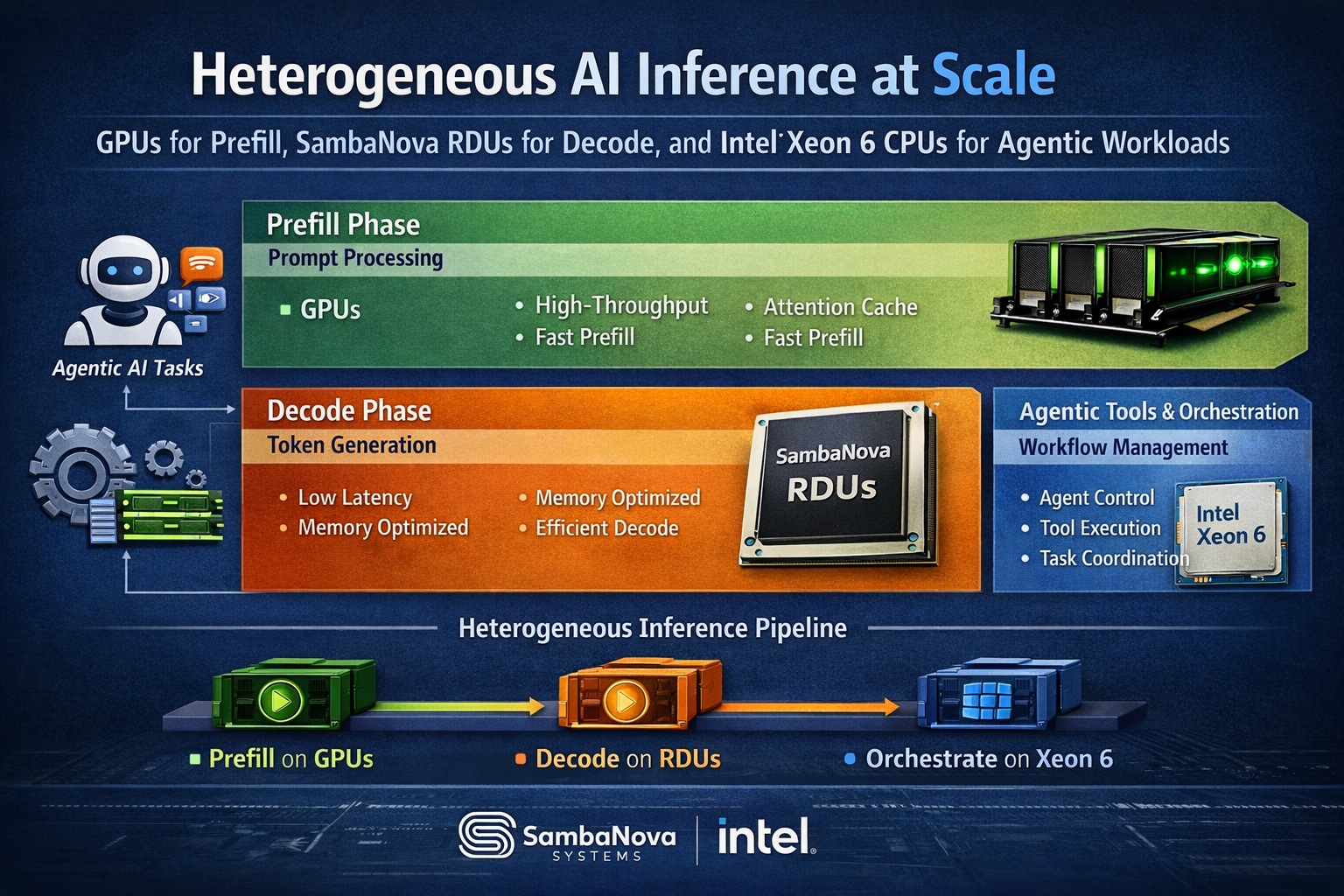

SambaNova Systems and Intel have introduced a blueprint for heterogeneous inference that reflects a significant shift in how modern large language model (LLM) workloads are deployed. Instead of relying on a single accelerator type, the proposed architecture assigns different phases of inference to specialized hardware: GPUs for prefill, SambaNova Reconfigurable Dataflow Units (RDUs) for decode, and Intel® Xeon® 6 CPUs for agentic tools and orchestration. This design addresses the growing complexity of agentic AI systems, where reasoning loops, tool calls, and iterative execution create heterogeneous compute demands that cannot be efficiently served by homogeneous accelerator clusters.

At the core of the proposal is the observation that inference is not a monolithic workload. It consists of distinct computational phases with different performance bottlenecks. The prefill phase processes the user prompt, computes attention matrices, and builds key–value caches. This stage is highly parallel and compute-intensive, making GPUs the most efficient hardware choice. GPUs excel at dense matrix operations and high-throughput tensor math, allowing rapid ingestion of large prompts and minimizing time-to-first-token latency. By isolating prefill onto GPU resources, the architecture ensures high utilization of GPU compute capabilities without wasting cycles on sequential token generation.

Following prefill, the workload transitions to the decode phase, where tokens are generated one at a time. Decode is fundamentally different from prefill: it is memory-bandwidth bound and heavily dependent on efficient access to attention caches. GPUs, while powerful, often underperform in decode scenarios because their architecture is optimized for large batched operations rather than sequential token generation. SambaNova’s RDUs are designed specifically for dataflow-oriented execution, enabling optimized memory access patterns and efficient handling of transformer inference during decode. This specialization improves token throughput and reduces latency, especially for long-context or multi-step reasoning workloads.

The third component of the architecture is the use of Intel® Xeon® 6 CPUs for agentic tools and orchestration. Agentic AI systems increasingly involve external actions such as database queries, API calls, code execution, and workflow management. These tasks are not well suited for accelerators but benefit from general-purpose CPU capabilities, large memory footprints, and mature software ecosystems. Xeon 6 processors act as the control plane, coordinating execution between GPUs and RDUs while also handling tool invocation, validation, and decision logic. This separation allows accelerators to remain focused on model inference while CPUs manage procedural logic and integration with enterprise systems.

This heterogeneous architecture delivers several system-level benefits. First, it improves hardware utilization by ensuring each processor operates within its optimal performance envelope. GPUs handle parallel compute-heavy tasks, RDUs manage memory-bound token generation, and CPUs execute control and orchestration logic. Second, the design enhances scalability for agentic workloads. As agents perform multiple reasoning steps, decode latency accumulates; specialized RDUs mitigate this bottleneck. Third, the architecture enables modular infrastructure scaling, allowing organizations to independently scale GPU, RDU, and CPU pools depending on workload demands.

Another key advantage is improved cost efficiency. GPU-only deployments often suffer from underutilization during decode or orchestration phases. By offloading those tasks to specialized hardware, the system reduces the need for excessive GPU capacity. This approach aligns with emerging data center trends that emphasize disaggregated compute and composable infrastructure. Additionally, using x86-based CPUs for orchestration ensures compatibility with existing enterprise software stacks, reducing integration complexity.

The blueprint also highlights the evolution of AI workloads toward agentic reasoning systems. Traditional chat-style inference involved single-pass generation, but modern agents iteratively plan, execute, and refine outputs. These workflows create alternating compute patterns: dense prompt processing, sequential decoding, and CPU-driven tool execution. A heterogeneous architecture maps naturally to this pattern, reducing performance bottlenecks and improving responsiveness.

In summary, the SambaNova–Intel blueprint demonstrates a practical pathway toward next-generation AI infrastructure. By combining GPUs for prefill, RDUs for decode, and Xeon 6 CPUs for agentic tools, the architecture reflects a shift from homogeneous accelerator clusters to specialized compute fabrics. This design improves performance, utilization, and scalability for agentic AI workloads, and it signals how future AI data centers may evolve to support increasingly complex reasoning systems.

Building the Blueprint for Premium Inference

Also Read:

Intel, Musk, and the Tweet That Launched a 1000 Ships on a Becalmed Sea

Agentic AI Demands More Than GPUs

Silicon Insurance: Why eFPGA is Cheaper Than a Respin — and Why It Matters in the Intel 18A Era

Share this post via:

Comments

One Reply to “Disaggregating LLM Inference: Inside the SambaNova Intel Heterogeneous Compute Blueprint”

You must register or log in to view/post comments.