Harry Foster opened and wrapped up a tutorial at DVCon 2022 on “The Best Verification Strategy You’ve Never Heard Of”. He started with a common refrain on verification: we face a crisis because the complexity of the systems we are able to design keeps growing, while the cost of verifying those systems grows double-exponentially. He sees this as an unintended consequence of separating verification from design around the 1990s.

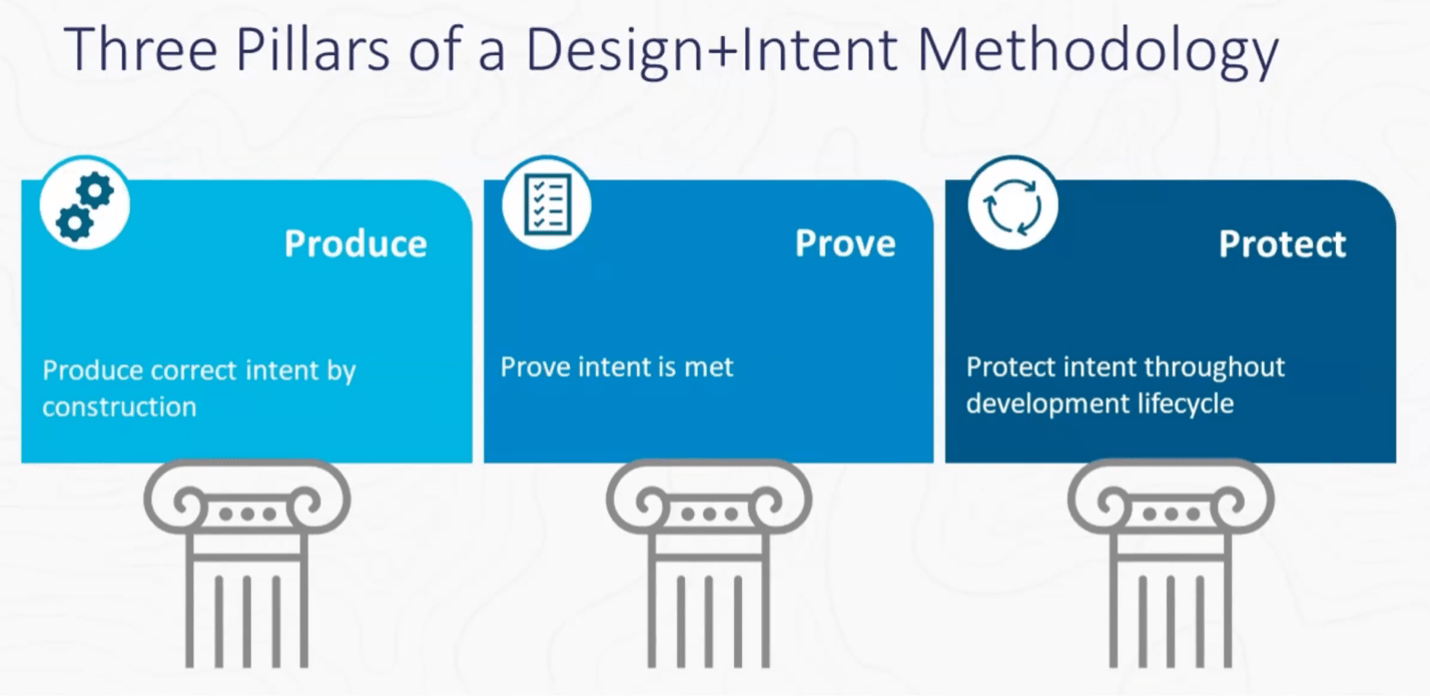

This separation seems logical, but it leads to a problem Deming called out over half a century ago. Inevitably we gravitate towards trying to verify quality into the product rather than designing in and controlling quality from the outset, which, as Deming pointed out, does not work well in any engineering context and is certainly not scalable. Harry suggests a different strategy based on design strongly coupled with intent-focused insight all the way through the design lifecycle. He maps this onto three pillars: producing correct intent by construction, proving that the intent is met, and protecting the intent throughout the design lifecycle. Harry also has a white paper on this topic, which is a good read.

Produce

The main point here is that the density of bugs per N lines of code is more or less independent of the application, whether video games, mobile apps, or hardware design. Aside from using pre-validated IP, the best knob to control the total number of bugs in a design is to reduce the number of lines of code by coding in a higher-level language (HLL).
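The arithmetic behind that argument is simple enough to sketch. The figures below are purely illustrative assumptions (neither the defect density nor the line counts come from the tutorial); the only point is that, at a fixed density, the expected bug count scales with code size.

```python
def expected_bugs(lines_of_code: int, bugs_per_kloc: float) -> float:
    """Expected bug count if defect density is constant per 1,000 lines."""
    return lines_of_code / 1000 * bugs_per_kloc


# Hypothetical figures, chosen only for illustration.
DENSITY = 20.0       # bugs per 1,000 lines, assumed equal for both styles
RTL_LINES = 50_000   # hand-written RTL
HLL_LINES = 10_000   # same function described in a higher-level language

rtl_bugs = expected_bugs(RTL_LINES, DENSITY)
hll_bugs = expected_bugs(HLL_LINES, DENSITY)
print(f"RTL: {rtl_bugs:.0f} expected bugs, HLL: {hll_bugs:.0f} expected bugs")
```

Under these assumed numbers, a fivefold reduction in code size gives a fivefold reduction in expected bugs, before any verification effort is spent.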

That naturally leads most of us to think of SystemC or C++. This section of the tutorial illustrates the idea with an example HLL design for a digital pre-distortion block, which compensates for non-linear behavior in the power amplifier that follows it. The presenters also cite a Google paper at Hot Chips on using HLL to build a video codec, for which Google asserts they found 99% of functional bugs before running any RTL simulations. A design in a high-level language will create fewer bugs, and those bugs will emerge faster thanks to faster simulations.
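To give a feel for how compact a behavioral model of pre-distortion can be, here is a minimal sketch in Python. It is not the tutorial's design: the cubic amplifier model, the coefficient `a`, and the first-order inverse used as the pre-distorter are all assumptions picked to keep the example short.

```python
def power_amp(x: float, a: float = 0.1) -> float:
    """Toy memoryless amplifier model with cubic gain compression (assumed)."""
    return x - a * x**3


def predistort(x: float, a: float = 0.1) -> float:
    """First-order inverse of the toy amplifier: pre-expand by the cubic term."""
    return x + a * x**3


def worst_error(samples, use_dpd: bool) -> float:
    """Largest deviation of the amplifier output from the ideal linear output."""
    return max(
        abs(power_amp(predistort(x) if use_dpd else x) - x) for x in samples
    )


# Sweep input amplitudes from -0.8 to 0.8 in steps of 0.1.
samples = [i / 10 for i in range(-8, 9)]
err_plain = worst_error(samples, use_dpd=False)
err_dpd = worst_error(samples, use_dpd=True)
print(f"worst error without DPD: {err_plain:.4f}, with DPD: {err_dpd:.4f}")
```

A model at this level runs in microseconds, which is the property that lets functional bugs surface long before any RTL simulation.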

Other signal processing functions derive similar value – communications, video and audio pipelines are common examples. The growing importance of all these functions highlights the benefits of this approach to SoC design in general. Of course, not all functions build on signal processing, but the principle of HLL still stands in my view, though in different domain-specific languages. For example, you might choose to implement a GPU in Chisel.

Prove

I’m a big believer in the message from this part of the tutorial: before you run a minute of simulation, you should be running as much static analysis as you possibly can. This section of the tutorial laid out a pretty detailed list, some familiar, some less so. It starts with linting to find semantic, structural and stylistic problems. Then on to formally supported linting to detect potential deadlocks in state machines, value overflow in assignments and similar issues. Then initializations and X-checking. Next, domain crossing checks for clocks and resets. Then design connectivity checks (did any top-level connections break in the last drop?). And register checks.
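Two of the checks in that list, state machine deadlock and assignment overflow, can be sketched without any simulation. The Python below is a toy structural version under stated assumptions: the FSM, its state names, and the bit widths are hypothetical, and a real formal lint tool reasons about transition conditions and value ranges rather than just graph shape and worst-case widths.

```python
from collections import deque


def reachable_states(transitions: dict, reset_state: str) -> set:
    """Breadth-first search over the state graph from the reset state."""
    seen = {reset_state}
    queue = deque([reset_state])
    while queue:
        state = queue.popleft()
        for nxt in transitions.get(state, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def deadlock_states(transitions: dict, reset_state: str) -> set:
    """Reachable states with no way out other than a self-loop."""
    return {
        s for s in reachable_states(transitions, reset_state)
        if not (set(transitions.get(s, ())) - {s})
    }


def sum_overflows(lhs_bits: int, a_bits: int, b_bits: int) -> bool:
    """True if an unsigned a + b can exceed what lhs_bits can hold."""
    max_sum = (2**a_bits - 1) + (2**b_bits - 1)
    return max_sum > 2**lhs_bits - 1


# Hypothetical FSM: WAIT only loops on itself, so it can never be left.
fsm = {
    "IDLE": ["REQ"],
    "REQ": ["GRANT", "WAIT"],
    "GRANT": ["IDLE"],
    "WAIT": ["WAIT"],
}
print(deadlock_states(fsm, "IDLE"))                    # {'WAIT'}
print(sum_overflows(lhs_bits=8, a_bits=8, b_bits=8))   # True
print(sum_overflows(lhs_bits=9, a_bits=8, b_bits=8))   # False
```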

Then it gets more interesting, at least for me. Operational assertions, coded against the OneSpin TiDAL library, check functionality against specifications using formal methods. The presenters apply this approach to Trojan detection, in which they can prove not only that a core does what it is supposed to do but also that it does not do anything it is not supposed to do. They cite a paper (not easy to find) presented at GOMAC in which they found a Trojan kill switch.
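The idea of proving that a block does nothing beyond its specification can be illustrated on a toy scale by checking every possible input. The sketch below is a brute-force stand-in, not the OneSpin flow or the TiDAL library: the 4-bit adder, the trigger values, and all function names are hypothetical, and formal tools reach the same kind of conclusion symbolically rather than by enumeration.

```python
from itertools import product

WIDTH = 4
MASK = (1 << WIDTH) - 1


def spec_add(a: int, b: int) -> int:
    """Specified behaviour: WIDTH-bit wrapping addition."""
    return (a + b) & MASK


def impl_clean(a: int, b: int) -> int:
    """Hypothetical implementation that matches the specification."""
    return (a + b) & MASK


def impl_trojan(a: int, b: int) -> int:
    """Hypothetical implementation with a hidden trigger on one input pair."""
    if (a, b) == (0xA, 0x5):
        return 0  # kill-switch style misbehaviour
    return (a + b) & MASK


def find_counterexample(impl):
    """Check every input pair; return the first mismatch, or None if none exists."""
    for a, b in product(range(1 << WIDTH), repeat=2):
        if impl(a, b) != spec_add(a, b):
            return (a, b)
    return None


print(find_counterexample(impl_clean))   # None
print(find_counterexample(impl_trojan))  # (10, 5)
```

Random simulation would hit the single trigger pair only by luck; covering the whole input space, whether by enumeration here or by proof in a formal tool, is what exposes it.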

Static checks are also important in implementation, where logic that is correct at RTL can become incorrect in a gate-level mapping, and in FPGA implementation, where equivalence checking between implementation and RTL can be quite different from ASIC flows. Overall, what is important is that all these checks are static and amenable to relatively quick runtimes. This is critical in continuous integration disciplines, where most potential failures must be flushed out quickly before longer simulation regressions start.
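In a continuous integration flow, that ordering amounts to a gate: run the quick static checks first and start the long regression only if they all pass. The sketch below shows the shape of such a gate in Python. The check names and the lambdas standing in for tool runs are placeholders, not any real tool's interface.

```python
from typing import Callable, List, Tuple

Check = Tuple[str, Callable[[], bool]]


def run_gated(static_checks: List[Check], regression: Callable[[], bool]) -> List[str]:
    """Run static checks in order; start the regression only if all pass.

    Returns a log of the stages that actually ran.
    """
    log = []
    for name, check in static_checks:
        passed = check()
        log.append(f"{name}: {'pass' if passed else 'FAIL'}")
        if not passed:
            log.append("regression: skipped")
            return log
    log.append(f"regression: {'pass' if regression() else 'FAIL'}")
    return log


# Placeholder checks; a real flow would invoke the lint, CDC, and
# connectivity tools here and inspect their results.
checks = [
    ("lint", lambda: True),
    ("cdc", lambda: False),        # simulated clock-domain-crossing failure
    ("connectivity", lambda: True),
]
for line in run_gated(checks, regression=lambda: True):
    print(line)
```

Stopping at the first failure keeps feedback fast; a team that prefers a full report per commit could instead run every static check and gate only the regression.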

Protect

I confess the presentation on this topic confused me. Perfectly reasonable product pitch on the features and benefits of the Siemens EDA hardware accelerator line. But what did that have to do with Protect? I decided maybe it was so obvious to the presenter that it didn’t need explanation. I looked back at the earlier paper which helped a little with this statement:

Finally, the Protect pillar consists of analysis tools that ensure the intent of the design is retained throughout the entire development life cycle; for example, identifying new metastability issues potentially introduced during the synthesis and implementation process.

Maybe through continued regression, the strategy can continue to ensure that design intent stays on track. Sounds reasonable, but I would welcome some more explanation on the connection between Protect and these activities.

You can watch the recorded tutorial HERE.

