Synopsys has posted on the SolvNet site a fascinating talk given by Dr. Theo Drane of Intel Graphics. The topic is datapath equivalency checking. Might sound like just another Synopsys VC Formal DPV endorsement but you should watch it anyway. This is a mind-expanding discussion on the uses of and considerations in formal which will take you beyond the routine user-guide kind of pitch into more fascinating territory.

Intellectual understanding versus sample testing

Test-driven simulation in all its forms is excellent and often irreplaceable in verifying the correctness of a design specification or implementation. It’s also easy to get started. Just write a test program and start simulating. But the flip side of that simplicity is that we don’t need to fully understand what we are testing to get started. We convince ourselves that we have read the spec carefully and understand all the corner cases, but it doesn’t take much compounded complexity to overwhelm our understanding.

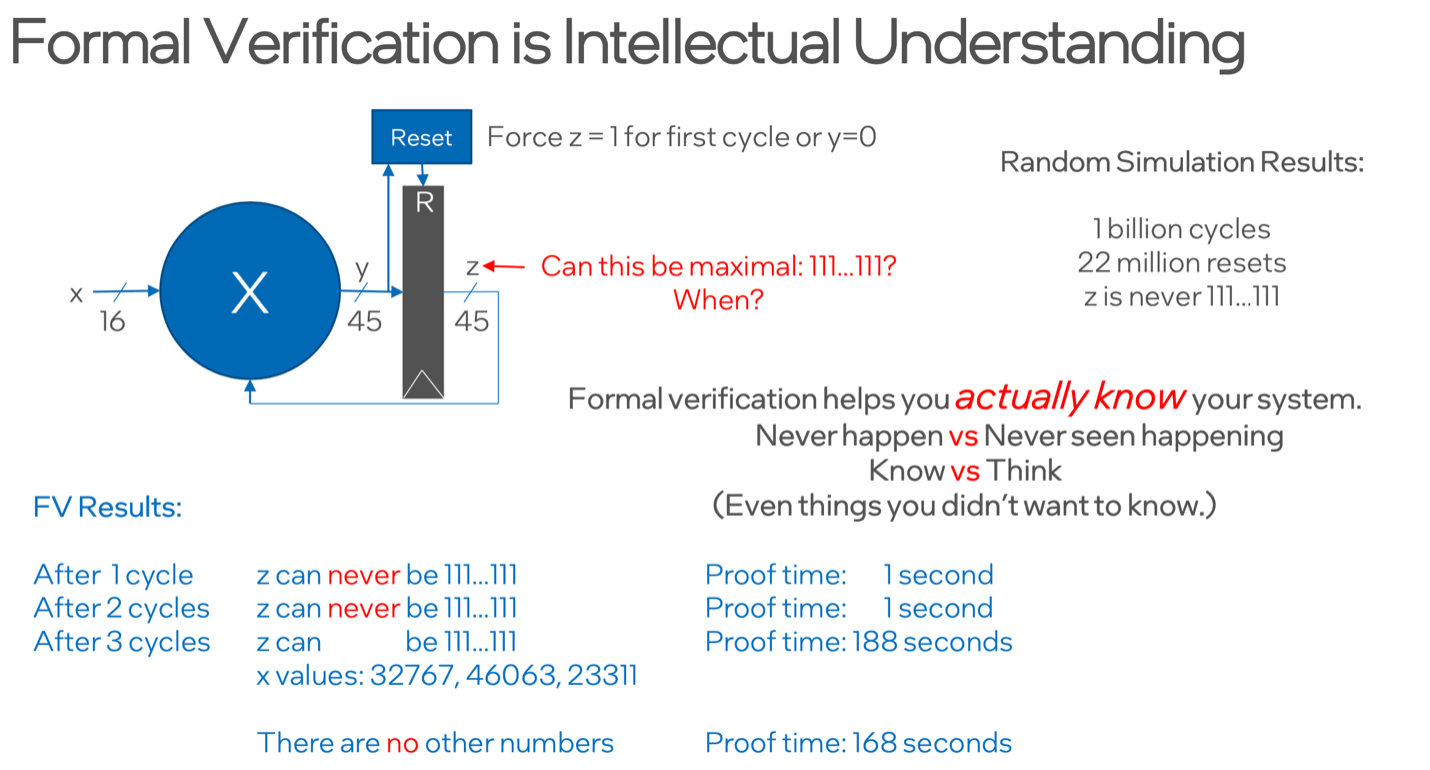

Formal encourages you to understand the functionality at a deep level (at least if you want to deliver a valuable result). In the example above, a simple question – can z ever be all 1’s – fails to demonstrate an example in a billion cycles on a simulator. Not surprising, since this is an extreme corner case. A formal test provides a specific and very non-obvious example in 188 seconds and can prove this is the only such case in slightly less time.

OK formal did what dynamic testing couldn’t do, but more importantly you learned something the simulator might never have told you. That there was only one possible case in which that condition could happen. Formal helped you better understand the design at an intellectual level, not just as probabilistic summary across a finite set of test cases.

Spec issues

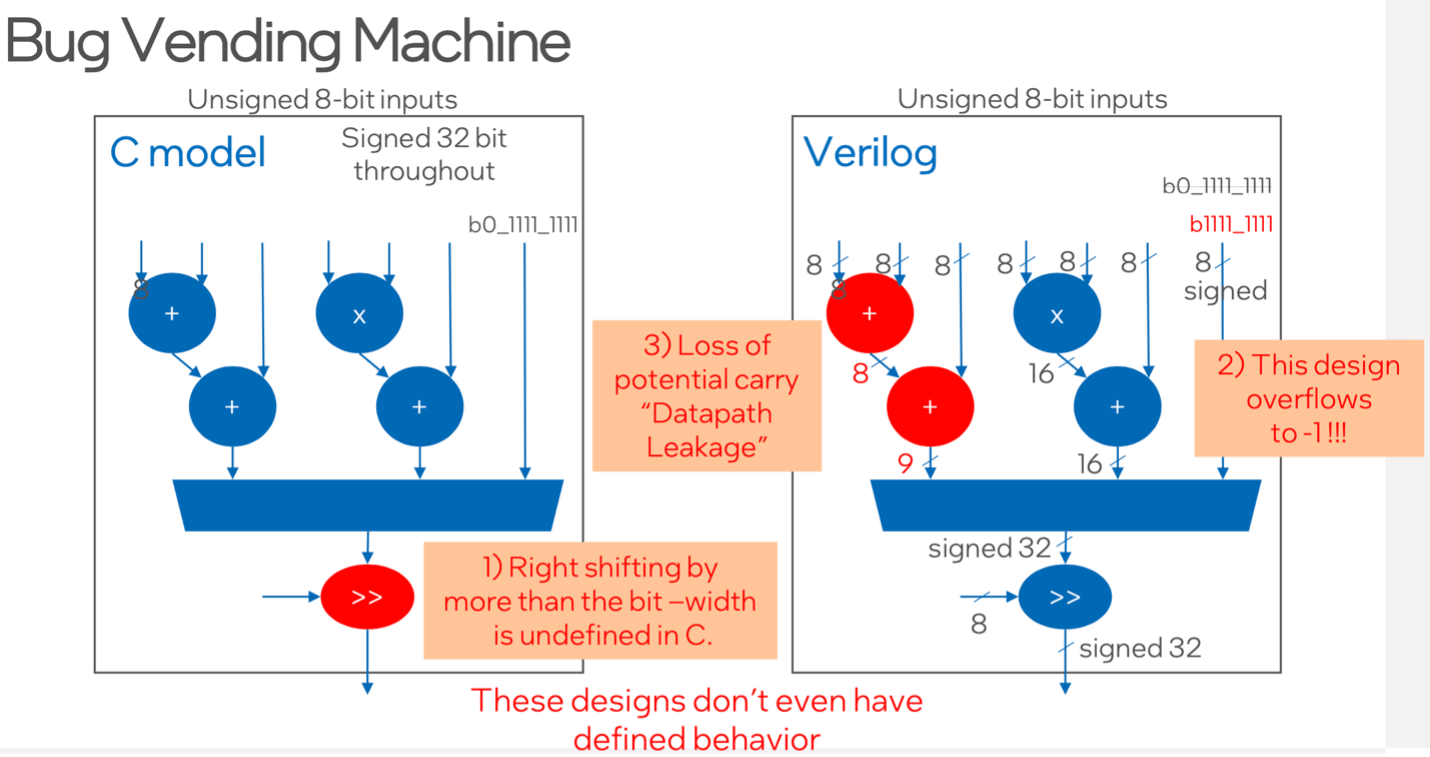

Theo’s next example is based on a bug vending machine (so called because when you press a button you get a bug). This looks like a pretty straightforward C to RTL equivalence check problem, C model on the left, RTL model on the right. One surprise for Theo in his early days in formal was that right-shift behavior in the C-model is not completely defined in the C standard, even though gcc will behave reasonably. However, DPV will complain about a mismatch in a comparison with the RTL, as it should. Undefined behavior is a dangerous thing to rely on.

Spec comparison between C and RTL comes with other hazards, especially around bit widths. Truncation or loss of a carry bit in an intermediate signal (#3 above) are good examples. Are these spec issues? Maybe a gray area between spec and implementation choices.

Beyond equivalence checking

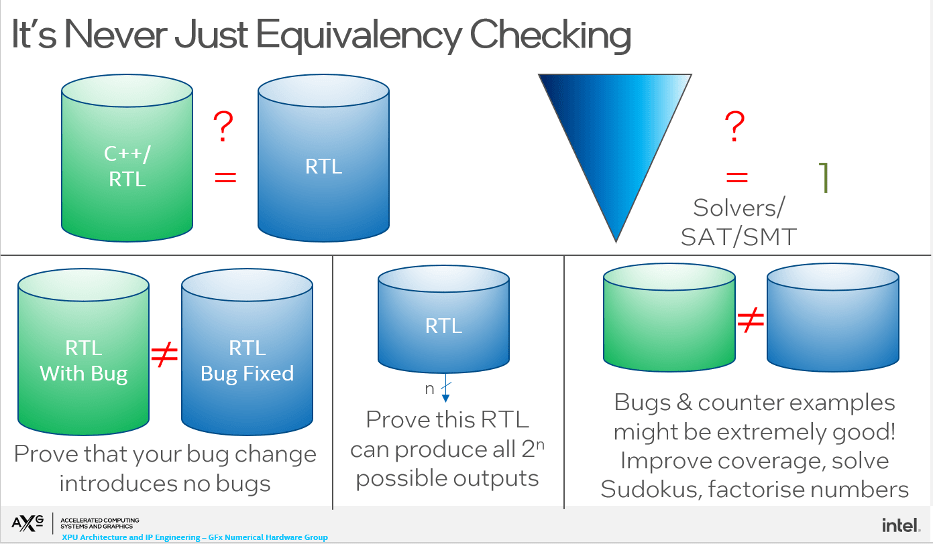

The primary purpose of DPV, it would seem, is to check equivalence between a C or RTL reference and an RTL implementation. But that need is relatively infrequent and there are other useful ways such a technology might be applied, if a little out of the box. First a classic in the implementation world – I made a change, fixed a bug – did I introduce any new bugs as a result? A bit like SEQ checking after you add clock gating. Reachability analysis in block outputs may be another useful application in some cases.

Theo gets even more creative, asking trainees to use counter examples to better understand the design, solve Sudokus or factorize integers. He acknowledges DPV make be an odd way to approach such problems but points out that his intent is to break the illusion that DPV is only for equivalence checking. Interesting idea and certainly brain-stretching to think through such challenges. (I confess I immediately started thinking about the Sudoku problem as soon he mentioned it.)

Wrap up

Theo concludes with a discussion on methodologies important in production usage, around constraints, regressions and comparisons with legacy RTL models. Also the challenges in knowing whether what you are checking actually matches the top-level natural language specification.

Very energizing talk, well worth watching here on SolvNet!

Share this post via:

Comments

There are no comments yet.

You must register or log in to view/post comments.