Autonomous vehicle progress is in the daily news, and it’s exciting to watch the technology develop through the SoC design, sensors, actuators and software contributed by engineering teams around the globe. Tesla vehicles have reached Level 2 autonomy, the Audi e-tron is at Level 3, and Waymo is nearly at Level 5, with robot taxis being tested in Phoenix and Silicon Valley while a human driver stays ready to take the wheel. How are EDA, IP and systems companies meeting the challenge of delivering electronic systems that make autonomous vehicles safe under all conditions?

To help answer that question, I attended a webinar from Mentor, a Siemens business, presented by Dave Fritz, Global Technology Manager for Autonomous and ADAS. He shared three key concepts to frame the discussion on how to validate autonomous vehicles:

- Correct operation can only be determined in the context of the entire vehicle and the environment within which it is operating.

- Constrained random testing cannot guarantee coverage, and testing corner cases that are not possible with physical platforms requires correlation between virtual and physical models.

- Consolidation of functionality is inevitable and will follow the path of other industries that have gone through the same transformation.
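To illustrate why constrained random testing alone cannot guarantee coverage, here is a minimal Python sketch. The scenario parameters, their ranges and the coverage bins are all hypothetical, chosen for illustration rather than taken from the webinar or from PAVE360. Scenarios are drawn uniformly within the constraints, and a simple coverage model records which bins were actually hit.

```python
import random

# Hypothetical constraints on a pedestrian-crossing scenario (illustrative only).
SPEED_KPH = (10, 130)
PEDESTRIAN_GAP_M = (2, 100)
VISIBILITY = ["clear", "rain", "fog", "night"]

def random_scenario(rng):
    """Draw one scenario uniformly within the constraints."""
    return {
        "speed": rng.uniform(*SPEED_KPH),
        "gap": rng.uniform(*PEDESTRIAN_GAP_M),
        "visibility": rng.choice(VISIBILITY),
    }

def bin_of(scenario):
    """Map a scenario to a coverage bin: (speed band, gap band, visibility)."""
    speed_band = "high" if scenario["speed"] >= 100 else "low"
    gap_band = "close" if scenario["gap"] < 5 else "far"
    return (speed_band, gap_band, scenario["visibility"])

def coverage(n, seed=0):
    """Run n random scenarios; return the fraction of bins hit and the missed bins."""
    rng = random.Random(seed)
    all_bins = {(sp, g, v) for sp in ("high", "low")
                for g in ("close", "far") for v in VISIBILITY}
    hit = {bin_of(random_scenario(rng)) for _ in range(n)}
    return len(hit) / len(all_bins), all_bins - hit
```

Calling `coverage(100)` and inspecting the missed bins typically shows that rare combinations such as a high-speed, close-gap, foggy crossing (roughly a 0.2% chance per draw under these ranges) are never exercised. Random stimulus only tells you what was tried; the coverage model is what tells you what is still missing.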

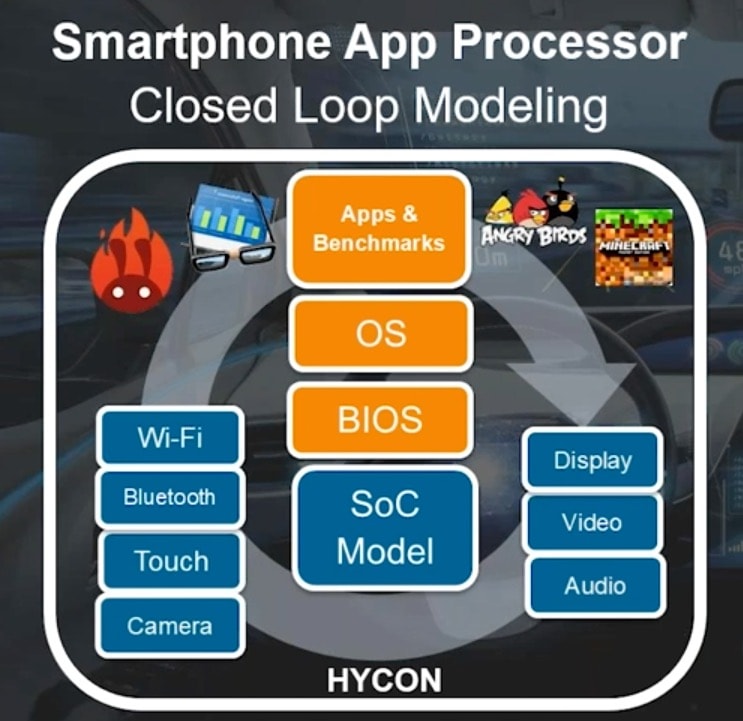

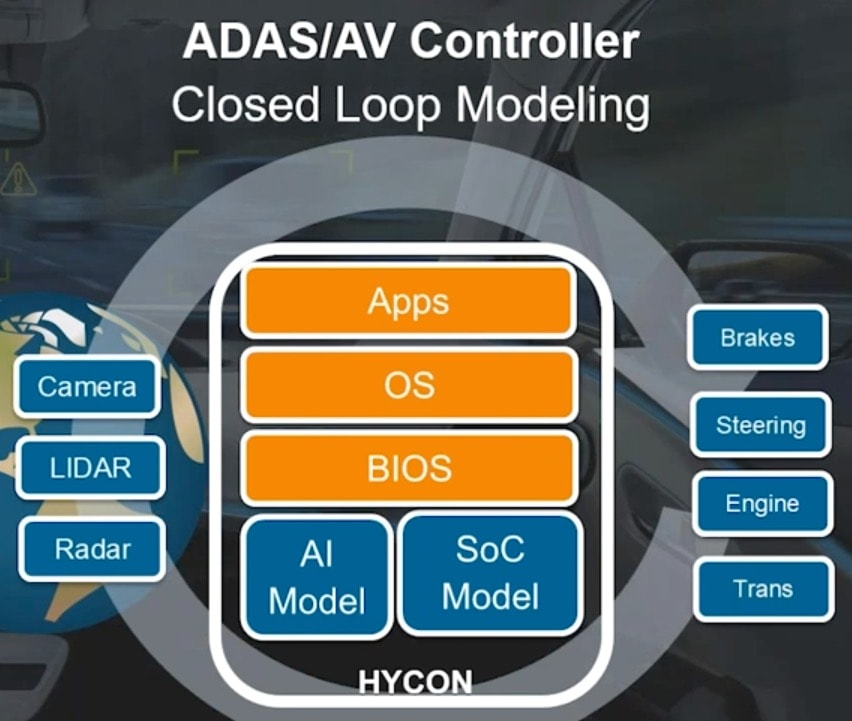

There are similarities between how a smartphone application processor and an ADAS/AV controller are designed and validated: both use closed-loop modeling, with inputs on the left, outputs on the right, and a stack in the middle.

With an AV, the input stimulus comes from real-world driving conditions, so the number of possible states is huge, far beyond anything an application processor would encounter, and a new validation methodology is needed. Rather than a hardware-driven development process, where hardware is developed first and software and validation follow in sequence, a continuous integration process that develops hardware and software in parallel is a better approach.
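The closed-loop structure described above (inputs, a stack in the middle, outputs that feed back into the world) can be sketched in a few lines of Python. This is a minimal toy, assuming a hypothetical range sensor, a simple time-to-collision braking rule standing in for the AV stack, and point-mass vehicle dynamics; none of these functions or thresholds come from PAVE360.

```python
from dataclasses import dataclass

@dataclass
class World:
    ego_pos: float       # ego vehicle position, m
    ego_speed: float     # ego vehicle speed, m/s
    obstacle_pos: float  # stationary obstacle position, m

def sense(world):
    """Input side: hypothetical range sensor reporting gap and closing speed."""
    return world.obstacle_pos - world.ego_pos, world.ego_speed

def control(gap, speed, ttc_threshold=3.0, max_decel=8.0):
    """The stack: brake hard once time-to-collision drops below a threshold."""
    if speed > 0 and gap / speed < ttc_threshold:
        return -max_decel
    return 0.0

def actuate(world, accel, dt):
    """Output side: apply the command and advance the vehicle dynamics."""
    world.ego_speed = max(0.0, world.ego_speed + accel * dt)
    world.ego_pos += world.ego_speed * dt

def run(world, dt=0.01, t_end=20.0):
    """Close the loop: sense -> control -> actuate until stopped or timed out.
    Returns True if the vehicle never reached the obstacle."""
    t = 0.0
    while t < t_end and world.ego_speed > 0:
        gap, speed = sense(world)
        if gap <= 0:
            return False  # collision
        actuate(world, control(gap, speed), dt)
        t += dt
    return world.obstacle_pos - world.ego_pos > 0
```

For example, `run(World(0.0, 20.0, 100.0))` starts braking about 60 m out and stops well short of the obstacle, while `run(World(0.0, 30.0, 10.0))` cannot stop in time. Because the controller's output changes what the sensor sees next, the stack can only be judged inside the loop, which is the point of the first concept above.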

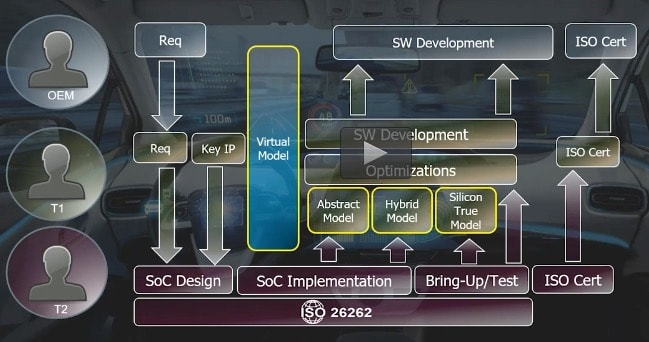

The automotive design ecosystem is complex, with multiple vendors, which in turn calls for closer collaboration to meet the stringent ISO 26262 functional safety requirements.

Shown in blue above is the Virtual Model; this early model lets AV design teams get to market sooner by simulating and validating the entire environment.

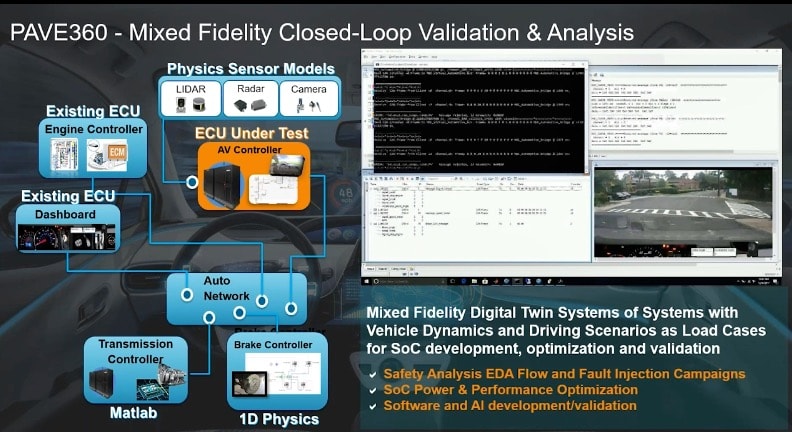

From a product viewpoint, Siemens has assembled a wide swath of technology called PAVE360 that lets engineers model automotive scenarios, view the resulting video, and use sensor models that generate raw data for lidar, radar and camera.

The beauty of this methodology is that a complete system such as an AV can be modeled and validated even before silicon is available. In Dave’s example, the ECU was modeled with a PowerPC core, the braking used physics-based control, the transmission was modeled in MATLAB, and even the dashboard was instrumented. Scenarios can be imported into PAVE360 from accident databases or created from scratch. Running scenarios answers big questions such as:

- Did we avoid the accident?

- Did the occupants survive?

Yes, you can even model what happens to the passengers in terms of airbag interactions.

It’s wonderful to hear about AV companies driving millions of actual miles to build up real-world experience, but it has been estimated that reaching sufficient safety levels would require billions of miles, a goal unlikely to be reached on public roads. With virtual scenarios, you can drive those billions of miles under any conditions.
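One way to see why the required mileage reaches into the billions is a zero-failure demonstration test. Assuming failures follow a Poisson process with rate λ per mile, the chance of seeing no failures in n miles is e^(−λn), so claiming with confidence C that the true rate is below λ needs n ≥ −ln(1 − C)/λ failure-free miles. This is a standard statistical sketch, not necessarily how the estimate quoted in the webinar was derived, and the target rate below is a hypothetical figure.

```python
import math

def miles_needed(failure_rate_per_mile, confidence=0.95):
    """Failure-free miles required to claim, at the given confidence, that the
    true failure rate is below failure_rate_per_mile (Poisson model,
    zero-failure demonstration)."""
    if not (0.0 < confidence < 1.0) or failure_rate_per_mile <= 0.0:
        raise ValueError("need 0 < confidence < 1 and a positive rate")
    return -math.log(1.0 - confidence) / failure_rate_per_mile

# Hypothetical target: at most one failure per billion miles.
target = miles_needed(1e-9)  # about 3.0e9 miles at 95% confidence
```

The required distance scales inversely with the target failure rate, so each tenfold improvement in the safety target multiplies the mileage by ten, which is where virtual miles become the only practical option.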

Summary

The webinar also addressed the fidelity of model abstraction, the use of formal methods, correlation between physical and virtual models, and the handling of corner cases. My takeaway was that using PAVE360 as a platform gives high confidence that the virtual models match the physical ones, and that corner-case issues can be caught in the lab before they show up in the field. Of course, you still want to continue on-road testing to make sure virtual testing hasn’t missed any surprises.
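Correlating virtual and physical models ultimately means comparing what the simulation produces with what the real vehicle recorded under the same scenario. A minimal sketch, assuming two time-aligned traces of the same signal and a hypothetical acceptance tolerance, uses root-mean-square error (RMSE) as the metric; real correlation flows use richer metrics, and this is not PAVE360's method.

```python
import math

def rmse(a, b):
    """Root-mean-square error between two equal-length, time-aligned traces."""
    if len(a) != len(b) or not a:
        raise ValueError("traces must be non-empty and the same length")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)) / len(a))

def correlated(virtual, physical, tolerance):
    """Hypothetical acceptance check: the virtual model is considered to match
    the physical recording if their RMSE is within the tolerance."""
    return rmse(virtual, physical) <= tolerance

# Example: simulated vs. recorded gap to a lead vehicle, in metres.
virtual = [0.0, 1.0, 2.0, 3.0]
physical = [0.0, 1.1, 1.9, 3.0]
```

Here the RMSE is about 0.07 m, so `correlated(virtual, physical, 0.1)` passes while a tighter tolerance of 0.05 m fails; choosing that tolerance per signal is where the engineering judgment lies.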

To view the archived webinar, start here.
