With the unprecedented increase in semiconductor design size and complexity, design teams have to accommodate many design constraints at once: multiple power domains for low-power design, multiple modes of operation, many clocks, and third-party IPs that come with their own SDCs. As a result, timing closure has become extremely complex and tricky. While chasing false paths may take up all your attention during timing closure, some of the real timing issues may get missed, and subtle issues such as incorrect exceptions may only show up later in silicon and force a re-spin. Timing constraints are, by nature, incomplete or inconsistent early on and evolve through the design process. A constraint that is valid at block level may become invalid at chip level, impacting timing closure and the overall design cycle. Matters get more complicated when validated constraints have to be promoted from IP to SoC, or pushed down from SoC to IP during IP integration, followed by signoff at SoC level that takes into account all modes of operation, detailed validation and post-layout repair. So it’s high time we had an automated, comprehensive timing solution and constraints signoff flow to accelerate timing closure and reduce design risk.

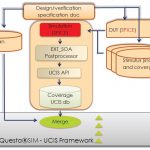

Earlier this month, I had the opportunity to attend an interesting webinar on SpyGlass Timing Constraints Signoff Flow, presented by Mark Baker, Director of Product Marketing at Atrenta. For me, it was an extra pleasure to hear Mark as I know him from my Cadence days. What I found was a truly comprehensive flow where everything about timing constraints is taken care of, from creation through validation and exception verification, with management of constraints provided all the way to signoff.

An SDC can be created from scratch or incrementally added to an existing set of design constraints. The creation process works at the RTL level by identifying constraints for primary clocks, generated clocks, and primary I/O; of course, uncertainty and latency have to be added by the user. Clock crossings are identified automatically, and false paths (FPs) are set between asynchronous clock domains. Architectural exceptions, or false path constraints, are generated to avoid false timing violations between exclusive clocks. Adding these exceptions can lead to faster timing closure.
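To make the creation step concrete, here is a minimal Python sketch of what emitting such constraints could look like. It is not SpyGlass output or its algorithm; the `Clock` and `GeneratedClock` records, the `domain` label and all clock, port and pin names are assumptions made up for illustration. Only the SDC commands themselves (`create_clock`, `create_generated_clock`, `set_false_path`) are standard.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class Clock:
    name: str
    period_ns: float
    port: str
    domain: str  # hypothetical label: clocks sharing it are treated as synchronous


@dataclass(frozen=True)
class GeneratedClock:
    name: str
    master: Clock
    divide_by: int
    pin: str


def emit_sdc(clocks, generated):
    """Return SDC lines for the clocks plus false paths between asynchronous domains."""
    lines = []
    for c in clocks:
        lines.append(
            f"create_clock -name {c.name} -period {c.period_ns:g} [get_ports {c.port}]"
        )
    for g in generated:
        lines.append(
            f"create_generated_clock -name {g.name} "
            f"-source [get_ports {g.master.port}] -divide_by {g.divide_by} "
            f"[get_pins {g.pin}]"
        )
    # A generated clock inherits the domain of its master clock.
    domain_of = {c.name: c.domain for c in clocks}
    domain_of.update({g.name: g.master.domain for g in generated})
    # Every pair of clocks in different domains gets a false path in both directions.
    for a, b in combinations(sorted(domain_of), 2):
        if domain_of[a] != domain_of[b]:
            lines.append(f"set_false_path -from [get_clocks {a}] -to [get_clocks {b}]")
            lines.append(f"set_false_path -from [get_clocks {b}] -to [get_clocks {a}]")
    return lines


if __name__ == "__main__":
    sys_clk = Clock("sys_clk", 10.0, "sys_clk", "sys")
    usb_clk = Clock("usb_clk", 20.0, "usb_clk", "usb")
    div2 = GeneratedClock("sys_div2", sys_clk, 2, "div_reg/Q")
    print("\n".join(emit_sdc([sys_clk, usb_clk], [div2])))
```

Uncertainty and latency are deliberately left out of the sketch, since those values have to come from the user.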

Constraint validation is done at RTL as well as netlist level, checking completeness, consistency and correctness within a robust debugging environment. There are over 300 rules covering clocks, I/O delays, structural exceptions and methodology-based rules, with full support of SDC standards. The solution supports commonly used non-SDC constructs as well. All clock constraints are taken into consideration to check the consistency of clock intent. Any constant propagation conflicts, including forward and backward propagation, are flagged.

Formal waveform verification is done to catch mismatches between the design and the timing intent, which can give a false sense of timing closure but actually lead to chip failure or a re-spin. Complete clock domain analysis, that is, relationship reporting between all clocks and generated clocks, is done by extracting clocks, false paths and uncertainties. The setup for CDC, power and exception verification is done automatically, while any conflict that can lead to incorrect timing or CDC results is pointed out.

Timing Exception Verification is a major step to ensure that adequate and correct timing exceptions are applied to the design. Accurate timing exceptions lead to faster timing closure without overdesigning, thus enabling better power and area optimization. The asynchronous FPs are detected using the CDC solution, quasi-static FPs through simulation, and synchronous FPs using functional verification. Multi-Cycle Path (MCP) verification is done using patented formal techniques, providing a fast solution with a high rate of completion. The overall idea is to identify exceptions that are meaningful to design implementation and that accelerate back-end timing closure through industry-standard STA flows. Closure of exception verification is achieved faster by intelligently monitoring any changes to the exceptions throughout implementation, using assertions and incremental verification, and ensuring that every path is either timed or verified for synchronization.

The constraints are managed throughout the design flow by detecting and incrementally adding missing constraints at any stage and by checking their equivalence at every stage under different scenarios, a patented capability in SpyGlass Timing Constraint Signoff Flow. For example, when two SDCs have been created for a single design, the equivalence between them can be easily checked. Similarly, SDCs at block and chip levels can be checked for equivalence, as can two SDCs for two different flavours of a design.
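As a rough illustration of the idea, the Python sketch below compares two SDC files in an order- and whitespace-insensitive way. This is far weaker than the semantic equivalence checking described in the webinar, which reasons about the design itself; the function names `normalize` and `compare_sdc` are hypothetical, and the sketch assumes one command per line with no backslash continuations.

```python
def normalize(sdc_text):
    """Reduce SDC text to a set of whitespace-normalized commands.

    Assumes one command per line; full-line comments starting with '#'
    and blank lines are ignored.
    """
    commands = set()
    for raw in sdc_text.splitlines():
        stripped = raw.strip()
        if not stripped or stripped.startswith("#"):
            continue
        commands.add(" ".join(stripped.split()))
    return commands


def compare_sdc(sdc_a, sdc_b):
    """Return (commands only in A, commands only in B), each sorted."""
    a, b = normalize(sdc_a), normalize(sdc_b)
    return sorted(a - b), sorted(b - a)


if __name__ == "__main__":
    block_sdc = """
    create_clock -name clk -period 10 [get_ports clk]
    set_false_path -from [get_clocks a]   -to [get_clocks b]
    """
    chip_sdc = """
    # same constraints, different order
    set_false_path -from [get_clocks a] -to [get_clocks b]
    create_clock -name clk -period 10 [get_ports clk]
    set_multicycle_path 2 -setup -from [get_clocks a] -to [get_clocks a]
    """
    only_block, only_chip = compare_sdc(block_sdc, chip_sdc)
    print("only in block SDC:", only_block)
    print("only in chip SDC:", only_chip)
```

A textual diff like this can flag constraints that were dropped or added between two versions, but it cannot tell that two differently written constraints time the same paths; that is where a design-aware equivalence check is needed.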

A design can have multiple functional and test modes and for each mode of operation there is a separate SDC file. In order to simplify the job and save runtime for implementation tools such as Synthesis, P&R and STA, the SDC files of multiple modes can be merged into a single file representing a virtual mode that has timing constraints of all individual modes. To ensure that the merged mode has all timing aspects of individual modes (with pessimism in merged constraint) the SDC equivalence between an individual mode and the merged mode can also be checked.
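The pessimism mentioned above can be shown with a small Python sketch. It assumes a deliberately simplified model in which each mode is just a set of clocks and a set of false paths between clocks; the `merge_modes` and `covers` helpers and the mode and clock names are hypothetical, not part of any tool. The merged mode keeps every clock from every mode, but keeps a false path only if all modes agree on it, so any path timed in some mode is still timed after merging.

```python
def merge_modes(modes):
    """Merge per-mode constraints into one pessimistic virtual mode.

    modes: dict of mode name -> {"clocks": set, "false_paths": set of (from, to)}
    """
    if not modes:
        raise ValueError("at least one mode is required")
    clock_sets = [set(m["clocks"]) for m in modes.values()]
    fp_sets = [set(m["false_paths"]) for m in modes.values()]
    return {
        "clocks": set().union(*clock_sets),               # union of all clocks
        "false_paths": set.intersection(*fp_sets),        # exceptions common to all modes
    }


def covers(merged, mode):
    """True if the merged mode times everything the individual mode times."""
    has_all_clocks = set(mode["clocks"]) <= merged["clocks"]
    no_extra_exceptions = merged["false_paths"] <= set(mode["false_paths"])
    return has_all_clocks and no_extra_exceptions


if __name__ == "__main__":
    modes = {
        "func": {
            "clocks": {"sys_clk", "usb_clk"},
            "false_paths": {("sys_clk", "usb_clk"), ("usb_clk", "sys_clk")},
        },
        "scan": {
            "clocks": {"sys_clk", "usb_clk", "scan_clk"},
            "false_paths": {("sys_clk", "usb_clk"), ("scan_clk", "usb_clk")},
        },
    }
    merged = merge_modes(modes)
    print(merged)
    print({name: covers(merged, m) for name, m in modes.items()})
```

The `covers` check plays the role of the individual-versus-merged comparison: it fails if the merged mode drops a clock or carries an exception that some individual mode does not have.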

The overall health of timing analysis can be measured with a mode-wise coverage summary that points out any aspects of timing that are not covered, such as missing clock constraints, unconstrained registers or ports, and so on. The timing coverage analysis report acts as a good indicator of signoff readiness in all modes.
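A mode-wise summary of this kind can be approximated in a few lines of Python. The sketch below is only an assumption about what such a report might contain: the `coverage_summary` function, its input layout and the register and port names are all hypothetical, and it counts just two things per mode, registers that receive no constrained clock and ports without I/O constraints.

```python
def coverage_summary(design, modes):
    """Compute a per-mode timing coverage summary.

    design: {"registers": set, "ports": set}
    modes:  dict of mode name -> {"clocked_registers": set, "constrained_ports": set}
    """
    total = len(design["registers"]) + len(design["ports"])
    summary = {}
    for name, constraints in modes.items():
        unclocked = sorted(design["registers"] - constraints["clocked_registers"])
        unconstrained = sorted(design["ports"] - constraints["constrained_ports"])
        covered = total - len(unclocked) - len(unconstrained)
        summary[name] = {
            "coverage_pct": 100.0 * covered / total if total else 100.0,
            "unclocked_registers": unclocked,
            "unconstrained_ports": unconstrained,
        }
    return summary


if __name__ == "__main__":
    design = {"registers": {"r0", "r1", "r2"}, "ports": {"din"}}
    modes = {
        "func": {"clocked_registers": {"r0", "r1", "r2"}, "constrained_ports": {"din"}},
        "scan": {"clocked_registers": {"r0", "r1"}, "constrained_ports": {"din"}},
    }
    for mode, row in coverage_summary(design, modes).items():
        print(mode, row)
```

Anything listed as unclocked or unconstrained in a mode is a gap to close before that mode can be considered ready for signoff.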

As a concluding remark, this flow covers all aspects of timing constraints for a robust optimized design; it ensures correct, consistent and complete constraints with all clock relationships properly defined, constraints checked against design intent, timing exceptions verified, constraint equivalence ensured at each stage of design, modes merged for faster implementation and exception coverage report generated for timing signoff.

It’s a nice webinar to attend and learn about timing constraint issues in today’s designs and how they can be addressed using a comprehensive timing constraints signoff solution.

Also read –

Expert Constraint Management Leads to Productivity & Faster Convergence

SpyGlass CDC: A Comprehensive solution for addressing CDC issues

An Approach to Clock Domain Crossing for SoC Designs

Smart Clock Gating for Meaningful Power Saving