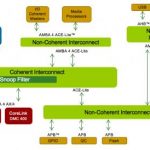

The advantage of cache memory is the big performance boost you get from working with a local, high-speed copy of chunks of data from main memory. The downside is that you are messing with a copy: if another processor happens to be working in the same area, copies of the same main-memory addresses can get out of sync as each processor reads and writes its own copy. That’s where cache-coherence protocols come in, keeping those copies in sync where needed. And since ARM is the de facto supplier of the cache-coherent bus fabrics that connect its processors to cache memories and to other IP, verification IP (VIP) becomes essential for verifying correct usage across all the flavors of AMBA your design may contain.
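To make the "out of sync" danger concrete, here is a deliberately tiny sketch of two private write-back caches sharing one main memory. It is a toy write-invalidate snooping scheme in the spirit of textbook MSI, not a model of ACE or CHI, and every class and function name in it is hypothetical. Without coherence, the second core keeps reading its stale copy; with snooping and invalidation, it sees the new value.

```python
class Cache:
    """Toy write-back cache: private copies of main-memory words (hypothetical model)."""

    def __init__(self, memory):
        self.memory = memory  # shared main memory: addr -> value
        self.lines = {}       # private copies: addr -> [value, dirty]
        self.peers = []       # caches snooped on misses/writes (empty = no coherence)

    def flush(self, addr):
        # Write a dirty copy back to memory and keep it as a clean (shared) copy.
        line = self.lines.get(addr)
        if line is not None and line[1]:
            self.memory[addr] = line[0]
            line[1] = False

    def invalidate(self, addr):
        # Drop our copy, writing it back first if it is dirty.
        line = self.lines.pop(addr, None)
        if line is not None and line[1]:
            self.memory[addr] = line[0]

    def read(self, addr):
        if addr not in self.lines:      # miss: make peers publish newer data first
            for peer in self.peers:
                peer.flush(addr)
            self.lines[addr] = [self.memory.get(addr, 0), False]
        return self.lines[addr][0]      # hit: return our (possibly stale) copy

    def write(self, addr, value):
        for peer in self.peers:         # invalidate every other copy before writing
            peer.invalidate(addr)
        self.lines[addr] = [value, True]


def run(coherent):
    memory = {0x100: 1}
    cpu0, cpu1 = Cache(memory), Cache(memory)
    if coherent:
        cpu0.peers, cpu1.peers = [cpu1], [cpu0]
    cpu1.read(0x100)         # cpu1 caches the old value 1
    cpu0.write(0x100, 2)     # cpu0 updates its own copy
    return cpu1.read(0x100)  # what does cpu1 see now?


print(run(coherent=False))  # 1: stale copy, caches out of sync
print(run(coherent=True))   # 2: invalidation forced a re-fetch
```

Real protocols add many more states, transaction types and ordering rules on top of this basic idea, which is exactly where the verification burden comes from.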

The Synopsys VIP for the AMBA4 ACE and AMBA5 CHI protocols is an excellent example of why proven VIP is so important in test plans. You have to contend with multiple protocols, coherent and non-coherent agents and interconnect, every possible variety of state transition among the agents, ordering complications and more. Since the ACE specification alone runs to nearly 200 pages, it would be crazy to try to recreate all the required checks, particularly the coherency-protocol checks associated with these standards. Instead you buy a proven VIP that configures itself to your design (given some guidance) and generates a testbench that runs sequences to perform all the tests the standards require, provides the related checks, does coverage analysis and more. That leaves you free to focus on how well your architecture and applications meet your market objectives.

So yeah, VIP is good, etc., etc., but isn’t this just one more in a long line of VIP? Well, not quite. Suppose, just for the sake of argument, that you had the time, money and interest to build this yourself (you don’t; there are no extra-credit projects in this area). Of course, it would take a lot of expertise and time to evolve, debug and prove what you had built. But that isn’t really so different from other VIP. What makes this domain especially challenging is the non-determinism in cache-based systems and the potentially long latency between the source of an error and its ultimate effect, all made more complex in systems with coherency management. For all practical purposes, the number of types of software that can run on these systems, multiplied by the number of types of data they must process, multiplied by an unpredictable level of real-time interrupts, gives you an infinite number of possible scenarios hitting caches in unpredictable sequences.
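A quick back-of-the-envelope calculation shows how fast "unpredictable sequences" explode, even before software, data and interrupts enter the picture. The sketch below assumes agents issue independent operations whose relative order is unconstrained apart from each agent's own program order (a real interconnect imposes further ordering rules); the count of possible global orderings is then the multinomial coefficient. The function name is hypothetical.

```python
from math import comb, factorial


def interleavings(ops_per_agent):
    """Count global orderings of per-agent operation sequences that preserve
    each agent's own order: (n1 + n2 + ... + nk)! / (n1! * n2! * ... * nk!)."""
    total = factorial(sum(ops_per_agent))
    for n in ops_per_agent:
        total //= factorial(n)  # exact at every step: intermediates are integers
    return total


print(interleavings([10, 10]))          # 184756 orderings for two agents
print(interleavings([10, 10, 10, 10]))  # four agents: already more than 10**21
```

Just 10 operations on each of four agents already yields more orderings than any simulation could enumerate, which is why coverage-driven sequences and built-in checks matter more than exhaustive testing here.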

What happens if you get this even a little bit wrong? Unlike a small error in a peripheral protocol, an error in cache behavior is an error in the heart of the machine: there really are no bounds to how badly (or, worse yet, how subtly) it can affect behavior. You may not even see the impact of a low-level bug inside the time windows you are testing; an error of this type can manifest quickly or can take days to bubble up to observable misbehavior on silicon. This is an area where imperfection at any level truly is not an option.

For these reasons, cache-coherence VIP must be developed by protocol experts in very close collaboration with the IP provider (ARM). It has to provide comprehensive tests and checks for all possible failure modes, and it has to provide super-streamlined debug support, starting from a protocol view and drilling down to signal- and logic-level root causes. If it takes you too long to debug problems, you’ll run out of time before you know the coherency interactions are really safe. That’s why this VIP is, in an important sense, more critical than any other VIP.

You can learn more about the Synopsys AMBA VIP HERE.