I’ve written before about how Ansys applies big data analytics and elastic compute in support of power integrity and other types of analysis. A good example of the need follows this reasoning: Advanced designs today require advanced semiconductor processes – 16nm and below. Designs at these processes run at low voltages, much closer to threshold voltages than previously. This makes them much more sensitive to local power fluctuations; a nominally safe timing path may fail in practice if temporary local current demand causes a local supply voltage to drop, further compressing what is now a much narrower margin.


Tuning in to Tesla Radio

The rumor is spreading far and wide that Tesla Motors’ CEO Elon Musk is talking with major record labels about creating a streaming audio service dedicated to Tesla vehicles. The widespread industry reaction is head scratching and eye rolling at what is seen as the latest chapter in the Musk follies – Solar City, Model 3, Autopilot, Gigafactory – but a closer look reveals genius at work.

It was five years ago that Harman acquired Aha Radio and began work on an in-dash content aggregation platform. Later iterations suggested that Aha Radio could become a platform for car companies to curate their own branded content delivery experience in their cars with the help of Aha. Unfortunately, Harman and Aha sought to preserve Aha as a branded aggregation platform and the possibility of “Honda Radio” or “Mazda Radio” fell by the wayside.

Harman continues to work on the Aha platform – expanding it into the Ignite data aggregation and monetization system it offers today – but the OEM-branded radio ship has sailed. What the car companies failed to perceive, but which Musk has grasped, is that core vehicle development expenditures in the world of connected cars are slowly but inexorably shifting away from expensive internal combustion engine engineering teams and towards data management and connectivity platforms.

In the auto industry of the future data will indeed be the new vehicle fuel and the engine driving industry growth will be artificial intelligence. The change is coming about both slowly and rapidly and Musk and Tesla are at the tip of the spear because of the complete commitment to an electrified powertrain.

Car makers have struggled with the expensive process of trying to create and maintain their own content and data aggregation platforms. Most car makers, with the exception of Toyota and Tesla, have thrown in the towel on trying to own the in-vehicle content delivery system, recognizing and deferring to the greater resources and brand recognition of Apple and Alphabet. This was a mistake.

Detached from the monumental investments associated with the pursuit of incremental enhancements in performance and fuel efficiency sought by car makers dependent on ICEs, Tesla is free to pursue the next generation challenges of owning and curating content consumption in cars. Tesla’s ICE-focused competitors have been more amenable to surrendering some control to the likes of Apple (CarPlay) and Alphabet (Android Auto) and SiriusXM. Not so Tesla – and Toyota.

In the world of electrified and connected cars differentiation will be less centered on powertrain than it will be on customer engagement. With a much lower service component (fewer moving parts, no oil changes, etc.) car companies will need to leverage connectivity and in-vehicle communication platforms to reinforce brand messages and raise the level of customer engagement.

Tesla understands that content consumption in the connected car is a privileged proposition, one that is ripe for value creation and new forms of customer engagement. Apple and Alphabet with CarPlay and Android Auto are offering one approach. Pandora, Spotify and SiriusXM each have their own approaches.

Combined with broadcast radio and the driver’s own recorded content, content consumption in the car is rapidly fragmenting with no single winner. In spite of these incursions in the dashboard the car radio remains the ideal, contextual, free, content delivery platform integrating entertainment, news, weather, sports and traffic in a distraction mitigated hands-free/eyes-free platform.

It’s no coincidence that the dominant advertisers on broadcast radio are focused on selling, servicing or insuring cars. The symbiosis is powerful and enduring.

SiriusXM has demonstrated that there is the potential for billions of dollars in subscription-based revenue. A Tesla-created content delivery platform is more likely to be ad-supported focusing on what is the single most attractive captive listening audience anywhere: Tesla owners! Having a connected and contextual listening experience with audience measurement elements integrated with automated driving presents a unique value proposition that ANY advertiser would want a piece of.

Pandora-like, Tesla’s system could be free/with ads or ad-free/with subscription – but could also integrate all content/information sources in a single platform – WITHOUT SiriusXM/CarPlay/Android Auto. Tesla Radio will be the answer to these “alien” offerings from third parties with their “own agendas.”

Like everything else at Tesla, the imperative is in-house, vertically-oriented, bespoke solutions not contingent or dependent on any dominant external “platform” provider – i.e., no thank you Apple, SiriusXM, Microsoft, HERE, Alphabet, etc. This isn’t always good strategy and won’t always produce sound results. Tesla bid Mobileye adieu after the fatal crash in Florida, followed by some desperate scratching and scraping to put together an equivalent alternative self-driving system.

Tesla sees that connected cars are a platform opportunity. From the beginning, Tesla has been building its own platform unreliant on the platforms of others. The strategy is clear from sharing patents to building the largest global fast-charging network – it’s all about the platform.

Competing car makers have surrendered the opportunity to “take over” the customer engagement opportunity in the car cabin around content consumption. In other words, competing car makers have surrendered or are surrendering their platform potential.

Companies like Honda flirted with this in the early days of smartphone integration in cars and Internet connections to dashboards. But, ultimately, Honda surrendered to the Apple/Alphabet pressure and has taken a more conservative path – along with the rest of the industry.

Toyota has resisted the Apple/Alphabet pressure but has so far failed to offer a compelling competing solution. (Toyota has signed on with Ford-developed Smart DeviceLink which may yet emerge as an open source means for car makers to create their own platforms.) Tesla understands that every car company should be branding the in-vehicle content consumption experience. Think Chevy Radio, Ford Radio, Chrysler Radio – an integrated, customized, content experience curated for owners of a particular brand’s cars whereby urgent messages – regarding recalls, service messages, emergency notifications, local concerts etc. etc. could be communicated within the car and at the command of the vehicle owner: “Tune to Tesla Radio for the latest information on vehicle updates, recalls, new charging locations and Tesla owner events in your area.”

Anyway, the opportunities and possibilities are endless – and Tesla wants to take advantage. It may be years before car companies recognize that the in-dash infotainment system is rapidly becoming more than just the car radio. That system is becoming an essential information gateway between the car and the cloud and the car company and the owner. By the time the competition understands this, Tesla will be miles down the road creating new customer engagement experiences. Stay tuned.

Why I remain optimistic about Tesla

Tesla’s stock price recently took a hit because of concerns about its delivery capabilities and about increasing competition from carmakers who are switching their product lines to electric. With a market cap still exceeding $50 billion, it can be easy to argue that Tesla’s price remains severely inflated, especially when you compare it with those of GM and Ford — which produce 20 times more revenue. You can understand why Tesla remains one of the most shorted stocks — with billions of dollars in bets against it.

But Tesla has an advantage that many people don’t understand: It is much more than an automotive company; it is a technology company building technology platforms. With these, it is positioning itself to also become the leading player in the energy industry and sharing economy. It will bring the same integration, data analysis and elegance to these industries as it did to cars.

I have referred to my Tesla Model S as “a spaceship that travels on land.” It drives differently from any other kind of car, and is lightning fast, smooth and slick. To me, other electric vehicles, such as the BMW i3, the Mercedes B-Class, the Nissan Leaf and the Chevy Bolt, all of which I have driven, seem by comparison to be a clumsy repackaging of old technologies. They feel more like cassette players than iPods. I have every expectation that Tesla’s Model 3, which I have on order, will be almost as good as my Model S, despite costing half as much.

Tesla cars have been designed from the ground up as computers on wheels. Almost every function is controlled by software, and this enables the company to continually analyze data and optimize its functioning — just as Google Search and Apple Siri do. With the billions of miles’ worth of driving data it is gathering, Tesla is on track to deliver full autonomous driving capability earlier than many other car manufacturers will. And because all of its new cars, including the Model 3, come equipped with the sensors that will be necessary to the autopilot software once it’s released, Tesla has a considerable advantage over its rivals.

In July 2016, Elon Musk announced that Tesla would use these technologies to enable a ride-sharing platform called the Tesla Network, through which owners will be able to rent out their cars as autonomous taxis, thereby recouping their investments and even making profits from their cars. As he explained, “Since most cars are only in use by their owner for 5 percent to 10 percent of the day, the fundamental economic utility of a true self-driving car is likely to be several times that of a car which is not.” With highly sought-after cars and a head start, Tesla could grab a significant share of the vehicle-sharing economy — an economy that is expected to transform the transportation industry and disrupt the automobile market.

Tesla is already diversifying its businesses so that it doesn’t sink with the automotive industry when this happens. Underlying the Tesla cars is another technology platform that it is commercializing: the battery. Tesla’s Powerwall is a rechargeable lithium-ion battery that provides homes with the storage of solar-captured energy for use at night or during power outages. This complements the solar roof tiles that Tesla is selling, which look like ordinary tiles and are priced competitively. So what you have is what Musk has called “a smoothly integrated and beautiful solar-roof-with-battery product that just works.” This is the kind of advantage and elegance that came with the Apple iPhone, which integrated music, telephony, and computer applications into one device.

In the same way as Tesla could make car ownership a revenue generator for its drivers, it could do the same for solar power. Homeowners could share their excess energy with other homeowners and provide charging stations for others’ Tesla vehicles. This would also dramatically expand the Tesla supercharger network, enabling charging of cars almost anywhere.

On a larger scale, Tesla is offering utility companies grid-scale energy-storage systems, demand for which will enable it to scale up its production and gain a cost advantage. It has just won a bid to provide the government of South Australia with the world’s largest lithium-ion battery system — 129 MWh of storage, deliverable at up to 100MW, enough to power more than 30,000 homes. Musk committed to having the system installed in a record 100 days. And the data Tesla gathers from this installation will allow it to optimize energy-storage operations as its self-driving data enables it to optimize its cars.

Yes, Tesla has had production issues and missed delivery targets. But that is how technology companies work: They iterate until they get things right; they think big, take risks and change the world. They make extremely optimistic projections and often miss these, and this is what causes investors to panic and short stocks. But when these companies shoot for the moon, they achieve much more than they might otherwise. This is why they often end up being the most valuable of all — and defying gravity.

For more, read my new book, The Driver in the Driverless Car: How Our Technology Choices Will Create the Future, and visit my website: www.wadhwa.com

SiFive RISC-V and the Future of Computing!

Having started my career during the mini computer revolution it has been an incredible journey from using computers that consumed entire rooms – to the desktop – and now we have supercomputers in our pockets. It makes me chuckle when I hear people complaining about their smartphones when they should be jumping up and down saying, “I have a supercomputer I have a supercomputer!”

Our book, “Mobile Unleashed”, documents how computing went from desktops to our hands and wrists through the history of ARM and their work with Apple, Qualcomm, and Samsung. As Sir Robin Saxby wrote in the book foreword:

“If you’re not making any mistakes, you’re not trying hard enough. I’ve been quoted as saying that in an interview some years ago and when you find yourself defining a new industry, as we did, you try very hard and you make a lot of mistakes. The key is to make the mistakes only once, learn from them and move on.”

ARM certainly made mistakes, mistakes that the next computing revolutionaries have learned from which brings us to RISC-V and SiFive.

RISC-V (pronounced “risk-five”) is a new instruction set architecture (ISA) that was originally designed to support computer architecture research and education and is now set to become a standard open architecture for industry implementations under the governance of the RISC-V Foundation. The RISC-V ISA was originally developed in the Computer Science Division of the EECS Department at the University of California, Berkeley.

Going up against a monopoly like ARM is not an easy task but knowing what I know today I would do it exactly like RISC-V and SiFive. Start at the academic level, open source it (Linux versus Microsoft), and move up from there. I’m convinced that some of “the next big things” will come from young minds, so open source and universities are the place to be.

SiFive is the first fabless provider of customized semiconductors based on the free and open RISC-V instruction set architecture. Founded by RISC-V inventors Krste Asanovic, Yunsup Lee and Andrew Waterman, SiFive democratizes access to custom silicon by helping system designers reduce time-to-market and realize cost savings with customized RISC-V based semiconductors.

We started following SiFive last year and published two articles that are worth reading:

RISC-V opens for business with SiFive Freedom

SiFive execs share ideas on their RISC-V strategy

This week SiFive announced a new CEO, Dr. Naveed Sherwani, to take RISC-V to the next level. I was able to chat with Naveed right before the announcement. It is interesting to note that Naveed is an academic with: EDA experience (he wrote an EDA textbook: Algorithms for VLSI Physical Design Automation), Semiconductor experience (started the Microelectronics Services Business at Intel and co-founded Brite Semiconductor), and ASIC experience (he was co-founder and CEO of Open Silicon). So who better to lead RISC-V into the design enablement phase of business?

When you talk to Naveed you will discover all of this experience bundled into a very high energy and passionate leader. The first question I asked of course was, “Why did you join SiFive?” The answer is, “Passion for computer architecture, the impressive list of RISC-V Members, the academic connection, the country connection (India for example has adopted RISC-V versus the advent of paying billions of dollars to ARM), and the global connection (open source spurs innovation all over the world to benefit mankind).”

The second question I asked was, “How will they go from the “Not ARM” market to the “Better than ARM” market?” The answer was cost, power, and performance.

The third question I asked was, “How will SiFive make money?” The answer was, “Think RedHat for semiconductor IP”:

We make buying Coreplex IP easy and hassle-free.

- Choose and customize your Coreplex IP, with open pricing

- Fill out your ordering information

- We’ll electronically send you our straightforward, 7-page license agreement

- It’s that simple

- No long sales calls

- No restrictive NDAs.

- No opaque pricing

- Just customize, order, and sign, and you’ll have your IP in several business days

- All of SiFive’s Coreplex IP come with 1-year of commercial support

According to a recent study by ARM, more than one trillion IoT devices will be built between 2017 and 2035. If ARM is challenged on both technology and price I would expect that number to be significantly higher, absolutely.

Smart Speakers: The Next Big Thing, Right Now

There are many “next big thing” possibilities these days in tech but mostly behind the scenes; few are front and center for us as consumers. That is until smart speakers started taking off, led by Amazon Echo/Dot and Google Home. The intriguing thing about this technology is our ability to control stuff without needing to type/tap/click on a device. We can be hands-free, working on something which requires most of our attention yet still get useful information (Alexa, where’s the oil drain plug on a 2014 Honda Civic?). Or we can simply be lazy (Alexa, play Guardians of the Galaxy). Don’t underestimate the power of convenience – a good part of the American economy runs on it. Maybe this is just another fad but it feels to me like a fundamental shift in user experience (UX).

These speakers are a wonderful example of convergence of multiple technologies to create a new user application, which after all is what is driving much of the current tech boom. I don’t think I’ve found another company who explain the landscape, shortfalls, opportunities and sheer entertainment value of smart speakers as well as CEVA. Naturally they have a vested interest – they provide a lot of the IP behind the first stages of voice recognition – but they also clearly have a good perspective on the larger space.

Moshe Sheier (Dir Strategic Marketing) kicks off with a review of current and emerging smart speakers. We already know about Amazon Echo (the strong market leader) and Google Home (about a quarter of the market), with crumbs split between the likes of LG, Harman Kardon and Lenovo. However, Apple has now entered the race with HomePod and Microsoft is planning to enter with Harman Kardon. Amazon will also license Alexa to non-Amazon devices. Meanwhile in China, Alibaba, Baidu and others are marketing their own devices. An interesting feature in the Baidu device is a screen (also recently introduced by Amazon) and a camera to track and identify faces to authenticate purchase requests.

Moshe points out that devices look very similar, though with varying numbers of microphones (affecting far-field performance) and speakers, with bigger differences in the AI behind the speakers for voice recognition, interpretation, conversational skills, etc. This is where big players are likely to have a significant advantage and perhaps why Amazon is eager to license Alexa – the biggest revenue-maker may be the one who has the most extensive AI knowledge-base, rather than hardware. But that still points to an opportunity for hardware makers to expand voice recognition to many more domains – application specific hardware solutions, perhaps with some local intelligence, while leveraging big AI platforms in the cloud for detailed recognition and response.

Eran Belaish (PMM for audio/voice at CEVA) opens with a reminder of why voice-based control can be essential (Samsung Gear and GoPro Hero are good examples) and an entertaining look at Alexa conversational skills and Easter eggs (the kind of thing we enjoyed in the early days of Siri). He also adds that Gatebox from Japan has added a holographic character to add a further human (?) touch to interaction. Eran goes on to talk more about the component technology in and behind the speakers which I touched on in an earlier blog. This includes voice-activation and high-quality detection, supported through adaptive beam-forming (which is why more microphones are better for far-field detection) and can support speaker tracking and separation when multiple people are speaking. Acoustic echo-cancellation is another important component; the smart speaker must be able to distinguish speaker commands while it is playing music, as well as handling speech reflections beyond the primary source.

Eran then adds an enlightening and entertaining review to put bounds on the interpretation of “smart” in smart speakers. First up is the familiar ad-placement problem. Proprietary solutions will naturally default to promoting sales through their own sites; Amazon and Alibaba are obvious potential offenders here. Perhaps litigation in Europe over search engine and Android biases will set guidelines before this becomes problematic. He follows with a fun video of Echo and Google Home trapping each other in an infinite loop. Even more entertaining, a TV news piece on a 6-year-old saying “Alexa, buy me a dollhouse” managed to share the problem with those in the audience who had Amazon devices, becoming probably the first viral propagation through a TV news show.

Eran points out some other limitations. As we quickly learned with Siri, the AI behind voice recognition does a good superficial job of mimicking intelligence but it’s not difficult to trip up. Getting to true natural conversation or even understanding is still a long way off. On a different topic he talks about the need for hands-free interfaces especially in the context of battery-driven devices (I also talked about this in my earlier blog). This may seem odd at first – after all smart speakers are intrinsically hands-free, but even when they’re doing nothing they’re burning power listening for commands. In a battery-based device, this isn’t a good idea. Amazon’s Tap was an attempt to fix this but you still have to tap the device first. A better approach should use close-to-zero power listening technology to handle voice-based activation. He gets into this more in the next link.

Eran continues with a review of futures in voice recognition. This starts with that near-zero power voice-activation. A startup microphone company called Vesper, working with the DSP Group, has demonstrated a voice-activation system which burns as little as a 100th of the power of a standard microphone in standby. A second possibility he mentions is the potential for biometric identification through voiceprints. This would have obvious value when making purchases but can also be useful to discriminate between multiple potential users (Alexa, what’s my schedule today?). Eran suggests this capability, at some level, may be closer than we think.

He closes with a couple of other must-haves. Memory support is essential for moving closer to natural understanding. He cites as an example asking what beer he ordered last month for an event. The smart speaker has to search back in time for when you ordered that beer. A trickier case in my view, also requiring ambiguity resolution, would be something like “Alexa, where did I park the car?” followed by “Are the windows open?”. He wraps up with a discussion on emotion detection through voice analysis and ultimately also computer vision. BeyondVerbal has a product in this space which aims to help smart speakers tune their understanding to the emotion content of what you say. This is a very interesting domain – Siri and Alexa teams are already working with this company.

My suggestion – follow CEVA on voice and audio. These guys are plugged into the trends and obviously are developing lots of technology to support this direction.

Five Challenges to IoT Analytics Success

The Internet of Things (IoT) is an ecosystem of ever-increasing complexity; it’s the next wave of innovation that will humanize every object in our life. IoT is bringing more and more devices (things) into the digital fold every day, which will likely make IoT a multi-trillion-dollar industry in the near future. To understand the scale of interest in IoT, just check how many conferences, articles, and studies have been devoted to it recently. This interest hit fever pitch last year, as many companies see a big opportunity and believe that IoT holds the promise to expand and improve business processes and accelerate growth.

However, the rapid evolution of the IoT market has caused an explosion in the number and variety of IoT solutions, which has created real challenges as the industry evolves, chiefly the urgent need for a reliable IoT model to perform common tasks such as sensing, processing, storage, and communicating. Developing that model will not be an easy task by any stretch of the imagination; there are many hurdles and challenges facing a truly reliable IoT model.

One of the crucial functions of IoT solutions is to take advantage of IoT analytics to exploit the information collected by “things” in many ways: for example, to understand customer behavior, to deliver services, to improve products, and to identify and intercept business moments. IoT demands new analytic approaches; as data volumes increase through 2021 to astronomical levels, the needs of IoT analytics may diverge further from traditional analytics.

There are many challenges facing IoT analytics, including data structures, combining multiple data formats, the need to balance scale and speed, analytics at the edge, and IoT analytics and AI.

Data Structures

Most sensors send out data with a time stamp, and most of the data is “boring”, with nothing happening for much of the time. However, once in a while something serious happens and needs to be attended to. While static alerts based on thresholds are a good starting point for analyzing this data, they cannot help us advance to the diagnostic, predictive or prescriptive phases. There may be relationships between pieces of data collected at specific intervals of time. In other words, these are classic time series challenges.
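To make the contrast concrete, here is a minimal sketch that compares a static threshold alert with a simple rolling-window check on time-stamped readings. The function names, the window size, the z-score limit and the sample readings are all assumptions chosen for illustration; a real deployment would likely use a proper time series library and tuned parameters.

```python
from collections import deque
from statistics import mean, pstdev


def static_alerts(readings, limit):
    """Return indices of readings whose value exceeds a fixed threshold."""
    return [i for i, (_, value) in enumerate(readings) if value > limit]


def rolling_alerts(readings, window=5, z_limit=3.0):
    """Return indices whose value deviates strongly from the preceding window."""
    recent = deque(maxlen=window)
    flagged = []
    for i, (_, value) in enumerate(readings):
        if len(recent) == window:
            mu = mean(recent)
            sigma = pstdev(recent)
            # Flag the reading if it sits far outside recent behavior.
            if sigma > 0 and abs(value - mu) / sigma > z_limit:
                flagged.append(i)
        recent.append(value)
    return flagged


# Hypothetical (timestamp, temperature) readings: mostly "boring", one jump.
readings = [(t, 20.0 + 0.1 * (t % 3)) for t in range(10)]
readings[7] = (7, 23.0)

print(static_alerts(readings, limit=25.0))  # the fixed limit misses the jump
print(rolling_alerts(readings))             # the rolling check flags index 7
```

The fixed limit of 25.0 never fires because the jump stays below it, while the rolling check notices that the reading is unusual relative to its recent history. That relationship between a value and its neighbors in time is what the static alert cannot see.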

Combining Multiple Data Formats

While time series data have established techniques and processes for handling, the insights that would really matter cannot come from sensor data alone. There are usually strong correlations between sensor data and other unstructured data. For example, a series of control unit fault codes may result in a specific service action that is recorded by a mechanic. Similarly, a set of temperature readings may be accompanied by a sudden change in the macroscopic shape of a part that can be captured by an image or change in the audible frequency of a spinning shaft. We would need to develop techniques where structured data must be effectively combined with unstructured data or what we call Dark Data.
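As a rough illustration of combining structured and unstructured data, the sketch below links each free-text service note to the fault codes recorded shortly before it. The records, the two-day lookback and the function name are hypothetical and only show the shape of the join, not a recommended matching rule.

```python
from datetime import datetime, timedelta

# Hypothetical structured data: (timestamp, control unit fault code).
fault_codes = [
    (datetime(2017, 6, 20, 8, 0), "P0420"),
    (datetime(2017, 7, 1, 9, 0), "P0301"),
    (datetime(2017, 7, 1, 15, 30), "P0301"),
]

# Hypothetical unstructured data: (timestamp, mechanic's free-text note).
service_notes = [
    (datetime(2017, 7, 2, 10, 0), "Replaced ignition coil on cylinder 1"),
]


def notes_with_preceding_faults(fault_codes, service_notes, lookback):
    """Attach to each note the fault codes seen within `lookback` before it."""
    linked = []
    for note_time, text in service_notes:
        codes = [
            code
            for fault_time, code in fault_codes
            if note_time - lookback <= fault_time <= note_time
        ]
        linked.append({"note": text, "fault_codes": codes})
    return linked


linked = notes_with_preceding_faults(
    fault_codes, service_notes, lookback=timedelta(days=2)
)
print(linked)
```

Once notes and codes are linked like this, the text of the note can serve as a label for the sensor pattern that preceded it, which is the kind of correlation that sensor data alone would not reveal.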

The Need to Balance Scale and Speed

Most of the serious analysis for IoT will happen in the cloud, a data center, or more likely a hybrid cloud and server-based environment. That is because, despite its elasticity and scalability, the cloud may not be suited to scenarios that require large amounts of data to be processed in real time. For example, moving 1 terabyte over a 10Gbps network takes about 13 minutes, which is fine for batch processing and management of historical data but is not practical for analyzing real-time event streams. A recent example is the data transmitted by autonomous cars, especially in critical situations that require a split-second decision.
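The transfer time quoted above can be checked with a few lines of arithmetic. This sketch assumes a decimal terabyte (10^12 bytes), a fully utilized link and no protocol overhead, so real transfers would take longer; the function name is just for illustration. The same formula applies to slower links, such as a 10Mbps broadband connection.

```python
def transfer_seconds(size_bytes, link_bits_per_second):
    """Ideal transfer time: payload size in bits divided by link rate."""
    return size_bytes * 8 / link_bits_per_second


TERABYTE = 10**12  # decimal terabyte, an assumption

fast = transfer_seconds(TERABYTE, 10 * 10**9)  # 10Gbps link
slow = transfer_seconds(TERABYTE, 10 * 10**6)  # 10Mbps link

print(f"10Gbps: {fast / 60:.1f} minutes")
print(f"10Mbps: {slow / 86400:.1f} days")
```

Under these assumptions the 10Gbps case comes out at roughly 13 minutes and the 10Mbps case at a little over nine days, a difference of three orders of magnitude that follows directly from the link speeds.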

At the same time, because some aspects of IoT analytics may need to scale more than others, the analysis algorithm implemented should remain flexible whether it is deployed at the edge, in the data center, or in the cloud.

IoT Analytics at the Edge

IoT sensors, devices and gateways are distributed across different manufacturing floors, homes, retail stores, and farm fields, to name just a few locations. Yet moving one terabyte of data over a 10Mbps broadband network will take about nine days. So enterprises need to plan how to address the projected 40% of IoT data that will be processed at the edge in just a few years’ time. This is particularly true for large IoT deployments where billions of events may stream through each second, but systems only need to know an average over time or be alerted when a trend falls outside established parameters.

The answer is to conduct some analytics on IoT devices or gateways at the edge and send aggregated results to the central system. Through such edge analytics, organizations can ensure the timely detection of important trends or aberrations while significantly reducing network traffic to improve performance.
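A minimal sketch of this idea, assuming a gateway that groups raw readings into fixed-size windows and forwards only a small summary per window. The window size, the acceptable range and the field names are hypothetical, and a real gateway would also handle timing, buffering and transmission.

```python
from statistics import mean


def edge_batches(stream, window_size):
    """Group a stream of readings into fixed-size windows."""
    batch = []
    for value in stream:
        batch.append(value)
        if len(batch) == window_size:
            yield batch
            batch = []
    if batch:
        # Emit any leftover readings as a final, shorter window.
        yield batch


def summarize_window(values, low, high):
    """Reduce a window of raw readings to one small summary record."""
    out_of_range = [v for v in values if v < low or v > high]
    return {
        "count": len(values),
        "mean": mean(values),
        "min": min(values),
        "max": max(values),
        "alerts": len(out_of_range),
    }


# Hypothetical raw temperature readings collected at the edge.
raw = [21.0, 21.5, 22.0, 35.0, 21.0, 20.5, 21.5, 22.5]

summaries = [
    summarize_window(batch, low=15.0, high=30.0)
    for batch in edge_batches(raw, window_size=4)
]
for summary in summaries:
    print(summary)
```

Here eight raw readings become two summary records, and the out-of-range reading still surfaces through the `alerts` count. The central system receives the average and the aberration without the raw stream.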

Performing edge analytics requires very lightweight software, since IoT nodes and gateways are low-power devices with limited strength for query processing. To deal with this challenge, Fog Computing is the champion.

Fog computing allows computing, decision-making and action-taking to happen via IoT devices and only pushes relevant data to the cloud. Cisco coined the term “fog computing” and gave a brilliant definition of it: “The fog extends the cloud to be closer to the things that produce and act on IoT data. These devices, called fog nodes, can be deployed anywhere with a network connection: on a factory floor, on top of a power pole, alongside a railway track, in a vehicle, or on an oil rig. Any device with computing, storage, and network connectivity can be a fog node. Examples include industrial controllers, switches, routers, embedded servers, and video surveillance cameras.” The major benefits of fog computing are that it minimizes latency, conserves network bandwidth, and addresses security concerns at all levels of the network. In addition, it operates reliably with quick decisions, collects and secures a wide range of data, moves data to the best place for processing, lowers expenses by using high computing power only when needed and consuming less bandwidth, and gives better analysis and insights into local data.

Keep in mind that fog computing is not a replacement for cloud computing by any measure; it works in conjunction with cloud computing, optimizing the use of available resources. It was the product of a need to address several challenges: real-time processing of and action on incoming data, and limited resources such as bandwidth and computing power. Another factor helping fog computing is that it takes advantage of the distributed nature of today’s virtualized IT resources. This improvement to the data-path hierarchy is enabled by the increased compute functionality that manufacturers are building into their edge routers and switches.

IoT Analytics and AI

The greatest—and as yet largely untapped—power of IoT analysis is to go beyond reacting to issues and opportunities in real time and instead prepare for them beforehand. That is why prediction is central to many IoT analytics strategies, whether to project demand, anticipate maintenance, detect fraud, predict churn, or segment customers.

Artificial Intelligence (#AI) uses and improves current statistical models for handling prediction. AI automatically learns the underlying rules, providing an attractive alternative to rules-only systems, which require professionals to author rules and evaluate their performance. When AI is applied, it provides valuable and actionable insights.

There are six types of IoT Data Analysis where AI can help:

1. Data Preparation: Defining pools of data and cleaning them, which leads to concepts like Dark Data and Data Lakes.

2. Data Discovery: Finding useful data in the defined pools of data.

3. Visualization of Streaming Data: Dealing with streaming data on the fly by defining, discovering, and visualizing it in smart ways so that decision-making can take place without delay.

4. Time Series Accuracy of Data: Keeping the level of confidence in collected data high, with high accuracy and integrity.

5. Predictive and Advanced Analytics: A very important step, where decisions can be made based on the data collected, discovered, and analyzed.

6. Real-Time Geospatial and Location (Logistical Data): Keeping the flow of data smooth and under control.

But it’s not all “nice & rosy, comfy and cozy”: there are challenges facing the use of AI in IoT, including compatibility, complexity, privacy/security/safety, ethical and legal issues, and artificial stupidity. Many IoT ecosystems will emerge, and commercial and technical battles between these ecosystems will dominate areas such as the smart home, the smart city, financials and healthcare. But the real winners will be the ecosystems with better, more reliable, faster, and smarter IoT analytics tools. After all, what matters is how we can turn data into insights, insights into actions, and actions into profit.

Ahmed Banafa, named No. 1 Top Voice to Follow in Tech by LinkedIn in 2016


EDA Machine Learning from the Experts!

Traditionally, EDA has been a brute force methodology where we buy more software licenses and more CPUs and keep running endless jobs to keep up with the increasing design and process complexities. Take SPICE simulation, for example: when I meet chip designers (which I do quite frequently) I ask them how many simulations they do for a given task. The answer is always, “It depends on how many licenses and CPUs I have access to during a given time period.” The correct answer, of course, is “As many simulations as I need to get the best power, performance, area, and yield for my design.” FinFETs have exacerbated this problem, as a select few EDA experts expected, which has led us to our new trendy buzzword: Machine Learning (ML).

For the record, Machine Learning (adaptive algorithms) gives computers the ability to learn without being explicitly programmed. Arthur Samuel coined the term in 1959 while at IBM. You can see a lengthy definition on Wikipedia.

For a detailed description of ML as it applies to EDA please see these four videos from a panel at #54DAC.

First we have Eric Hall of Data Science (formerly Broadcom):

So, what is machine learning? Well, briefly it’s giving a set of features — X’s, we want to predict the outcome, Y. I’ll mostly be talking about supervised learning, and at times relating it to characterization prediction…
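To make the X-to-Y framing concrete, here is a minimal supervised-learning sketch: fit a straight line to a handful of labeled examples, then predict Y at a new X. The data is made up purely for illustration (supply voltage as the feature, cell delay as the outcome), and the linear relationship is an assumption of the sketch, not something the panel or any characterization tool claims.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical training set: feature X = supply voltage (V),
# outcome Y = cell delay (ps). Values are invented for the example.
voltages = [0.7, 0.8, 0.9, 1.0]
delays = [52.0, 44.0, 36.0, 28.0]

slope, intercept = fit_line(voltages, delays)

# Predict the outcome at a voltage that was never "simulated".
predicted_delay = slope * 0.85 + intercept
print(f"predicted delay at 0.85 V: {predicted_delay:.1f} ps")
```

The "learning" here is just estimating two parameters from examples; production ML models use many more features and far more flexible functions, but the workflow of train on known (X, Y) pairs and then predict Y for new X is the same.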

Next we have Ting Ku from Nvidia:

So, Machine learning seems to be the biggest buzz out there right now. So, what’s the magic? The first thing we need to learn is the classification of machine learning. I have a really, really simplified way of looking at things…

Next we have Sorin Dobre of Qualcomm:

My presentation is seven minutes. We do not have time to present all the techniques for machine learning. So, it’s more focused on the applicability in the EDA space, more of a kind of forecast, to use these techniques to address the design problems we are seeing at 10 nanometers, 7 nanometers and beyond…

And finally we have Jeff Dyck of Solido:

One of the things I wanted to talk about is, a lot of people are buzzing about machine learning right now. We’re excited about it. We’re trying to apply it. There are lots of in house projects that are looking to apply methods that already exist to solve big problems…

Based on my travels around the world attending conferences and meeting with customers I can tell you that ML is in fact real and will be the next big thing in EDA, absolutely.

The first ML EDA products that I have seen in production are in the Solido ML Characterization Suite (below) which uses machine learning to accelerate statistical characterization of standard cells, memory, and I/O. It delivers true 3-sigma LVF/AOCV/POCV values with Monte Carlo and SPICE accuracy, and handles non-Gaussian distributions. It adaptively selects simulations to meet accuracy requirements, minimizing runtime for all cells, corners, arcs, and slew-load combinations. This suite is in use by top semiconductor companies and foundries around the world so the results are real, not a result of marketeering.

Predictor

Reduces library characterization time by 30 to 70%

Solido ML Characterization Suite Predictor uses machine learning to accelerate characterization of standard cells, memory, and I/O. Predictor accurately generates Liberty models at new conditions from existing Liberty data at different PVT conditions, Vt families, supplies, channel lengths, model revisions, and more. It significantly reduces up-front characterization time as well as turnaround to generate library models, and works with NLDM, CCS, CCSN, waveforms, ECSM, AOCV, LVF, and more.

Statistical Characterizer

Generates Monte Carlo accurate statistical timing models >1000x faster than Monte Carlo

Solido ML Characterization Suite Statistical Characterizer uses machine learning technology to accelerate statistical characterization of standard cells, memory, and I/O. It delivers true 3-sigma LVF/AOCV/POCV values with Monte Carlo and SPICE accuracy, and handles non-Gaussian distributions. It adaptively selects simulations to meet accuracy requirements, minimizing runtime for all cells, corners, arcs, and slew-load combinations.

For fast, accurate, statistical characterization, Statistical Characterizer and Predictor can be used in combination. Use Statistical Characterizer on anchor corners to quickly add Monte Carlo accurate LVF, and then use Predictor to create and expand remaining corners, including statistical characterization data, generating savings of more than 50% without compromising accuracy.
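The general idea of spending simulations only until an accuracy requirement is met can be sketched in a few lines. This is not Solido's algorithm, which is not described here; it is the simplest generic version of the idea, a sequential stopping rule that keeps sampling in batches until the standard error of the estimated mean drops below a tolerance. The `fake_spice_run` function is a hypothetical stand-in for a real simulation, and all numbers are invented.

```python
import random
from statistics import mean, stdev


def adaptive_mean(simulate, tol, batch=50, max_runs=10_000):
    """Run `simulate` in batches until the standard error of the mean
    is at most `tol` (or the run budget is exhausted)."""
    samples = []
    stderr = float("inf")
    while len(samples) < max_runs:
        samples.extend(simulate() for _ in range(batch))
        stderr = stdev(samples) / len(samples) ** 0.5
        if stderr <= tol:
            break
    return mean(samples), stderr, len(samples)


# Hypothetical stand-in for one Monte Carlo SPICE run: a delay in ps
# drawn from a Gaussian. A real flow would launch a simulator here.
rng = random.Random(0)


def fake_spice_run():
    return rng.gauss(100.0, 5.0)


estimate, stderr, runs = adaptive_mean(fake_spice_run, tol=0.25)
print(f"mean delay ~ {estimate:.2f} ps after {runs} runs (stderr {stderr:.3f})")
```

A fixed-budget flow would run the same number of simulations for every arc; a stopping rule like this lets well-behaved arcs finish early and spends the budget where the variation is.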

Making Sensors of the World

How many sensors do you think you own? Let’s start with your thermostat. It’s simple, right? You might guess one or two, and if you owned a 2nd generation Nest you’d be wrong. It comes with a light sensor, heat sensor (in addition to temp), microphone, carbon monoxide sensor, smoke sensor, and occupancy sensor. Also, you might have a home security system – so, more sensors. I have a Blink Home security system and it comes with sound, motion and temperature on each camera unit, in addition to the camera itself.

Next is your phone. They most often come with GPS, compass, accelerometer, multiple temperature sensors for internals and ambient temp, and a proximity sensor to tell whether your ear is against the screen. Phones also have fingerprint and heart rate sensors, cameras, microphones and more. The tallies I have seen online suggest there are around 20 sensors in many phones.

We are just getting started. I have an app for my MacBook that shows the internal hardware sensors. These include voltage, current and temperature for many individual components of the system. Batteries are instrumented, as well as GPU’s, the external case, and of course the CPU. MacBooks also have light sensors, cameras, microphones and more.

I’ve already lost count and now we are getting to the good stuff – cars. I own an older car but it has a bevy of sensors for running the engine and reducing pollution. These include temperature sensors, O2 sensors, pressure sensors for emissions, and a variety of others. It also has motion and sound sensors for the alarm. The antiskid system has rotation sensors on the wheels as well as an accelerometer and gyroscope. Oh, did I mention the GPS yet?

According to an article written by Sidense, cars today have 60 to 100 sensors, and smart cars will easily reach 200. The automotive industry alone will use 22 billion sensors per year by the year 2020. Stop and consider that for a second. The article entitled “Sensors Everywhere” by Jim Lipman and Andrew Faulkner is filled with interesting information. For instance, with the requirements for so many automotive sensors, the market for them will grow from $22B in 2016 to $37B in 2022.
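For a sense of what that market forecast implies, the two endpoints quoted above ($22B in 2016, $37B in 2022) can be turned into a compound annual growth rate. The calculation below assumes smooth compounding between the two figures, which the article does not state; it is only a back-of-the-envelope check.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values `years` apart."""
    return (end_value / start_value) ** (1 / years) - 1


# Endpoints quoted from the article, in billions of dollars.
rate = cagr(22.0, 37.0, 2022 - 2016)
print(f"implied growth: {rate:.1%} per year")
```

That works out to roughly 9% growth per year over the six-year span.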

The article also outlines a lot of the other applications that will be using sensors, now and in the future. Indeed, the Internet of Things would be pretty useless without sensor technology. You might as well move it all back to mainframes in a server room if you do not intend to gather physical or electromagnetic information from the device operating environment.

Sidense is interested in sensor technology because implementing sensor based designs for connected devices and systems requires non-volatile memory. There are a lot of different varieties of non-volatile memory that are used based on system requirements. One Time Programmable (OTP) NVM is uniquely suitable for an important subset of these needs. The underlying technology relies on a single transistor bit cell that is fabricated using a standard layer stack-up. This is nice because it does not add any extra fabrication steps or costs. The small size of the bit cell is attractive, as are the resulting area savings.

The memories can be programmed before distribution or programming can be done in the field using an integrated charge pump or external rail voltage suitable for write operation. Because there is no stored charge – the bit is programmed with a physical change to the thin oxide of the 1T bit cell – the data retention is excellent. The Sidense OTP-NVM is very rugged and suitable for extreme environments – making it ideal for automotive and other applications with wide ranging temperatures and physical stresses.

The Sidense article outlines some of the use cases for OTP-NVM. Sensors need to store calibration data, and while this data rarely changes, it’s nice to have provisions for updates. OTP-NVM is an easy and effective way to handle this requirement. Security is another ripe area for OTP-NVM. The data in the bit cells is nearly impossible to hack. Side channel attacks are also thwarted due to OTP-NVM characteristics that the article discusses. OTP-NVM is used to store unique device IDs, crypto keys, system configuration and feature keys, among other things. Because larger blocks of OTP-NVM can simulate re-writeable memory, with a limited number of rewrites, it can be used for secure boot code storage, adding to root of trust in critical systems.
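How can memory that can only be programmed once behave as if it were rewritable? One common generic scheme, sketched below, reserves several one-time slots for a single logical value: each "rewrite" programs the next unused slot, and a read returns the most recently programmed one. This is an illustrative model only; the class name and slot layout are hypothetical and are not taken from Sidense documentation.

```python
class EmulatedRewritableOTP:
    """Model a rewritable value built on one-time-programmable slots.

    Each slot can be programmed exactly once, so the number of slots
    is the total number of writes the value will ever support.
    """

    def __init__(self, slots):
        self._slots = [None] * slots  # None means "not yet programmed"

    def write(self, value):
        """Program the next unused slot with `value`."""
        for index, slot in enumerate(self._slots):
            if slot is None:
                self._slots[index] = value
                return
        raise RuntimeError("all OTP slots are used; no rewrites left")

    def read(self):
        """Return the most recently programmed value, or None if blank."""
        current = None
        for slot in self._slots:
            if slot is None:
                break
            current = slot
        return current

    def writes_left(self):
        return self._slots.count(None)


# Hypothetical use: sensor calibration data with room for two updates.
calibration = EmulatedRewritableOTP(slots=3)
calibration.write(0x1A)   # factory calibration
calibration.write(0x1C)   # field update
print(hex(calibration.read()), calibration.writes_left())
```

The trade-off is visible in the constructor: every allowed rewrite costs another copy of the data in silicon, which is why the rewrite count is limited.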

Clearly the applications and prevalence of sensors will only increase. Areas where they can be used that are not even mentioned here include smart buildings, medical, smart cities, and defense. There is high value in adding sensors to many areas of our lives and our world. Hopefully this technology can be used to increase efficiency and improve our quality of life. Regardless, more and newer sensors are a certainty. If you want to read the full article by Sidense on the applications of OTP-NVM in sensors, look on the Sidense website.

Prototyping GPUs, Step by Step

FPGA-based prototyping has provided a major advance in verification and validation for complex hardware/software systems but even its most fervent proponents would admit that setup is not exactly push-button. It’s not uncommon to hear of weeks to set up a prototype or of the prototype finally being ready after you tape-out. Which may not be a problem if the only goal is to give the software team a development/test platform until silicon comes back, but you’d better hope you don’t find a hardware problem during that testing.

It doesn’t have to be this way. Yes, prototype setup is more complex than for simulation or emulation but a systematic methodology can minimize this overhead and start delivering value much faster. A great stress-test for this principle is a GPU since there are few designs more complex today; any methodology that will work well on this class of design will almost certainly help for other designs. Synopsys recently presented a webinar on a methodology to prototype GPUs using HAPS.

Paul Owens (TMM at Synopsys) kicks off with guidance on schedule management / risk reduction which should be second nature to any seasoned engineer, but it’s amazing how quickly we all forget this under pressure. You should bring the design up piecewise, first proving out interfaces and basic boot, then adding a significantly scaled-down configuration of cores (for this class of design with scalable arrays of cores); only towards the end do you start to worry about optimization. These bring-up steps can cycle much faster than the whole design.

With that in mind, start with a design review – not of the correctness of the design but its readiness to be mapped to the prototyper. This is platform-independent stuff – what are you going to do with black-boxes, behavioral models, simulation-only code, how are you going to map memories, what is a rough partitioning of the design that makes sense to you?

Next you should start thinking about requirements to map the design to the prototype and how you’re going to adapt with minimal effort when you get new design drops. Here’s where you want to work on simplifying clocks, merging clock muxes and making clocks synchronous. You may also want to add logic to more easily control boot. Paul recommends separating such changes from the main design RTL to the greatest extent possible to simplify adapting to new drops.

Paul’s next step is preparing first bitfiles, with a strong recommendation to start with low utilization (40-50% of resources) with automatic partitioning. That may seem self-serving, but you should ask yourself whether you’d rather save money on a smaller prototyper and spend more time fighting to fit your design or spend more time in debug and validation. Designs aren’t going to get smaller so you might as well cough up now because it will speed setup and you’ll need that capacity later anyway. Obvious things to look for and optimize here are multi-chip clock paths (leading to excess skew) and multi-chip paths in general. Paul also suggests that while you can start with an early version of the RTL, you should wait for a frozen drop before considering FPGA place and route because this takes quite a long time.
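The low-utilization advice translates into a simple sizing estimate. The sketch below computes how many FPGAs a design needs if each device is only filled to a target fraction of its capacity. The LUT counts are hypothetical, and counting LUTs alone is a simplification: real partitioning also depends on memories, I/O pins, and inter-FPGA connectivity, none of which are modeled here.

```python
import math


def fpgas_needed(design_luts, luts_per_fpga, target_utilization=0.5):
    """Number of FPGAs required to keep each one at or below the
    target utilization, considering LUT count only."""
    usable_luts = luts_per_fpga * target_utilization
    return math.ceil(design_luts / usable_luts)


# Hypothetical numbers: a 9M-LUT design on devices with 3M LUTs each.
at_half = fpgas_needed(9_000_000, 3_000_000, target_utilization=0.5)
at_full = fpgas_needed(9_000_000, 3_000_000, target_utilization=1.0)
print(f"{at_half} FPGAs at 50% utilization vs {at_full} if packed full")
```

With these made-up figures, following the 50% guideline doubles the hardware compared with packing every device full, which is exactly the cost-versus-setup-time trade the paragraph above describes.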

One point Paul stresses is that you should be planning debug already at this stage, particularly when you’re working with a scaled down design. Generous debug selections will greatly simplify debugging when you get around to bring-up.

For prototype setup Paul suggests a number of best practices including a lockable lab to keep the curious out, adequate power supplies, ESD protection, disciplined cabling and smoke testing (i.e. general lab best practices). For initial bring-up he notes that the biggest challenge often is getting the design through reset and boot. Multiple interfaces exacerbate this problem – that’s why you should put effort into checking those interfaces earlier.

When you’re done with all of this, then you can look at optimization. Here you should consider timing constraint cleanup and possibly partitioning optimization. But Paul adds that in his experience it is difficult to improve significantly on what the automatic partitioner will deliver. However, changing partitioner options, cable layout and some other factors can have significant impact.

Finally you move on to system verification and validation! Overall, I think you’ll find this approach is a very practical and useful exposition of how to get through prototype bring-up with a minimum of fuss and maybe have feedback from prototype V&V actually have impact on design before tapeout 😎. You can see the full webinar HERE.

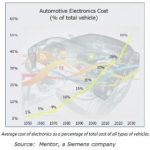

Automotive IC Design Requires a Unique EDA Tool Emphasis

Semiwiki readers are no doubt very familiar with the increasing impact of the automotive market on the semiconductor industry. The magnitude and complexity of the electronic systems that will be integrated into upcoming vehicle designs reflects the driver automation, safety, and entertainment features that are in growing demand. The figure below illustrates the anticipated “value” of the electronics that will be present in future years.

Continue reading “Automotive IC Design Requires a Unique EDA Tool Emphasis”