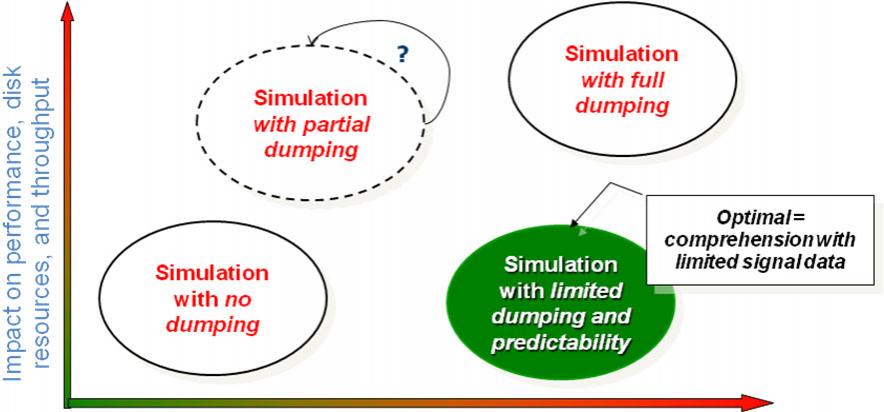

One of the challenges in verifying today’s large chips is deciding which signals to record during simulation so that you can work out the root cause when you detect something anomalous in the results. If you record too few signals, you risk having to re-run the entire simulation because a signal you did not record turns out to be important. If you record too many, or simply record every signal to be on the safe side, simulation time can become prohibitively long. Either way, whether you re-run the simulation or run it very slowly, verification takes unacceptably long.

The way out of this dilemma is to record the minimal essential set of data from the logic simulation that still gives full visibility. This ensures that the simulation never has to be re-run for lack of data, while not slowing it down unnecessarily. A trivial example: there is no need to record both a signal and its inverse, since one value can easily be re-created from the other. Working out which signals form the essential set is not feasible by hand for anything except the smallest designs (where it doesn’t really matter, since the overhead of recording everything is not high).
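To make the signal-and-its-inverse example concrete, here is a minimal sketch of picking an essential set from a toy design. The netlist, the signal names and the `essential_signals` helper are all hypothetical, and the rule used (record only signals that no gate drives) is a deliberate simplification for a purely combinational circuit, not a description of how any real tool performs its analysis.

```python
# Hypothetical toy netlist: each derived signal maps to (operator, input signals).
# Anything that appears as a key can be recomputed from other signals.
NETLIST = {
    "n_req": ("not", ["req"]),            # inverse of req
    "grant": ("and", ["req", "ready"]),
    "busy": ("or", ["grant", "n_req"]),
}

ALL_SIGNALS = ["req", "ready", "n_req", "grant", "busy"]


def essential_signals(all_signals, netlist):
    """Return the signals that no gate drives, so they cannot be recomputed.

    Assumption: the design is purely combinational. A real design also has
    registers and memories whose state would need to be captured.
    """
    return sorted(s for s in all_signals if s not in netlist)


ESSENTIAL = essential_signals(ALL_SIGNALS, NETLIST)
print(ESSENTIAL)  # ['ready', 'req']
```

In this toy case only two of the five signals need to be recorded; `n_req`, `grant` and `busy` can all be re-created afterwards.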

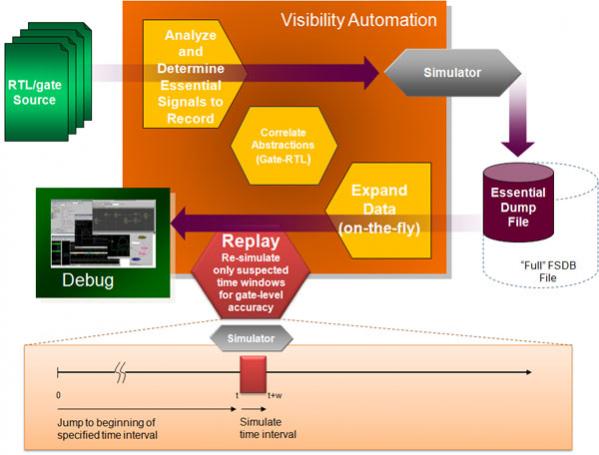

SpringSoft’s Siloti automates this process, minimizing simulation overhead while ensuring that the necessary data is available for the Verdi system for debug and analysis.

There are two basic parts to the Siloti process. Before simulation, a visibility analysis phase determines the essential signals, which are the signals that will be recorded during the simulation. After simulation, a data expansion phase calculates the signals that were not recorded. This is done on demand, so values are only determined if and when they are needed. In addition, the recorded signals can be used to re-initialize a simulation and re-run any particular simulation window without restarting the whole simulation from the beginning.
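The on-demand data expansion idea can be illustrated with a small sketch. Everything here is an assumption made for illustration: the toy netlist, the recorded traces, and the `value_at` function are hypothetical, the circuit is purely combinational with one value per time step, and none of it reflects Siloti's actual implementation.

```python
from functools import lru_cache

# Hypothetical toy netlist: derived signal -> (operator, input signals).
NETLIST = {
    "n_req": ("not", ["req"]),
    "grant": ("and", ["req", "ready"]),
    "busy": ("or", ["grant", "n_req"]),
}

# Hypothetical recorded data: only the essential signals, one value per time step.
RECORDED = {
    "req": [0, 1, 1, 0],
    "ready": [1, 1, 0, 0],
}

# Gate evaluators operating on a list of 0/1 input values.
OPS = {
    "not": lambda v: 1 - v[0],
    "and": lambda v: int(all(v)),
    "or": lambda v: int(any(v)),
}


@lru_cache(maxsize=None)
def value_at(signal, t):
    """Return the value of `signal` at time step `t`.

    Recorded signals are read from the dump; unrecorded ones are computed
    from their inputs only when asked for, and cached for reuse.
    """
    if signal in RECORDED:
        return RECORDED[signal][t]
    op, inputs = NETLIST[signal]
    return OPS[op]([value_at(name, t) for name in inputs])


# Expand one unrecorded signal across the whole recorded window.
busy_trace = [value_at("busy", t) for t in range(4)]
print(busy_trace)  # [1, 1, 0, 1]
```

Nothing is computed for a signal until something asks for it, which mirrors the "if and when they are needed" behaviour described above; the cache keeps repeated queries during a debug session cheap.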

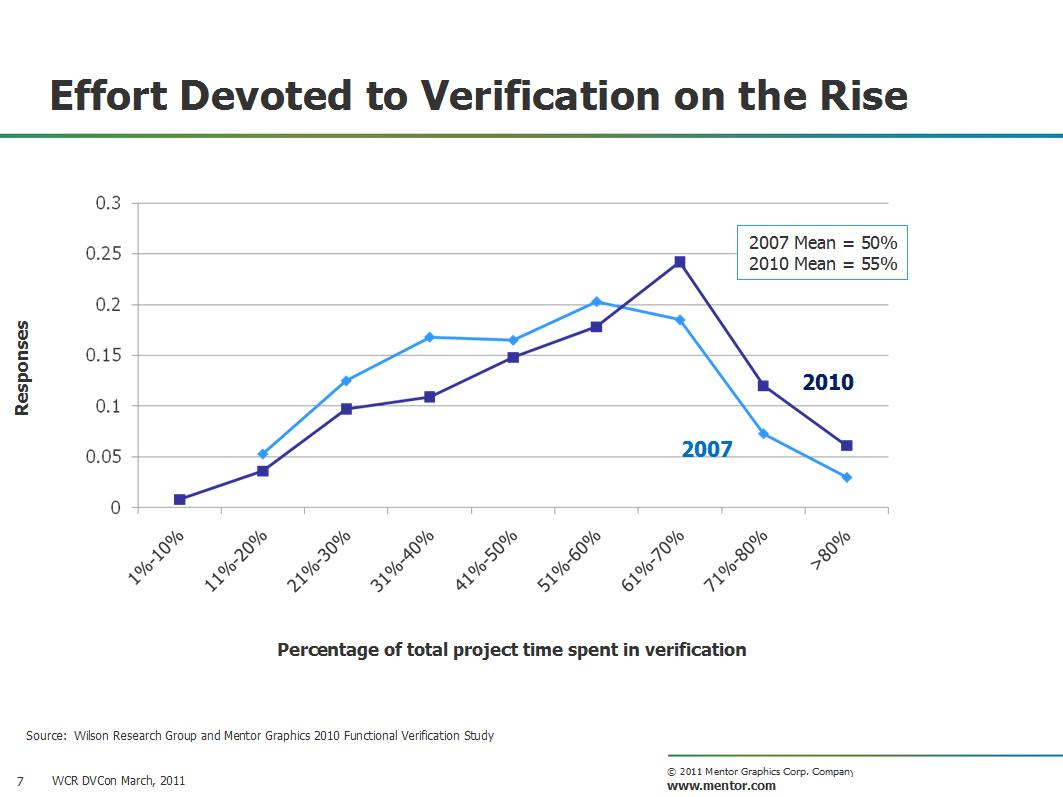

Using this approach results in dump files around 25% of the size produced when recording all signals, and simulation turnaround times around 20% of the original. With verification taking up to 80% of design effort, these are big savings.
