Digital audio processing is evolving into an art form, particularly in high-end applications such as automotive, cinema, and home theater. Innovation is moving beyond spatial audio technologies to concepts such as environmental correction and spatial confinement. These sophisticated soundscapes are driving a sudden increase… Read More

Tag: cache coherence

Accelerating Cache Coherence Verification

It would be nice if there were a pre-packaged set of assertions which could formally check all aspects of cache coherence in an SoC. In fact, formal checks do a very nice job for the control aspects of a coherent network. But that covers only one part of the cache coherence verification task. Dataflow checks are just as important, where… Read More

Cache Coherence Everywhere may be Easier Than you Think

I attended one of the Arm partner events in Cambridge many years ago, when they first talked about the coherent hub for managing cache coherence. I was impressed, but the obvious question even then was how any non-Arm IP was going to hook into this hub. They had a solution, of course, the ACE interface, and I left satisfied. As is the … Read More

Quick Error Detection. Innovation in Verification

Can we detect bugs in post- and pre-silicon testing where we can drastically reduce latency between root cause and effect? Quick error detection can. Paul Cunningham (GM, Verification at Cadence), Jim Hogan and I continue our series on novel research ideas. Feel free to comment.

The Innovation

This month’s pick is Logic Bug Detection… Read More

Trends in AI and Safety for Cars

The potential for AI in cars, whether for driver assistance or full autonomy, has been trumpeted everywhere and continues to grow. Within the car we have vision, radar and ultrasonic sensors to detect obstacles in front, behind and to the side of the car. Outside the car, V2x promises to share real-time information between vehicles… Read More

Verification, RISC-V and Extensibility

RISC-V is obviously making progress. Independent of licensee signups and new technical offerings, the simple fact that Arm is responding – in fundamental changes to their licensing model and in allowing custom user extensions to the instruction set – is proof enough that they see a real competitive threat from RISC-V.

Which all… Read More

How Should I Cache Thee? Let Me Count the Ways

Caching intent largely hasn’t changed since we started using the concept – to reduce average latency in memory accesses and to reduce average power consumption in off-chip reads and writes. The architecture started out simple enough, a small memory close to a processor, holding most-recently accessed instructions and data … Read More

Tutorial on Advanced Formal: NVIDIA and Qualcomm

I recently posted a blog on the first half of a tutorial Synopsys hosted at DVCon (2018). This blog covers the second half of that 3½ hour event (so you can see why I didn’t jam it all into one blog :D). The general theme was on advanced use models, the first half covering use of invariants and induction and views from a Samsung expert on efficient… Read More

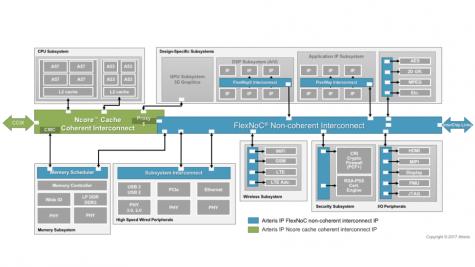

Connecting Coherence

If a CPU or CPU cluster is the brain of an SoC, then the interconnect is the rest of the central nervous system, connecting all the other processing and IO functions to that brain. This interconnect must enable these functions to communicate with the brain, with multiple types of memory, and with each other as quickly and predictably… Read More

Cache Coherent Systems Get a Boost from New Technology

The speed and power penalties for accessing system RAM affect everything from artificial intelligence platforms to IoT sensor nodes. There is a huge power and performance overhead when the various IP blocks in an SoC need to go to DRAM. Memory caches have become essential to SoC design to reduce these adverse effects. However, … Read More