The Synopsys Formal group have a reputation for putting on comprehensive tutorials/workshops at DVCon and this year again they did not disappoint. The theme for the Thursday workshop was tackling complexity in control and datapath designs using formal. Ravindra Aneja, who I know from Atrenta days, kicked off the session with their main objective: to overcome common concerns raised by folks interested in formal but concerned about the extent to which it can contribute. These should sound familiar: on what types of design can I use formal, how well does it scale to large functions and what do I really save by using formal?

Ashish Darbari, CEO of Axiomise next presented on formal for SoC designs. I don’t think I can do justice to his full presentation, so I’ll just mention a few points he emphasized for scalability. First, and most obviously, apply formal to smaller functions (such as complex state machines) that are so tangled they are really hard to verify comprehensively using dynamic verification. For larger functions, he suggests using a method he calls proof-engineering; this is simply breaking down a larger problem into smaller pieces which you can prove individually and then assemble into fuller, more complete proofs on the larger system. That shouldn’t be too scary – it’s engineering 101 after all. He talks about common methods in formal to handle these, including assume-guarantee and case-splitting. Don’t worry about the jargon; the underlying concepts in these techniques are not at all complicated.

Nitin Mhaske (another Atrenta alum) next talked about using formal to verify control logic. generally considered to be the sweet spot for formal. Widely cited examples in this space include complex state controllers; Nitin use a PCIe/USB LTSSM and a 10G/40G Ethernet state controller. I would add cache coherency controllers as another good example. What all of these have in common is many states and multiple paths to those states, complex state transition conditions and difficulty in ensuring that all possibilities have been considered in verification. Nitin detailed techniques to attack verification of these systems, also how to look deeper in a design using bug-hunting to check behavior beyond what you can intuitively see.

The final section attracted me especially because the speaker (Per Bjesse of Synopsys) talked about formal verification of datapaths, a topic typically considered a no-no for formal. Synopsys have been quietly advancing their HECTOR™ technology (now under the hood in their DPV app) for several years now and seem to have some serious customer validation. These include proofs from 32-bit to 128-bit FPUs across all the standard opcodes from ADD to MULT, DIV and SQRT, many completing in minutes, others in no more than a few hours and the most complex in around 6 hours.

They have also discovered that this analysis is particularly promising for proofs in systolic arrays of multiply-accumulate (MAC) functions. Does that sound familiar? It should; these are the basis of neural net (NN) architectures. Of course proofs at this level are not going to prove that an image is correctly recognized, but they will prove that the foundation logic matches the intended implementation. This is not as trivial in many cases as it may sound; for example, it has become very common to have varying and non-standard word-widths between planes in inference NNs at the edge. I can imagine this foundational level of verification could prove quite important in the overall verification plan.

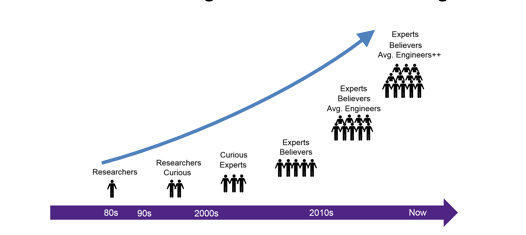

What’s under the hood? I was told that the number of proof engines is often smaller than for control proving, and includes the familiar BDD, SAT and SMT methods, though more tuned to datapath proofs. Since a good deal is automated, effort in using this technology can actually be simpler than for general formal verification; examples mentioned were integer multiply (with a result < 20 bits), AES single round and floating-point half-precision. Also, the datapath-proving algorithms work with both C and RTL, a significant convenience when verifying algorithms developed in C/C++ and obviously allowing for much faster proofs at this higher level of abstraction. My take-away? If you’re still on the fence about formal, scalability is manageable, proving in control logic already has well-established value and proving in datapath logic is now looking more practical. As for value in the overall verification task, many companies are already doing this as an adjunct to dynamic verification. They have reported that it saves them time, because their formal group can start finding bugs while the dynamic folks are still building testbenches. It adds confidence because those functions that have been proven formally are known to be solid. And it mostly replaces simulation for those functions assigned to formal proving. I say mostly because I have seen cautious verification managers still use interface assertions from formal proofs in dynamic testing. But they’re not repeating the formal testing, they just want added confidence (after all, the people who created the formal properties can make mistakes too).

You can learn more about the full range of Synopsys formal capabilities HERE.

Share this post via:

Comments

2 Replies to “Managing Formal Complexity Even into AI”

You must register or log in to view/post comments.