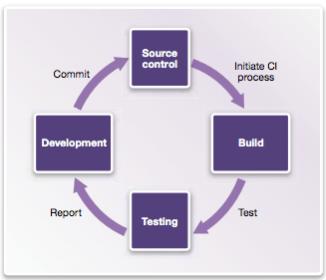

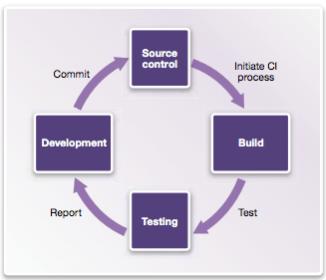

A familiar refrain in software development, as much as in hardware development, is that the size and complexity of projects continue to grow as schedules shrink and expectations of quality increase dramatically. A common approach to managing this challenge in software programs is to adopt agile development practices, and one aspect of that approach is continuous integration (CI). In CI, any check-in by any developer in effect triggers a production build and test. Broken builds are immediately flagged to all developers, encouraging quick correction of deviations from a working build rather than allowing hidden integration issues to accumulate. CI is primarily an engineering discipline, but naturally it benefits greatly from automation support. Popular open-source tools in this domain include Jenkins for CI automation and Linaro LAVA for test automation.

Agile methods in general, and CI in particular, were developed as software engineering practices that assume a stable hardware platform. But how do you apply these concepts when, as in many embedded applications, that hardware is still in development? Hardware-based prototypes become available too late in product development or are too slow (or both), so software developers depend heavily on (software-based) virtual prototype (VP) models. These model hardware behavior in just enough detail to ensure that software running on the VP will behave the same way on the final hardware, yet they can still run at near real-time speed.

Synopsys Virtualizer Development Kits (VDKs) provide the kind of VP platform you need to connect to CI frameworks. VDKs can be developed from scratch, starting from high-level transaction-level models, or you can start from one of several pre-built solutions, such as the family of VDKs for ARM processors, which you can then customize to match your specific design configuration. The ARM VDKs, for example, provide out-of-the-box support for Linux and Android; plug-and-play support for software debuggers from ARM, Lauterbach and GNU; and reference virtual prototypes for several DesignWare interface IPs, among many other features. VDKs aim to get you up and running quickly with a fully functional VP that requires minimal tuning on your part.

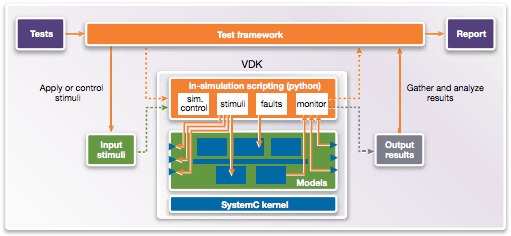

Once you have your VDK, you can integrate it into a CI framework. For more technical details, see the link at the end of this blog. Here I want to touch on how VDKs integrate into CI testing frameworks and what that integration enables. Each VDK provides interfaces that connect input stimuli from the framework to the VDK and pass output information from the VDK back to the framework. Control commands, for example for run control, can also be exchanged between the framework and the VDK over a TCP/IP connection to a scripting layer within the model.
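
To make the TCP/IP control path more concrete, here is a minimal Python sketch of a framework-side client. It assumes a hypothetical line-oriented protocol in which the scripting layer accepts one text command per line and answers with one line; the class name `ScriptingClient`, the default port 5555 and any command strings you send are illustrative assumptions, not the documented VDK interface.

```python
import socket


class ScriptingClient:
    """Framework-side client for a HYPOTHETICAL line-oriented run-control protocol.

    Assumption: the model's scripting layer accepts one UTF-8 text command per
    line and answers each command with exactly one line. The real VDK
    scripting interface may use a different protocol.
    """

    def __init__(self, host: str = "127.0.0.1", port: int = 5555, timeout: float = 10.0):
        # Port 5555 is an illustrative default, not a documented VDK port.
        self._sock = socket.create_connection((host, port), timeout=timeout)
        # Wrap the socket in a text-mode file object so replies can be read line by line.
        self._reader = self._sock.makefile("r", encoding="utf-8", newline="\n")

    def send(self, command: str) -> str:
        """Send one command and return the scripting layer's one-line reply."""
        self._sock.sendall((command + "\n").encode("utf-8"))
        reply = self._reader.readline()
        if not reply:
            raise ConnectionError("scripting layer closed the connection")
        return reply.rstrip("\n")

    def close(self) -> None:
        self._reader.close()
        self._sock.close()

    # Context-manager support so a CI script can write `with ScriptingClient(...) as c:`.
    def __enter__(self) -> "ScriptingClient":
        return self

    def __exit__(self, *exc) -> None:
        self.close()
```

A CI job might connect, send a hypothetical command such as `run` to start the simulation, query status until the test scenario finishes, and then close the connection. Keeping the exchange to newline-delimited text makes it easy to echo every command and reply into the CI build log.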

VDKs provide support for many types of analysis, but a couple are especially interesting here. Let’s start with support for safety standards such as ISO 26262. It has been said that there are more lines of code in a Ford Fusion than there are in a Boeing 777. You’re not going to develop, maintain and enhance that kind of code base in a waterfall model; you have to use something like CI.

Safety-standard compliance for that code also has to be based on in-the-loop testing. Again, software developers don’t have the hardware available for that testing until late in the development cycle, but with VDKs they can perform Failure Mode and Effects Analysis (FMEA) on virtual models, which they can instrument through the scriptable fault-injection capability provided in VDKs. They can inject faults into memory contents, registers and signals, or into the loaded embedded software; this is completely non-intrusive, requiring no modification to the embedded software. Additionally, the scripting layer provides full control for defining complex scenarios and corner cases based on complex triggers that may be difficult to reproduce on real hardware. This capability is completely deterministic, so fault scenarios are repeatable and can be included in regression testing.
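
The repeatability argument can be illustrated with a toy model. The Python sketch below is not the VDK fault-injection API: it uses a hypothetical 16-byte memory with a parity-protected data region, flips single bits chosen by a seeded random generator, and classifies each outcome as detected by the software's own check, silent corruption, or no effect. Because the fault list is derived from a fixed seed, rerunning the campaign reproduces exactly the same faults and outcomes, which is the property that lets fault scenarios live in a regression suite.

```python
import random
from dataclasses import dataclass

# Hypothetical toy memory map (16 bytes), standing in for a modeled memory:
#   0..7   data bytes, protected by an XOR parity byte
#   8      parity byte
#   9..11  configuration bytes used by the workload but NOT protected
#   12..15 unused bytes
MEM_SIZE = 16


@dataclass(frozen=True)
class Fault:
    address: int  # byte offset in the toy memory
    bit: int      # bit position (0..7) to flip


def golden_memory() -> bytearray:
    data = bytes(range(1, 9))
    parity = 0
    for b in data:
        parity ^= b
    config = bytes([3, 5, 7])
    return bytearray(data + bytes([parity]) + config + bytes(4))


def workload(mem: bytearray) -> tuple[bool, int]:
    """Toy 'embedded software': a parity self-check plus a computed result."""
    parity = 0
    for b in mem[0:8]:
        parity ^= b
    check_ok = parity == mem[8]
    result = sum(mem[0:8]) * sum(mem[9:12])
    return check_ok, result


def plan_campaign(seed: int, count: int) -> list[Fault]:
    # A seeded generator makes the fault list identical on every run.
    rng = random.Random(seed)
    return [Fault(rng.randrange(MEM_SIZE), rng.randrange(8)) for _ in range(count)]


def classify(fault: Fault, golden_result: int) -> str:
    mem = golden_memory()
    # Inject a single-bit flip into the memory image; the workload code itself is untouched.
    mem[fault.address] ^= 1 << fault.bit
    check_ok, result = workload(mem)
    if not check_ok:
        return "detected"
    if result != golden_result:
        return "silent_corruption"
    return "no_effect"


def run_campaign(seed: int, count: int) -> list[tuple[Fault, str]]:
    _, golden_result = workload(golden_memory())
    return [(f, classify(f, golden_result)) for f in plan_campaign(seed, count)]
```

Counting the three outcome classes over a campaign gives a rough picture of how well the software's safety mechanism covers the injected faults; in this toy, flips in the unprotected configuration bytes are the ones that escape as silent corruption.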

In a similar vein, code coverage is a key measure of the completeness of any software testing. VDKs provide an on-target code coverage measurement solution that does not require instrumenting the software. In both cases, code coverage and fault coverage, the results can be integrated into CI coverage reporting using plugins such as Cobertura.
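
Coverage plugins of this kind consume reports in the Cobertura XML format. As a minimal sketch, assuming you have already extracted per-file, per-line hit counts from the VDK's coverage results (the dictionary layout and the function name `to_cobertura` are hypothetical), Python's standard `xml.etree.ElementTree` can render them as a Cobertura-style report. Branch coverage is left at zero for brevity.

```python
import time
import xml.etree.ElementTree as ET


def _rate(covered: int, total: int) -> str:
    # Cobertura stores rates as fractions in [0, 1].
    return f"{(covered / total if total else 0.0):.4f}"


def to_cobertura(hits: dict[str, dict[int, int]], package: str = "firmware") -> str:
    """Render per-file line hit counts as a minimal Cobertura-style XML report.

    `hits` maps a source file name to {line number: execution count}; this
    input layout is a hypothetical intermediate format, not a VDK export.
    """
    total = sum(len(lines) for lines in hits.values())
    covered = sum(1 for lines in hits.values() for n in lines.values() if n > 0)
    root = ET.Element("coverage", {
        "line-rate": _rate(covered, total),
        "branch-rate": "0",
        "lines-covered": str(covered),
        "lines-valid": str(total),
        "branches-covered": "0",
        "branches-valid": "0",
        "complexity": "0",
        "version": "0",
        "timestamp": str(int(time.time() * 1000)),  # milliseconds since the epoch
    })
    packages = ET.SubElement(root, "packages")
    pkg = ET.SubElement(packages, "package", {
        "name": package, "line-rate": _rate(covered, total),
        "branch-rate": "0", "complexity": "0",
    })
    classes = ET.SubElement(pkg, "classes")
    # One <class> element per source file, each listing its executable lines.
    for filename, lines in sorted(hits.items()):
        file_covered = sum(1 for n in lines.values() if n > 0)
        cls = ET.SubElement(classes, "class", {
            "name": filename, "filename": filename,
            "line-rate": _rate(file_covered, len(lines)),
            "branch-rate": "0", "complexity": "0",
        })
        ET.SubElement(cls, "methods")
        lines_el = ET.SubElement(cls, "lines")
        for number, count in sorted(lines.items()):
            ET.SubElement(lines_el, "line", {
                "number": str(number), "hits": str(count), "branch": "false",
            })
    return ET.tostring(root, encoding="unicode")
```

Writing the returned string to a file in the CI workspace (for example a `coverage.xml`, name of your choosing) lets the coverage plugin pick it up like any other Cobertura report, so VDK-based coverage trends appear alongside the rest of the build results.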

You might have missed one rather important point in all of this. Of course, software teams can develop and continuously integrate software on VDK platforms long before the hardware is ready. But because the VDK is also software, they can do so in parallel; they don’t need to plug into hardware prototypes for development or CI testing. That’s rather important when you want to implement CI. Imagine having all the CI infrastructure set up, then having to stand in line for your turn on a hardware prototyper; that would rather defeat the object of the exercise. But you can have as many VDKs running simultaneously as you need; VDKs make CI possible even when the hardware is still under development.
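
Because each VDK runs as an ordinary process, a CI job can fan a regression out across several instances at once. The sketch below uses Python's `concurrent.futures` and `subprocess`; the per-scenario command lines are placeholders you would replace with however your VDK is launched in batch mode, which is an assumption here rather than a documented command.

```python
import subprocess
from concurrent.futures import ThreadPoolExecutor


def run_one(cmd: list[str], timeout: float) -> int:
    """Run one simulation as a child process; return its exit code, or -1 on timeout."""
    try:
        # Output is captured and discarded here; a real job would archive it as a build artifact.
        return subprocess.run(cmd, capture_output=True, text=True, timeout=timeout).returncode
    except subprocess.TimeoutExpired:
        return -1


def run_parallel(jobs: dict[str, list[str]], max_workers: int = 4,
                 timeout: float = 600.0) -> dict[str, int]:
    """Launch the jobs concurrently (at most max_workers at once); map job name to exit code."""
    # Threads are enough here: each thread only waits on its own child process.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {name: pool.submit(run_one, cmd, timeout) for name, cmd in jobs.items()}
        return {name: fut.result() for name, fut in futures.items()}


def all_passed(results: dict[str, int]) -> bool:
    return all(code == 0 for code in results.values())
```

A CI script would typically exit with a nonzero status when `all_passed` returns `False`, which is what causes Jenkins to mark the build as failed. `max_workers` would normally be sized to the CPU cores or simulation licenses available to the CI agent.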

You can read more about how VDKs can support continuous integration HERE.
