The 40-Year Evolution of Hardware-Assisted Verification — From In-Circuit Emulation to AI-Era Full-Stack Validation

For more than a decade, Hardware-Assisted Verification (HAV) platforms have been the centerpiece of the verification toolbox. Today, no serious semiconductor program reaches tapeout without emulation or FPGA prototyping playing a central role. HAV has become so deeply embedded in the development flow that it is easy to assume it has always been this way.

But it wasn’t.

With a bit of slack around the exact starting point, this year marks roughly the 40th anniversary of hardware-assisted verification, broadly inclusive of both hardware emulation and FPGA prototyping. Over four decades, HAV has evolved from a niche approach into the indispensable backbone of modern silicon development. Its history is also, in many ways, a mirror of the semiconductor industry itself: as chips grew more complex, as software took over, and as AI began reshaping everything, HAV repeatedly reinvented its purpose.

Long before HAV became a recognized category, there were precursors. IBM, for example, experimented with hardware acceleration through systems such as the Yorktown Simulation Engine and later the Engineering Verification Engine. These machines were essentially simulation accelerators—special-purpose computers designed to run hardware models faster than traditional software simulators. They represented an important step forward, but they remained fundamentally tied to the simulation paradigm. They improved speed, yet not nearly enough to apply real-world scenarios to a design-under-test.

HAV platforms belong to a different class of engines. Early emulators relied on reprogrammable hardware, typically arrays of FPGAs, configured to mimic the behavior of a design-under-test (DUT). For the first time, engineers could interact with a representation of the chip before tapeout at execution speeds approaching real life. Companies such as PiE Design Systems, Quickturn, IKOS, and Zycad pioneered this new verification approach, laying the foundation for what would become a defining pillar of semiconductor development.

The evolution of HAV can be understood across three broad eras: the early years, when hardware complexity first forced emulation into existence; the middle period, when software became dominant and HAV moved into virtual environments; and the maturity era, where AI workloads have driven hardware prominently to the center of architectural innovation.

The Early Years: Hardware Complexity Forces the Rise of Emulation

In the early 1980s, semiconductor designs were defined almost entirely by hardware. Embedded software, if present at all, played a minor role. The industry was driven by pioneers in processors and graphics chips, pushing toward what seemed then an astonishing milestone: one million gates. Verification relied overwhelmingly on gate-level simulation, which served as the universal standard of the time.

As designs grew larger, however, simulators encountered an unavoidable performance wall. Limited host memory forced designs to be swapped to disk, simulation runtimes ballooned, test vector volumes exploded, and the sheer computational load to achieve acceptable fault coverage became unmanageable. Full-system validation before tape-out was becoming increasingly impractical, if not outright impossible. The industry needed something faster, something closer to silicon.

Hardware-assisted verification emerged as a response to this crisis. Early HAV platforms were deployed primarily in In-Circuit Emulation (ICE) mode. In ICE configurations, the emulator was physically cabled into a live target system, allowing engineers to exercise the DUT in a realistic environment with real peripherals. This was revolutionary. Instead of relying on synthetic vectors, designers could validate chips with real workloads, a level of realism that simulation alone could not achieve. For the first time, verification began to resemble how the chip would behave in the field.

The promise was enormous, but the reality was often painful. Early emulators were complex to set up, finicky to operate, and prone to frequent failures. Long setup times regularly pushed verification readiness past management deadlines, and reliability issues rooted in cabling and hardware dependencies led to frequent downtime. Mean time between failures (MTBF) could be measured in hours rather than weeks or months, forcing verification teams to spend more effort debugging the emulator itself than debugging the DUT. Despite these frustrations, the trajectory was clear: verification could no longer remain purely software-based.

The Middle Ages: Software Eats the World and HAV Becomes the Verification Backbone

The design landscape changed profoundly in the decades that followed, as functionality began migrating steadily from hardware into software code. This shift was famously captured in Marc Andreessen’s 2011 observation that “software is eating the world.” His prediction proved remarkably accurate across nearly every industry touched by computing. SoCs became software-defined platforms, where intelligence increasingly lived in firmware, operating systems, drivers, and application stacks. Hardware was no longer the entire product—it was the substrate for software code.

This transition transformed verification. Static test patterns were no longer capable of exercising the full complexity of modern designs. Instead, engineers turned to software-driven stimulus, using high-level testbenches to validate behavior across broad functional domains. Hardware verification languages and more abstract methodologies emerged to support this new reality.

HAV engines, once used mainly for real-time ICE validation, adapted by becoming the execution engines behind these software-centric environments. The industry formalized the interaction between software testbenches and hardware-mapped DUTs through transaction-based verification, standardized in Accellera's Standard Co-Emulation Modeling Interface (SCE-MI). Rather than toggling signals cycle-by-cycle, engineers could interact with the DUT through high-level transactions, dramatically improving performance and productivity.
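To make the performance argument concrete, here is a minimal Python sketch of why batching stimulus into transactions reduces traffic between the host testbench and the hardware-mapped DUT. It is a toy model only, not the SCE-MI API: the `ToyLink` class, its methods, and the burst size of 64 are hypothetical names and values chosen for illustration, and the only thing it measures is the number of host-to-emulator exchanges.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ToyLink:
    """Hypothetical stand-in for the host-to-emulator channel.

    It only counts how many times the host crosses the link and records
    the bytes that arrive on the hardware side.
    """
    crossings: int = 0
    received: List[int] = field(default_factory=list)

    def send_cycle(self, byte: int) -> None:
        # Signal-level style: one host/emulator exchange per clock cycle.
        self.crossings += 1
        self.received.append(byte)

    def send_transaction(self, payload: List[int]) -> None:
        # Transaction style: one exchange carries a whole message. The loop
        # models a hardware-side transactor unpacking it into per-cycle activity.
        self.crossings += 1
        for byte in payload:
            self.received.append(byte)


def drive_cycle_by_cycle(link: ToyLink, payload: List[int]) -> int:
    """Send every byte as its own exchange; return total link crossings."""
    for byte in payload:
        link.send_cycle(byte)
    return link.crossings


def drive_by_transaction(link: ToyLink, payload: List[int], burst: int) -> int:
    """Send the payload in bursts of `burst` bytes; return total link crossings."""
    for start in range(0, len(payload), burst):
        link.send_transaction(payload[start:start + burst])
    return link.crossings


if __name__ == "__main__":
    data = list(range(256))
    signal_link, txn_link = ToyLink(), ToyLink()
    print("cycle-by-cycle crossings:", drive_cycle_by_cycle(signal_link, data))
    print("transaction crossings:   ", drive_by_transaction(txn_link, data, burst=64))
```

Both paths deliver the same bytes to the hardware side; only the number of link crossings differs. In a real setup the cost of each crossing, and therefore the actual speedup, depends on the platform and the transactor design.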

This shift also removed many of the practical limitations of earlier generations. Virtualized environments reduced reliance on physical connections and eliminated the need for speed adapters required in ICE-based setups. Verification IP replaced hardware interfaces, enabling scalable, fully digital verification ecosystems.

As the industry embraced “shift-left,” HAV platforms emerged as its most powerful enabler. They allowed engineers to bring up software much earlier in the design process, starting with bare-metal initialization and extending through drivers and operating systems. Verification was no longer limited to functional correctness in isolation; it now encompassed the behavior of the complete hardware/software system, long before silicon existed.

By this stage, HAV had become much more than a way to make verification faster. It was increasingly the bridge between hardware and software development, allowing teams to work in parallel rather than in sequence, and establishing hardware-assisted verification as the backbone of modern system validation.

The AI Age: AI Restores Hardware Centrality and HAV Goes Full-Stack

From the mid-2010s onward, the industry entered a new era, driven by the explosive rise of artificial intelligence. The narrative evolved beyond software eating the world into something more radical: software consuming the hardware. In the AI age, software does not simply run on hardware; rather, it increasingly dictates what hardware must become. The demands of modern AI models are so extreme that they are reshaping processor architectures from the ground up.

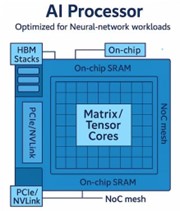

Generative AI exposed the limits of traditional, general-purpose architectures. The enormous data movement requirements and computational intensity of modern models overwhelmed CPUs and strained even highly optimized systems. To keep pace, the industry embraced specialized architectures, including GPUs, FPGAs, and purpose-built AI accelerators designed around massive parallelism and tensor computation.

These developments dramatically increased the scale and complexity of SoC designs. AI-era chips routinely contain billions of gates, heterogeneous compute clusters, and sophisticated networks-on-chip (NoCs) to move data efficiently between processing elements. See Figure 1.

In this context, HAV has taken on a fundamentally expanded role.

Verification is no longer confined to functional correctness in sub-billion-gate designs; it must now scale to multi-billion-gate systems and address far more than logic alone, and even more than the software stack. Today’s HAV platforms are increasingly used to evaluate power and thermal behavior, analyze performance, validate safety and security requirements, capture full system-level interactions, and run realistic workloads that reflect how the design will operate in the real world.

At the same time, ICE-style operation matured into a critical engineering capability rather than a legacy mode. This is especially true in FPGA-based prototyping, where real physical interfaces must be validated at speed before committing a design to manufacturing. By allowing the design-under-test to interact with actual PHY hardware early in the cycle, ICE enables teams to uncover integration, timing, and signal integrity issues that purely virtual environments cannot expose. In doing so, it strengthens both hardware and software confidence long before tape-out.

Just as importantly, AI hardware cannot be separated from its software ecosystem. Compilers, runtimes, libraries, kernels, and deployment frameworks are no longer afterthoughts; they are inseparable from whether the hardware succeeds or fails. HAV platforms are therefore used to run real AI workloads well before silicon exists, ensuring that hardware and software evolve together rather than sequentially. The feedback loop between architecture and execution is increasingly closed before tape-out, not after first silicon.

In this sense, verification in the AI age has become truly full-stack. HAV is no longer just a tool for validation; it is now the environment where hardware/software co-design converges, enabling what might be called a new paradigm: software-driven tape-out.

Conclusion: HAV as the Engine of Hardware–Software Convergence

After four decades of evolution, the semiconductor industry has come full circle. Hardware complexity initially drove the rise of emulation as a practical necessity. The software era that followed expanded HAV’s role, connecting it to virtual environments, richer software stacks, and broader system-level workflows. Today, the AI revolution is once again reshaping the landscape, restoring hardware to the center of innovation and demanding unprecedented levels of specialization, efficiency, and scale.

What has changed most fundamentally, however, is the meaning of that centrality. Hardware is no longer designed first and programmed later. The defining trend of this decade is software-defined design, where architecture is shaped as much by compilers, runtimes, and workloads as by transistors, interconnects, and logic structures. The boundary between hardware and software has blurred into a single, tightly coupled engineering problem.

HAV platforms sit precisely at this intersection. They can no longer be viewed as tools for checking correctness in isolation. Instead, they have become essential environments for validating architectural intent—where hardware meets real software, where performance assumptions are tested under realistic workloads, and where system-level trade-offs are exposed while meaningful design changes are still possible. In the era of software-driven tape-out, HAV is the mechanism that closes the loop before silicon exists.

Hardware is once again the center of the universe, not because software has become less important, but because software has become so important that it now defines the hardware itself.

The metrics of success have shifted accordingly. Choosing an HAV platform is no longer simply a matter of how many gates can be verified for functional correctness. It is now about whether the full spectrum of software-driven use cases can be executed, analyzed, and optimized before tape-out. In this new era, hardware is not just the foundation beneath software innovation; it has become one of its primary engines.
