I have always been curious about how Austemper-based safety methodologies (from Siemens EDA) compare with conventional safety flows. Siemens EDA together with Rambus recently released a white paper on getting a root of trust IP to ASIL B certification. It offers revealing insight that goes beyond the basics of fault simulation, into a detailed fault campaign on an industrial-scale IP. Siemens EDA and Rambus describe using the Austemper toolset on the Rambus RT-640 root of trust IP, and the steps they went through to achieve the functional safety metrics required for ASIL B certification.

The Austemper toolkit

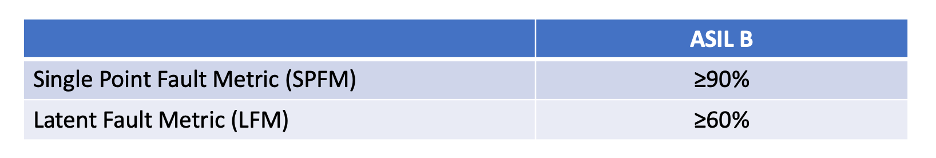

In a typical FMEDA flow you would first use spreadsheets and engineering judgment to decide where to insert safety mitigation techniques in an RTL design. You would then run a fault campaign, using fault simulation to determine how effectively those mitigations work, as measured against the metrics required for the target ASIL. Reaching targets such as those for ASIL B could take many lengthy iterations of this loop.
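For readers who haven’t lived with these numbers: the ASIL targets in question are the ISO 26262 hardware architectural metrics, chiefly the single-point fault metric (SPFM) and the latent fault metric (LFM). Below is a minimal Python sketch of both formulas, checked against the standard’s thresholds (ASIL B needs SPFM ≥ 90% and LFM ≥ 60%). The `FailureRates` fields and the FIT values are illustrative assumptions, not numbers from the white paper, and this is not how any Siemens tool computes them.

```python
from dataclasses import dataclass

# ISO 26262-5 targets per ASIL: (minimum SPFM, minimum LFM).
TARGETS = {"B": (0.90, 0.60), "C": (0.97, 0.80), "D": (0.99, 0.90)}


@dataclass
class FailureRates:
    """Hypothetical FMEDA roll-up; all values in FIT."""
    total: float         # all safety-related hardware failure rates
    single_point: float  # single-point faults (not covered by any safety mechanism)
    residual: float      # residual faults (the portion a safety mechanism misses)
    latent_mpf: float    # latent multiple-point faults


def spfm(r: FailureRates) -> float:
    """Fraction of the failure rate not attributable to single-point or residual faults."""
    return 1.0 - (r.single_point + r.residual) / r.total


def lfm(r: FailureRates) -> float:
    """Latent fault metric, taken over what remains after single-point and residual faults."""
    return 1.0 - r.latent_mpf / (r.total - r.single_point - r.residual)


def meets(r: FailureRates, asil: str) -> bool:
    """True if both metrics clear the targets for the given ASIL (B, C or D)."""
    spfm_min, lfm_min = TARGETS[asil]
    return spfm(r) >= spfm_min and lfm(r) >= lfm_min


# Illustrative numbers only, not from the Rambus RT-640 campaign.
rates = FailureRates(total=100.0, single_point=2.0, residual=5.0, latent_mpf=20.0)
```

With these made-up rates, SPFM is 93% and LFM is about 78%, which clears ASIL B but not ASIL C.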

In the Austemper flow, SafetyScope estimates FIT rates and FMEDA metrics and suggests a preliminary fault list before safety insertion. It can be run again after fault simulation to produce a summary report with final metrics and detection coverage. Kaleidoscope runs the fault simulation, categorizing each fault as detected, not detected, not triggered, or not observed (at an observation point).
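As a rough illustration of those four categories, here is a hypothetical Python classifier that compares a fault-free run against a faulty one using per-cycle traces. The trace format and the `classify` function are my assumptions, not Kaleidoscope’s interface; so is the precedence, in which an alarm counts as a detection even if the output was never corrupted.

```python
from enum import Enum
from typing import Sequence


class FaultClass(Enum):
    DETECTED = "detected"            # safety mechanism raised its alarm
    NOT_DETECTED = "not detected"    # output corrupted with no alarm: an escape
    NOT_TRIGGERED = "not triggered"  # stimulus never activated the fault
    NOT_OBSERVED = "not observed"    # activated, but never reached an observation point


def classify(good_out: Sequence[int], faulty_out: Sequence[int],
             alarm: Sequence[bool], activated: Sequence[bool]) -> FaultClass:
    """Classify one fault from per-cycle traces (hypothetical format).

    good_out / faulty_out: observed output values per cycle, fault-free vs faulty run.
    alarm: safety alarm per cycle in the faulty run.
    activated: per cycle, whether the faulted node differed from its fault-free value.
    """
    if not any(activated):
        return FaultClass.NOT_TRIGGERED
    if any(alarm):
        return FaultClass.DETECTED
    if all(g == f for g, f in zip(good_out, faulty_out)):
        return FaultClass.NOT_OBSERVED
    return FaultClass.NOT_DETECTED
```

For example, a fault that flips an output in one cycle with no alarm comes back as `NOT_DETECTED`, which is exactly the escape a safety campaign is hunting for.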

Faults modeled in the analysis

Following the standard, the Austemper flow models three types of faults:

Transient. As the name suggests, these are temporary faults, which may result from cosmic rays, electromagnetic interference, or other transitory stimuli. The flow runs a quick pseudo-synthesis to find state elements and puts these into the fault list. During analysis, such a fault is enabled at the outset and stays active until the end of a time window, after which it is removed. The length of the window is configurable.

Permanent. These are lasting faults, which may result from design errors, configuration errors, deadlocks, or other influences that can create a stuck state. Candidates include both state and non-state elements, modeled as stuck-at-0 or stuck-at-1 values just as in DFT analyses. These faults persist throughout a fault simulation.

Latent. These faults are very tricky to find and to mitigate because they cause a failure only in combination with one or more other faults, especially when one of those faults sits in the safety mitigation logic. Austemper models a latent fault as one stuck-at in the functional logic plus one in the corresponding safety logic. (Latent faults that depend on three or more simultaneous failures have very low probability.)
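To make the three fault models concrete, here is a toy Python sketch: an 8-bit register protected by a duplicate shadow register and a compare-based alarm. The design, the signal names, and the choice to model the transient as a bit flip are all hypothetical. The point is only to show a transient that is active for a window from the start of the run, a stuck-at that persists for the whole run, and a latent pair with a second stuck-at in the safety logic.

```python
from typing import Callable, List, Optional, Tuple

# A fault maps (signal name, cycle, fault-free value) -> value the design actually sees.
Fault = Callable[[str, int, int], int]


def bit_fault(signal: str, bit: int, mode: str, until: Optional[int] = None) -> Fault:
    """Corrupt one bit of `signal` from cycle 0 up to (not including) `until`.

    mode: 'sa0' / 'sa1' (stuck-at) or 'flip'; until=None keeps the fault active forever.
    """
    mask = 1 << bit

    def fault(sig: str, cycle: int, val: int) -> int:
        if sig != signal or (until is not None and cycle >= until):
            return val
        if mode == "sa0":
            return val & ~mask
        if mode == "sa1":
            return val | mask
        return val ^ mask  # 'flip'

    return fault


def simulate(inputs: List[int], faults: List[Fault]) -> Tuple[List[int], List[int]]:
    """Hypothetical design: register `data` captures each input, a duplicate `shadow`
    register does the same, and `alarm` fires when the two disagree."""
    def apply(sig: str, cycle: int, val: int) -> int:
        for f in faults:
            val = f(sig, cycle, val)
        return val

    outputs, alarms = [], []
    for cycle, x in enumerate(inputs):
        data = apply("data", cycle, x)
        shadow = apply("shadow", cycle, x)
        alarm = apply("alarm", cycle, int(data != shadow))
        outputs.append(data)
        alarms.append(alarm)
    return outputs, alarms


inputs = [0x00, 0x0F, 0xF0, 0xFF]
transient = [bit_fault("data", 0, "flip", until=2)]  # active for a 2-cycle window
permanent = [bit_fault("data", 0, "sa0")]            # stuck-at-0 for the whole run
latent = permanent + [bit_fault("alarm", 0, "sa0")]  # plus a stuck-at in the safety logic
```

Here `simulate(inputs, transient)` returns `([1, 14, 240, 255], [1, 1, 0, 0])`: the flip is caught during its two-cycle window. The permanent stuck-at-0 raises the alarm only in cycles where bit 0 should be 1, while the latent pair corrupts the same outputs with the alarm silent throughout, which is why a second fault in the safety logic is so dangerous.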

Practical considerations in the fault campaign

Fault simulation of many faults over a large circuit can consume a huge amount of time without careful planning. The Siemens and Rambus guys describe several techniques they used to keep this manageable.

First, they don’t always work with the full fault list. They strategically evaluate subsets of faults at different stages to slim down the set before tackling the hardest cases. For example, they first analyze faults around known safety-critical areas. Then they (temporarily) reduce the fault-tolerant time interval (FTTI) to identify faults which can be detected quickly. With similar intent, they temporarily treat sequential elements as observation points, allowing them to filter out any faults which reach a primary output without triggering an alarm.

This ultimately leaves them with a subset of undetected faults which must be analyzed over the full FTTI to determine whether any escape to an output without raising an alarm. These are the most expensive to evaluate, since they can fan out through multiple cycles, creating multiple simultaneously active faulty traces before ultimately registering as detected or otherwise.
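The payoff of shortening the FTTI first shows up even in a small sketch. The hypothetical `staged_campaign` below runs every fault for a short window, then re-runs only the survivors for the full FTTI, counting simulated cycles as a crude cost proxy. The `run` callback and the detection latencies are assumptions for illustration, and a real simulator might checkpoint rather than restart the survivors from scratch.

```python
from typing import Callable, Dict, List, Optional, Tuple


def staged_campaign(
    faults: List[str],
    run: Callable[[str, int], bool],
    short_ftti: int,
    full_ftti: int,
) -> Tuple[List[str], List[str], int]:
    """Two-pass fault campaign (hypothetical).

    run(fault, cycles) simulates one fault for `cycles` cycles and returns True
    if the safety alarm fired. Returns (detected, undetected, cycles_simulated).
    """
    detected: List[str] = []
    pending: List[str] = []
    cost = 0
    # Pass 1: shortened FTTI cheaply removes faults that are detected quickly.
    for f in faults:
        cost += short_ftti
        (detected if run(f, short_ftti) else pending).append(f)
    # Pass 2: only the survivors are simulated over the full FTTI.
    undetected: List[str] = []
    for f in pending:
        cost += full_ftti
        (detected if run(f, full_ftti) else undetected).append(f)
    return detected, undetected, cost


# Hypothetical detection latencies in cycles (None = never detected).
latency: Dict[str, Optional[int]] = {"f0": 3, "f1": 5, "f2": 40, "f3": None}


def run(fault: str, cycles: int) -> bool:
    """Stand-in for a fault simulation: alarm fires if latency fits in the window."""
    lat = latency[fault]
    return lat is not None and lat <= cycles
```

With a short window of 10 cycles and a full FTTI of 100, `staged_campaign(list(latency), run, 10, 100)` returns `(['f0', 'f1', 'f2'], ['f3'], 240)`, against 400 cycles for running all four faults over the full FTTI.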

Fault simulation depends on stimulus vectors, which may not trigger a fault, or may trigger it without it either raising an alarm or being observed at a primary output. They consider these faults unclassified. Improving the stimulus may help, but there are limits to that option for a heavily parallelized software fault simulation.

They suggest a few options to reduce the number of unclassified faults. In bus simplification, they assert that if a fault in one bit is detected, then all bits get the same classification. They make a similar assertion for duplicated instances of a module: if all faults within one instance are successfully classified, then the corresponding faults in the other instances are also deemed classified. Finally, they set an empirical threshold for the number of stimuli against which they test, a level at which they feel they have tried “hard enough”. Arbitrary, yes, but I don’t know how I would do any better.
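The two simplification rules can be sketched in Python as passes over a table of fault classes. The key layout (module, instance, signal, bit), the use of `None` for an unclassified fault, and picking the first fully classified instance as the reference are all hypothetical choices of mine, not details from the white paper. The stimulus threshold is left out, since it is just a cap on the simulation loop.

```python
from collections import defaultdict
from typing import Dict, Optional, Tuple

# Hypothetical fault key: (module type, instance name, signal, bit index).
Key = Tuple[str, str, str, int]
Classes = Dict[Key, Optional[str]]  # None means "still unclassified"


def bus_simplification(c: Classes) -> Classes:
    """If any bit of a bus is detected, give its unclassified bits the same class."""
    detected_buses = {k[:3] for k, v in c.items() if v == "detected"}
    return {k: ("detected" if v is None and k[:3] in detected_buses else v)
            for k, v in c.items()}


def instance_simplification(c: Classes) -> Classes:
    """Copy classes from a fully classified instance to other instances of the same module."""
    by_instance: Dict[Tuple[str, str], Dict[Tuple[str, int], Optional[str]]] = defaultdict(dict)
    for (mod, inst, sig, bit), v in c.items():
        by_instance[(mod, inst)][(sig, bit)] = v
    # Per module type, the first instance (by name) whose faults are all classified.
    reference: Dict[str, Dict[Tuple[str, int], Optional[str]]] = {}
    for (mod, inst), faults in sorted(by_instance.items()):
        if mod not in reference and all(v is not None for v in faults.values()):
            reference[mod] = faults
    out = dict(c)
    for (mod, inst, sig, bit), v in c.items():
        ref = reference.get(mod, {})
        if v is None and (sig, bit) in ref:
            out[(mod, inst, sig, bit)] = ref[(sig, bit)]
    return out


faults: Classes = {
    ("alu", "u0", "sum", 0): "detected",
    ("alu", "u0", "sum", 1): None,
    ("fifo", "f0", "ptr", 0): "detected",
    ("fifo", "f0", "ptr", 1): "not observed",
    ("fifo", "f1", "ptr", 0): None,
    ("fifo", "f1", "ptr", 1): None,
}
result = instance_simplification(bus_simplification(faults))
```

In this example bus simplification fills in bit 1 of `u0.sum`, and instance simplification then copies the classes of the fully classified `f0` onto `f1`, leaving nothing unclassified.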

Nice paper. You can read it HERE.
