Even as Honda Motors puts a so-called Level 3 semi-autonomous vehicle on the road in Japan – 100 of them to be exact – the outrage grows over semi-autonomous vehicle tech requiring driver vigilance. Tesla Motors and General Motors have taken this plunge, creating a driving scenario where drivers – under certain circumstances – can take their hands off the steering wheel as long as they are still paying attention.

For some, allowing the hands-off proposition requires the system designer to define a so-called operational design domain (ODD). This appropriately named concept describes the circumstances under which semi-automated driving is acceptable and the functional capability it must provide.

The ODD concept is raised in the UNECE ALKS (Automated Lane Keeping System) regulation, which defines the enhanced cruise control functionality and the systems and circumstances that make it allowable – including a “driver availability recognition system.” Critics have begun speaking up. The latest is Mobileye CEO Amnon Shashua.

In a blog post, Shashua asserts that all of these developments represent examples of “failure by design.” By allowing vehicles to drive under particular circumstances, the offending designers are setting the stage for catastrophic Black Swan events because the ODD does not provide for evasive maneuvers, only braking.

It’s worth noting that the main Black Swan scenario envisioned by Shashua is an autonomous car following another car: the leading car swerves to avoid an obstruction, and the following autonomous vehicle cannot react fast enough to avoid it. Ironically, one of Shashua’s proposed solutions to this problem is to ensure that “the immediate response (of the robotic driver) should handle crash avoidance maneuvers at least at a human level.”

The irony is that in the circumstance of a robotic driver following another car, the robotic driver may in fact be able to respond more rapidly than the human driver. So why should human driving be the standard in all cases? There are clearly circumstances where robotic driving will be superior – too numerous to list here.

Let’s review the UNECE ALKS ODD requirements for semi-autonomous driving – what the Society of Automotive Engineers describes as Level 3 automation:

The Regulation requires that on-board displays used by the driver for activities other than driving when the ALKS is activated shall be automatically suspended as soon as the system issues a transition (from robot driver to human) demand, for instance in advance of the end of an authorized road section. The Regulation also lays down requirements on how the driving task shall be safely handed back from the ALKS to the driver, including the capability for the vehicle to come to a stop in case the driver does not reply appropriately.

The Regulation defines safety requirements for:

- Emergency maneuvers, in case of an imminent collision;

- Transition demand, when the system asks the driver to take back control;

- Minimum risk maneuvers – when the driver does not respond to a transition demand, in all situations the system shall minimize risks to safety of the vehicle occupants and other road users.
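One way to read these three requirements is as a small state machine. The sketch below is only an illustration of that reading, not the Regulation's own logic: the mode names, the `step` function, its inputs, and the `TAKEOVER_TIMEOUT_S` value are all hypothetical choices made for the example.

```python
from enum import Enum, auto


class Mode(Enum):
    ACTIVE = auto()                 # system is driving inside its ODD
    TRANSITION_DEMAND = auto()      # system has asked the driver to take over
    MINIMUM_RISK_MANEUVER = auto()  # driver did not respond; bring vehicle to a stop
    EMERGENCY_MANEUVER = auto()     # imminent collision detected
    MANUAL = auto()                 # human driver is in control


# Hypothetical grace period for the driver to respond (illustrative value only).
TAKEOVER_TIMEOUT_S = 10.0


def step(mode, *, imminent_collision=False, leaving_odd=False,
         driver_took_over=False, seconds_since_demand=0.0):
    """Return the next mode for one evaluation cycle (simplified sketch)."""
    if mode is Mode.MANUAL or driver_took_over:
        # Once the human has control, this sketch never takes it back.
        return Mode.MANUAL
    if imminent_collision:
        return Mode.EMERGENCY_MANEUVER
    if mode is Mode.ACTIVE and leaving_odd:
        # For example, approaching the end of an authorized road section.
        return Mode.TRANSITION_DEMAND
    if (mode is Mode.TRANSITION_DEMAND
            and seconds_since_demand >= TAKEOVER_TIMEOUT_S):
        return Mode.MINIMUM_RISK_MANEUVER
    return mode
```

Written this way, the ODD appears as a single input (`leaving_odd`): everything the system is permitted to do hangs on that boundary, which is the point the critics press on.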

“The Regulation includes the obligation for car manufacturers to introduce Driver Availability Recognition Systems. These systems control both the driver’s presence (on the driver’s seats with seat belt fastened) and the driver’s availability to take back control (see details below).”

So the UNECE is very specific about when its ALKS ODD applies. In a recent SmartDrivingCar podcast, hosted by Princeton Faculty Chair of Autonomous Vehicle Engineering Alain Kornhauser, Kornhauser complains that the average driver is unlikely to either study or comprehend the ODD or the related in-vehicle user experience – i.e. settings, displays, interfaces, etc.

For Kornhauser, the inability of the driving public to understand the assisted driving proposition of semi-autonomous vehicle operation renders the entire proposition unwise and dangerous. He also appears to assert that additional sensors are necessary to avoid the kind of crashes that have continued to bedevil Tesla Motors: i.e. Tesla vehicles on Autopilot driving under tractor trailers situated athwart driving lanes, and certain highway crashes with stationary vehicles.

What Shashua and Kornhauser fail to recognize is that Tesla has actually brought to market an entirely new driving experience. While Tesla has clearly identified appropriate driving circumstances for the use of Autopilot, the company has also introduced an entirely new collaborative driving experience.

A driver using Autopilot is indeed expected to remain engaged. If the driver fails to respond to the vehicle’s periodic and frequent requests for acknowledgement, Autopilot will be disengaged. Repeated failures of driver response will render Autopilot unavailable for the remainder of that day.
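The behavior described here amounts to a simple strike counter. The following is a minimal sketch of that idea, not Tesla's implementation: the `EngagementMonitor` class, its method names, and the `max_strikes` threshold are hypothetical, since the actual number of permitted failures is not stated here.

```python
from dataclasses import dataclass


@dataclass
class EngagementMonitor:
    max_strikes: int = 3   # hypothetical threshold, for illustration only
    strikes: int = 0       # missed acknowledgements so far today
    engaged: bool = False  # whether assistance is currently active

    @property
    def locked_out(self) -> bool:
        """True once assistance is unavailable for the rest of the day."""
        return self.strikes >= self.max_strikes

    def engage(self) -> bool:
        """Try to switch assistance on; refused while locked out."""
        if self.locked_out:
            return False
        self.engaged = True
        return True

    def on_prompt(self, driver_responded: bool) -> None:
        """Handle one acknowledgement request sent to the driver."""
        if not self.engaged or driver_responded:
            return
        # A missed prompt disengages assistance and records a strike.
        self.engaged = False
        self.strikes += 1

    def new_day(self) -> None:
        """Reset the strike count at the start of a new day."""
        self.strikes = 0
```

The design choice worth noticing is that the penalty escalates: one lapse costs the current session, while repeated lapses cost the rest of the day.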

More significantly, while appropriately equipped Teslas are able to recognize traffic lights and, in some cases, the phase of the signal, a Tesla approaching a signalized intersection defaults to slowing down – even if the light is green – and requires the driver to acknowledge the request for assistance. The Tesla will only proceed through the green light after being advised to do so by the human driver.

This operation and engagement occur once the driver has chosen the appropriate vehicle settings and may not require that the driver understand the vehicle’s operational design domain. When properly activated, the traffic light recognition system introduces the assistance of a “robot driver” that is humble enough to request assistance.

Shashua’s concern is that a too-narrowly defined ODD, one that does not provide for evasive maneuvers, is a failure by design. But appropriately equipped Teslas are capable of evasive maneuvers. In fact, appropriately equipped Teslas in Autopilot mode are capable of passing other vehicles on highways without human prompting. It’s not clear how well these capabilities are understood by the average Tesla owner/driver.

The problem lies in the messaging of Tesla Motors’ CEO, Elon Musk. Musk has repeatedly claimed – for five years or more – to be on the cusp of fully automated driving. Musk insists all of the vehicles the company is manufacturing today are possessed of all the hardware necessary to enable full self driving – ultimately setting the stage for what he sees as a global fleet of robotaxis.

The sad reality is that these claims of autonomy have displaced a deeper consumer understanding of what Tesla is actually delivering. Tesla is delivering a collaborative driving experience which provides driver assistance in the context of a vigilant and engaged driver. But Musk is SELLING assisted driving as something akin to fully automated driving.

This is where the Tesla story unravels. Current owners who understand this proposition and choose not to abuse it view Musk as a visionary, a genius, who has empowered them with a new driving experience.

Competitors of Tesla, regulators, and non-Tesla owning consumers are angry, intrigued, or confused. Some owners may even be outraged at the delta between the promise of Autopilot – as seen and heard in multiple presentations and interviews with Musk – and the reality.

To add insult to injury, those drivers who have suffered catastrophic crashes in their Teslas – some of them fatal – have discovered Musk’s willingness and ability to turn on his own customers and blame them and their bad driving behavior for those crashes – some of which appear to be failures of Autopilot. This is the critical issue.

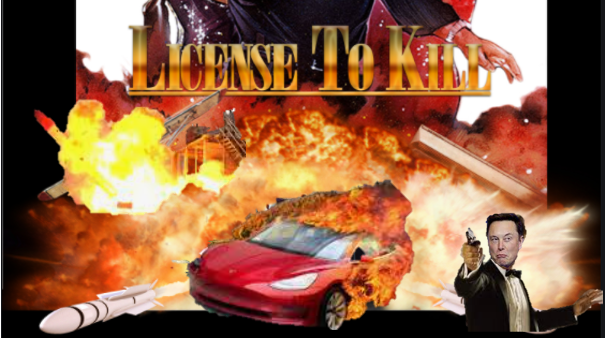

Musk is essentially using his own ODD definition to absolve Tesla of any responsibility for bad choices made by Tesla owners – or even for misunderstandings regarding the capability of Autopilot. As a result, Musk’s marketing has indeed given Tesla a license to kill by enabling ambiguous or outright misleading marketing information regarding Autopilot to proliferate and persist.

The collateral impact of this may well be insurance companies that refuse to pay claims based on drivers violating their vehicle’s end user licensing agreement – the fine print no one pays attention to. Musk is muddling the industry’s evolution toward assisted driving even as he is pioneering the proliferation of driver-and-car collaborative driving.

Can Tesla and Musk be stopped? Should they be stopped? How many more people will die in the gap that lies between what Autopilot is intended to do and what drivers think it is capable of? How many is too many?

The saddest part of all is that Musk is an excellent communicator, so there is no question that he knows precisely what he is doing. That somehow seems unforgivable.
