Google’s Project Tango is a prime example of a sophisticated application pushing the boundaries of what is possible within the power envelope of a mobile device. Its objective is to combine 3D motion tracking with depth sensing to understand how a device is moving and gauge its surroundings precisely. Continue reading “DSP gives Project Tango a power dip”

The "Great Acceleration"

The “Great Acceleration” is upon us. On all fronts, technology is advancing at an ever-accelerating rate. The drivers include improvements in education and training; more powerful and capable hardware and software; easier access to information (Google, technical publications, etc.); better prototyping techniques (3D printing, and sophisticated design and test software for everything from software to semis to physical objects and devices); and more people working on everything from every angle, with the net providing collaboration and offloading of just about any task. Couple all this with an ever-increasing number of ways to raise capital and assemble talent, and you get to where we are now: rapid acceleration of any task we can imagine, including the very problems acceleration creates.

All this is leading to the ability to have everything in abundance if we so choose. The problem is that most individuals, social structures and governments can’t keep up with this fundamental change to abundance, leading to increased instability and dislocation. The semi and software sectors are leading the way and are advancing so much faster than everything else that we have dislocations which, while creating great things, also create great instability and damage. Organizations, companies, investors and individuals that master this will do well, while many will suffer from the dislocations and instability.

To take full advantage of the opportunities before us will require changes not only in us, but in all the organizations we deal with. A Gallup poll done in September found 75% of all adults consider corruption pervasive throughout our government, and justly so. Sadly, much of this is just a failure to adapt to the accelerating changes. It is not just government that has problems but our very social structure, for government is just a reflection of a society that is still based on the slower-moving progress of the past.

Since the semi sector is among the leaders in accelerating change, we can no longer work in a vacuum, indifferent to the world around us. With knowledge and power goes responsibility, and to forget this will have dire consequences for everyone. When we are at the forefront of change and forget the consequences and applications of what we do, we can literally sink the ship. It is at this point we must remember: “When the ship sinks, first class goes down too”.

Technology can bring one quality that will help everyone: transparency, which is the key to honesty and integrity. Transparency also has the awesome power to solve problems by making them apparent. It is critical that transparency is maintained so we can solve problems before they become dangerous. Already it is easy to see where new forms of finance have put many people and governments in dangerous straits. Many things can now become obsolete well before the financing to pay for them is completed. There is nothing we can’t solve, and the semi sector can and will play a key part in the solutions, many of which are already well under development. Below is a brief partial list of examples of solutions under way.

Energy: solar in many forms, wind, underwater turbines, nuclear, stripping hydrogen from hydrocarbons, advanced fracking for not only oil/gas but geothermal

Food: advanced forms of algae and microbes that already produce 40 times what current agricultural land produces, with the potential of 700 times. We might not eat this, but most food is grown for animal feed. This would solve food, land and water problems.

Water: advanced desalinization, recycling of waste water to drinking quality

Transportation: ultra efficient cars, ships, planes and trains

Medical: vastly lowered costs and increased productive life span, currently being attacked from almost every vector

Robotics/AI: will open up the oceans with vast resources of all types, improve and lower education costs, free us up for far more productive and pleasurable pursuits and greatly leverage our labor (creating more dislocation and challenges)

Efficiency: new glass, numerous new engines, hybrid systems, new insulating materials, new reflective surfacing coatings, etc. etc., but most of all in all processes from creation to production to distribution to use and disposal.

Transparency and flexibility will be key if we are going to deal with these changes without massive social conflict. With semiconductors at the heart of much of this, this is the place to start. These concerns should be taken into account from the inception of any new technology, which is just another form of power and should be treated and respected as such, for technology without wisdom can be very dangerous. The semi/MEMS sector is the leader in acceleration, so it is up to us to ask these critical questions and develop solutions.

A Synergistic Chip-Package-System Analysis Methodology

Looking back, 2015 was a significant year for mergers and acquisitions in the EDA industry. The Semiwiki team maintains a chronology of major transactions here.

As I was reviewing this compendium, one of the entries that stands out is the acquisition of Apache Design Solutions by Ansys, Inc. a couple of years ago.

At that time, there was some uncertainty expressed as to the synergy between the two firms, one being rather system-centric and the other focused on the analysis challenges of deep submicron chip designs. Many assumed that Apache would be an acquisition target of the purple, red, or green EDA company, rather than Ansys.

Ansys is a premier supplier of package, board, and system-level tools for electromagnetic and mechanical analysis. The HFSS finite-element analysis and SiWave simulation tools are the gold standards for extraction and simulation of complex time- and frequency-domain characteristics of package/board traces, for signal integrity and power integrity (SI/PI) loss assessment. As SerDes channel data rates continue to be pushed aggressively by system designers, the required accuracy of the SI models necessitates the precise models available from HFSS. The Ansys Multiphysics tool suite provides several (coupled) analysis capabilities:

- mechanical analysis of materials stress and deformation to evaluate attach and assembly reliability

- electromagnetic propagation analysis for EMI compliance, and

- thermal modeling to ensure reliable system cooling

As a startup in the early 2000’s, Apache developed the leading RedHawk toolset for chip-level IR voltage drop and electromigration analysis. The on-chip power domain management techniques that were rapidly being adopted demanded a more sophisticated IR and EM analysis methodology. The dynamic nature of power domain cycling to different cores and IP on-chip necessitated more focus on RLC extraction and local switching activity measures, including power-gating related current transients. Yet, the separation of chip and package/board analysis resulted in the use of simplistic “budgets” for higher-level losses for chip signoff.

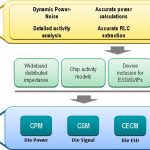

Since the acquisition, the synergy of the Ansys-Apache combination has become very evident, as the merged resources have continued to refine a comprehensive chip-package-system (CPS) modeling and analysis methodology, including:

- power distribution network (PDN) analysis

- simultaneous I/O switching activity analysis

- EMI analysis

- chip I/O electrostatic discharge analysis

- thermal analysis

The key to this approach is the generation of various chip abstract models, which are promoted and integrated into the package/system analysis framework. The following two figures illustrate the CPS analysis strategy.

This figure illustrates how the abstracts are incorporated into package/board analysis.

As an example, the power integrity analysis methodology focuses on traditional chip power grid sign-off, including identification of any local switching hotspot issues. A sophisticated RLC model of the chip and package power distribution network is then incorporated into the Chip Package Model (CPM) abstract. The CPM includes pad-level switching currents and capacitances, as well. The “chip-aware” board power distribution analysis incorporates the CPM. Using SiWave, the system designer can now optimize pin assignment, board power plane definition, decoupling capacitor selection and placement, and integration of the voltage regulator module(s).
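To make the idea of an RLC abstract concrete, the sketch below models a chip-package power distribution network as two lumped branches in parallel and locates the chip-package resonance. This is only a back-of-the-envelope illustration: the function names and all component values are hypothetical, and a real CPM is generated by the tools and is far more detailed than a two-branch circuit.

```python
import math

def pdn_impedance(freq_hz, r_pkg, l_pkg, r_die, c_die):
    """Impedance seen by the on-die current source in a lumped PDN model.

    The package branch (R and L in series) sits in parallel with the
    on-die decoupling branch (R and C in series).
    """
    w = 2 * math.pi * freq_hz
    z_pkg = complex(r_pkg, w * l_pkg)
    z_die = complex(r_die, -1.0 / (w * c_die))
    return (z_pkg * z_die) / (z_pkg + z_die)

def resonance_hz(l_pkg, c_die):
    """Chip-package resonance of the ideal (lossless) LC pair."""
    return 1.0 / (2 * math.pi * math.sqrt(l_pkg * c_die))

# Hypothetical values, chosen only for illustration.
R_PKG, L_PKG = 1e-3, 50e-12    # 1 mOhm, 50 pH of package inductance
R_DIE, C_DIE = 2e-3, 100e-9    # 2 mOhm, 100 nF of on-die capacitance

f0 = resonance_hz(L_PKG, C_DIE)
for f in (f0 / 10, f0, f0 * 10):
    z = abs(pdn_impedance(f, R_PKG, L_PKG, R_DIE, C_DIE))
    print(f"{f / 1e6:8.1f} MHz  |Z| = {z * 1e3:.2f} mOhm")
```

The impedance peak near the resonance is what board-level choices such as decoupling capacitor selection and placement try to flatten, which is why the board analysis benefits from knowing the chip-side R, L and C rather than a fixed budget.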

For signal integrity (and simultaneous switching) analysis, the Chip Signal Model (CSM) abstract is used. The CSM methodology extends the traditional IBIS model approach to include I/O pad ring parasitics, and significantly, the I/O power noise impact on circuit behavior.

For electrostatic discharge reliability analysis, the wafer foundry’s design enablement team will provide ESD design guidelines and related rule checks to IP developers focused on SerDes, memory interface, and general purpose I/O circuits. However, customers may have unique robustness requirements for HBM, MM, and CDM discharge events, outside the characterization range used by the foundry. The Ansys methodology includes generation of the Chip ESD Compact Model (CECM) abstract. Designers can now evaluate ESD protection in a complete system environment model for their application, through the board and package pins.

An analysis methodology that is emerging as crucial to circuit, signal, and system reliability is the thermal profile of the chip-package-system implementation. The importance of accurate thermal modeling is magnified by the increasing use of multiple die-in-package offerings – e.g., 2.5D and 3D designs with various interposer and multi-plane signal redistribution layers. The Chip Thermal Model (CTM) abstract is integrated into the larger Ansys thermal analysis toolset, as illustrated below.

Ansys has continued to expand their CPS methodology, incorporating accurate and detailed chip abstract models into their industry-leading package/board-level tool suites. The synergy between the chip and system tools offers a comprehensive modeling environment, which is absolutely critical for SI, PI, EMI, ESD, and thermal reliability analysis.

There is no longer any doubt that the Apache acquisition belongs in the “win-win” column. For more details on the Ansys/Apache CPS methodology, links to technical papers are available here.

-chipguy

Leveraging HLS/HLV Flow for ASIC Design Productivity

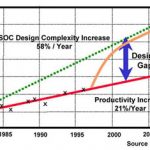

Consider how much semiconductor design sizes leapt with the automation of digital design, which started from standard hardware description languages like Verilog and VHDL; analog design automation is still catching up. However, it took significant effort to move designers from entering schematics to writing RTL, which could be automatically verified and synthesized to a gate-level netlist.

Today, the designs have grown bigger and more complex with a complete system appearing on a chip. It’s a tedious task writing RTL for a complete chip and verifying it with a single verification approach. Design and verification productivity is at stake, which needs urgent improvement through change in design methodologies.

Higher levels of abstraction are possible where the system can be described in higher level languages like ‘C’ or SystemC, which can be simulated several hundred times faster than RTL. This provides a great opportunity for quick architectural exploration at the top level for best PPA (Power, Performance, and Area) with the least investment of resources and time. This novel approach is taking longer than expected to proliferate across the semiconductor design industry. However, there are promising tools and methodologies that are being adopted by leading SoC and IP design companies and deployed in their standard design flows. Let’s review how this is being done and what more needs to be done to bring this approach into the mainstream of SoC design and verification.

Qualcomm, the largest fabless semiconductor company, has established a standardized high-level synthesis (HLS) and high-level verification (HLV) based design flow throughout the company. The flow has been successfully used in several image processing, video processing and other IP of different complexities, and chips have taped out using that IP. This is an outcome of Qualcomm’s multi-year work with Calypto (now Mentor Graphics). Qualcomm also presented their HLS/HLV based methodology at the 52nd DAC.

Mentor’s Catapult® 8 synthesizes C++/SystemC into optimized RTL. Testing the synthesizable C++/SystemC catches bugs more quickly, because the code simulates hundreds of times faster and is typically 1/5th the number of lines of comparable RTL. Much less debugging is then needed on the generated RTL, which accelerates verification downstream and reduces the total verification time by half. The RTL is also power optimized through Mentor’s PowerPro® optimization and analysis technology under the hood in Catapult 8. Verification starts very early in the design cycle. The reference model in C/C++ is refined and then cleaned through SLEC® CPC, the C property checking tool, which detects all static errors in the code and also checks the assertions and cover points set by the user. The HLS model thus produced is readily synthesized into optimized and correct RTL that can go through the downstream design flow. Also, SLEC (Sequential Logic Equivalence Checker) can formally verify the generated RTL against the source C/C++.

A homogeneous system in C/C++ provides quick and extensive architectural exploration and verification closure through HLS/HLV methodology. The test infrastructure used during HLV can be reused during RTL verification, thus improving the productivity of downstream verification flow as well.

Starting with an algorithm, the architecture can be appropriately divided between hardware and software. The hardware can be modelled further into synthesizable and non-synthesizable ‘C’. The algorithmic hardware model thus created is synthesized using Catapult HLS tool and verified in ‘C’. The ‘C’ regression thus created can be complemented with RTL regression during RTL verification.

By making small changes in the code of algorithmic model or in the constraints on the synthesis tool, one can quickly generate new design architecture and compare it with the older ones. This provides an opportunity to quickly iterate over several architectures and determine the best architecture for a design. Also the designs with different power profiles can be reviewed with this approach, which is not possible with traditional RTL flow because power data becomes available very late in the design cycle.
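The comparison step in this kind of exploration can be sketched as a Pareto filter over the candidate architectures: keep every candidate that no other candidate beats on power, latency and area at once. The sketch below is a generic illustration, not part of any Mentor or Qualcomm flow; the `Candidate` type, the candidate names and all the numbers are hypothetical stand-ins for what synthesis reports would supply.

```python
from typing import List, NamedTuple

class Candidate(NamedTuple):
    name: str
    power_mw: float
    latency_cycles: int
    area_um2: float

def dominates(a: Candidate, b: Candidate) -> bool:
    """True if a is no worse than b on every metric and better on at least one."""
    a_m, b_m = a[1:], b[1:]
    no_worse = all(x <= y for x, y in zip(a_m, b_m))
    better = any(x < y for x, y in zip(a_m, b_m))
    return no_worse and better

def pareto_front(cands: List[Candidate]) -> List[Candidate]:
    """Keep only the candidates that no other candidate dominates."""
    return [c for c in cands if not any(dominates(o, c) for o in cands)]

# Hypothetical results from four synthesis runs with different constraints.
runs = [
    Candidate("rolled", 10.0, 64, 1000.0),
    Candidate("unroll4", 18.0, 16, 3200.0),
    Candidate("unroll4_loose", 20.0, 16, 3500.0),
    Candidate("pipelined", 14.0, 20, 2000.0),
]

for c in pareto_front(runs):
    print(c.name, c.power_mw, c.latency_cycles, c.area_um2)
```

Here `unroll4_loose` drops out because `unroll4` matches its latency with less power and area; the remaining three are genuine trade-offs that a designer would choose among.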

Complete verification coverage can be attained in 3 stages: 100% functional/structural coverage during C/SystemC verification, 100% functional coverage during RTL functional verification, and complete structural coverage during RTL structural verification. Any uncovered hole must be determined to be unreachable.

Qualcomm has used an established C codebase for certain designs by simply changing the target library for technologies ranging from 65nm to 14nm. The enhancements and new features can be easily added onto that codebase. It’s clear that the ECO flow is quite fast and efficient. So, the changes can be done quite late in a design cycle.

The HLS/HLV methodology works very efficiently at a higher level (C/C++/SystemC) and complements the downstream RTL flow well. However, design signoff still takes place at RTL and gate level due to limitations in the current HLS toolset and methodology, which works mostly with algorithmic models at the IP level.

While talking with Bryan Bowyer, Director of Engineering at the Calypto Systems Division of Mentor Graphics, I learnt that they are working with partners to make SLEC more generic in checking equivalence between ‘C’ and RTL. Also, Mentor is driving an ongoing effort in the Accellera SystemC Synthesis Working Group to standardize the HLS flow through a common synthesizable subset of SystemC across the industry.

Just as design signoff has moved up from gate level to RTL, we hope that signoff moves further up to the ‘C’ or SystemC level in the future.

For more details on Qualcomm’s HLS/HLV methodology, a whitepaper is available on Calypto’s website HERE.

Pawan Kumar Fangaria

Founder & President at www.fangarias.com

HDCP 2.2, Root of Trust, Industry’s First SHA-3 Security IP from Synopsys

Did you know that by 2020 90% of cars will be connected to the Internet? Great, but today there are already more than 100 car models affected by security flaws (Source: theguardian.com, 2015). Or that 320 apps are installed on the average smartphone? That would be a complete success, except that 43% of Android devices allow installation of unverified apps (Source: Slideshare.net, 2015). As for IoT, according to an HP Fortify study made in 2014, 70% of IoT devices contain serious security vulnerabilities. These statistics suggest that security is still considered a luxury option by system designers, much as simulation of an electronic design was in the 1980s, just before efficient and user-friendly simulators came to be used by most design teams and EDA became a real market. The deployment of connected cars and devices is exploding, but we are still in the early days of systematic security IP usage.

The demand for an ever more connected world is driving threats upward, and the scenarios are many. You may suffer from theft and replacement of credentials, rogue devices connected to the internet, or attacks on other devices connected to a network, whether at home or in your professional environment. In industry, companies may be weakened by software and IP theft or tampering, cloning, or snooping of sensitive data. In short, individuals and companies need to be protected when using connected systems, so these systems need to integrate the most efficient security IP to protect users.

Elliptic has been completely focused on security since the company’s founding, and the acquisition by Synopsys has allowed it to further extend its security IP portfolio.

Cryptography IP

Supporting the latest standards like AES, SHA-2, SHA-3, PKA or TRNG, these cryptographic algorithms can be implemented in HW or SW and are highly configurable for optimal size or performance. For example, pipelined AES core variants scale to 100+ Gbps performance. Integrating security IP enables the development of customized IP subsystems or building blocks for security protocol accelerators.
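For readers who have not yet used SHA-3, the software side of the "HW or SW" choice can be tried with Python’s standard `hashlib` module, which provides both SHA-2 and SHA-3 digests. This is a plain software illustration with no connection to the Synopsys IP; the `digest_hex` helper and the sample message are hypothetical.

```python
import hashlib

def digest_hex(algorithm: str, message: bytes) -> str:
    """Hash a message with the named algorithm and return the hex digest."""
    return hashlib.new(algorithm, message).hexdigest()

# Hypothetical payload standing in for, say, a firmware image.
message = b"firmware image bytes"

for algo in ("sha256", "sha3_256"):
    print(f"{algo:9s} {digest_hex(algo, message)}")
```

Both functions produce a 256-bit digest, but SHA-3 is built on a different construction (the Keccak sponge) than SHA-2, so the two digests of the same message are unrelated.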

Platform Security: tRoot

Synopsys tRoot is a secure hardware Root of Trust, an embedded security module providing chipsets with a unique identity that can’t be tampered with. tRoot is made of hardware plus firmware and provides a safe environment to create, store and manage secrets critical for the system, like keys, certificates and other data. You can see a typical tRoot implementation in an application processor in the above picture.

Security Subsystems: Content Protection

Elliptic provides HDCP 2.2 content protection IP for Miracast, HDMI and DisplayPort. This IP is certified for both transmit and receive, contains its own Root of Trust, supports firmware upgrade and can be easily configured for different high resolution uncompressed content.

The HDCP 2.2 IP is used in Wi-Fi certified Miracast solutions in production devices, protecting the link between Miracast devices. This IP solution is integrated with major Miracast/WiFi Display stacks.

We think that the security IP segment is just starting to grow; we may expect security IP to penetrate most connected applications. As an end user, I really hope security IP becomes ubiquitous!

More about Synopsys security IP here

From Eric Esteve from IPNEST

How Wireless Modem IP is Aiding Roadmap to 5G Chipsets

The semiconductor industry is steadily charting its course toward 5G chipsets with the availability of extremely complex system-on-chip (SoC) designs that support the surge of data traffic over next-generation wireless networks. Take Blu Wireless Technology, for instance, the IP supplier from Britain that is using the Arteris FlexNoC interconnect in its Hydra WiGig and backhaul subsystems.

Blu Wireless, which designs and licenses wireless modem IP for multi-gigabit communications standards like WiGig, is among the early entrants in the commercial 60 GHz chipset market. The chipsets for 60 GHz millimeter wave radio with up to 7 Gbit/s speeds have passed the certification tests and are ready for production and launch in 2016.

Blu Wireless develops PHY/MAC solutions for high-speed wireless networks

The tri-band WiGig chipsets, comprising the transceiver, power amplifier and antenna, can handle links at 2.4 GHz, 5.8 GHz and a number of unlicensed 60 GHz bands.

For a start, the WiGig-ready chipsets aim to cut the TV cord by facilitating in-home video streaming and other high-speed multimedia applications in the entertainment domain. But the far bigger prize for these chipsets is the nascent 5G market, where Blu Wireless is eyeing its IP for both backhaul links to small cells and high-bandwidth links to 5G terminals.

Anatomy of a 5G Baseband Chipset

The Bristol, England–based Blu Wireless is a supplier of IP for gigabit-rate wireless modems operating in the millimeter-wave frequency bands. The company’s 60 GHz modem is based on its Hydra PHY/MAC technology that boasts a phased-array antenna.

It’s a baseband DSP solution that uses a hybrid hardware and software architecture while employing heterogeneous multiprocessing technology in order to reduce interference. Here, it mixes fixed-function DSP blocks with highly-optimized parallel vector DSPs, which in turn, creates a very complex DSP pipeline.

That’s essential because multiple DSPs in the digital controller have to handle a lot of arbitration and quality-of-service (QoS) tradeoffs (for example, latency vs. bandwidth) while limiting the size of the die and implementing SoC-wide power management. As a result, Blu Wireless needed an SoC interconnect solution that would allow it to implement end-to-end QoS and guarantee the on-chip latency and bandwidth requirements.

Blu Wireless has licensed the Arteris FlexNoC interconnect IP for its Hydra WiGig and 4G backhaul subsystems. The Arteris FlexNoC fabric primarily caters to the digital side, where it serves as the main interconnect in the baseband, allowing Blu Wireless to make latency and bandwidth optimizations per link.

The FlexNoC interconnect architecture encompasses serialization, buffering, arbitration, etc.

The digital controller is responsible for high-bandwidth digital signal processing. Arteris is also offering its FlexNoC interconnect solution on the analog side, but it’s a smaller NoC part.

The Arteris FlexNoC interconnect IP allows easy simulation and exploration to decide link widths, the number of wires and QoS settings. Here, FlexExplorer, a component of the FlexNoC interconnect fabric, allows simulation of candidate network-on-chip (NoC) architectures using SystemC models.
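Before any simulation, a first guess at a link width can come from simple arithmetic: divide the required bandwidth by the clock rate times the fraction of cycles that actually carry data. The sketch below shows that arithmetic only; it is not how FlexExplorer works, and the function names, the 500 MHz clock and the 80% utilization figure are assumptions made for illustration.

```python
import math

def min_link_width_bits(bandwidth_bps: float, clock_hz: float,
                        utilization: float = 0.8) -> int:
    """Smallest link width that sustains the bandwidth at the given clock.

    utilization is the assumed fraction of cycles carrying payload data.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return math.ceil(bandwidth_bps / (clock_hz * utilization))

def round_up_pow2(n: int) -> int:
    """Round a positive integer up to the next power of two."""
    return 1 << (n - 1).bit_length()

# 7 Gbit/s peak rate from the article; clock and utilization are assumed.
raw = min_link_width_bits(7e9, 500e6, utilization=0.8)
width = round_up_pow2(raw)
print(f"minimum width {raw} bits, rounded up to {width} bits")
```

A static estimate like this ignores arbitration, buffering and burstiness, which is exactly what simulating candidate NoC architectures is meant to capture.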

Specifically, for WiGig, the challenge for the digital controller is to keep the RF system “fed” to achieve the best possible data rates. The rates to and from the PHYs that are connected to the controller exceed 2 Gbit/s. Blu Wireless sells both digital and analog IP for WiGig to comms chipmakers and calls it the MAC subsystem.

Heading Toward 5G

It’s worth remembering that millimeter wave is most likely going to play a crucial role in breaking the bandwidth barriers for the realization of 5G networks. Industry giants like Samsung are heavily focusing their 5G development work on millimeter wave communication, given its potential as an ultra-dense network for small cells.

Moreover, 5G isn’t merely about mobile Internet services offered over wide-area cellular networks. In fact, the notion of 5G encompasses multiple connectivity solutions to provide communications services for a myriad of new devices, ranging from smartwatches to drones to connected cars.

Blu Wireless founder and CEO Henry Nurser demonstrating a 60 GHz module

Blu Wireless is part of the XHaul Project—one of the initiatives from the European Commission’s 5G Infrastructure Public Private Partnership (5G-PPP)—that is focusing on backhaul technologies while using both millimeter-wave radio and fiber-optic links.

Blu Wireless is contributing millimeter wave modems in the trial network that is targeting sub-1ms latency and speeds of up to 10 Gbit/s. The XHaul Project is being conducted in Blu Wireless’ hometown Bristol; other participants include Huawei, Telefonica I+D, TES Electronic Solutions and the University of Bristol.


Future Shock – IoT benefits beyond traffic and lighting energy optimization

The year is 2029, approximately 35 years after the Internet went mainstream and today the world, its people and things are connected in ways that the Internet’s pioneers could never have imagined. The Internet of Things (IoT) or as Cisco put it, The Internet Of Everything (IoE) is now a reality, enhancing our lives in myriad ways.


China’s chip plans an economic attack on Taiwan?

An economic “bear hug” cold war invasion? If China can’t get Taiwan by politics or force maybe it can get them by a backdoor economic takeover starting at their most crucial spot – Chips. An ulterior motive for China’s semiconductor aspirations, the $100B silicon Trojan Horse?

It’s quite clear that semiconductors and electronics are the rocket fuel that has been powering Taiwan’s economy for as long as anyone can remember. I don’t know if PC motherboards are made anywhere else on the planet, and clearly TSMC is an economic powerhouse which spawns many other supporting industries built around and on the semiconductor devices that come rolling out of its fabs.

The tiny little runaway island off the coast of China is economically huge and in many ways in a much better position in the critical technology area than its large and jealous neighbor.

Becoming a “player” in the semiconductor industry…

It’s also clear that China needs to be even more in the technology space, and a key ingredient is semiconductor device technology, which they are still lacking. Yes, they have SMIC and fabs from outside companies being built, but they are not up to par with the US, Korea or Taiwan. This limits them to always being dependent on others.

So, spending $100B to get in the chip business makes all the sense in the world for them and I am frankly surprised they didn’t start earlier than this year (in earnest). It will help them both economically and militarily.

Infiltrating Taiwan’s economic core?

If China can intertwine its economic investments with companies like TSMC, ASE, UMC and others, it will greatly accelerate what is already the “fait accompli” of Taiwan being merged back into greater China. What might otherwise take another generation could likely be accomplished in a much shorter period without so much as a rattled saber or shot fired. Essentially taken over from the inside out, not the outside in…

Other targets outside Taiwan could also help

If China buys Global Foundries at some fire sale price as Abu Dhabi exits stage left, it could get its hands on a lot of IBM’s semiconductor secret sauce, which it would not have been able to directly purchase a year ago. But who’s to argue now that it’s a foreign entity selling to another foreign entity? China could also pick up AMD at fire sale pricing and get immediate access to server/cloud capable CPUs as well as PC compatible parts, which are already produced by Global Foundries.

The only thing missing is memory… maybe the Japanese will get out of the last remaining island of semiconductor technology and sell off Toshiba’s memory operations… hey, you never know. In short, with a $100B wallet it isn’t that hard to buy your way into the semiconductor business.

Could put heat on Korea as well…

Although Taiwan is a clear target and perennial thorn in the side of China, Korea is not that far from China and the counterpoint to China’s North Korean Puppet state. Right now a big slug of both Taiwan and Korea’s technology economic output is exported to China. If that goes away because China can produce it all by itself it would put a torpedo in the sides of both economies that would significantly weaken them.

Expect China to accelerate the Semiconductor M&A in 2016

With all these benefits and a large war chest there is no reason to think that China will slow down in 2016. We would expect that China may be the gasoline thrown on the fire of an existing M&A semiconductor bonfire.

This could certainly help the depressed overall valuations in the semiconductor industry that have been left in the dust by apps, IoT, the cloud and everything software driven…

Apple buys a fab????

We could not help but notice that Apple has bought a former Maxim semiconductor fab in San Jose, not too far from its headquarters and right next door to a new Samsung building (interesting poetic justice…).

Although this is far from a state of the art fab running 14nm devices, it does come fully furnished with 197 semiconductor manufacturing tools from the standard suspects. It was recently shut down in July, so it’s not a graveyard.

Not building the A10 any time soon…..

It is a small, low volume/prototype capable operation that is likely suited for the other ancillary chips that go into Apple devices (all that semiconductor glue that holds everything together). At $18M it is not even a microsecond’s rounding error in Apple’s tsunami of money, but the fact that they are doing it is interesting.

It was also recently revealed that Apple has a secret R&D facility in Taiwan that appears to be aimed at display technology.

Both of these moves make Apple look a lot more “Samsung like” in their ability to develop chip and display technologies.

Going Vertical?

Samsung has been a vertical manufacturer for a long time now and it has obviously served them well. These two moves seem to imply that Apple is perhaps taking a more active/controlling role in the design and manufacture of all parts that go into its devices. It sure sounds like a change in strategy; perhaps it’s a way to try to stay ahead and differentiate Apple products, as having outsiders develop technology doesn’t keep it out of the hands of competitors.

If these are the first two shots in this new direction, we can imagine that Apple could easily buy its way into a lot of other key technology areas. After all, it’s not doing a lot else with its cash hoard, and this seems like a great strategic use.

Maybe Apple will put the silicon back in silicon valley!!!

How to Overcome HW Project Release Nightmares

Is a software development release methodology a “square peg in a round hole” when it comes to hardware design? To answer this question we have to look at how exactly hardware design projects differ from their software counterparts. Intuitively we know they are fundamentally different. Let’s take a second to dig deeper to understand why development methodologies for software do not map well into the hardware space, and then discuss solutions that do work.

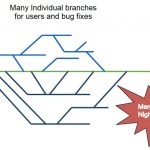

HDL’s are a lot like the source code found in software projects, but downstream in the flow we encounter many binary files. These include custom layout files, place and route data bases, and models for simulation, etc. The presence of binary files breaks the ability to use diff and to merge two separate but parallel versions of a file. Really only one person at a time can work on a layout cell, for instance.
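The difference between text and binary design data is easy to demonstrate with Python’s standard `difflib`. The sketch below diffs two versions of a small Verilog fragment line by line, and declines to diff data that looks binary. The `looks_binary` check (a NUL byte near the start of the file) is a common heuristic rather than any particular tool’s documented behavior, and the file contents and names are hypothetical.

```python
import difflib
from typing import List, Optional

def looks_binary(data: bytes, probe: int = 8000) -> bool:
    """Heuristic: treat data as binary if a NUL byte appears near the start."""
    return b"\x00" in data[:probe]

def text_diff(old: bytes, new: bytes, name: str) -> Optional[List[str]]:
    """Return a unified diff for text files, or None when a diff is meaningless."""
    if looks_binary(old) or looks_binary(new):
        return None
    return list(difflib.unified_diff(
        old.decode().splitlines(), new.decode().splitlines(),
        fromfile=f"{name}@old", tofile=f"{name}@new", lineterm=""))

# Hypothetical HDL source: a one-line edit shows up as a one-line diff.
hdl_old = b"module gate(input a, b, output y);\n  assign y = a & b;\nendmodule\n"
hdl_new = b"module gate(input a, b, output y);\n  assign y = a | b;\nendmodule\n"

# Hypothetical layout database: opaque bytes with no line structure.
layout_old = b"\x00\x06\x00\x02\x02\x58" + bytes(32)
layout_new = b"\x00\x06\x00\x02\x02\x59" + bytes(32)

print("\n".join(text_diff(hdl_old, hdl_new, "gate.v")))
print(text_diff(layout_old, layout_new, "gate.layout"))
```

For the HDL file, a merge tool can reason about individual changed lines. For the layout data there is nothing to reconcile except "take mine" or "take theirs", which is why parallel edits to such files are effectively serialized.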

Hardware projects involve large teams that are often using or modifying the same data. It’s common for sub-teams to have tens of members and for the entire project to include hundreds of members. These teams are arrayed over partitions of the design and they also cross functional boundaries, such as front end, simulation, verification, physical, physical verification, test, DFM, silicon engineering, etc.

When it comes to the number of files that need to be managed and the amount of data, we see tremendous numbers. A single workspace may consist of tens of thousands of files, or even more. Not just the number of files, but the total amount of data is enormous. It can head up into the tens or hundreds of gigabytes.

Lastly, the flow steps themselves can take days, or weeks. Place and route steps, verifying physical design rules, extraction for LVS or simulation, and many other steps easily can take days or longer.

All of these characteristics make it hard to overlay the popular branch/merge release flow onto physical design projects. In this approach each designer or developer creates a branch of the main trunk and can work on any or all of the files. It is isolated from the work of others, and down the road it will be merged back into the trunk. For software projects, files can be diff’ed and separate versions can be merged, based on the specific edits.

Because each team member has their own copy, there are potentially many branches. In a hardware design team of 20 or 50 people, you can see that this can create a lot of branches, and eventually it will be some unlucky team member’s job to try to recombine them into something like a workable release. The lengthy work cycles mentioned above add to this by causing the branches to live long lives before any attempt to merge them back into the main trunk. This means that the baseline will have moved along significantly, which increases the amount of data that needs to be reconciled.

Methodics has posted an interesting summary of best practices for data management used in hardware design projects. Their EDA-focused data management solution is agnostic when it comes to the underlying revision control system that is used. This makes it easier for large enterprises to combine systems and achieve better integration. As a result, a lot of people use Perforce-based data management systems for their design data. The summary that Methodics provides talks about the alternative to the branch/merge release process as used in Perforce. This recommended solution is referred to as Release on Trunk.

The Methodics white paper goes into more detail, but the main points are that each user creates a workspace based on a known good release. When they make changes they return them to the main trunk. They also keep current on releases that are updating the main trunk. Finally, when it is time for them to create a release based on their updates, they ensure they have the latest release updates in their workspace and run full regressions there before making the release.
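The steps just described can be sketched as a toy Python model. This is only an illustration of the flow as summarized here, not the Methodics or Perforce implementation: the `Trunk` and `Workspace` classes, the `make_release` function, and the file-name-to-revision dictionaries are all hypothetical. As a simplification, changes land on the trunk at release time, and a local edit simply wins over the trunk version of the same file.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Files = Dict[str, int]  # file name -> revision number (hypothetical model)


@dataclass
class Trunk:
    """Toy model of a main trunk holding a history of known-good releases."""
    releases: List[Files] = field(default_factory=lambda: [{}])

    def latest(self) -> Files:
        return dict(self.releases[-1])


@dataclass
class Workspace:
    """A user's workspace, created from a known-good release."""
    files: Files
    base: int  # index of the release this workspace was last synced to
    local_changes: Files = field(default_factory=dict)

    def edit(self, name: str, revision: int) -> None:
        self.local_changes[name] = revision
        self.files[name] = revision

    def sync(self, trunk: Trunk) -> None:
        """Pull the latest release, keeping local edits on top."""
        self.files = {**trunk.latest(), **self.local_changes}
        self.base = len(trunk.releases) - 1


def make_release(ws: Workspace, trunk: Trunk,
                 regression: Callable[[Files], bool]) -> bool:
    """Sync to the latest release, run regressions there, then release."""
    ws.sync(trunk)
    if not regression(ws.files):
        return False  # nothing reaches the trunk if regressions fail
    trunk.releases.append(dict(ws.files))
    ws.base = len(trunk.releases) - 1
    ws.local_changes.clear()
    return True
```

The point of the sketch is the ordering inside `make_release`: the regression always runs on the latest release plus the user's changes, so every new release is built on a known-good baseline rather than on a long-lived branch.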

Methodics also briefly covers how to deal with the massive workspaces that are generated in hardware design flows. They point back to underlying capabilities in Perforce Helix and show several examples of ways they can be used to minimize the storage requirements.

The entire white paper is on their website and is interesting reading. It’s good to know that enterprise data management solutions can be applied to EDA design flows. But at the same time it is critical to understand where and how differences arise and how to adapt to them.

How to Solve the Business Gap in SEMI Industry?

This white paper about Cadence’s innovative mixed-signal IP concept, “Cadence Multi-Link PHY IP (SerDes, Analog Front-end, and DDR) to Design SoC Platform breaking the ‘Business Gap’ on 14/16FF”, describes the problem, the emergence of a “business gap” linked with incredibly high development cost when targeting the most advanced FinFET technology nodes, and the solution, integrating a Multi-Link PHY IP.

Chip makers targeting lucrative market segments like FPGA, Mobile, Networking or Data Center are forced to jump to the most advanced technology node for several reasons. They all have to be the first to bring the highest possible processor performance or bandwidth (servers, networking), or the first to launch an Application Processor (smartphone) offering the maximum possible features, higher performance, and power consumption at par with or lower than the previous generation. The SoC development schedule is constrained by market expectations, pushing for the shortest possible Time-To-Market (TTM). Designing a SoC on 14/16nm FF and below also means finding a solution to a critical business problem linked with the increasingly high development cost.

The IP reuse concept was born in the 1990s to help optimize chip design. The commercial IP market took off in the 2000s to solve the so-called “design gap”, as designer productivity didn’t grow fast enough to allow building a System-on-Chip even though CMOS technology scaling made it possible. In this white paper, the author introduces the “business gap” concept: amortizing the incredibly high development cost of a SoC targeting a single application is becoming extremely difficult if not impossible, except for very few applications like smartphones.

Taking the 14/16nm FF node as an example, the average number of IP blocks in a SoC is about 120 (source: IBS report, 2014). In the same SoC, interfaces represent about 50 IP blocks. The Cadence Multi-Link PHY IP concept applies to high-speed SerDes-based interfaces.

Let’s make it clear: Multi-Link is much more than Multi-protocol, a 10-year-old, very clever concept that is reaching its limit because of the business gap. The figure below is very helpful to understand that the Multi-link concept provides the flexibility of Multi-protocol plus reuse capability (here is the innovation): a single SoC can be designed to address multiple markets or applications.

Let’s take a real customer example. Designing a single but configurable SoC, this company has integrated a 16-lane Multi-Link PHY IP. To target Networking applications, the PHY is configured as 16 lanes of PCIe; to target Storage, as 8 lanes of PCIe and 8 lanes of SATA; and for Computing, as 12 lanes of PCIe and 4 lanes of 10G-KR. Amortizing the SoC development cost will be much easier as the ROI now comes from three different markets, instead of a single application as was the case before using the Multi-link concept.

The benefits of using this Multi-link PHY IP concept are multiple, as the various cost- and resource-intensive activities like IP integration, characterization setup and board design, qualification or PDK update are done only once, leading to faster SoC design. Shorter TTM can be a priceless advantage for a SoC addressing highly competitive markets. Such a Multi-protocol/Multi-link PHY IP offers Cost of Ownership (CoO) and TTM benefits all along the supply chain, from fabless companies to foundries and the EDA ecosystem. Cadence has extended this Multi-link concept to Analog Front End (AFE) IP for wireless communication, as well as to DDRn (LPDDRn) Memory Controller PHY IP.

Reading this white paper, you will find much more detailed explanations that can’t fit in a blog like this, and I really encourage you to read it (I must admit that I’m the author…). You can access the white paper here.

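The customer example above, one 16-lane PHY configured three different ways, can be written down as a small Python sketch. The lane counts come from the text; the dictionary layout, the `validate` function, and the assumption that every configuration must use all 16 lanes are illustrative only and are not taken from the Cadence IP.

```python
from typing import Dict

TOTAL_LANES = 16  # from the customer example in the text

# Lane allocations per target market, as described in the text.
# The data layout and key names are illustrative assumptions.
CONFIGS: Dict[str, Dict[str, int]] = {
    "networking": {"PCIe": 16},
    "storage": {"PCIe": 8, "SATA": 8},
    "computing": {"PCIe": 12, "10G-KR": 4},
}


def validate(config: Dict[str, int], total_lanes: int = TOTAL_LANES) -> None:
    """Raise ValueError unless the allocation uses exactly total_lanes.

    Assumption: every lane of the PHY is assigned to some protocol.
    """
    if any(count <= 0 for count in config.values()):
        raise ValueError("lane counts must be positive")
    used = sum(config.values())
    if used != total_lanes:
        raise ValueError(f"{used} lanes allocated, expected {total_lanes}")


# Check that each market configuration fits the same 16-lane PHY.
for market, config in CONFIGS.items():
    validate(config)
```

All three configurations pass the check, which is the reuse argument in miniature: the same silicon serves three markets, and only the lane-to-protocol mapping changes.
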
Moore’s law evolution is generating a “business gap”: designing a SoC on advanced technology nodes requires such high investment that the return may not be guaranteed if you target only one application. A conservative approach would be to keep designing on affordable nodes, 28nm or above, but that would mean giving up the extra performance and lower power known to be the keys to success. Moving from chip architecture, with one SoC targeting one application, to system architecture, creating a SoC platform that can target several applications with the same SoC, may break this “business gap”. This new approach is made possible by the flexibility offered by Multi-link PHY IP (SerDes or AFE).

By Eric Esteve from IPNEST