As RTL design grew in the late 1980s and early 1990s, it became apparent that some form of management was needed to keep track of all the design files and their associated versions. Because of the parallels to software development, design teams looked to the tools and methodologies that software teams were using at the time.

Software teams had adopted Software Configuration Management solutions to handle the organization and versioning of their source code. RCS and CVS were two of the most popular revision control systems in use at the time, and semiconductor development teams began to adopt these for their development environment, eventually building methodologies around the use of these solutions.

It quickly became apparent that the differences between hardware and software design called for more customized solutions for semiconductor development teams. Binary databases for analog design needed to be supported, integration into the EDA environment was needed, and support for the scripting and configuration files of EDA tool flows had to be developed.

In 1993, the consulting group at VIEWLogic began work on providing the first such environment for hardware teams. Building on top of RCS, they released ViewData, a plugin for the PowerView framework. This solution began to address the needs of managing configurations of files where RTL, schematics, and layout all made up the final design configuration.

In 1995, Dennis Harmon, Mitch Mastelone, Norm Sozio, and Eugene Connolly left VIEWLogic to form Synchronicity with the goal of providing the first true semiconductor design data management system, one that would manage design data across different development platforms and EDA tool environments. In 1996, they released DesignSync, which was built on top of a custom data management system that could handle RTL and other ASCII data, along with connectors that interfaced with the EDA tools of the time. This solution became popular with analog designers, who now had a way to handle the binary data and custom frameworks associated with analog design.

Two years later, Srinath Anantharaman founded ClioSoft to continue to fill in the gaps that were not met by software SCM tools. ClioSoft launched the SOS design collaboration platform to target the challenges of hardware design. Like DesignSync, ClioSoft built SOS on top of a customized data management system, and developed technology to augment the traditional SCM approach to create a hardware configuration management (HCM) system while partnering with EDA companies to provide specific connectors into the EDA tools and methodologies.

In the ensuing years, commercially available data management (DM) platforms were on the rise. IBM Rational’s ClearCase and Perforce’s Helix were being adopted by development teams in many different industries. A new generation of open source solutions was also being developed, such as Subversion and, later, Git. This opened the door to a second generation of hardware design management products built on top of these widely available DM systems instead of on proprietary data management systems.

In 2003, Shiv Sikand and Dean Drako founded IC Manage. Building on top of Perforce’s Helix data management solution, they released their Global Design Platform (GDP). Because GDP ran on top of a commercially available DM system, design teams were able to use a common DM system for software and hardware design, while the GDP client could be customized for the needs of hardware designers.

Four years later, Simon Butler and Fergus Slorach founded Methodics. Methodics also chose to run on top of commercially available systems, but instead of limiting the solution to a single platform, they let users run their choice of platform, with Perforce and Subversion being the two most popular at the time. This allowed customers to mix and match backend DM systems to fit their needs while using a common client for hardware design, VersIC, on top of the different systems.

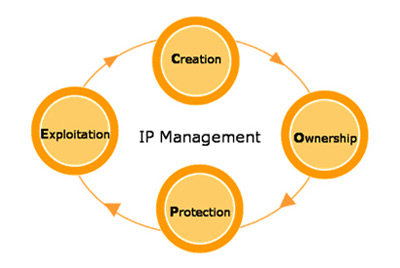

As design reuse began to gain traction in the early 2000s and the use of third-party IP began to grow, semiconductor designers faced the challenge of managing designs for reuse and managing the acquisition of third-party IP. Design teams needed to know where to find internal IP for reuse and be able to track which versions were being used, which projects were using them, and which products had taped out with which versions of IP. Third-party IP complicated the problem, as each IP acquired often had a different contract stipulating how the IP provider was to be paid for the IP’s use. Users of this IP often had to keep track of varying business terms: who had looked at the IP, whether it was used once or many times in a design, how many different designs it was used in, or how many parts were ultimately shipped after tape-out.

Semiconductor design teams looked to the design management companies to provide solutions in this area. Synchronicity was first to market in the IP management space with IP Gear, Methodics released ProjectIC, IC Manage developed IP Central, and ClioSoft released DesignHub. Later, in 2004, Synchronicity would be acquired by MatrixOne, developer of one of the first PLM systems, to bring semiconductor design management closer to systems development. MatrixOne would then be acquired by Dassault Systèmes in 2006. While DesignSync lives on as part of the ENOVIA PLM group inside Dassault, IP management has been integrated into the ENOVIA PLM platform itself. Methodics has released Percipient as a follow-on to ProjectIC, incorporating an IP Lifecycle Management (IPLM) methodology into the solution and providing integration with other engineering systems such as requirements management and issue and defect tracking systems.

Today, SoCs continue to take advantage of reuse, with the number of IP cores in an SoC exceeding 100. The challenges facing the management of IP are still increasing. Functional safety requirements, such as ISO 26262 for automotive and DO-254 for aerospace, push semiconductor companies to provide evidence of a traceable path from requirements through design to verification, and to document all work that has been done to meet those requirements. The need for these traceable flows requires that IP management systems have links into requirements, verification, and document management systems. Increasing use of third-party IP is making designers look for robust IP portals with abundant IP metadata available so that they can accurately compare IP from different vendors. With the industry’s dependence on IP, IP management systems will remain core to the effective collaboration of design teams for years to come.
