Every SOC that is designed must be tested, and the premier conference for test is ITC, held last month in Anaheim, California.

I spoke with Robert Ruiz of Synopsys by phone on September 21st to get an update on what is new with EDA for test engineers this year. Robert and I first met back at Viewlogic when Sunrise was acquired in the 1990s.

The Big Picture

Over the years Synopsys has built and acquired a full lineup of EDA tools for test and they call it synthesis-based test:

Scan test is a well-known technique, and designers can choose either full-scan or partial-scan using the TetraMAX tool (technology initially acquired from Sunrise).

The STAR Memory System is technology acquired from Virage and it is well received in the industry with some 1 billion chips containing this IP to date.

Test engineers have two choices with EDA tools: buy everything from one vendor, or buy point tools from multiple vendors and stitch them together:

Questions and Answers

Q: With smaller process nodes there is more chance for Single Event Upset (SEU) in memories. How do you design for that?

A: SEU is mitigated by using Error-Correcting Codes (ECC) at the design stage.
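As a rough illustration of how an error-correcting code recovers from a single flipped bit, here is a minimal sketch of the textbook Hamming(7,4) code. This is only an assumption about the general idea; production memories typically use wider codes, and nothing here reflects how Synopsys or Virage implement ECC. The function names are made up for this example.

```python
def hamming74_encode(data_bits):
    """Encode 4 data bits into a 7-bit codeword (positions 1..7)."""
    d1, d2, d3, d4 = data_bits
    p1 = d1 ^ d2 ^ d4  # parity over positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # parity over positions 2, 3, 6, 7
    p4 = d2 ^ d3 ^ d4  # parity over positions 4, 5, 6, 7
    return [p1, p2, d1, p4, d2, d3, d4]


def hamming74_decode(codeword):
    """Correct up to one flipped bit; return (data_bits, error_position)."""
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s4 = c[3] ^ c[4] ^ c[5] ^ c[6]
    # The syndrome is the 1-based position of the flipped bit (0 = no error).
    syndrome = s1 + 2 * s2 + 4 * s4
    if syndrome:
        c[syndrome - 1] ^= 1
    return [c[2], c[4], c[5], c[6]], syndrome


# Simulate a single event upset on one stored bit and recover the data.
stored = hamming74_encode([1, 0, 1, 1])
stored[4] ^= 1  # upset flips the bit at position 5
recovered, position = hamming74_decode(stored)
```

The decoder recomputes the three parity checks; together they form a syndrome that points at the upset bit, which is then flipped back.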

Q: BIST is a popular test approach, but how do you know that your test electronics is OK?

A: The test electronics is treated like other parts of the logic design, and we add it to the scan chain too.

Q: Where would I use your Yield Analysis tool?

A: Within a foundry, for example, the initial yield on a new node may be low, so this approach helps them find out where on each design the yield is being limited.

Q: Who else would use Yield Analysis?

A: We see users at IDMs, foundries and fabless companies wanting to improve yield with this tool.

Q: How does static timing analysis work with ATPG?

A: In our test flow, Static Timing Analysis (PrimeTime) can guide ATPG (TetraMAX) toward critical paths and area defects. Slack information is sent to TetraMAX so that the generated patterns will uncover any timing defects.
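To make the idea of slack-guided test concrete, here is a minimal sketch that picks the paths with the least timing slack as the first targets for delay testing. It is an assumption about the general concept only; it is not the PrimeTime or TetraMAX interface, and the `PathSlack` type, field names and threshold are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PathSlack:
    endpoint: str    # hypothetical name of the capturing flop
    slack_ns: float  # timing slack reported by static timing analysis


def select_delay_test_targets(paths, slack_threshold_ns):
    """Return paths at or below the slack threshold, tightest first."""
    tight = [p for p in paths if p.slack_ns <= slack_threshold_ns]
    return sorted(tight, key=lambda p: p.slack_ns)


paths = [
    PathSlack("u_core/reg_a", 0.80),
    PathSlack("u_core/reg_b", 0.05),
    PathSlack("u_mem/reg_c", 0.20),
]
targets = select_delay_test_targets(paths, slack_threshold_ns=0.25)
```

Paths with little slack are the ones where a small extra delay from a defect would cause a failure, so they are the natural candidates to exercise first.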

Q: Do temperature gradients cause speed faults?

A: Yes, and you could uncover any speed fault with our tools.

Q: What kind of Market share does Synopsys have in the EDA test tools?

A: Mentor and Synopsys are the top two suppliers for DFT tools. The top 20 semiconductor companies are roughly split between Synopsys (SNPS) and Mentor (MENT) for DFT.

Q: Was Mentor Graphics the first to offer compression tools for test?

A: Yes, however our compression is more efficient compared to their approach.

Q: How are Synopsys test tools different from Mentor or Cadence tools?

A: The design flow using our logic synthesis is the biggest difference. For Memory and IO testing we lead in this IP (through Virage).

Q: Can scan compression create routing issues like congestion?

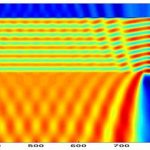

A: Yes, here’s a graphical comparison of routing congestion – white and red are high congestion in routing. Optimization for removing congestion is shown on the right-hand side. At synthesis stage any congestion will make the physical design tool run times much longer, or even fail to complete. Smart synthesis tools can help on congestion so that P&R times are quicker.

Q: What kind of industry endorsements do your DFT tools have?

A: We’re in the TSMC Reference flows.

Q: What are some pitfalls during test?

A: During test your chip may draw more power than the budget allows (red is too much power), and that can damage the device during scan testing. Users can define their power budget for testing using DFT tools (green shows the budget), although lower power can mean longer run times. This is a mature technology and it doesn’t require extra DFT up front (DFTMAX has compression).

SIG feedback: the 19th annual event was held this past Monday, with technical papers presented. Samsung presented in 2010 on reduced tester power consumption.
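One crude way to picture a test power budget is to count how often adjacent bits differ in a scan-in pattern, since each such transition causes flops to toggle as the pattern shifts through the chain. The sketch below is an assumption for illustration only; it is not how DFTMAX or TetraMAX estimate power, and the function names and budget value are hypothetical.

```python
def transition_fraction(pattern):
    """Fraction of adjacent bit pairs that differ in a scan-in pattern."""
    if len(pattern) < 2:
        return 0.0
    transitions = sum(a != b for a, b in zip(pattern, pattern[1:]))
    return transitions / (len(pattern) - 1)


def patterns_over_budget(patterns, budget):
    """Return the patterns whose transition fraction exceeds the budget."""
    return [p for p in patterns if transition_fraction(p) > budget]


scan_patterns = ["0000", "0101", "0011"]
too_hot = patterns_over_budget(scan_patterns, budget=0.5)
```

A pattern flagged this way could be regenerated with fewer transitions, which tends to cost extra patterns and therefore run time.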

Q: What’s happened after the Virage acquisition?

A: Synopsys had a relationship with Virage before the acquisition. Now the DFT tools know the scan chains and can connect memory scan to logic scan more efficiently. We can even re-use shift registers as scan elements, saving up to 10% in area depending on design style.

We can model memories with scan chains embedded, then optimize the ATPG runtimes. Use the Virage memories and Synopsys ATPG for the best test results.

Q: How do I optimize the number of scan chains?

A: Our tools let you optimize that.
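The trade-off behind the number of scan chains can be sketched with simple arithmetic: more chains means shorter chains, so fewer shift cycles per pattern, at the cost of more scan pins or compression logic. The formula below is a common first-order approximation and an assumption on my part, not a description of the Synopsys tools; the function name and numbers are hypothetical.

```python
def scan_test_cycles(num_flops, num_chains, num_patterns):
    """Approximate tester cycles: shift the longest chain, then one capture."""
    longest_chain = (num_flops + num_chains - 1) // num_chains  # ceiling division
    return num_patterns * (longest_chain + 1)


few_chains = scan_test_cycles(num_flops=10_000, num_chains=10, num_patterns=1_000)
many_chains = scan_test_cycles(num_flops=10_000, num_chains=100, num_patterns=1_000)
```

With these made-up numbers, going from 10 to 100 chains cuts the estimated cycle count by roughly a factor of ten.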

Q: What is Yield Explorer all about?

A: This tool reads silicon data that is failing scan tests.

- Accepts physical design info and knows where the defect is occurring (this via, that cell).

- Also useful for volume diagnosis.

- Defects today can be subtle, beyond just stuck-at faults, with more cross-talk induced errors or noise errors.

This can help get to a higher yield quicker. Use the defective silicon data to help diagnose and pinpoint where to find failures. Create a candidate list of where to look. Designers can become more involved in finding where the failures are located.
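The idea of volume diagnosis can be sketched as counting how often each suspect shows up across the candidate lists of many failing dies. This is a minimal illustration under my own assumptions, not the Yield Explorer algorithm; the function name and the via and cell names are hypothetical.

```python
from collections import Counter


def rank_suspects(per_die_candidates):
    """Rank suspects by the number of failing dies that list them."""
    counts = Counter()
    for candidates in per_die_candidates:
        counts.update(set(candidates))  # count each suspect once per die
    return counts.most_common()


failing_dies = [
    ["via_A", "cell_X"],
    ["via_A"],
    ["cell_Y", "via_A"],
    ["cell_X"],
]
ranking = rank_suspects(failing_dies)
```

A suspect that appears on many dies is more likely a systematic yield limiter than a random defect, so it goes to the top of the list for physical failure analysis.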

Q: How can you find and then verify IC defects?

A: Use etching techniques to find it visually. Yield analysis will pinpoint to the cell level what is suspect.

Q: Who is using this Yield Analysis capability?

A: One customer is ST-Ericsson, who presented at SIG on Monday. They ran Yield Explorer with TetraMAX and immediately found a via causing yield loss. They then made one design change, and yield went up 47%. They used the LEF/DEF feature to narrow the area in which to look for loss, and needed fewer scripts to glue tools together.

Q: Yervant Zorian, tell me about Virage within Synopsys now.

A: We started 10 years ago to offer memory test automation, and are now in our 4th generation of memory test with some 1 billion chips using the Virage approach. The percentage of SOCs using RAM can be 60% now, so memory yield can limit the chip yield. Power is an issue during the testing of RAMs so you have to be intelligent about how to test these without exceeding limits.

In our IP with BIST the DFT tools used by the designer can analyze the results all the way down to the physical level.

The diagnosis of chips through JTAG ports allows the debug of all memories on the SOC in a low-cost fashion.

One challenge is how to diagnose SRAMs early in a new node that is just being defined. With Yield Analysis you can now use an automated approach to help improve yield for this.

All of our memory IP requires extensive characterization before it gets placed in a new SOC.

Summary

Synopsys offers a full suite of DFT tools and testable IP used by both design engineers and test engineers. The dominance of Design Compiler for logic synthesis is what makes the Synopsys tools different from the other vendor offerings.